mirror of

https://github.com/Security-Onion-Solutions/securityonion.git

synced 2026-03-20 11:45:35 +01:00

Compare commits

158 Commits

reyesj2-44...delta

| Author | SHA1 | Date |
|---|---|---|
| | 6e3986b0b0 | |
| | 2585bdd23f | |
| | ca588d2e78 | |
| | f756ecb396 | |
| | 82107f00a1 | |
| | 5c53244b54 | |
| | 3b269e8b82 | |
| | 7ece93d7e0 | |
| | 14d254e81b | |
| | 7af6efda1e | |
| | ce972238fe | |
| | 442bd1499d | |
| | 30ea309dff | |
| | bfeefeea2f | |
| | 8251d56a96 | |
| | 1b1e602716 | |
| | 034b1d045b | |
| | 20bf88b338 | |
| | d3f819017b | |
| | c92aedfff3 | |
| | 7aded184b3 | |
| | d3938b61d2 | |
| | c2c5aea244 | |
| | 83b7fecbbc | |
| | d227cf71c8 | |
| | 020b9db610 | |
| | cceaebe350 | |
| | a982056363 | |
| | db81834e06 | |
| | 318e4ec54b | |
| | 20bf05e9f3 | |
| | 4254769e68 | |
| | c16ff2bd99 | |
| | 0c88b32fc2 | |
| | 0814f34f0e | |
| | b6366e52ba | |
| | 825f377d2d | |
| | 74ad2990a7 | |
| | 738ce62d35 | |
| | 057ec6f0f1 | |
| | 20c4da50b1 | |
| | 5fb396fc09 | |
| | a0b1e31717 | |
| | cacae12ba3 | |
| | 83bd8a025c | |
| | 2a271b950b | |
| | e19e83bebb | |
| | 066918e27d | |
| | 930985b770 | |
| | 346dc446de | |
| | 341471d38e | |
| | 2349750e13 | |
| | 00986dc2fd | |
| | d60bef1371 | |
| | 5806a85214 | |
| | 2d97dfc8a1 | |
| | d6263812a6 | |
| | ef7d1771ab | |
| | 4dc377c99f | |
| | a52e5d0474 | |
| | 1a943aefc5 | |
| | 4bb61d999d | |
| | e0e0e3e97b | |
| | 6b039b3f94 | |
| | d2d2f0cb5f | |
| | e6ee7dac7c | |
| | 7bf63b822d | |
| | 1a7d72c630 | |
| | 4224713cc6 | |
| | b452e70419 | |
| | 6809497730 | |
| | 70597a77ab | |
| | f5faf86cb3 | |
| | be4e253620 | |
| | ebc1152376 | |
| | 625bfb3ba7 | |
| | c11b83c712 | |
| | a3b471c1d1 | |
| | eaf3f10adc | |
| | 84f4e460f6 | |
| | 88841c9814 | |
| | 64bb0dfb5b | |
| | ddb26a9f42 | |
| | 744d8fdd5e | |
| | 6feb06e623 | |
| | afc14ec29d | |
| | 59134c65d0 | |
| | 614537998a | |
| | d2cee468a0 | |
| | 94f454c311 | |
| | 17881c9a36 | |
| | 5b2def6fdd | |
| | 9b6d29212d | |
| | c1bff03b1c | |
| | b00f113658 | |
| | 7dcd923ebf | |
| | 1fcd8a7c1a | |
| | 4a89f7f26b | |
| | a9196348ab | |
| | 12dec366e0 | |
| | 1713f6af76 | |
| | 7f4adb70bd | |
| | e2483e4be0 | |
| | 322c0b8d56 | |
| | 81c1d8362d | |
| | d1156ee3fd | |
| | 18f971954b | |
| | e55ac7062c | |
| | c178eada22 | |
| | 92213e302f | |
| | 72193b0249 | |
| | 066d7106b0 | |
| | 589de8e361 | |
| | 914cd8b611 | |
| | 845290595e | |
| | 544b60d111 | |
| | aa0787b0ff | |
| | 89f144df75 | |
| | cfccbe2bed | |
| | 3dd9a06d67 | |
| | 4bfe9039ed | |
| | 75cddbf444 | |
| | 89b18341c5 | |
| | 90137f7093 | |
| | 480187b1f5 | |
| | b3ed54633f | |
| | 0360d4145c | |
| | 2bec5afcdd | |
| | 4539024280 | |
| | 398bd0c1da | |
| | 91759587f5 | |
| | bc9841ea8c | |
| | 32241faf55 | |
| | 685e22bd68 | |
| | 88de779ff7 | |
| | d452694c55 | |
| | 7fba8ac2b4 | |
| | 0738208627 | |
| | a3720219d8 | |
| | 385726b87c | |
| | d78a5867b8 | |
| | ad960c2101 | |
| | 7f07c96a2f | |
| | 90bea975d0 | |
| | e8adea3022 | |
| | 71839bc87f | |
| | 6809a40257 | |
| | cea55a72c3 | |
| | e38a4a21ee | |
| | 7ac1e767ab | |
| | 2c4d833a5b | |
| | 41d3dd0aa5 | |
| | 6050ab6b21 | |
| | ae05251359 | |
| | f23158aed5 | |
| | b03b75315d | |
| | cbd98efaf4 | |
| | 1f7bf1fd88 | |

6

.github/DISCUSSION_TEMPLATE/2-4.yml

vendored


@@ -2,13 +2,11 @@ body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion 2.4.x are you asking about?

|

||||

description: Which version of Security Onion are you asking about?

|

||||

options:

|

||||

-

|

||||

- 2.4.10

|

||||

@@ -35,7 +33,7 @@ body:

|

||||

- 2.4.200

|

||||

- 2.4.201

|

||||

- 2.4.210

|

||||

- 3.0.0

|

||||

- 2.4.211

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

|

||||

177

.github/DISCUSSION_TEMPLATE/3-0.yml

vendored

Normal file


@@ -0,0 +1,177 @@

|

||||

body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion are you asking about?

|

||||

options:

|

||||

-

|

||||

- 3.0.0

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Method

|

||||

description: How did you install Security Onion?

|

||||

options:

|

||||

-

|

||||

- Security Onion ISO image

|

||||

- Cloud image (Amazon, Azure, Google)

|

||||

- Network installation on Oracle 9 (unsupported)

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Description

|

||||

description: >

|

||||

Is this discussion about installation, configuration, upgrading, or other?

|

||||

options:

|

||||

-

|

||||

- installation

|

||||

- configuration

|

||||

- upgrading

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Type

|

||||

description: >

|

||||

When you installed, did you choose Import, Eval, Standalone, Distributed, or something else?

|

||||

options:

|

||||

-

|

||||

- Import

|

||||

- Eval

|

||||

- Standalone

|

||||

- Distributed

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Location

|

||||

description: >

|

||||

Is this deployment in the cloud, on-prem with Internet access, or airgap?

|

||||

options:

|

||||

-

|

||||

- cloud

|

||||

- on-prem with Internet access

|

||||

- airgap

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Hardware Specs

|

||||

description: >

|

||||

Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://securityonion.net/docs/hardware?

|

||||

options:

|

||||

-

|

||||

- Meets minimum requirements

|

||||

- Exceeds minimum requirements

|

||||

- Does not meet minimum requirements

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: CPU

|

||||

description: How many CPU cores do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: RAM

|

||||

description: How much RAM do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /

|

||||

description: How much storage do you have for the / partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /nsm

|

||||

description: How much storage do you have for the /nsm partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Collection

|

||||

description: >

|

||||

Are you collecting network traffic from a tap or span port?

|

||||

options:

|

||||

-

|

||||

- tap

|

||||

- span port

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Speeds

|

||||

description: >

|

||||

How much network traffic are you monitoring?

|

||||

options:

|

||||

-

|

||||

- Less than 1Gbps

|

||||

- 1Gbps to 10Gbps

|

||||

- more than 10Gbps

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Status

|

||||

description: >

|

||||

Does SOC Grid show all services on all nodes as running OK?

|

||||

options:

|

||||

-

|

||||

- Yes, all services on all nodes are running OK

|

||||

- No, one or more services are failed (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Salt Status

|

||||

description: >

|

||||

Do you get any failures when you run "sudo salt-call state.highstate"?

|

||||

options:

|

||||

-

|

||||

- Yes, there are salt failures (please provide detail below)

|

||||

- No, there are no failures

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Logs

|

||||

description: >

|

||||

Are there any additional clues in /opt/so/log/?

|

||||

options:

|

||||

-

|

||||

- Yes, there are additional clues in /opt/so/log/ (please provide detail below)

|

||||

- No, there are no additional clues

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: Detail

|

||||

description: Please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and then provide detailed information to help us help you.

|

||||

placeholder: |-

|

||||

STOP! Before typing, please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 in their entirety!

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

validations:

|

||||

required: true

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: Guidelines

|

||||

options:

|

||||

- label: I have read the discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and assert that I have followed the guidelines.

|

||||

required: true

|

||||

2

.github/workflows/pythontest.yml

vendored


@@ -13,7 +13,7 @@ jobs:

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

python-version: ["3.13"]

|

||||

python-version: ["3.14"]

|

||||

python-code-path: ["salt/sensoroni/files/analyzers", "salt/manager/tools/sbin"]

|

||||

|

||||

steps:

|

||||

|

||||

66

README.md


@@ -1,50 +1,58 @@

|

||||

## Security Onion 2.4

|

||||

<p align="center">

|

||||

<img src="https://securityonionsolutions.com/logo/logo-so-onion-dark.svg" width="400" alt="Security Onion Logo">

|

||||

</p>

|

||||

|

||||

Security Onion 2.4 is here!

|

||||

# Security Onion

|

||||

|

||||

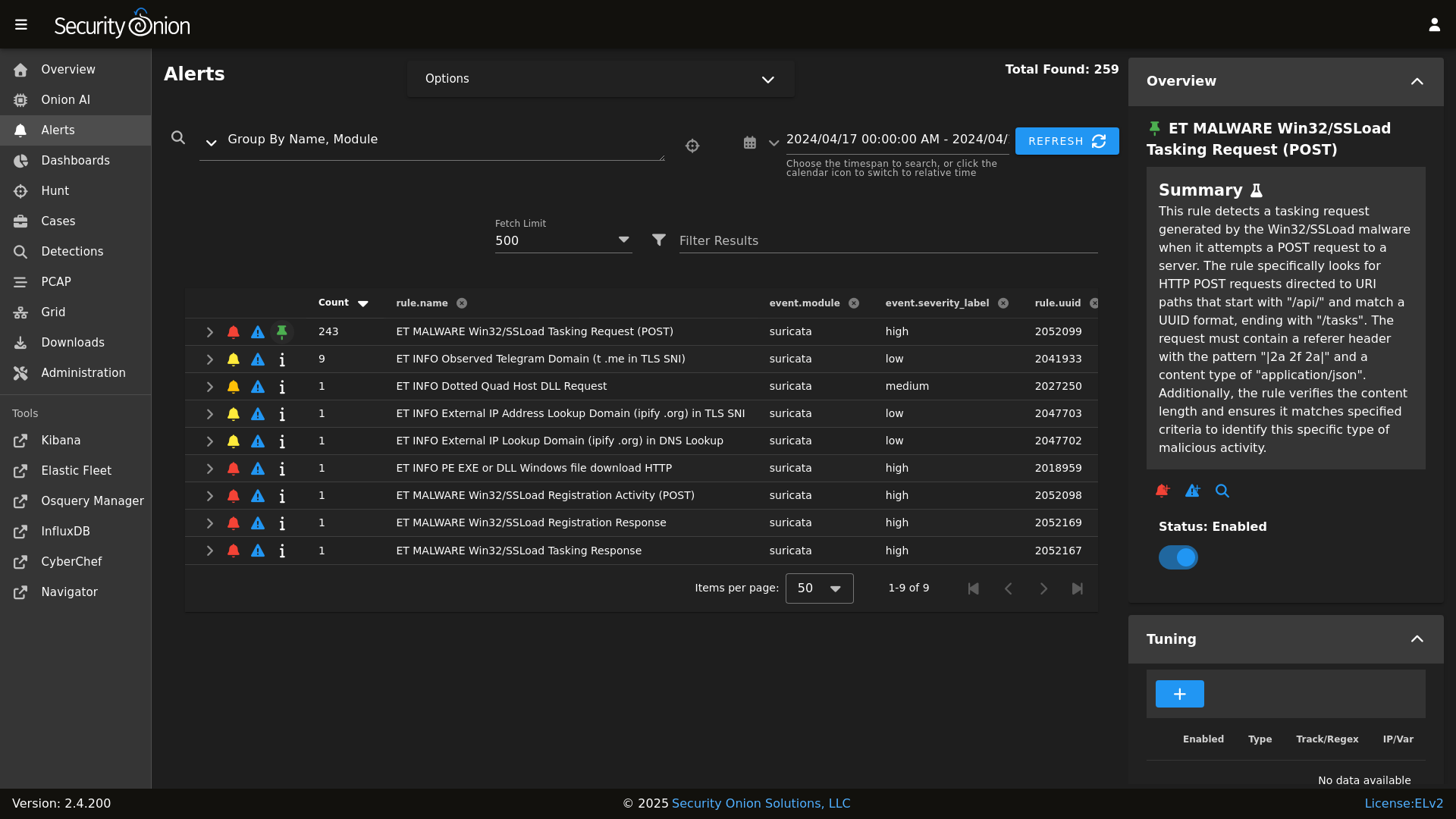

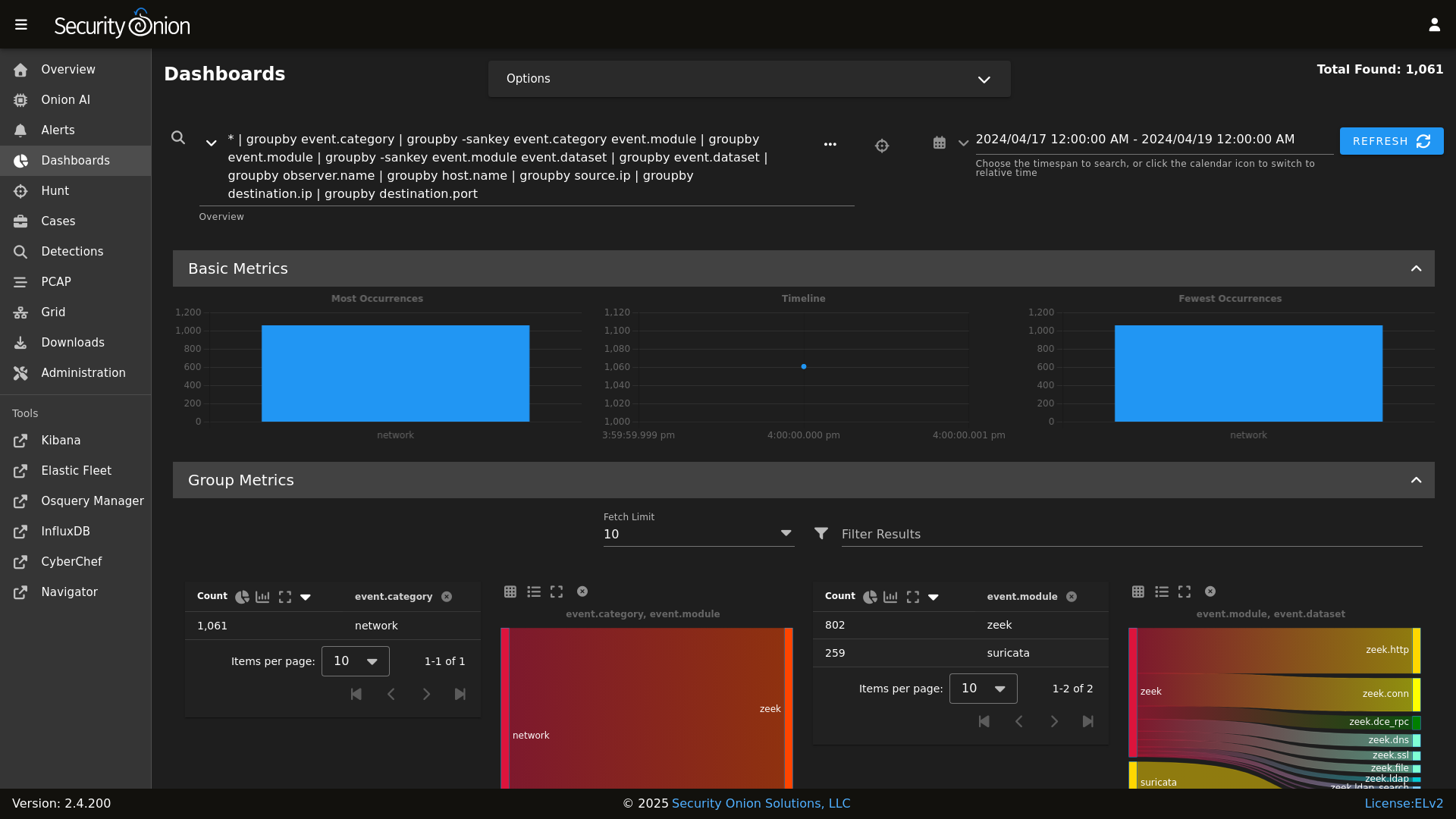

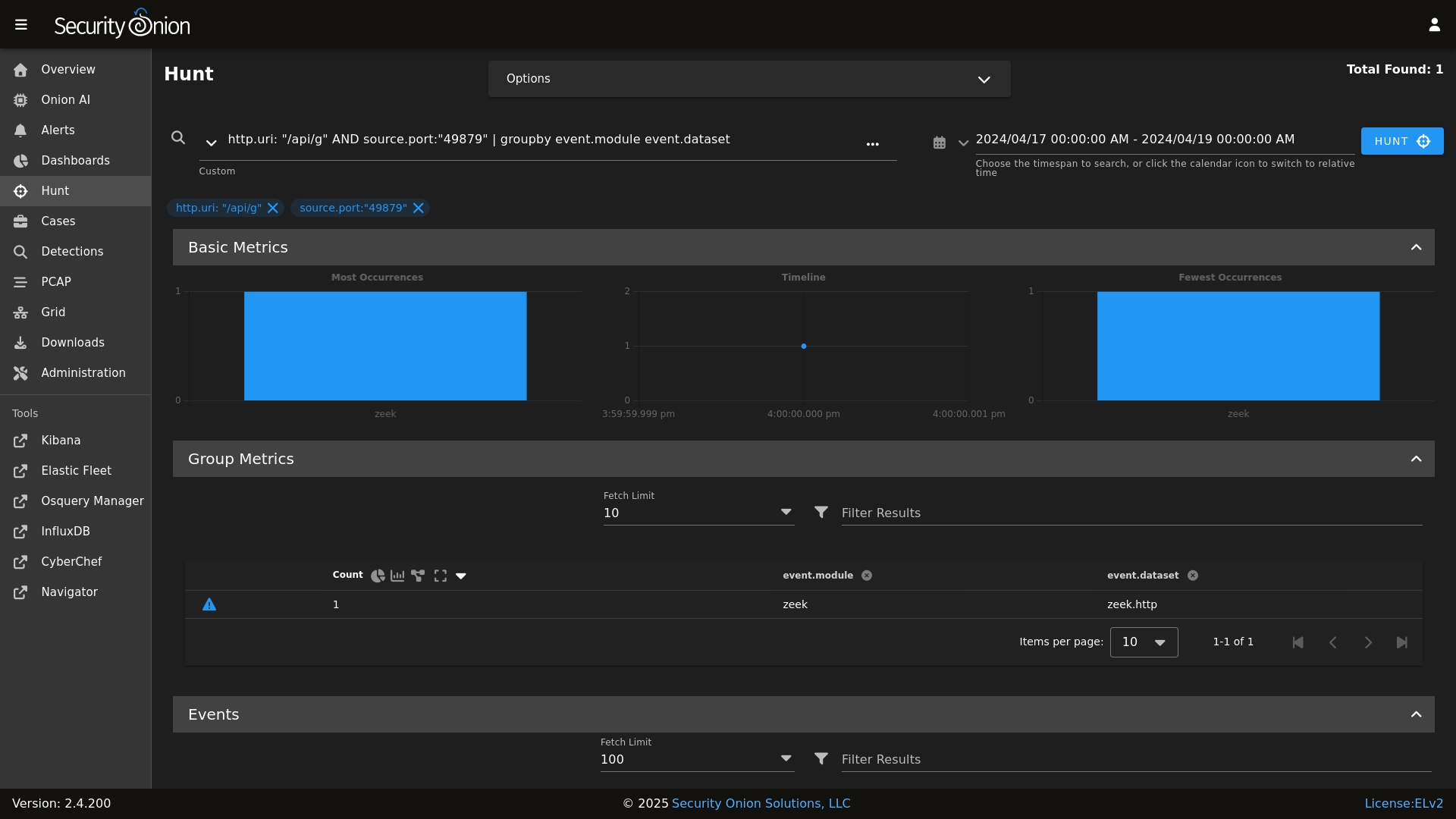

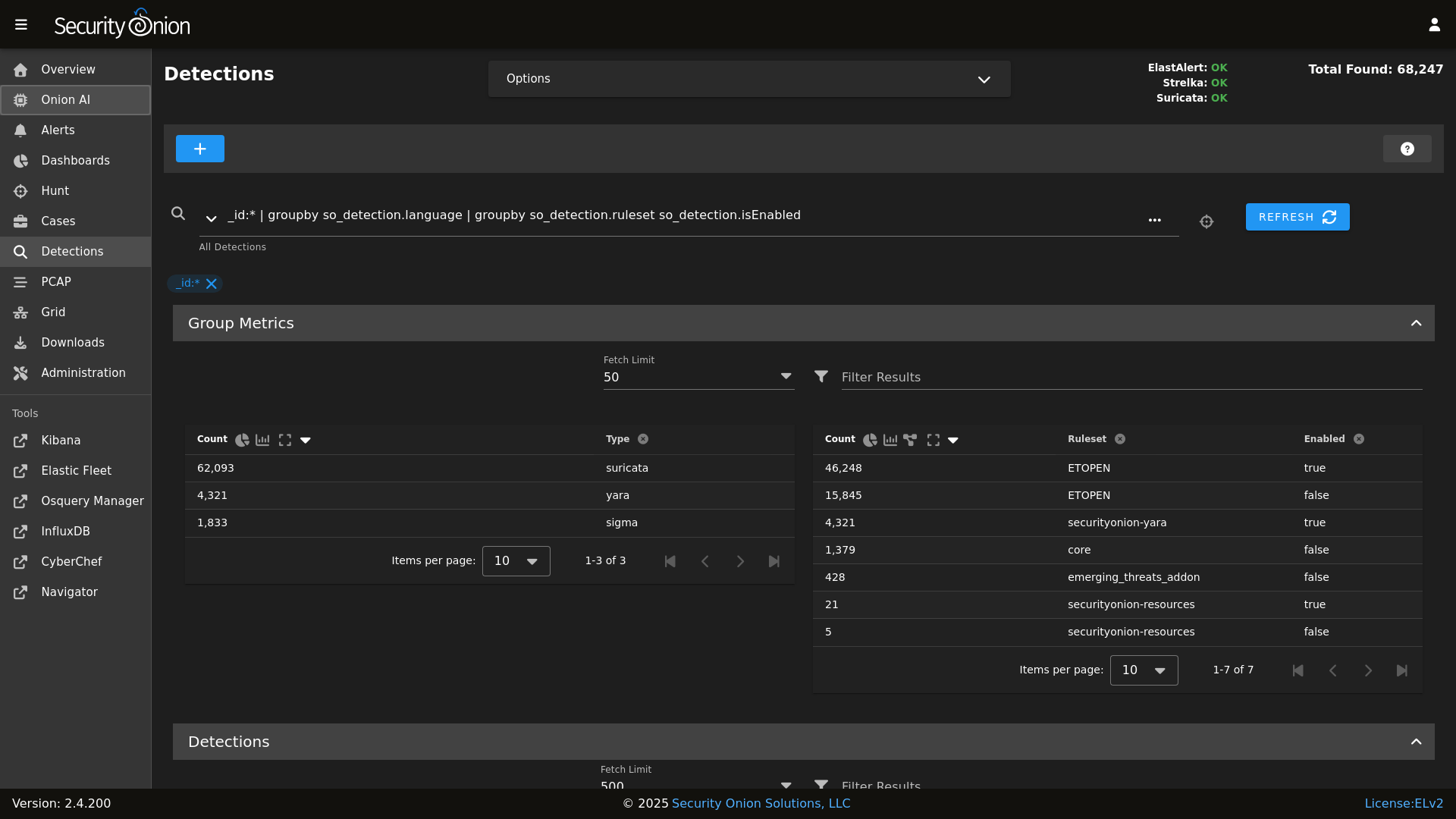

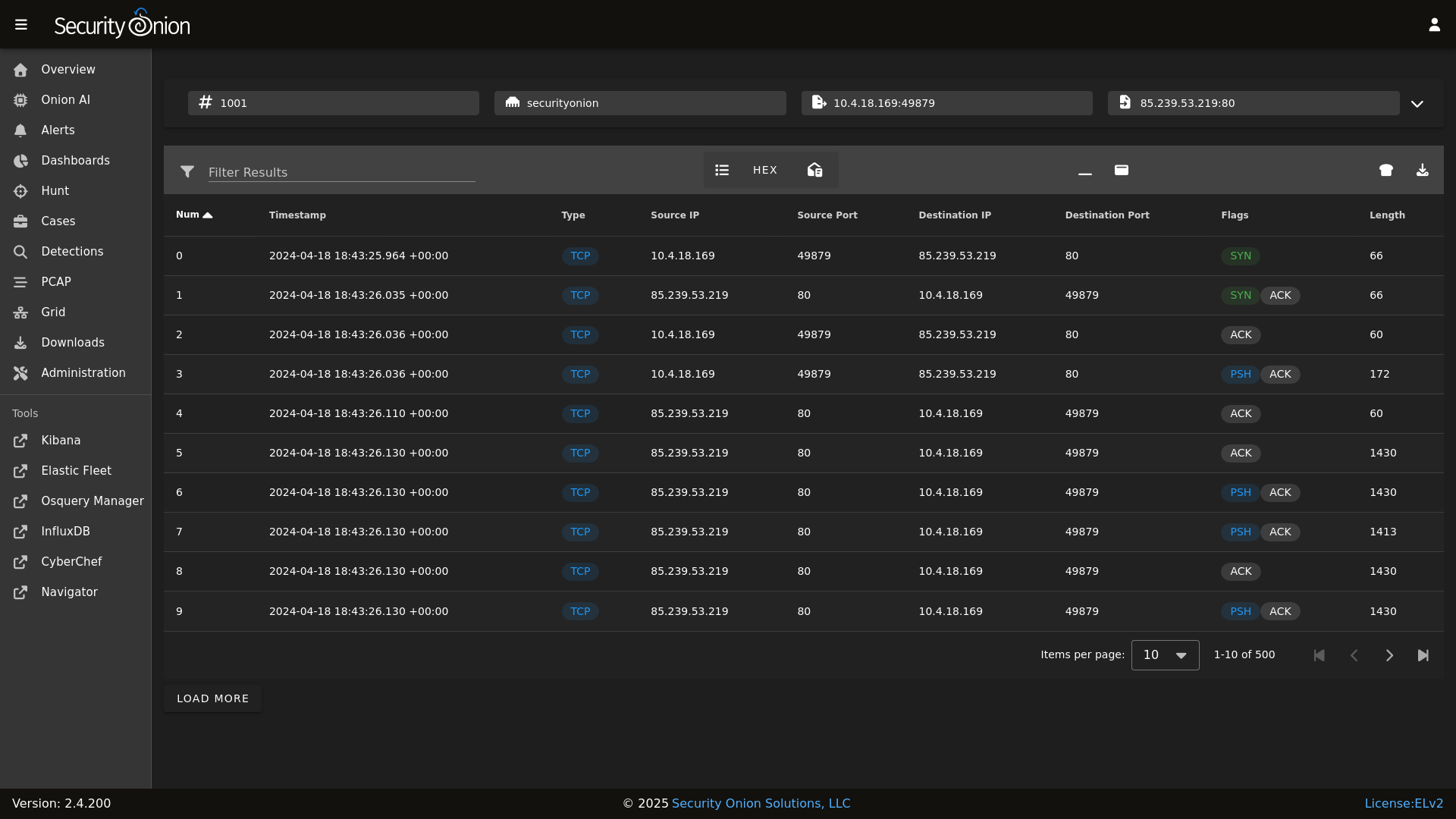

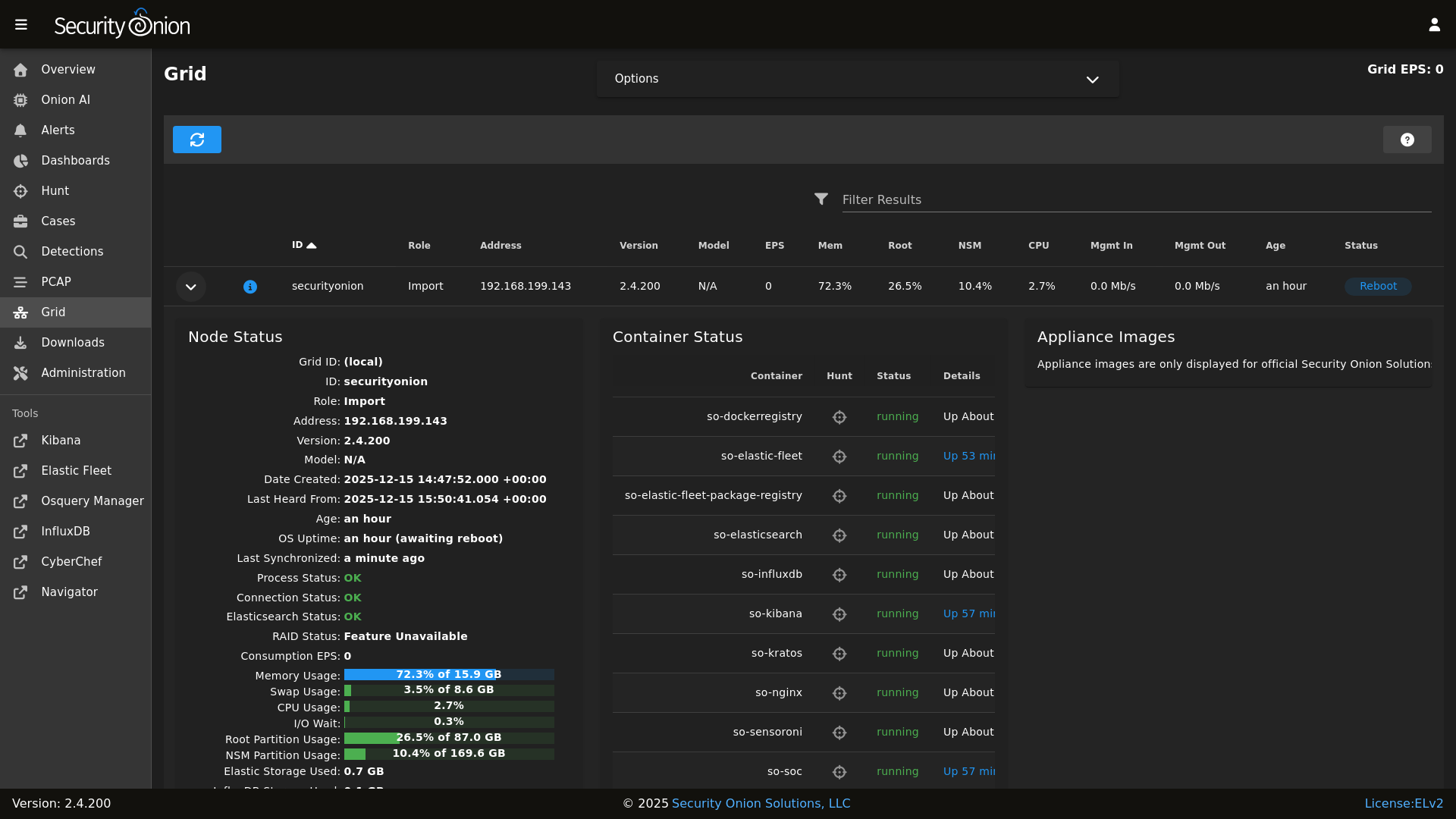

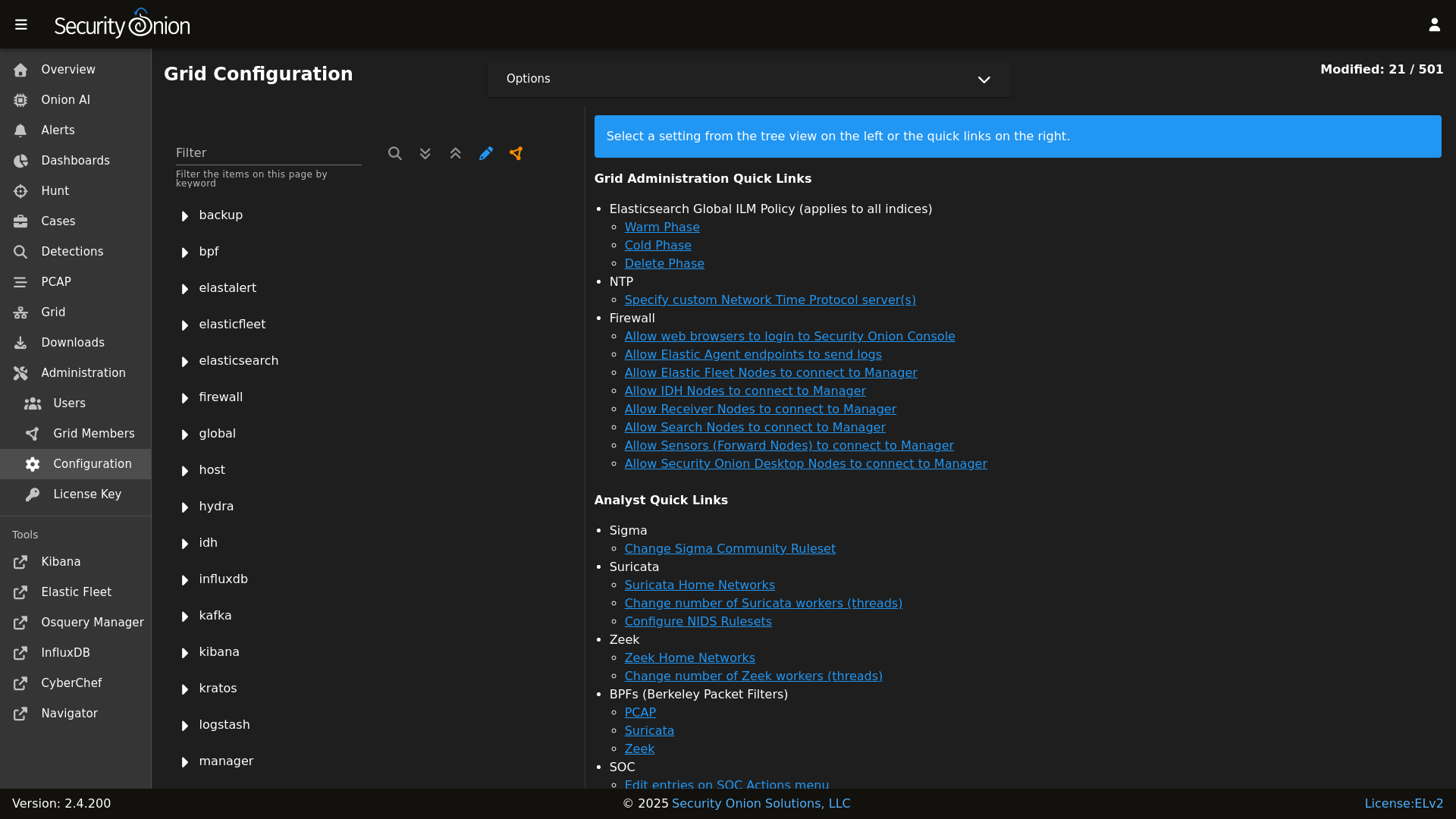

## Screenshots

|

||||

Security Onion is a free and open Linux distribution for threat hunting, enterprise security monitoring, and log management. It includes a comprehensive suite of tools designed to work together to provide visibility into your network and host activity.

|

||||

|

||||

Alerts

|

||||

|

||||

## ✨ Features

|

||||

|

||||

Dashboards

|

||||

|

||||

Security Onion includes everything you need to monitor your network and host systems:

|

||||

|

||||

Hunt

|

||||

|

||||

* **Security Onion Console (SOC)**: A unified web interface for analyzing security events and managing your grid.

|

||||

* **Elastic Stack**: Powerful search backed by Elasticsearch.

|

||||

* **Intrusion Detection**: Network-based IDS with Suricata and host-based monitoring with Elastic Fleet.

|

||||

* **Network Metadata**: Detailed network metadata generated by Zeek or Suricata.

|

||||

* **Full Packet Capture**: Retain and analyze raw network traffic with Suricata PCAP.

|

||||

|

||||

Detections

|

||||

|

||||

## ⭐ Security Onion Pro

|

||||

|

||||

PCAP

|

||||

|

||||

For organizations and enterprises requiring advanced capabilities, **Security Onion Pro** offers additional features designed for scale and efficiency:

|

||||

|

||||

Grid

|

||||

|

||||

* **Onion AI**: Leverage powerful AI-driven insights to accelerate your analysis and investigations.

|

||||

* **Enterprise Features**: Enhanced tools and integrations tailored for enterprise-grade security operations.

|

||||

|

||||

Config

|

||||

|

||||

For more information, visit the [Security Onion Pro](https://securityonionsolutions.com/pro) page.

|

||||

|

||||

### Release Notes

|

||||

## ☁️ Cloud Deployment

|

||||

|

||||

https://securityonion.net/docs/release-notes

|

||||

Security Onion is available and ready to deploy in the **AWS**, **Azure**, and **Google Cloud (GCP)** marketplaces.

|

||||

|

||||

### Requirements

|

||||

## 🚀 Getting Started

|

||||

|

||||

https://securityonion.net/docs/hardware

|

||||

| Goal | Resource |

|

||||

| :--- | :--- |

|

||||

| **Download** | [Security Onion ISO](https://securityonion.net/docs/download) |

|

||||

| **Requirements** | [Hardware Guide](https://securityonion.net/docs/hardware) |

|

||||

| **Install** | [Installation Instructions](https://securityonion.net/docs/installation) |

|

||||

| **What's New** | [Release Notes](https://securityonion.net/docs/release-notes) |

|

||||

|

||||

### Download

|

||||

## 📖 Documentation & Support

|

||||

|

||||

https://securityonion.net/docs/download

|

||||

For more detailed information, please visit our [Documentation](https://docs.securityonion.net).

|

||||

|

||||

### Installation

|

||||

* **FAQ**: [Frequently Asked Questions](https://securityonion.net/docs/faq)

|

||||

* **Community**: [Discussions & Support](https://securityonion.net/docs/community-support)

|

||||

* **Training**: [Official Training](https://securityonion.net/training)

|

||||

|

||||

https://securityonion.net/docs/installation

|

||||

## 🤝 Contributing

|

||||

|

||||

### FAQ

|

||||

We welcome contributions! Please see our [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines on how to get involved.

|

||||

|

||||

https://securityonion.net/docs/faq

|

||||

## 🛡️ License

|

||||

|

||||

### Feedback

|

||||

Security Onion is licensed under the terms of the license found in the [LICENSE](LICENSE) file.

|

||||

|

||||

https://securityonion.net/docs/community-support

|

||||

---

|

||||

*Built with 🧅 by Security Onion Solutions.*

|

||||

|

||||

@@ -4,6 +4,7 @@

|

||||

|

||||

| Version | Supported |

|

||||

| ------- | ------------------ |

|

||||

| 3.x | :white_check_mark: |

|

||||

| 2.4.x | :white_check_mark: |

|

||||

| 2.3.x | :x: |

|

||||

| 16.04.x | :x: |

|

||||

|

||||

@@ -87,8 +87,6 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -134,8 +132,6 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -185,8 +181,6 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -209,8 +203,6 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- strelka.soc_strelka

|

||||

@@ -297,8 +289,6 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- strelka.soc_strelka

|

||||

|

||||

@@ -1,24 +1,14 @@

|

||||

from os import path

|

||||

import subprocess

|

||||

|

||||

def check():

|

||||

|

||||

osfam = __grains__['os_family']

|

||||

retval = 'False'

|

||||

|

||||

if osfam == 'Debian':

|

||||

if path.exists('/var/run/reboot-required'):

|

||||

retval = 'True'

|

||||

cmd = 'needs-restarting -r > /dev/null 2>&1'

|

||||

|

||||

elif osfam == 'RedHat':

|

||||

cmd = 'needs-restarting -r > /dev/null 2>&1'

|

||||

|

||||

try:

|

||||

needs_restarting = subprocess.check_call(cmd, shell=True)

|

||||

except subprocess.CalledProcessError:

|

||||

retval = 'True'

|

||||

|

||||

else:

|

||||

retval = 'Unsupported OS: %s' % os

|

||||

try:

|

||||

needs_restarting = subprocess.check_call(cmd, shell=True)

|

||||

except subprocess.CalledProcessError:

|

||||

retval = 'True'

|

||||

|

||||

return retval

|

||||

|

||||
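Going by the hunk header (24 lines before, 14 after), this change appears to drop the `os_family` branching and keep a single `needs-restarting -r` call, which exits non-zero when a reboot is needed. A minimal Python sketch of that resulting shape is below. The `runner` parameter is a hypothetical addition, not part of the module in the diff, so the logic can be exercised without the real command. The string return values mirror the hunk.

```python
import subprocess


def check(runner=subprocess.check_call):
    """Return 'True' if a reboot appears to be needed, else 'False'.

    `runner` is a hypothetical injection point for testing; by default the
    real subprocess.check_call is used, as in the hunk.
    """
    cmd = 'needs-restarting -r > /dev/null 2>&1'
    try:
        # check_call raises CalledProcessError on a non-zero exit status.
        runner(cmd, shell=True)
    except subprocess.CalledProcessError:
        return 'True'
    return 'False'
```

Returning strings rather than booleans follows the original; a caller comparing against `True` would need to account for that.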

@@ -38,7 +38,6 @@

|

||||

] %}

|

||||

|

||||

{% set sensor_states = [

|

||||

'pcap',

|

||||

'suricata',

|

||||

'healthcheck',

|

||||

'tcpreplay',

|

||||

|

||||

@@ -1,10 +1,10 @@

|

||||

backup:

|

||||

locations:

|

||||

description: List of locations to back up to the destination.

|

||||

helpLink: backup.html

|

||||

helpLink: backup

|

||||

global: True

|

||||

destination:

|

||||

description: Directory to store the configuration backups in.

|

||||

helpLink: backup.html

|

||||

helpLink: backup

|

||||

global: True

|

||||

|

||||

@@ -1,21 +1,15 @@

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

{% set PCAP_BPF_STATUS = 0 %}

|

||||

{% set STENO_BPF_COMPILED = "" %}

|

||||

|

||||

{% if GLOBALS.pcap_engine == "TRANSITION" %}

|

||||

{% set PCAPBPF = ["ip and host 255.255.255.1 and port 1"] %}

|

||||

{% else %}

|

||||

{% import_yaml 'bpf/defaults.yaml' as BPFDEFAULTS %}

|

||||

{% set BPFMERGED = salt['pillar.get']('bpf', BPFDEFAULTS.bpf, merge=True) %}

|

||||

{% import 'bpf/macros.jinja' as MACROS %}

|

||||

{{ MACROS.remove_comments(BPFMERGED, 'pcap') }}

|

||||

{% set PCAPBPF = BPFMERGED.pcap %}

|

||||

{% endif %}

|

||||

|

||||

{% if PCAPBPF %}

|

||||

{% set PCAP_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + PCAPBPF|join(" "),cwd='/root') %}

|

||||

{% if PCAP_BPF_CALC['retcode'] == 0 %}

|

||||

{% set PCAP_BPF_STATUS = 1 %}

|

||||

{% set STENO_BPF_COMPILED = ",\\\"--filter=" + PCAP_BPF_CALC['stdout'] + "\\\"" %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

|

||||

@@ -3,14 +3,14 @@ bpf:

|

||||

description: List of BPF filters to apply to the PCAP engine.

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

helpLink: bpf.html

|

||||

helpLink: bpf

|

||||

suricata:

|

||||

description: List of BPF filters to apply to Suricata. This will apply to alerts and, if enabled, to metadata and PCAP logs generated by Suricata.

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

helpLink: bpf.html

|

||||

helpLink: bpf

|

||||

zeek:

|

||||

description: List of BPF filters to apply to Zeek.

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

helpLink: bpf.html

|

||||

helpLink: bpf

|

||||

|

||||

@@ -3,8 +3,6 @@

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

|

||||

include:

|

||||

- docker

|

||||

|

||||

@@ -18,9 +16,3 @@ trusttheca:

|

||||

- show_changes: False

|

||||

- makedirs: True

|

||||

|

||||

{% if GLOBALS.os_family == 'Debian' %}

|

||||

symlinkca:

|

||||

file.symlink:

|

||||

- target: /etc/pki/tls/certs/intca.crt

|

||||

- name: /etc/ssl/certs/intca.crt

|

||||

{% endif %}

|

||||

|

||||

@@ -1,12 +0,0 @@

|

||||

{

|

||||

"registry-mirrors": [

|

||||

"https://:5000"

|

||||

],

|

||||

"bip": "172.17.0.1/24",

|

||||

"default-address-pools": [

|

||||

{

|

||||

"base": "172.17.0.0/24",

|

||||

"size": 24

|

||||

}

|

||||

]

|

||||

}

|

||||

@@ -20,11 +20,6 @@ kernel.printk:

|

||||

sysctl.present:

|

||||

- value: "3 4 1 3"

|

||||

|

||||

# Remove variables.txt from /tmp - This is temp

|

||||

rmvariablesfile:

|

||||

file.absent:

|

||||

- name: /tmp/variables.txt

|

||||

|

||||

# Add socore Group

|

||||

socoregroup:

|

||||

group.present:

|

||||

@@ -149,28 +144,6 @@ common_sbin_jinja:

|

||||

- so-import-pcap

|

||||

{% endif %}

|

||||

|

||||

{% if GLOBALS.role == 'so-heavynode' %}

|

||||

remove_so-pcap-import_heavynode:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-pcap-import

|

||||

|

||||

remove_so-import-pcap_heavynode:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-import-pcap

|

||||

{% endif %}

|

||||

|

||||

{% if not GLOBALS.is_manager%}

|

||||

# prior to 2.4.50 these scripts were in common/tools/sbin on the manager because of soup and distributed to non managers

|

||||

# these two states remove the scripts from non manager nodes

|

||||

remove_soup:

|

||||

file.absent:

|

||||

- name: /usr/sbin/soup

|

||||

|

||||

remove_so-firewall:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-firewall

|

||||

{% endif %}

|

||||

|

||||

so-status_script:

|

||||

file.managed:

|

||||

- name: /usr/sbin/so-status

|

||||

|

||||

@@ -1,52 +1,5 @@

|

||||

# we cannot import GLOBALS from vars/globals.map.jinja in this state since it is called in setup.virt.init

|

||||

# since it is early in setup of a new VM, the pillars imported in GLOBALS are not yet defined

|

||||

{% if grains.os_family == 'Debian' %}

|

||||

commonpkgs:

|

||||

pkg.installed:

|

||||

- skip_suggestions: True

|

||||

- pkgs:

|

||||

- apache2-utils

|

||||

- wget

|

||||

- ntpdate

|

||||

- jq

|

||||

- curl

|

||||

- ca-certificates

|

||||

- software-properties-common

|

||||

- apt-transport-https

|

||||

- openssl

|

||||

- netcat-openbsd

|

||||

- sqlite3

|

||||

- libssl-dev

|

||||

- procps

|

||||

- python3-dateutil

|

||||

- python3-docker

|

||||

- python3-packaging

|

||||

- python3-lxml

|

||||

- git

|

||||

- rsync

|

||||

- vim

|

||||

- tar

|

||||

- unzip

|

||||

- bc

|

||||

{% if grains.oscodename != 'focal' %}

|

||||

- python3-rich

|

||||

{% endif %}

|

||||

|

||||

{% if grains.oscodename == 'focal' %}

|

||||

# since Ubuntu requires and internet connection we can use pip to install modules

|

||||

python3-pip:

|

||||

pkg.installed

|

||||

|

||||

python-rich:

|

||||

pip.installed:

|

||||

- name: rich

|

||||

- target: /usr/local/lib/python3.8/dist-packages/

|

||||

- require:

|

||||

- pkg: python3-pip

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

|

||||

{% if grains.os_family == 'RedHat' %}

|

||||

|

||||

remove_mariadb:

|

||||

pkg.removed:

|

||||

@@ -84,5 +37,3 @@ commonpkgs:

|

||||

- unzip

|

||||

- wget

|

||||

- yum-utils

|

||||

|

||||

{% endif %}

|

||||

|

||||

@@ -3,8 +3,6 @@

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}

|

||||

|

||||

{% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}

|

||||

{% if SOC_GLOBAL.global.airgap %}

|

||||

{% set UPDATE_DIR='/tmp/soagupdate/SecurityOnion' %}

|

||||

@@ -13,14 +11,6 @@

|

||||

{% endif %}

|

||||

{% set SOVERSION = salt['file.read']('/etc/soversion').strip() %}

|

||||

|

||||

remove_common_soup:

|

||||

file.absent:

|

||||

- name: /opt/so/saltstack/default/salt/common/tools/sbin/soup

|

||||

|

||||

remove_common_so-firewall:

|

||||

file.absent:

|

||||

- name: /opt/so/saltstack/default/salt/common/tools/sbin/so-firewall

|

||||

|

||||

# This section is used to put the scripts in place in the Salt file system

|

||||

# in case a state run tries to overwrite what we do in the next section.

|

||||

copy_so-common_common_tools_sbin:

|

||||

@@ -120,23 +110,3 @@ copy_bootstrap-salt_sbin:

|

||||

- source: {{UPDATE_DIR}}/salt/salt/scripts/bootstrap-salt.sh

|

||||

- force: True

|

||||

- preserve: True

|

||||

|

||||

{# this is added in 2.4.120 to remove salt repo files pointing to saltproject.io to accomodate the move to broadcom and new bootstrap-salt script #}

|

||||

{% if salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1 %}

|

||||

{% set saltrepofile = '/etc/yum.repos.d/salt.repo' %}

|

||||

{% if grains.os_family == 'Debian' %}

|

||||

{% set saltrepofile = '/etc/apt/sources.list.d/salt.list' %}

|

||||

{% endif %}

|

||||

remove_saltproject_io_repo_manager:

|

||||

file.absent:

|

||||

- name: {{ saltrepofile }}

|

||||

{% endif %}

|

||||

|

||||

{% else %}

|

||||

fix_23_soup_sbin:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /usr/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

fix_23_soup_salt:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /opt/so/saltstack/defalt/salt/common/tools/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

{% endif %}

|

||||

|

||||

@@ -16,7 +16,7 @@

|

||||

|

||||

if [ "$#" -lt 2 ]; then

|

||||

cat 1>&2 <<EOF

|

||||

$0 compiles a BPF expression to be passed to stenotype to apply a socket filter.

|

||||

$0 compiles a BPF expression to be passed to PCAP to apply a socket filter.

|

||||

Its first argument is the interface (link type is required) and all other arguments

|

||||

are passed to TCPDump.

|

||||

|

||||

|

||||

@@ -333,8 +333,8 @@ get_elastic_agent_vars() {

|

||||

|

||||

if [ -f "$defaultsfile" ]; then

|

||||

ELASTIC_AGENT_TARBALL_VERSION=$(egrep " +version: " $defaultsfile | awk -F: '{print $2}' | tr -d '[:space:]')

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent

|

||||
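The hunk above only swaps the repository path segment from `prod/2.4` to `prod/3`; the version is still read from the defaults file. As a rough illustration of how the two URLs are derived, here is a Python sketch. The function names and the `repo_branch` parameter are hypothetical, and `parse_version` returns only the first matching line, whereas the shell pipeline would concatenate every match.

```python
import re
from typing import Optional

BASE = "https://repo.securityonion.net/file/so-repo/prod"


def parse_version(defaults_text: str) -> Optional[str]:
    """Approximate: egrep " +version: " | awk -F: '{print $2}' | tr -d '[:space:]'."""
    for line in defaults_text.splitlines():
        if re.search(r" +version: ", line):
            # Take the field after the first colon and strip all whitespace.
            return "".join(line.split(":")[1].split())
    return None


def elastic_agent_urls(version: str, repo_branch: str = "3") -> dict:
    """Build the tarball and MD5 URLs for a given agent tarball version."""
    stem = f"{BASE}/{repo_branch}/elasticagent/elastic-agent_SO-{version}"
    return {"tarball": f"{stem}.tar.gz", "md5": f"{stem}.md5"}
```

Keeping the path segment as a parameter makes the 2.4-to-3 switch a one-argument change in this sketch; the actual script hard-codes it.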

@@ -349,21 +349,16 @@ get_random_value() {

|

||||

}

|

||||

|

||||

gpg_rpm_import() {

|

||||

if [[ $is_oracle ]]; then

|

||||

if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then

|

||||

local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"

|

||||

else

|

||||

local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"

|

||||

fi

|

||||

RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')

|

||||

for RPMKEY in "${RPMKEYS[@]}"; do

|

||||

rpm --import $RPMKEYSLOC/$RPMKEY

|

||||

echo "Imported $RPMKEY"

|

||||

done

|

||||

elif [[ $is_rpm ]]; then

|

||||

echo "Importing the security onion GPG key"

|

||||

rpm --import ../salt/repo/client/files/oracle/keys/securityonion.pub

|

||||

if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then

|

||||

local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"

|

||||

else

|

||||

local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"

|

||||

fi

|

||||

RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')

|

||||

for RPMKEY in "${RPMKEYS[@]}"; do

|

||||

rpm --import $RPMKEYSLOC/$RPMKEY

|

||||

echo "Imported $RPMKEY"

|

||||

done

|

||||

}

|

||||

|

||||

header() {

|

||||

@@ -550,6 +545,22 @@ retry() {

|

||||

return $exitcode

|

||||

}

|

||||

|

||||

rollover_index() {

|

||||

idx=$1

|

||||

exists=$(so-elasticsearch-query $idx -o /dev/null -w "%{http_code}")

|

||||

if [[ $exists -eq 200 ]]; then

|

||||

rollover=$(so-elasticsearch-query $idx/_rollover -o /dev/null -w "%{http_code}" -XPOST)

|

||||

|

||||

if [[ $rollover -eq 200 ]]; then

|

||||

echo "Successfully triggered rollover for $idx..."

|

||||

else

|

||||

echo "Could not trigger rollover for $idx..."

|

||||

fi

|

||||

else

|

||||

echo "Could not find index $idx..."

|

||||

fi

|

||||

}

|

||||
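The new `rollover_index` helper follows a two-step pattern: confirm the index exists (HTTP 200), then POST to `<index>/_rollover` and report the outcome. The same control flow can be sketched in Python. Here `query` is a hypothetical stand-in for `so-elasticsearch-query` that returns only the HTTP status code, and the function returns the message instead of echoing it.

```python
from typing import Callable


def rollover_index(idx: str, query: Callable[..., int]) -> str:
    """Mirror the shell helper's branches and messages.

    `query(path, method=...)` is an assumed callable returning an HTTP
    status code; it is not a real Security Onion API.
    """
    if query(idx, method="GET") != 200:
        return f"Could not find index {idx}..."
    if query(f"{idx}/_rollover", method="POST") == 200:
        return f"Successfully triggered rollover for {idx}..."
    return f"Could not trigger rollover for {idx}..."
```

Injecting the query function keeps the sketch free of network access, so each of the three branches can be checked with a small fake.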

|

||||

run_check_net_err() {

|

||||

local cmd=$1

|

||||

local err_msg=${2:-"Unknown error occured, please check /root/$WHATWOULDYOUSAYYAHDOHERE.log for details."} # Really need to rename that variable

|

||||

@@ -615,69 +626,19 @@ salt_minion_count() {

|

||||

}

|

||||

|

||||

set_os() {

|

||||

if [ -f /etc/redhat-release ]; then

|

||||

if grep -q "Rocky Linux release 9" /etc/redhat-release; then

|

||||

OS=rocky

|

||||

OSVER=9

|

||||

is_rocky=true

|

||||

is_rpm=true

|

||||

elif grep -q "CentOS Stream release 9" /etc/redhat-release; then

|

||||

OS=centos

|

||||

OSVER=9

|

||||

is_centos=true

|

||||

is_rpm=true

|

||||

elif grep -q "AlmaLinux release 9" /etc/redhat-release; then

|

||||

OS=alma

|

||||

OSVER=9

|

||||

is_alma=true

|

||||

is_rpm=true

|

||||

elif grep -q "Red Hat Enterprise Linux release 9" /etc/redhat-release; then

|

||||

if [ -f /etc/oracle-release ]; then

|

||||

OS=oracle

|

||||

OSVER=9

|

||||

is_oracle=true

|

||||

is_rpm=true

|

||||

else

|

||||

OS=rhel

|

||||

OSVER=9

|

||||

is_rhel=true

|

||||

is_rpm=true

|

||||

fi

|

||||

fi

|

||||

cron_service_name="crond"

|

||||

elif [ -f /etc/os-release ]; then

|

||||

if grep -q "UBUNTU_CODENAME=focal" /etc/os-release; then

|

||||

OSVER=focal

|

||||

UBVER=20.04

|

||||

OS=ubuntu

|

||||

is_ubuntu=true

|

||||

is_deb=true

|

||||

elif grep -q "UBUNTU_CODENAME=jammy" /etc/os-release; then

|

||||

OSVER=jammy

|

||||

UBVER=22.04

|

||||

OS=ubuntu

|

||||

is_ubuntu=true

|

||||

is_deb=true

|

||||

elif grep -q "VERSION_CODENAME=bookworm" /etc/os-release; then

|

||||

OSVER=bookworm

|

||||

DEBVER=12

|

||||

is_debian=true

|

||||

OS=debian

|

||||

is_deb=true

|

||||

fi

|

||||

cron_service_name="cron"

|

||||

if [ -f /etc/redhat-release ] && grep -q "Red Hat Enterprise Linux release 9" /etc/redhat-release && [ -f /etc/oracle-release ]; then

|

||||

OS=oracle

|

||||

OSVER=9

|

||||

is_oracle=true

|

||||

is_rpm=true

|

||||

fi

|

||||

cron_service_name="crond"

|

||||

}

|

||||

|

||||

set_minionid() {

|

||||

MINIONID=$(lookup_grain id)

|

||||

}

|

||||

|

||||

set_palette() {

|

||||

if [[ $is_deb ]]; then

|

||||

update-alternatives --set newt-palette /etc/newt/palette.original

|

||||

fi

|

||||

}

|

||||

|

||||

set_version() {

|

||||

CURRENTVERSION=0.0.0

|

||||

|

||||

@@ -32,7 +32,6 @@ container_list() {

|

||||

"so-nginx"

|

||||

"so-pcaptools"

|

||||

"so-soc"

|

||||

"so-steno"

|

||||

"so-suricata"

|

||||

"so-telegraf"

|

||||

"so-zeek"

|

||||

@@ -58,7 +57,6 @@ container_list() {

|

||||

"so-pcaptools"

|

||||

"so-redis"

|

||||

"so-soc"

|

||||

"so-steno"

|

||||

"so-strelka-backend"

|

||||

"so-strelka-manager"

|

||||

"so-suricata"

|

||||

@@ -71,7 +69,6 @@ container_list() {

|

||||

"so-logstash"

|

||||

"so-nginx"

|

||||

"so-redis"

|

||||

"so-steno"

|

||||

"so-suricata"

|

||||

"so-soc"

|

||||

"so-telegraf"

|

||||

|

||||

@@ -131,6 +131,7 @@ if [[ $EXCLUDE_STARTUP_ERRORS == 'Y' ]]; then

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|not configured for GeoIP" # SO does not bundle the maxminddb with Zeek

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|HTTP 404: Not Found" # Salt loops until Kratos returns 200, during startup Kratos may not be ready

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Cancelling deferred write event maybeFenceReplicas because the event queue is now closed" # Kafka controller log during shutdown/restart

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Redis may have been restarted" # Redis likely restarted by salt

|

||||

fi

|

||||

|

||||

if [[ $EXCLUDE_FALSE_POSITIVE_ERRORS == 'Y' ]]; then

|

||||

@@ -179,7 +180,6 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|salt-minion-check" # bug in early 2.4 place Jinja script in non-jinja salt dir causing cron output errors

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|monitoring.metrics" # known issue with elastic agent casting the field incorrectly if an integer value shows up before a float

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|repodownload.conf" # known issue with reposync on pre-2.4.20

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|missing versions record" # stenographer corrupt index

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|soc.field." # known ingest type collisions issue with earlier versions of SO

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|error parsing signature" # Malformed Suricata rule, from upstream provider

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|sticky buffer has no matches" # Non-critical Suricata error

|

||||

|

||||

@@ -55,19 +55,22 @@ if [ $SKIP -ne 1 ]; then

|

||||

fi

|

||||

|

||||

delete_pcap() {

|

||||

PCAP_DATA="/nsm/pcap/"

|

||||

[ -d $PCAP_DATA ] && so-pcap-stop && rm -rf $PCAP_DATA/* && so-pcap-start

|

||||

PCAP_DATA="/nsm/suripcap/"

|

||||

[ -d $PCAP_DATA ] && rm -rf $PCAP_DATA/*

|

||||

}

|

||||

delete_suricata() {

|

||||

SURI_LOG="/nsm/suricata/"

|

||||

[ -d $SURI_LOG ] && so-suricata-stop && rm -rf $SURI_LOG/* && so-suricata-start

|

||||

[ -d $SURI_LOG ] && rm -rf $SURI_LOG/*

|

||||

}

|

||||

delete_zeek() {

|

||||

ZEEK_LOG="/nsm/zeek/logs/"

|

||||

[ -d $ZEEK_LOG ] && so-zeek-stop && rm -rf $ZEEK_LOG/* && so-zeek-start

|

||||

}

|

||||

|

||||

so-suricata-stop

|

||||

delete_pcap

|

||||

delete_suricata

|

||||

delete_zeek

|

||||

so-suricata-start

|

||||

|

||||

|

||||

|

||||

@@ -23,7 +23,6 @@ if [ $# -ge 1 ]; then

|

||||

fi

|

||||

|

||||

case $1 in

|

||||

"steno") docker stop so-steno && docker rm so-steno && salt-call state.apply pcap queue=True;;

|

||||

"elastic-fleet") docker stop so-elastic-fleet && docker rm so-elastic-fleet && salt-call state.apply elasticfleet queue=True;;

|

||||

*) docker stop so-$1 ; docker rm so-$1 ; salt-call state.apply $1 queue=True;;

|

||||

esac

|

||||

|

||||

@@ -72,7 +72,7 @@ clean() {

|

||||

done

|

||||

fi

|

||||

|

||||

## Clean up extracted pcaps from Steno

|

||||

## Clean up extracted pcaps

|

||||

PCAPS='/nsm/pcapout'

|

||||

OLDEST_PCAP=$(find $PCAPS -type f -printf '%T+ %p\n' | sort -n | head -n 1)

|

||||

if [ -z "$OLDEST_PCAP" -o "$OLDEST_PCAP" == ".." -o "$OLDEST_PCAP" == "." ]; then

|

||||

|

||||

@@ -23,7 +23,6 @@ if [ $# -ge 1 ]; then

|

||||

|

||||

case $1 in

|

||||

"all") salt-call state.highstate queue=True;;

|

||||

"steno") if docker ps | grep -q so-$1; then printf "\n$1 is already running!\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply pcap queue=True; fi ;;

|

||||

"elastic-fleet") if docker ps | grep -q so-$1; then printf "\n$1 is already running!\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply elasticfleet queue=True; fi ;;

|

||||

*) if docker ps | grep -E -q '^so-$1$'; then printf "\n$1 is already running\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply $1 queue=True; fi ;;

|

||||

esac

|

||||

|

||||

@@ -1,34 +0,0 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

so-curator:

|

||||

docker_container.absent:

|

||||

- force: True

|

||||

|

||||

so-curator_so-status.disabled:

|

||||

file.line:

|

||||

- name: /opt/so/conf/so-status/so-status.conf

|

||||

- match: ^so-curator$

|

||||

- mode: delete

|

||||

|

||||

so-curator-cluster-close:

|

||||

cron.absent:

|

||||

- identifier: so-curator-cluster-close

|

||||

|

||||

so-curator-cluster-delete:

|

||||

cron.absent:

|

||||

- identifier: so-curator-cluster-delete

|

||||

|

||||

delete_curator_configuration:

|

||||

file.absent:

|

||||

- name: /opt/so/conf/curator

|

||||

- recurse: True

|

||||

|

||||

{% set files = salt.file.find(path='/usr/sbin', name='so-curator*') %}

|

||||

{% if files|length > 0 %}

|

||||

delete_curator_scripts:

|

||||

file.absent:

|

||||

- names: {{files|yaml}}

|

||||

{% endif %}

|

||||

@@ -1,6 +1,10 @@

|

||||

docker:

|

||||

range: '172.17.1.0/24'

|

||||

gateway: '172.17.1.1'

|

||||

ulimits:

|

||||

- name: nofile

|

||||

soft: 1048576

|

||||

hard: 1048576

|

||||

containers:

|

||||

'so-dockerregistry':

|

||||

final_octet: 20

|

||||

@@ -9,6 +13,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-elastic-fleet':

|

||||

final_octet: 21

|

||||

port_bindings:

|

||||

@@ -16,6 +21,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-elasticsearch':

|

||||

final_octet: 22

|

||||

port_bindings:

|

||||

@@ -24,6 +30,16 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits:

|

||||

- name: memlock

|

||||

soft: -1

|

||||

hard: -1

|

||||

- name: nofile

|

||||

soft: 65536

|

||||

hard: 65536

|

||||

- name: nproc

|

||||

soft: 4096

|

||||

hard: 4096

|

||||

'so-influxdb':

|

||||

final_octet: 26

|

||||

port_bindings:

|

||||

@@ -31,6 +47,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-kibana':

|

||||

final_octet: 27

|

||||

port_bindings:

|

||||

@@ -38,6 +55,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-kratos':

|

||||

final_octet: 28

|

||||

port_bindings:

|

||||

@@ -46,6 +64,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-hydra':

|

||||

final_octet: 30

|

||||

port_bindings:

|

||||

@@ -54,6 +73,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-logstash':

|

||||

final_octet: 29

|

||||

port_bindings:

|

||||

@@ -70,6 +90,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-nginx':

|

||||

final_octet: 31

|

||||

port_bindings:

|

||||

@@ -81,6 +102,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-nginx-fleet-node':

|

||||

final_octet: 31

|

||||

port_bindings:

|

||||

@@ -88,6 +110,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-redis':

|

||||

final_octet: 33

|

||||

port_bindings:

|

||||

@@ -96,11 +119,13 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-sensoroni':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-soc':

|

||||

final_octet: 34

|

||||

port_bindings:

|

||||

@@ -108,16 +133,19 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-backend':

|

||||

final_octet: 36

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-filestream':

|

||||

final_octet: 37

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-frontend':

|

||||

final_octet: 38

|

||||

port_bindings:

|

||||

@@ -125,11 +153,13 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-manager':

|

||||

final_octet: 39

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-gatekeeper':

|

||||

final_octet: 40

|

||||

port_bindings:

|

||||

@@ -137,6 +167,7 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-strelka-coordinator':

|

||||

final_octet: 41

|

||||

port_bindings:

|

||||

@@ -144,11 +175,13 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-elastalert':

|

||||

final_octet: 42

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-elastic-fleet-package-registry':

|

||||

final_octet: 44

|

||||

port_bindings:

|

||||

@@ -156,11 +189,13 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-idh':

|

||||

final_octet: 45

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-elastic-agent':

|

||||

final_octet: 46

|

||||

port_bindings:

|

||||

@@ -169,28 +204,28 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-telegraf':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

'so-steno':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

'so-suricata':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits:

|

||||

- memlock=524288000

|

||||

ulimits: []

|

||||

'so-zeek':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits:

|

||||

- name: core

|

||||

soft: 0

|

||||

hard: 0

|

||||

'so-kafka':

|

||||

final_octet: 88

|

||||

port_bindings:

|

||||

@@ -201,3 +236,4 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

ulimits: []

|

||||

|

||||

@@ -1,8 +1,8 @@

|

||||

{% import_yaml 'docker/defaults.yaml' as DOCKERDEFAULTS %}

|

||||

{% set DOCKER = salt['pillar.get']('docker', DOCKERDEFAULTS.docker, merge=True) %}

|

||||

{% set RANGESPLIT = DOCKER.range.split('.') %}

|

||||

{% set DOCKERMERGED = salt['pillar.get']('docker', DOCKERDEFAULTS.docker, merge=True) %}

|

||||

{% set RANGESPLIT = DOCKERMERGED.range.split('.') %}

|

||||

{% set FIRSTTHREE = RANGESPLIT[0] ~ '.' ~ RANGESPLIT[1] ~ '.' ~ RANGESPLIT[2] ~ '.' %}

|

||||

|

||||

{% for container, vals in DOCKER.containers.items() %}

|

||||

{% do DOCKER.containers[container].update({'ip': FIRSTTHREE ~ DOCKER.containers[container].final_octet}) %}

|

||||

{% for container, vals in DOCKERMERGED.containers.items() %}

|

||||

{% do DOCKERMERGED.containers[container].update({'ip': FIRSTTHREE ~ DOCKERMERGED.containers[container].final_octet}) %}

|

||||

{% endfor %}

|

||||

|

||||
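Review note: the map file above derives each container's IP by taking the first three octets of `docker.range` and appending that container's `final_octet`. The following is a minimal Python sketch of that derivation, not the Salt/Jinja implementation itself. The function names `first_three` and `add_container_ips` are hypothetical and chosen only for illustration; the sample values are taken from the defaults shown earlier in this diff.

```python
def first_three(docker_range):
    """Return the first three octets of a range such as '172.17.1.0/24', with a trailing dot."""
    parts = docker_range.split(".")
    return f"{parts[0]}.{parts[1]}.{parts[2]}."


def add_container_ips(docker):
    """Add an 'ip' key to every container, mirroring the loop in docker.map.jinja."""
    prefix = first_three(docker["range"])
    for vals in docker["containers"].values():
        vals["ip"] = f"{prefix}{vals['final_octet']}"
    return docker


# Sample data using values from docker/defaults.yaml as shown in this diff.
example = {
    "range": "172.17.1.0/24",
    "containers": {
        "so-dockerregistry": {"final_octet": 20},
        "so-elasticsearch": {"final_octet": 22},
    },
}

merged = add_container_ips(example)
print(merged["containers"]["so-elasticsearch"]["ip"])
```

Because only the first three octets are used, this approach implicitly assumes the configured range is a /24 or at least that every `final_octet` falls inside it.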

24

salt/docker/files/daemon.json.jinja

Normal file

@@ -0,0 +1,24 @@

{% from 'docker/docker.map.jinja' import DOCKERMERGED -%}
{
  "registry-mirrors": [
    "https://:5000"
  ],
  "bip": "172.17.0.1/24",
  "default-address-pools": [
    {
      "base": "172.17.0.0/24",
      "size": 24
    }
  ]
  {%- if DOCKERMERGED.ulimits %},
  "default-ulimits": {
    {%- for ULIMIT in DOCKERMERGED.ulimits %}
    "{{ ULIMIT.name }}": {
      "Name": "{{ ULIMIT.name }}",
      "Soft": {{ ULIMIT.soft }},
      "Hard": {{ ULIMIT.hard }}
    }{{ "," if not loop.last else "" }}
    {%- endfor %}
  }
  {%- endif %}
}

|

||||
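Review note: the new `daemon.json.jinja` template emits a `default-ulimits` object only when the merged `ulimits` list is non-empty, with one entry per resource name. The sketch below reproduces that mapping in plain Python to show the expected shape of the output. It is an illustration under assumptions, not the rendering Salt performs: `default_ulimits` and `render_daemon_fragment` are hypothetical helper names, and the sample list is the `nofile` default added to `docker/defaults.yaml` in this diff.

```python
import json


def default_ulimits(ulimits):
    """Map a list of {name, soft, hard} entries to Docker's default-ulimits layout."""
    return {
        u["name"]: {"Name": u["name"], "Soft": u["soft"], "Hard": u["hard"]}
        for u in ulimits
    }


def render_daemon_fragment(ulimits):
    """Return only the ulimit-related part of daemon.json; empty when no ulimits are set."""
    fragment = {}
    if ulimits:
        fragment["default-ulimits"] = default_ulimits(ulimits)
    return fragment


# The daemon-wide default from docker/defaults.yaml in this diff.
defaults = [{"name": "nofile", "soft": 1048576, "hard": 1048576}]
print(json.dumps(render_daemon_fragment(defaults), indent=2))
```

One difference worth noting: a dict keyed by name silently collapses duplicate resource names, whereas the template would emit duplicate JSON keys if the same name were listed twice.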

@@ -3,7 +3,7 @@

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'docker/docker.map.jinja' import DOCKER %}

|

||||

{% from 'docker/docker.map.jinja' import DOCKERMERGED %}

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

|

||||

# docker service requires the ca.crt

|

||||

@@ -15,39 +15,6 @@ dockergroup:

|

||||

- name: docker

|

||||

- gid: 920

|

||||

|

||||

{% if GLOBALS.os_family == 'Debian' %}

|

||||

{% if grains.oscodename == 'bookworm' %}

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 2.2.1-1~debian.12~bookworm

|

||||

- docker-ce: 5:29.2.1-1~debian.12~bookworm

|

||||

- docker-ce-cli: 5:29.2.1-1~debian.12~bookworm

|

||||

- docker-ce-rootless-extras: 5:29.2.1-1~debian.12~bookworm

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% elif grains.oscodename == 'jammy' %}

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 2.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce-cli: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce-rootless-extras: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% else %}

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 1.7.21-1

|

||||

- docker-ce: 5:27.2.0-1~ubuntu.20.04~focal

|

||||

- docker-ce-cli: 5:27.2.0-1~ubuntu.20.04~focal

|

||||

- docker-ce-rootless-extras: 5:27.2.0-1~ubuntu.20.04~focal

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% endif %}

|

||||

{% else %}

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

@@ -57,7 +24,6 @@ dockerheldpackages:

|

||||

- docker-ce-rootless-extras: 29.2.1-1.el9

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% endif %}

|

||||

|

||||

#disable docker from managing iptables

|

||||

iptables_disabled:

|

||||

@@ -75,10 +41,9 @@ dockeretc:

|

||||

file.directory:

|

||||

- name: /etc/docker

|

||||

|

||||

# Manager daemon.json

|

||||

docker_daemon:

|

||||

file.managed:

|

||||

- source: salt://common/files/daemon.json

|

||||

- source: salt://docker/files/daemon.json.jinja

|

||||

- name: /etc/docker/daemon.json

|

||||

- template: jinja

|

||||

|

||||

@@ -109,8 +74,8 @@ dockerreserveports:

|

||||

sos_docker_net:

|

||||

docker_network.present:

|

||||

- name: sobridge

|

||||

- subnet: {{ DOCKER.range }}

|

||||

- gateway: {{ DOCKER.gateway }}

|

||||

- subnet: {{ DOCKERMERGED.range }}

|

||||

- gateway: {{ DOCKERMERGED.gateway }}

|

||||

- options:

|

||||

com.docker.network.bridge.name: 'sobridge'

|

||||

com.docker.network.driver.mtu: '1500'

|

||||

|

||||

@@ -1,44 +1,82 @@

|

||||

docker:

|

||||

gateway:

|

||||

description: Gateway for the default docker interface.

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

advanced: True

|

||||

range:

|

||||

description: Default docker IP range for containers.

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

advanced: True

|

||||

ulimits:

|

||||

description: |

|

||||

Default ulimit settings applied to all containers via the Docker daemon. Each entry specifies a resource name (e.g. nofile, memlock, core, nproc) with soft and hard limits. Individual container ulimits override these defaults. Valid resource names include: cpu, fsize, data, stack, core, rss, nproc, nofile, memlock, as, locks, sigpending, msgqueue, nice, rtprio, rttime.

|

||||

forcedType: "[]{}"

|

||||

syntax: json

|

||||

advanced: True

|

||||

helpLink: docker.html

|

||||

uiElements:

|

||||

- field: name

|

||||

label: Resource Name

|

||||

required: True

|

||||

regex: ^(cpu|fsize|data|stack|core|rss|nproc|nofile|memlock|as|locks|sigpending|msgqueue|nice|rtprio|rttime)$

|

||||

regexFailureMessage: You must enter a valid ulimit name (cpu, fsize, data, stack, core, rss, nproc, nofile, memlock, as, locks, sigpending, msgqueue, nice, rtprio, rttime).

|

||||

- field: soft

|

||||

label: Soft Limit

|

||||

forcedType: int

|

||||

- field: hard

|

||||

label: Hard Limit

|

||||

forcedType: int

|

||||

containers:

|

||||

so-dockerregistry: &dockerOptions

|

||||

final_octet:

|

||||

description: Last octet of the container IP address.

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

readonly: True

|

||||

advanced: True

|

||||

global: True

|

||||

port_bindings:

|

||||

description: List of port bindings for the container.

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

advanced: True

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

custom_bind_mounts:

|

||||

description: List of custom local volume bindings.

|

||||

advanced: True

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

extra_hosts:

|

||||

description: List of additional host entries for the container.

|

||||

advanced: True

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

extra_env:

|

||||

description: List of additional ENV entries for the container.

|

||||

advanced: True

|

||||

helpLink: docker.html

|

||||

helpLink: docker

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

ulimits:

|

||||

description: |

|

||||

Ulimit settings for the container. Each entry specifies a resource name (e.g. nofile, memlock, core, nproc) with optional soft and hard limits. Valid resource names include: cpu, fsize, data, stack, core, rss, nproc, nofile, memlock, as, locks, sigpending, msgqueue, nice, rtprio, rttime.

|

||||

advanced: True

|

||||

helpLink: docker.html

|

||||

forcedType: "[]{}"

|

||||

syntax: json

|

||||

uiElements:

|

||||

- field: name

|

||||

label: Resource Name

|

||||

required: True

|

||||

regex: ^(cpu|fsize|data|stack|core|rss|nproc|nofile|memlock|as|locks|sigpending|msgqueue|nice|rtprio|rttime)$

|

||||

regexFailureMessage: You must enter a valid ulimit name (cpu, fsize, data, stack, core, rss, nproc, nofile, memlock, as, locks, sigpending, msgqueue, nice, rtprio, rttime).

|

||||

- field: soft

|

||||

label: Soft Limit

|

||||

forcedType: int

|

||||

- field: hard

|

||||

label: Hard Limit

|

||||

forcedType: int

|

||||

so-elastic-fleet: *dockerOptions

|

||||

so-elasticsearch: *dockerOptions

|

||||

so-influxdb: *dockerOptions

|

||||

@@ -62,43 +100,6 @@ docker:

|

||||

so-idh: *dockerOptions

|

||||

so-elastic-agent: *dockerOptions

|

||||

so-telegraf: *dockerOptions

|

||||

so-steno: *dockerOptions

|

||||

so-suricata:

|

||||

final_octet:

|

||||

description: Last octet of the container IP address.

|

||||

helpLink: docker.html

|

||||

readonly: True

|

||||

advanced: True

|

||||

global: True

|

||||

port_bindings:

|

||||

description: List of port bindings for the container.

|

||||

helpLink: docker.html

|

||||

advanced: True

|

||||

multiline: True

|

||||

forcedType: "[]string"

|

||||

custom_bind_mounts:

|

||||