Mirror of https://github.com/Security-Onion-Solutions/securityonion.git (synced 2026-03-24 13:32:37 +01:00)

Compare commits (3 Commits): 2.4.120-20 ... kaffytaffy

| Author | SHA1 | Date | |
|---|---|---|---|
| | d91dd0dd3c | | |
| | a0388fd568 | | |
| | 05244cfd75 | | |

2

.github/.gitleaks.toml

vendored

@@ -536,7 +536,7 @@ secretGroup = 4

[allowlist]
description = "global allow lists"
regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''', '''.*:.*StrelkaHexDump.*''', '''.*:.*PLACEHOLDER.*''', '''ssl_.*password''']
regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''', '''.*:.*StrelkaHexDump.*''', '''.*:.*PLACEHOLDER.*''']
paths = [
'''gitleaks.toml''',
'''(.*?)(jpg|gif|doc|pdf|bin|svg|socket)$''',

11

.github/DISCUSSION_TEMPLATE/2-4.yml

vendored

@@ -11,6 +11,7 @@ body:

description: Which version of Security Onion 2.4.x are you asking about?
options:
-
- 2.4 Pre-release (Beta, Release Candidate)
- 2.4.10
- 2.4.20
- 2.4.30

@@ -21,9 +22,6 @@ body:

- 2.4.80
- 2.4.90
- 2.4.100
- 2.4.110
- 2.4.111
- 2.4.120
- Other (please provide detail below)
validations:
required: true

@@ -34,10 +32,9 @@ body:

options:
-
- Security Onion ISO image
- Cloud image (Amazon, Azure, Google)
- Network installation on Red Hat derivative like Oracle, Rocky, Alma, etc. (unsupported)
- Network installation on Ubuntu (unsupported)
- Network installation on Debian (unsupported)
- Network installation on Red Hat derivative like Oracle, Rocky, Alma, etc.
- Network installation on Ubuntu
- Network installation on Debian
- Other (please provide detail below)
validations:
required: true

1

.github/workflows/close-threads.yml

vendored

@@ -15,7 +15,6 @@ concurrency:

jobs:
close-threads:
if: github.repository_owner == 'security-onion-solutions'
runs-on: ubuntu-latest
permissions:
issues: write

2

.github/workflows/contrib.yml

vendored

@@ -18,7 +18,7 @@ jobs:

with:
path-to-signatures: 'signatures_v1.json'
path-to-document: 'https://securityonionsolutions.com/cla'
allowlist: dependabot[bot],jertel,dougburks,TOoSmOotH,defensivedepth,m0duspwnens
allowlist: dependabot[bot],jertel,dougburks,TOoSmOotH,weslambert,defensivedepth,m0duspwnens
remote-organization-name: Security-Onion-Solutions
remote-repository-name: licensing

1

.github/workflows/lock-threads.yml

vendored

@@ -15,7 +15,6 @@ concurrency:

jobs:
lock-threads:
if: github.repository_owner == 'security-onion-solutions'
runs-on: ubuntu-latest
steps:
- uses: jertel/lock-threads@main

@@ -1,17 +1,17 @@

### 2.4.120-20250212 ISO image released on 2025/02/12
### 2.4.60-20240320 ISO image released on 2024/03/20

### Download and Verify

2.4.120-20250212 ISO image:
https://download.securityonion.net/file/securityonion/securityonion-2.4.120-20250212.iso
2.4.60-20240320 ISO image:
https://download.securityonion.net/file/securityonion/securityonion-2.4.60-20240320.iso

MD5: 3FF09F50AB1C9318CF0862DE9816102D
SHA1: 197AFA5A85C5CF95D0289FCD21BED7615FB8DB5C
SHA256: A59D94B09EEB39D8C2B6D0808792EC479B13D96FA7B32C3BEEFB6709C93F6692
MD5: 178DD42D06B2F32F3870E0C27219821E
SHA1: 73EDCD50817A7F6003FE405CF1808A30D034F89D
SHA256: DD334B8D7088A7B78160C253B680D645E25984BA5CCAB5CC5C327CA72137FC06

Signature for ISO image:
https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.120-20250212.iso.sig
https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.60-20240320.iso.sig

Signing key:
https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

@@ -25,29 +25,27 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

Download the signature file for the ISO:
```
wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.120-20250212.iso.sig
wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.60-20240320.iso.sig
```

Download the ISO image:
```
wget https://download.securityonion.net/file/securityonion/securityonion-2.4.120-20250212.iso
wget https://download.securityonion.net/file/securityonion/securityonion-2.4.60-20240320.iso
```

Verify the downloaded ISO image using the signature file:
```
gpg --verify securityonion-2.4.120-20250212.iso.sig securityonion-2.4.120-20250212.iso
gpg --verify securityonion-2.4.60-20240320.iso.sig securityonion-2.4.60-20240320.iso
```

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:
```
gpg: Signature made Tue 11 Feb 2025 05:26:33 PM EST using RSA key ID FE507013
gpg: Signature made Tue 19 Mar 2024 03:17:58 PM EDT using RSA key ID FE507013
gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"
gpg: WARNING: This key is not certified with a trusted signature!
gpg: There is no indication that the signature belongs to the owner.
Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013
```

If it fails to verify, try downloading again. If it still fails to verify, try downloading from another computer or another network.

Once you've verified the ISO image, you're ready to proceed to our Installation guide:
https://docs.securityonion.net/en/2.4/installation.html
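The verification document in this diff also publishes MD5, SHA1, and SHA256 values for each ISO. As a complement to the `gpg --verify` step (which remains the check the document itself prescribes), a downloaded file could be compared against the published SHA256 along these lines. This is a minimal sketch: the function names `file_sha256` and `matches_published_hash` are hypothetical and not part of the Security Onion tooling.

```python
import hashlib
import hmac


def file_sha256(path, chunk_size=1024 * 1024):
    """Return the uppercase hex SHA256 digest of the file at `path`."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so a multi-gigabyte ISO is never held in memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest().upper()


def matches_published_hash(path, published_hex):
    """Compare a file's SHA256 with a published value, ignoring case and surrounding whitespace."""
    expected = published_hex.strip().upper()
    return hmac.compare_digest(file_sha256(path), expected)
```

A call such as `matches_published_hash("securityonion-2.4.120-20250212.iso", "A59D94B0...")` would use the full SHA256 string listed for that release; a matching hash only shows the download is intact, while the signature check ties it to the signing key.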

13

README.md

13

README.md

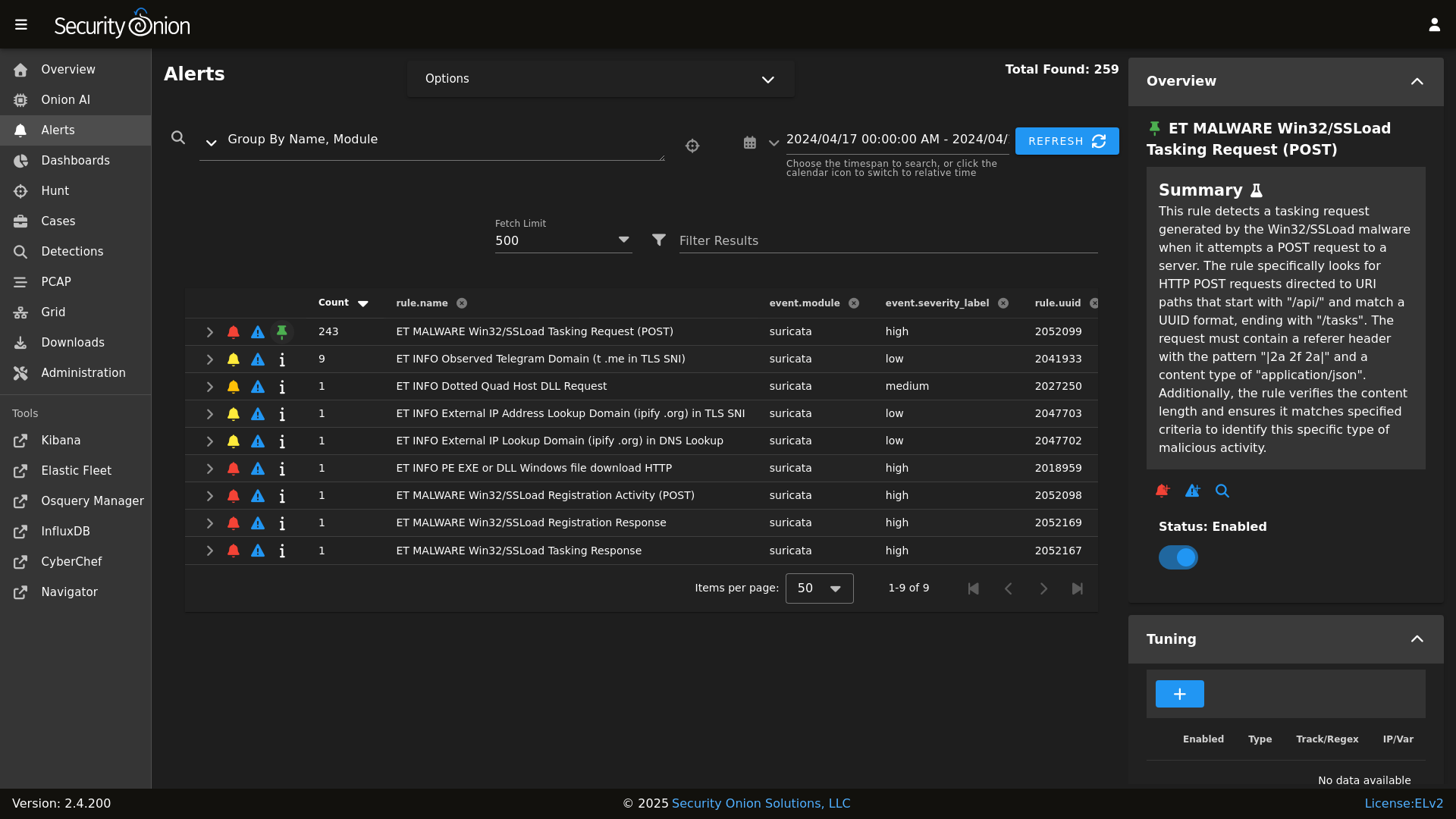

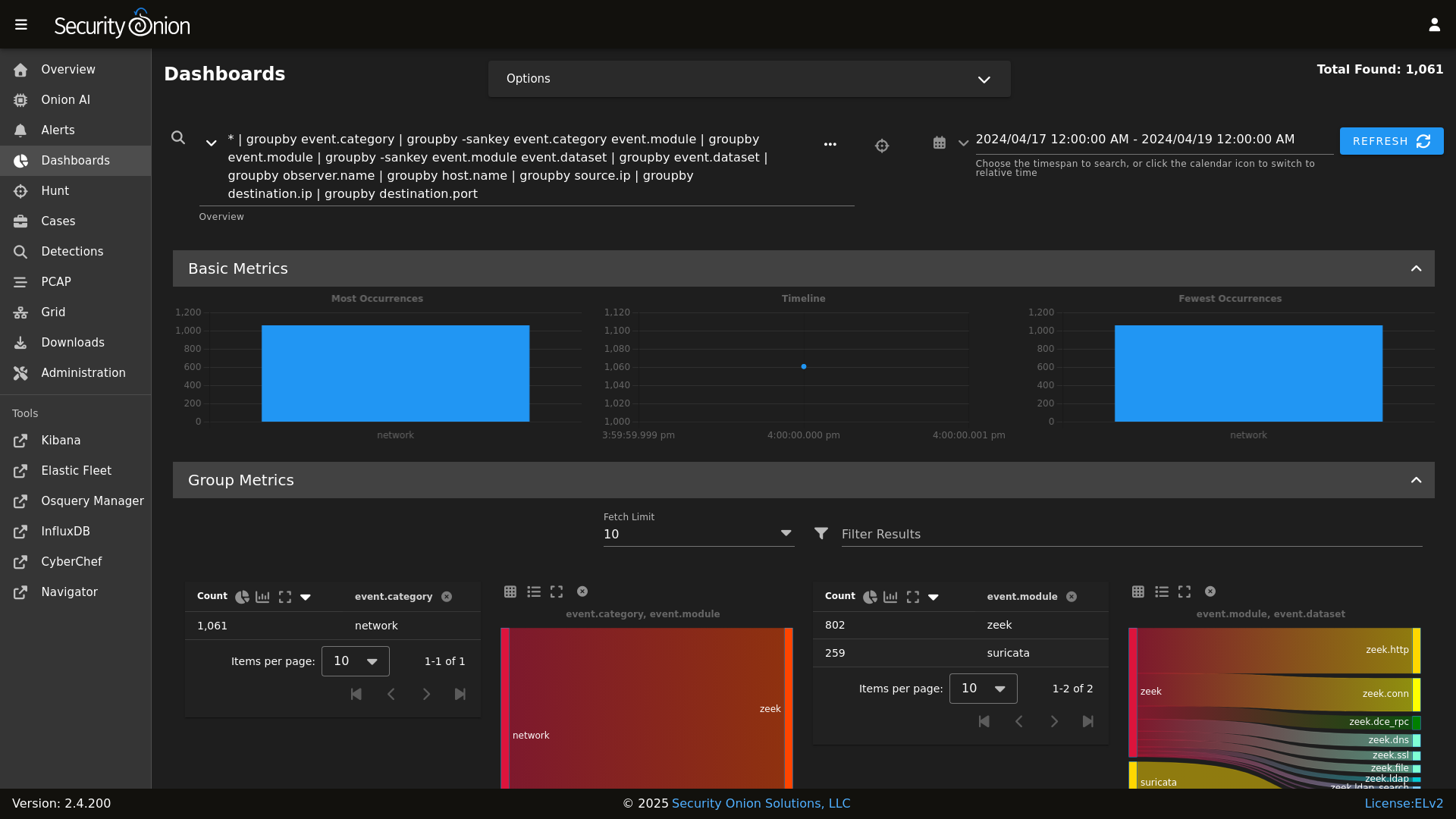

@@ -8,22 +8,19 @@ Alerts

|

||||

|

||||

|

||||

Dashboards

|

||||

|

||||

|

||||

|

||||

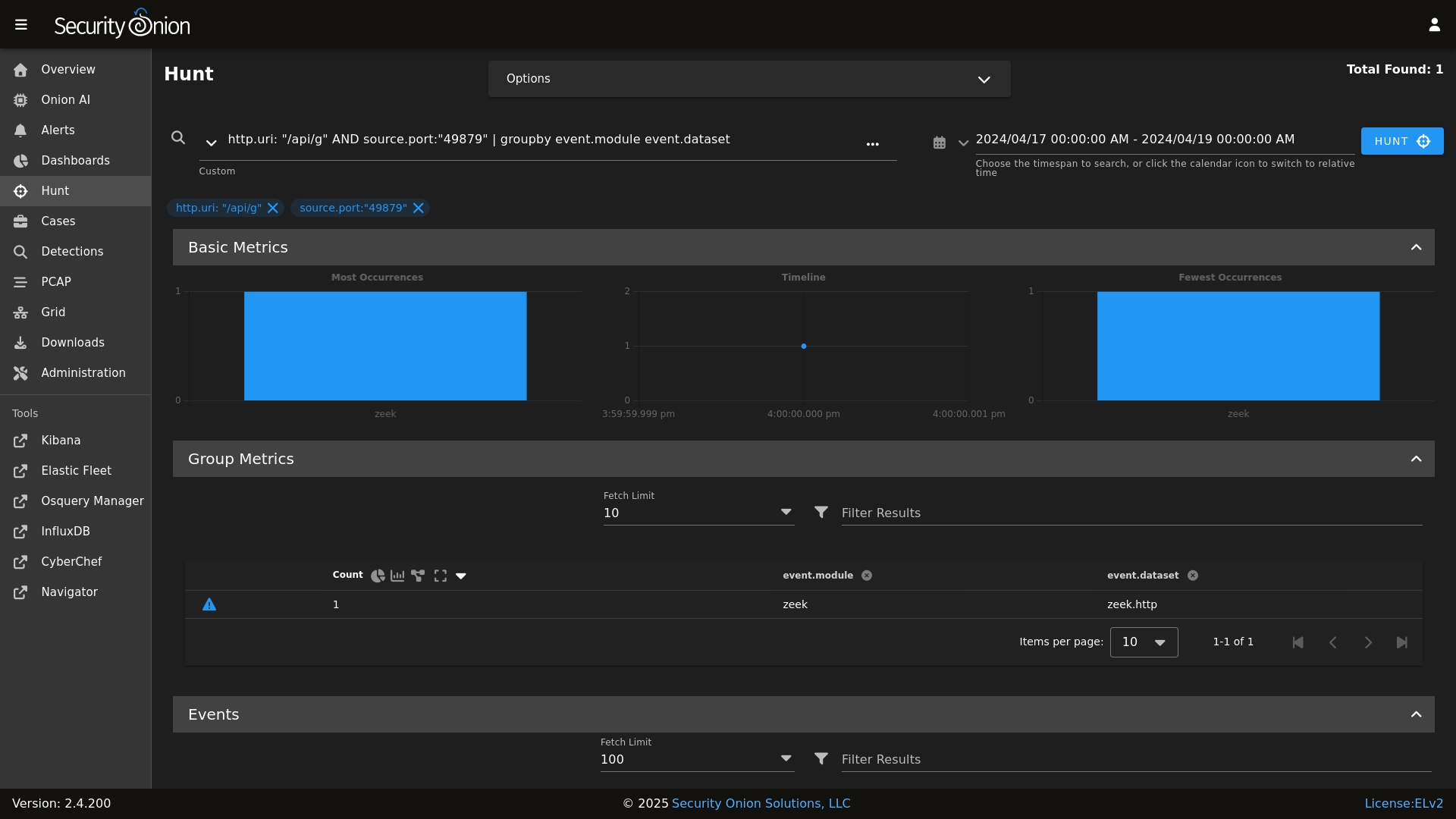

Hunt

|

||||

|

||||

|

||||

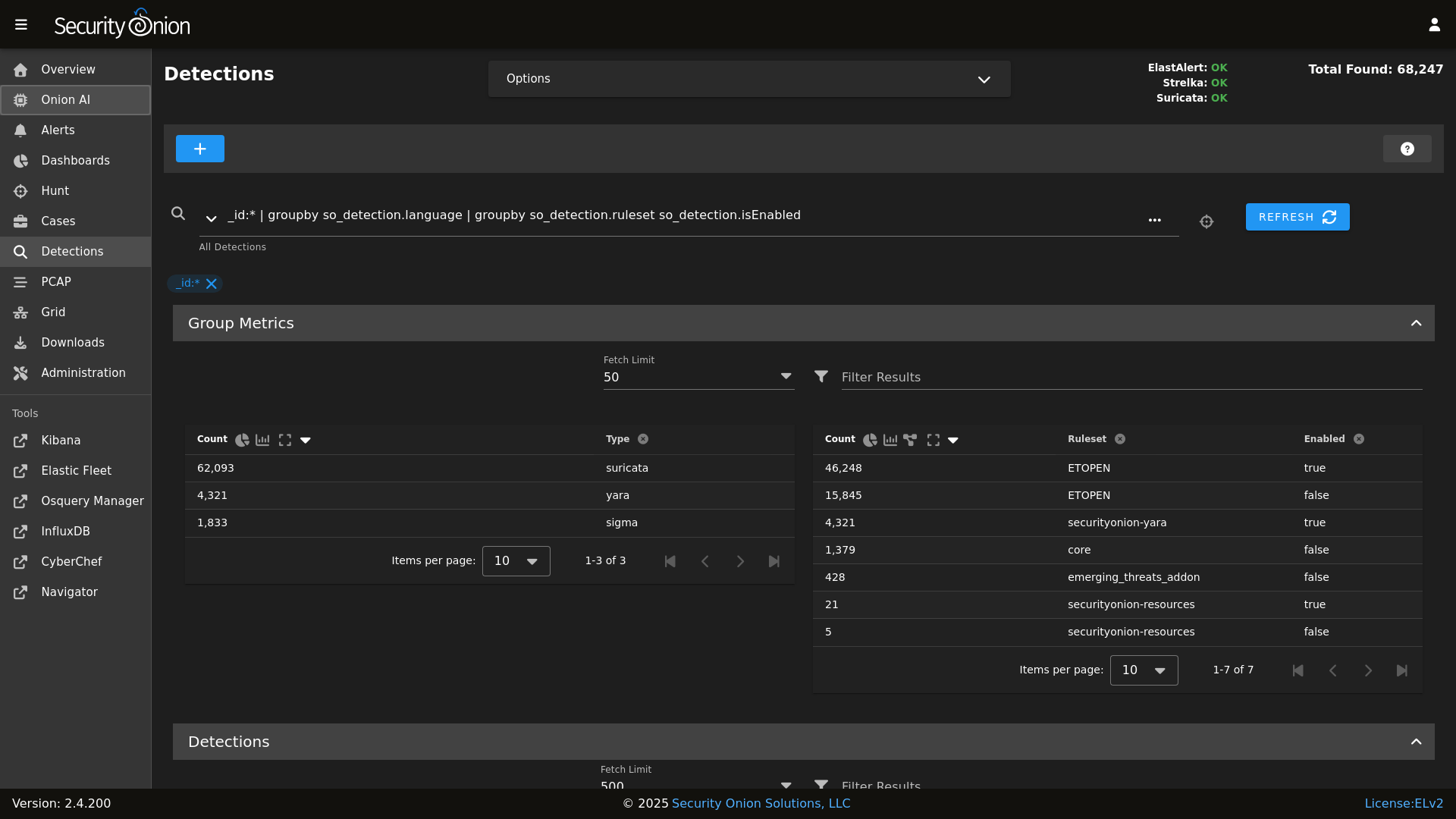

Detections

|

||||

|

||||

|

||||

|

||||

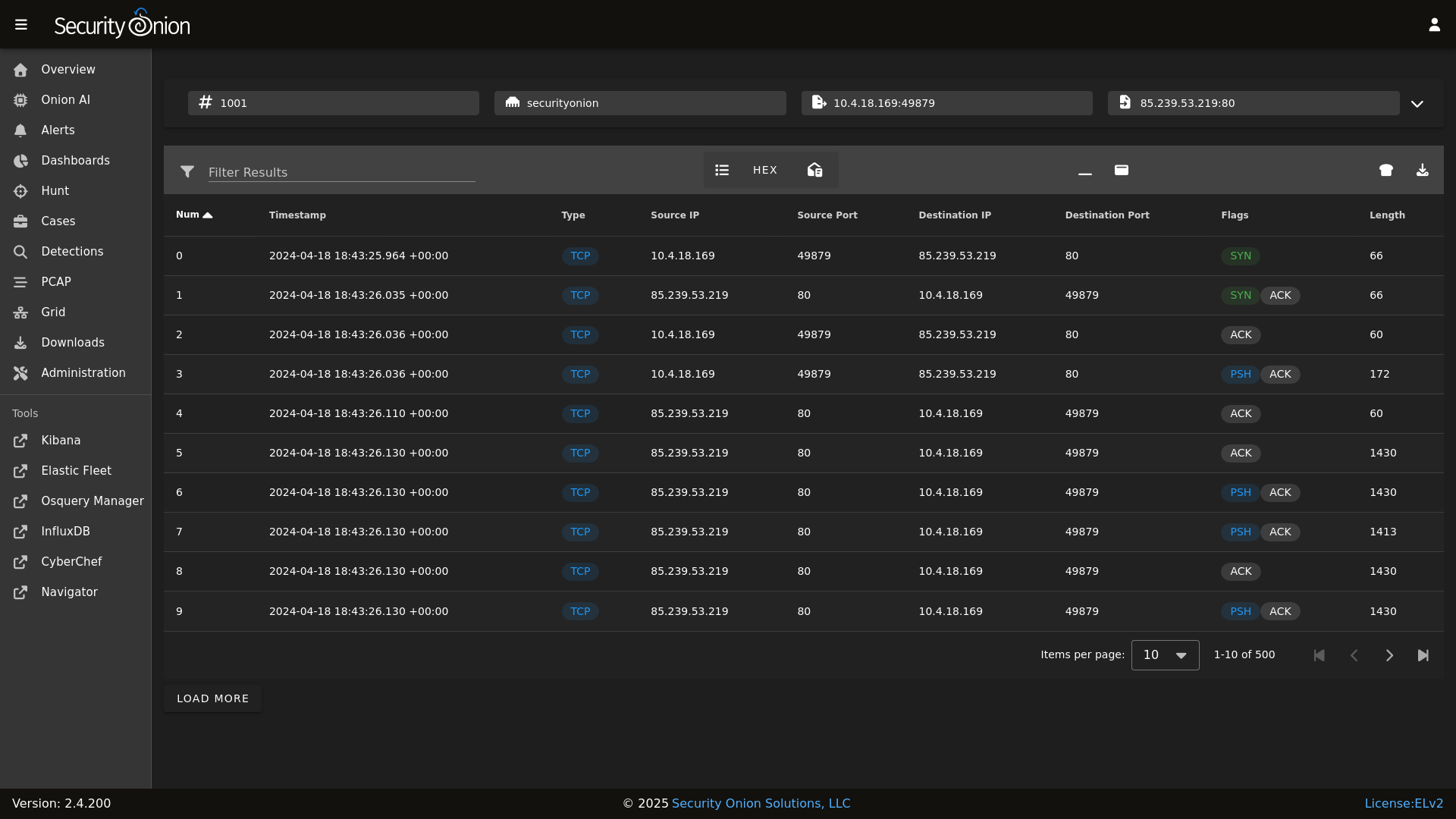

PCAP

|

||||

|

||||

|

||||

|

||||

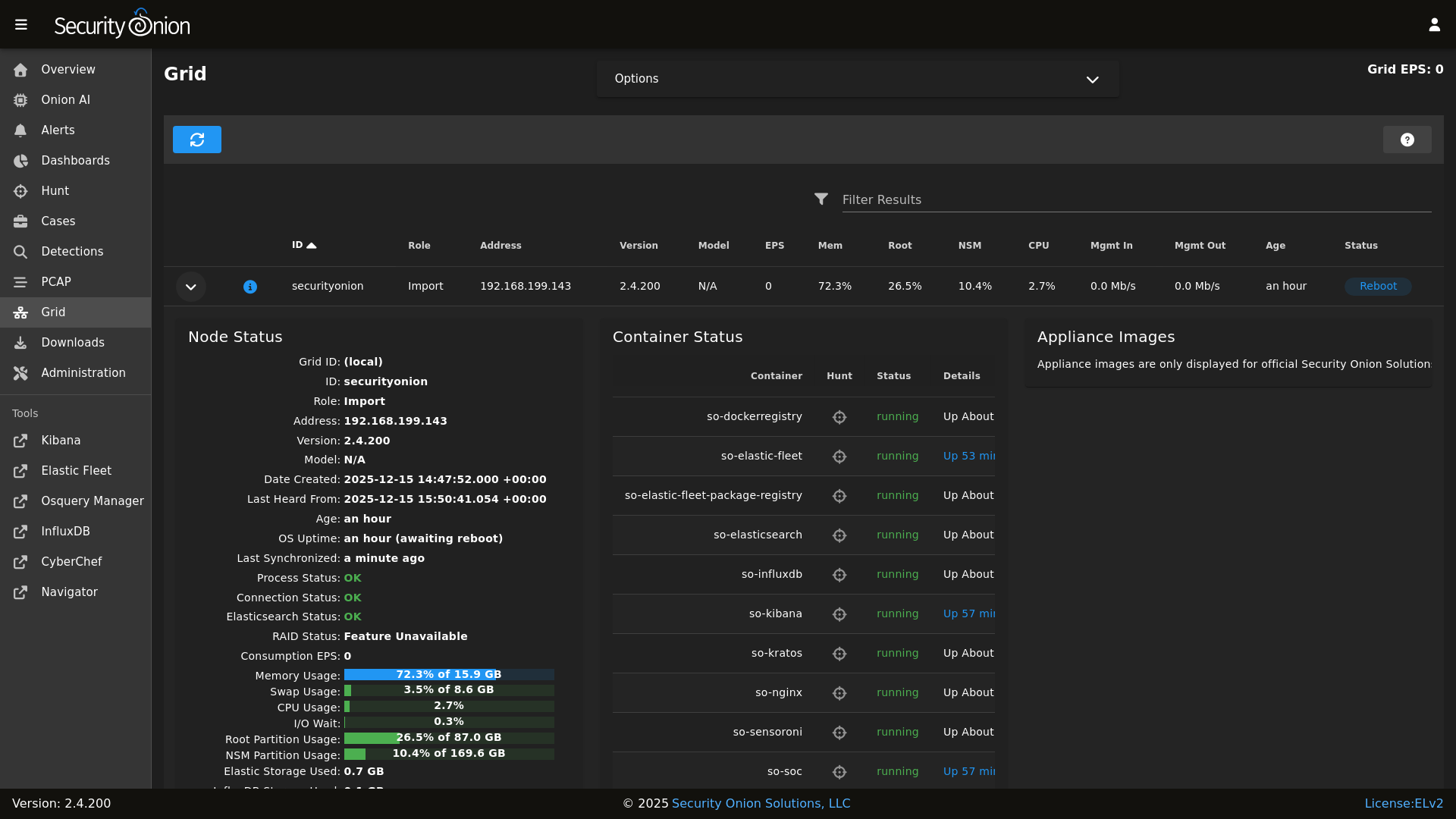

Grid

|

||||

|

||||

|

||||

|

||||

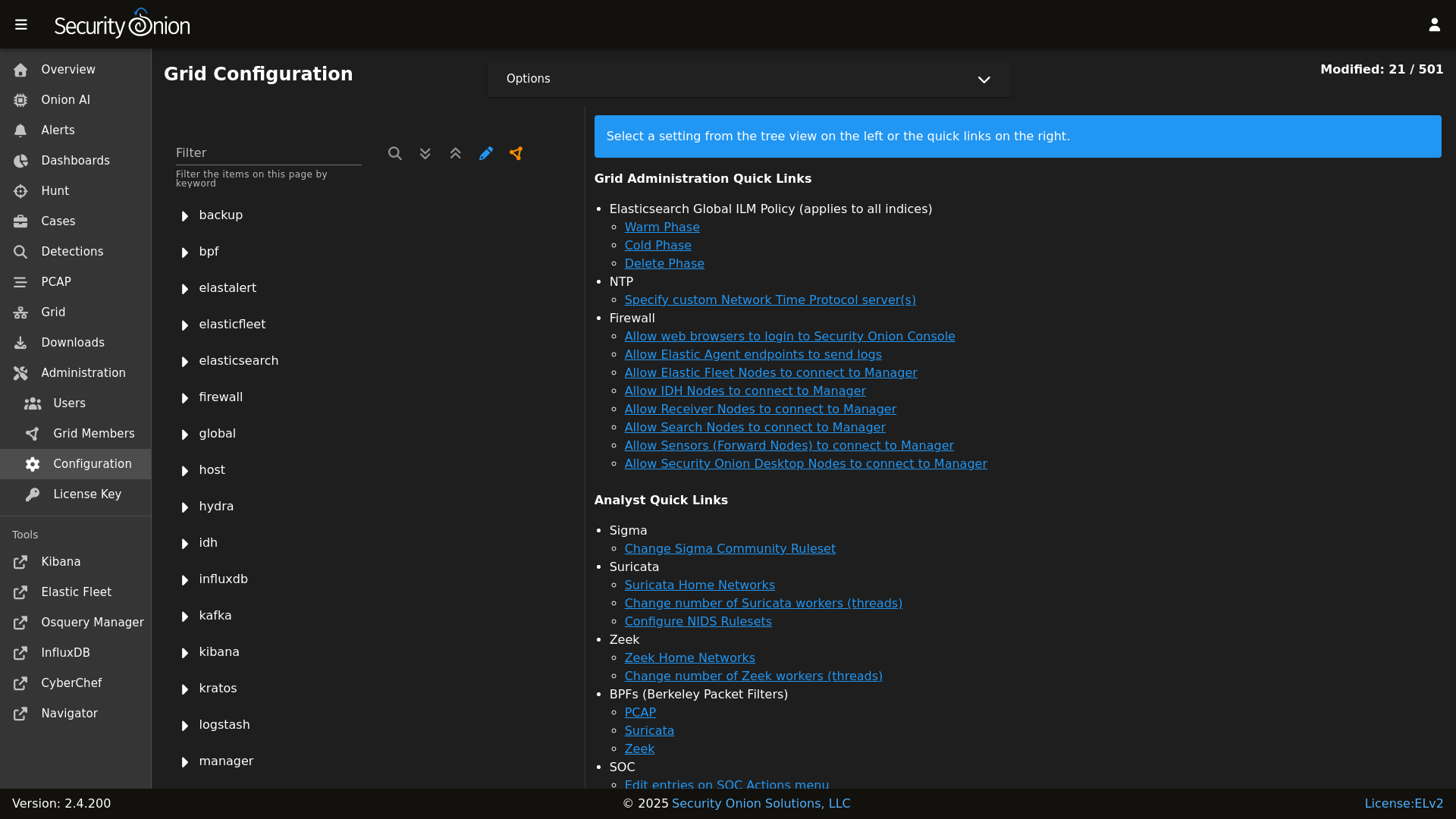

Config

|

||||

|

||||

|

||||

|

||||

### Release Notes

|

||||

|

||||

|

||||

@@ -5,11 +5,9 @@

| Version | Supported |
| ------- | ------------------ |
| 2.4.x | :white_check_mark: |
| 2.3.x | :x: |
| 2.3.x | :white_check_mark: |
| 16.04.x | :x: |

Security Onion 2.3 has reached End Of Life and is no longer supported.

Security Onion 16.04 has reached End Of Life and is no longer supported.

## Reporting a Vulnerability

@@ -1,34 +0,0 @@

{% set node_types = {} %}
{% for minionid, ip in salt.saltutil.runner(
'mine.get',
tgt='elasticsearch:enabled:true',
fun='network.ip_addrs',
tgt_type='pillar') | dictsort()
%}

# only add a node to the pillar if it returned an ip from the mine
{% if ip | length > 0%}
{% set hostname = minionid.split('_') | first %}
{% set node_type = minionid.split('_') | last %}
{% if node_type not in node_types.keys() %}
{% do node_types.update({node_type: {hostname: ip[0]}}) %}
{% else %}
{% if hostname not in node_types[node_type] %}
{% do node_types[node_type].update({hostname: ip[0]}) %}
{% else %}
{% do node_types[node_type][hostname].update(ip[0]) %}
{% endif %}
{% endif %}
{% endif %}
{% endfor %}

elasticsearch:
nodes:
{% for node_type, values in node_types.items() %}
{{node_type}}:
{% for hostname, ip in values.items() %}
{{hostname}}:
ip: {{ip}}
{% endfor %}
{% endfor %}

@@ -1,2 +1,30 @@

{% set current_kafkanodes = salt.saltutil.runner('mine.get', tgt='G@role:so-manager or G@role:so-managersearch or G@role:so-standalone or G@role:so-receiver', fun='network.ip_addrs', tgt_type='compound') %}
{% set pillar_kafkanodes = salt['pillar.get']('kafka:nodes', default={}, merge=True) %}

{% set existing_ids = [] %}
{% for node in pillar_kafkanodes.values() %}
{% if node.get('id') %}
{% do existing_ids.append(node['nodeid']) %}
{% endif %}
{% endfor %}
{% set all_possible_ids = range(1, 256)|list %}

{% set available_ids = [] %}
{% for id in all_possible_ids %}
{% if id not in existing_ids %}
{% do available_ids.append(id) %}
{% endif %}
{% endfor %}

{% set final_nodes = pillar_kafkanodes.copy() %}

{% for minionid, ip in current_kafkanodes.items() %}
{% set hostname = minionid.split('_')[0] %}
{% if hostname not in final_nodes %}
{% set new_id = available_ids.pop(0) %}
{% do final_nodes.update({hostname: {'nodeid': new_id, 'ip': ip[0]}}) %}
{% endif %}
{% endfor %}

kafka:
nodes:
nodes: {{ final_nodes|tojson }}
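The Kafka pillar template in the hunk above keeps the nodes already present in the pillar and hands each newly mined host the lowest unused ID from 1 to 255. A rough Python rendering of that idea is sketched below. The function name `assign_node_ids` is hypothetical, the sketch looks up `nodeid` consistently (the template tests `node.get('id')` before reading `node['nodeid']`), and it skips hosts with no mined IP, which the template does not do.

```python
def assign_node_ids(pillar_nodes, mined_ips, max_id=255):
    """Merge newly mined hosts into the existing Kafka node map.

    pillar_nodes: {hostname: {"nodeid": int, "ip": str}} already stored in the pillar.
    mined_ips:    {minion_id: [ip, ...]} as returned by a mine lookup.
    """
    final_nodes = dict(pillar_nodes)
    # IDs that are already taken by nodes recorded in the pillar.
    used = {node["nodeid"] for node in pillar_nodes.values() if "nodeid" in node}
    available = [i for i in range(1, max_id + 1) if i not in used]

    for minion_id, ips in mined_ips.items():
        hostname = minion_id.split("_")[0]
        # Assumption: hosts without a mined IP are skipped rather than indexed blindly.
        if hostname not in final_nodes and ips:
            final_nodes[hostname] = {"nodeid": available.pop(0), "ip": ips[0]}
    return final_nodes
```

Because existing entries are copied first and never rewritten, a host keeps its ID across runs; only hosts that appear in the mine but not in the pillar consume a new ID.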

@@ -1,15 +1,16 @@

{% set node_types = {} %}
{% set cached_grains = salt.saltutil.runner('cache.grains', tgt='*') %}
{% for minionid, ip in salt.saltutil.runner(
'mine.get',
tgt='logstash:enabled:true',
tgt='G@role:so-manager or G@role:so-managersearch or G@role:so-standalone or G@role:so-searchnode or G@role:so-heavynode or G@role:so-receiver or G@role:so-fleet ',
fun='network.ip_addrs',
tgt_type='pillar') | dictsort()
tgt_type='compound') | dictsort()
%}

# only add a node to the pillar if it returned an ip from the mine
{% if ip | length > 0%}
{% set hostname = minionid.split('_') | first %}
{% set node_type = minionid.split('_') | last %}
{% set hostname = cached_grains[minionid]['host'] %}
{% set node_type = minionid.split('_')[1] %}
{% if node_type not in node_types.keys() %}
{% do node_types.update({node_type: {hostname: ip[0]}}) %}
{% else %}
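The Logstash pillar template in the hunk above groups mined hosts by node type, taking the hostname from cached grains in one variant and from the minion ID in the other. The grouping step could be sketched in Python as follows. The function name `group_nodes` is hypothetical, and keeping the first IP seen for a hostname is an assumption, since the tail of the template is not visible in this hunk.

```python
def group_nodes(mined_ips, cached_grains):
    """Build {node_type: {hostname: first_ip}} from mine data and cached grains.

    mined_ips:     {minion_id: [ip, ...]}, where minion_id looks like "<name>_<role>".
    cached_grains: {minion_id: {"host": hostname, ...}}.
    """
    node_types = {}
    # sorted() mirrors the dictsort filter applied in the template.
    for minion_id, ips in sorted(mined_ips.items()):
        if not ips:
            # Only add a node if the mine returned an IP for it.
            continue
        hostname = cached_grains[minion_id]["host"]
        node_type = minion_id.split("_")[1]
        node_types.setdefault(node_type, {}).setdefault(hostname, ips[0])
    return node_types
```

The result has the same two-level shape that the deleted Elasticsearch and Redis templates rendered under their `nodes:` key.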

@@ -1,34 +0,0 @@

{% set node_types = {} %}
{% for minionid, ip in salt.saltutil.runner(
'mine.get',
tgt='redis:enabled:true',
fun='network.ip_addrs',
tgt_type='pillar') | dictsort()
%}

# only add a node to the pillar if it returned an ip from the mine
{% if ip | length > 0%}
{% set hostname = minionid.split('_') | first %}
{% set node_type = minionid.split('_') | last %}
{% if node_type not in node_types.keys() %}
{% do node_types.update({node_type: {hostname: ip[0]}}) %}
{% else %}
{% if hostname not in node_types[node_type] %}
{% do node_types[node_type].update({hostname: ip[0]}) %}
{% else %}
{% do node_types[node_type][hostname].update(ip[0]) %}
{% endif %}
{% endif %}
{% endif %}
{% endfor %}

redis:
nodes:
{% for node_type, values in node_types.items() %}
{{node_type}}:
{% for hostname, ip in values.items() %}
{{hostname}}:
ip: {{ip}}
{% endfor %}
{% endfor %}

@@ -16,8 +16,6 @@ base:

- sensoroni.adv_sensoroni
- telegraf.soc_telegraf
- telegraf.adv_telegraf
- versionlock.soc_versionlock
- versionlock.adv_versionlock

'* and not *_desktop':
- firewall.soc_firewall

@@ -49,14 +47,10 @@ base:

- kibana.adv_kibana
- kratos.soc_kratos
- kratos.adv_kratos
- hydra.soc_hydra
- hydra.adv_hydra
- redis.nodes
- redis.soc_redis
- redis.adv_redis
- influxdb.soc_influxdb
- influxdb.adv_influxdb
- elasticsearch.nodes
- elasticsearch.soc_elasticsearch
- elasticsearch.adv_elasticsearch
- elasticfleet.soc_elasticfleet

@@ -100,7 +94,6 @@ base:

- kibana.secrets
{% endif %}
- kratos.soc_kratos
- kratos.adv_kratos
- elasticsearch.soc_elasticsearch
- elasticsearch.adv_elasticsearch
- elasticfleet.soc_elasticfleet

@@ -118,8 +111,8 @@ base:

- kibana.adv_kibana
- strelka.soc_strelka
- strelka.adv_strelka
- hydra.soc_hydra
- hydra.adv_hydra
- kratos.soc_kratos
- kratos.adv_kratos
- redis.soc_redis
- redis.adv_redis
- influxdb.soc_influxdb

@@ -154,14 +147,10 @@ base:

- idstools.adv_idstools
- kratos.soc_kratos
- kratos.adv_kratos
- hydra.soc_hydra
- hydra.adv_hydra
- redis.nodes
- redis.soc_redis
- redis.adv_redis
- influxdb.soc_influxdb
- influxdb.adv_influxdb
- elasticsearch.nodes
- elasticsearch.soc_elasticsearch
- elasticsearch.adv_elasticsearch
- elasticfleet.soc_elasticfleet

@@ -226,22 +215,17 @@ base:

- logstash.nodes
- logstash.soc_logstash
- logstash.adv_logstash
- elasticsearch.nodes
- elasticsearch.soc_elasticsearch
- elasticsearch.adv_elasticsearch
{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}
- elasticsearch.auth
{% endif %}
- redis.nodes
- redis.soc_redis
- redis.adv_redis
- minions.{{ grains.id }}
- minions.adv_{{ grains.id }}
- stig.soc_stig
- soc.license
- kafka.nodes
- kafka.soc_kafka
- kafka.adv_kafka

'*_receiver':
- logstash.nodes

@@ -257,7 +241,6 @@ base:

- kafka.nodes
- kafka.soc_kafka
- kafka.adv_kafka
- soc.license

'*_import':
- secrets

@@ -269,7 +252,6 @@ base:

- kibana.secrets
{% endif %}
- kratos.soc_kratos
- kratos.adv_kratos
- elasticsearch.soc_elasticsearch
- elasticsearch.adv_elasticsearch
- elasticfleet.soc_elasticfleet

@@ -285,8 +267,8 @@ base:

- kibana.adv_kibana
- backup.soc_backup
- backup.adv_backup
- hydra.soc_hydra
- hydra.adv_hydra
- kratos.soc_kratos
- kratos.adv_kratos
- redis.soc_redis
- redis.adv_redis
- influxdb.soc_influxdb

@@ -318,5 +300,3 @@ base:

'*_desktop':
- minions.{{ grains.id }}
- minions.adv_{{ grains.id }}
- stig.soc_stig
- soc.license

14

pyci.sh

@@ -15,16 +15,12 @@ TARGET_DIR=${1:-.}

PATH=$PATH:/usr/local/bin

if [ ! -d .venv ]; then
python -m venv .venv
fi

source .venv/bin/activate

if ! pip install flake8 pytest pytest-cov pyyaml; then
echo "Unable to install dependencies."
if ! which pytest &> /dev/null || ! which flake8 &> /dev/null ; then
echo "Missing dependencies. Consider running the following command:"
echo " python -m pip install flake8 pytest pytest-cov"
exit 1
fi

pip install pytest pytest-cov
flake8 "$TARGET_DIR" "--config=${HOME_DIR}/pytest.ini"
python3 -m pytest "--cov-config=${HOME_DIR}/pytest.ini" "--cov=$TARGET_DIR" --doctest-modules --cov-report=term --cov-fail-under=100 "$TARGET_DIR"
python3 -m pytest "--cov-config=${HOME_DIR}/pytest.ini" "--cov=$TARGET_DIR" --doctest-modules --cov-report=term --cov-fail-under=100 "$TARGET_DIR"

@@ -24,7 +24,6 @@

'influxdb',
'soc',
'kratos',
'hydra',
'elasticfleet',
'elastic-fleet-package-registry',
'firewall',

@@ -66,10 +65,8 @@

'registry',
'manager',
'nginx',
'strelka.manager',
'soc',
'kratos',
'hydra',
'influxdb',
'telegraf',
'firewall',

@@ -94,10 +91,8 @@

'nginx',
'telegraf',
'influxdb',
'strelka.manager',
'soc',
'kratos',
'hydra',
'elasticfleet',
'elastic-fleet-package-registry',
'firewall',

@@ -117,10 +112,8 @@

'nginx',
'telegraf',
'influxdb',
'strelka.manager',
'soc',
'kratos',
'hydra',
'elastic-fleet-package-registry',
'elasticfleet',
'firewall',

@@ -140,9 +133,7 @@

'firewall',
'schedule',
'docker_clean',
'stig',
'kafka.ca',
'kafka.ssl'
'stig'
],
'so-standalone': [
'salt.master',

@@ -155,7 +146,6 @@

'influxdb',
'soc',
'kratos',
'hydra',
'elastic-fleet-package-registry',
'elasticfleet',
'firewall',

@@ -202,13 +192,12 @@

'schedule',
'docker_clean',
'kafka',
'stig'
'elasticsearch.ca'
],
'so-desktop': [
'ssl',
'docker_clean',
'telegraf',
'stig'
'telegraf'
],
}, grain='role') %}

@@ -4,5 +4,4 @@ backup:

- /etc/pki
- /etc/salt
- /nsm/kratos
- /nsm/hydra
destination: "/nsm/backup"

@@ -1,3 +1,6 @@

mine_functions:
x509.get_pem_entries: [/etc/pki/ca.crt]

x509_signing_policies:
filebeat:
- minions: '*'

@@ -14,11 +14,6 @@ net.core.wmem_default:

sysctl.present:
- value: 26214400

# Users are not a fan of console messages
kernel.printk:
sysctl.present:
- value: "3 4 1 3"

# Remove variables.txt from /tmp - This is temp
rmvariablesfile:
file.absent:

@@ -182,7 +177,6 @@ sostatus_log:

file.managed:
- name: /opt/so/log/sostatus/status.log
- mode: 644
- replace: False

# Install sostatus check cron. This is used to populate Grid.
so-status_check_cron:

@@ -1,8 +1,3 @@

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.

{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}

{% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}

@@ -11,7 +6,6 @@

{% else %}
{% set UPDATE_DIR='/tmp/sogh/securityonion' %}
{% endif %}
{% set SOVERSION = salt['file.read']('/etc/soversion').strip() %}

remove_common_soup:
file.absent:

@@ -21,8 +15,6 @@ remove_common_so-firewall:

file.absent:
- name: /opt/so/saltstack/default/salt/common/tools/sbin/so-firewall

# This section is used to put the scripts in place in the Salt file system
# in case a state run tries to overwrite what we do in the next section.
copy_so-common_common_tools_sbin:
file.copy:
- name: /opt/so/saltstack/default/salt/common/tools/sbin/so-common

@@ -51,21 +43,6 @@ copy_so-firewall_manager_tools_sbin:

- force: True
- preserve: True

copy_so-yaml_manager_tools_sbin:
file.copy:
- name: /opt/so/saltstack/default/salt/manager/tools/sbin/so-yaml.py
- source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-yaml.py
- force: True
- preserve: True

copy_so-repo-sync_manager_tools_sbin:
file.copy:
- name: /opt/so/saltstack/default/salt/manager/tools/sbin/so-repo-sync
- source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-repo-sync
- preserve: True

# This section is used to put the new script in place so that it can be called during soup.
# It is faster than calling the states that normally manage them to put them in place.
copy_so-common_sbin:
file.copy:
- name: /usr/sbin/so-common

@@ -101,24 +78,6 @@ copy_so-yaml_sbin:

- force: True
- preserve: True

copy_so-repo-sync_sbin:
file.copy:
- name: /usr/sbin/so-repo-sync
- source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-repo-sync
- force: True
- preserve: True

{# this is added in 2.4.120 to remove salt repo files pointing to saltproject.io to accomodate the move to broadcom and new bootstrap-salt script #}
{% if salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1 %}
{% set saltrepofile = '/etc/yum.repos.d/salt.repo' %}
{% if grains.os_family == 'Debian' %}
{% set saltrepofile = '/etc/apt/sources.list.d/salt.list' %}
{% endif %}
remove_saltproject_io_repo_manager:
file.absent:
- name: {{ saltrepofile }}
{% endif %}

{% else %}
fix_23_soup_sbin:
cmd.run:

@@ -5,13 +5,8 @@

# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.

. /usr/sbin/so-common

cat << EOF

so-checkin will run a full salt highstate to apply all salt states. If a highstate is already running, this request will be queued and so it may pause for a few minutes before you see any more output. For more information about so-checkin and salt, please see:
https://docs.securityonion.net/en/2.4/salt.html

EOF

salt-call state.highstate -l info queue=True
salt-call state.highstate -l info

@@ -8,6 +8,12 @@

# Elastic agent is not managed by salt. Because of this we must store this base information in a
# script that accompanies the soup system. Since so-common is one of those special soup files,
# and since this same logic is required during installation, it's included in this file.
ELASTIC_AGENT_TARBALL_VERSION="8.10.4"
ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent

DEFAULT_SALT_DIR=/opt/so/saltstack/default
DOC_BASE_URL="https://docs.securityonion.net/en/2.4"

@@ -25,11 +31,6 @@ if ! echo "$PATH" | grep -q "/usr/sbin"; then

export PATH="$PATH:/usr/sbin"
fi

# See if a proxy is set. If so use it.
if [ -f /etc/profile.d/so-proxy.sh ]; then
. /etc/profile.d/so-proxy.sh
fi

# Define a banner to separate sections
banner="========================================================================="

@@ -168,46 +169,6 @@ check_salt_minion_status() {

return $status
}

# Compare es versions and return the highest version
compare_es_versions() {
# Save the original IFS
local OLD_IFS="$IFS"

IFS=.
local i ver1=($1) ver2=($2)

# Restore the original IFS
IFS="$OLD_IFS"

# Determine the maximum length between the two version arrays
local max_len=${#ver1[@]}
if [[ ${#ver2[@]} -gt $max_len ]]; then
max_len=${#ver2[@]}
fi

# Compare each segment of the versions
for ((i=0; i<max_len; i++)); do
# If a segment in ver1 or ver2 is missing, set it to 0
if [[ -z ${ver1[i]} ]]; then
ver1[i]=0
fi
if [[ -z ${ver2[i]} ]]; then
ver2[i]=0
fi
if ((10#${ver1[i]} > 10#${ver2[i]})); then
echo "$1"
return 0
fi
if ((10#${ver1[i]} < 10#${ver2[i]})); then
echo "$2"
return 0
fi
done

echo "$1" # If versions are equal, return either
return 0
}

copy_new_files() {
# Copy new files over to the salt dir
cd $UPDATE_DIR
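The `compare_es_versions` shell function in the hunk above splits two dotted versions, pads the shorter one with zeros, and echoes whichever is higher (the first argument on a tie). The same comparison could be sketched in Python as below. The name `highest_version` is hypothetical, and the sketch assumes both inputs are non-empty strings of dot-separated decimal numbers.

```python
from itertools import zip_longest


def highest_version(first, second):
    """Return whichever dotted version string is higher; `first` wins ties."""
    # int() parses "08" as 8, matching the base-10 forcing (10#...) in the shell version.
    parts_a = [int(part) for part in first.split(".")]
    parts_b = [int(part) for part in second.split(".")]
    # Missing trailing segments are treated as 0, so "8.10" equals "8.10.0".
    for a, b in zip_longest(parts_a, parts_b, fillvalue=0):
        if a > b:
            return first
        if a < b:
            return second
    return first
```

Comparing segment by segment as integers is what makes `8.10.4` rank above `8.9.0`; a plain string comparison would order them the other way.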

@@ -218,21 +179,6 @@ copy_new_files() {

cd /tmp
}

create_local_directories() {
echo "Creating local pillar and salt directories if needed"
PILLARSALTDIR=$1
local_salt_dir="/opt/so/saltstack/local"
for i in "pillar" "salt"; do
for d in $(find $PILLARSALTDIR/$i -type d); do
suffixdir=${d//$PILLARSALTDIR/}
if [ ! -d "$local_salt_dir/$suffixdir" ]; then
mkdir -pv $local_salt_dir$suffixdir
fi
done
chown -R socore:socore $local_salt_dir/$i
done
}

disable_fastestmirror() {
sed -i 's/enabled=1/enabled=0/' /etc/yum/pluginconf.d/fastestmirror.conf
}

@@ -297,6 +243,11 @@ fail() {

exit 1
}

get_random_value() {
length=${1:-20}
head -c 5000 /dev/urandom | tr -dc 'a-zA-Z0-9' | fold -w $length | head -n 1
}

get_agent_count() {
if [ -f /opt/so/log/agents/agentstatus.log ]; then
AGENTCOUNT=$(cat /opt/so/log/agents/agentstatus.log | grep -wF active | awk '{print $2}')
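The `get_random_value` helper in the hunk above reads bytes from `/dev/urandom`, keeps only letters and digits, and returns the first `length` characters (20 by default). A comparable generator in Python might look like the sketch below. It is an illustration using the standard `secrets` module, not a port that the Security Onion scripts use.

```python
import secrets
import string

# Same character set as the tr -dc 'a-zA-Z0-9' filter in the shell helper.
ALPHABET = string.ascii_letters + string.digits


def get_random_value(length=20):
    """Return a random alphanumeric string of `length` characters."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))
```

Unlike the shell pipeline, which depends on 5000 random bytes containing enough alphanumeric characters, this version always returns exactly `length` characters.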

@@ -305,27 +256,6 @@ get_agent_count() {

fi
}

get_elastic_agent_vars() {
local path="${1:-/opt/so/saltstack/default}"
local defaultsfile="${path}/salt/elasticsearch/defaults.yaml"

if [ -f "$defaultsfile" ]; then
ELASTIC_AGENT_TARBALL_VERSION=$(egrep " +version: " $defaultsfile | awk -F: '{print $2}' | tr -d '[:space:]')
ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent
else
fail "Could not find salt/elasticsearch/defaults.yaml"
fi
}

get_random_value() {
length=${1:-20}
head -c 5000 /dev/urandom | tr -dc 'a-zA-Z0-9' | fold -w $length | head -n 1
}

gpg_rpm_import() {
if [[ $is_oracle ]]; then
if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then
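In the hunk above, `get_elastic_agent_vars` derives the agent version with an `egrep | awk | tr` pipeline over `defaults.yaml`: keep lines containing an indented `version: ` key, take the text after the first colon, and strip all whitespace. The same text processing could be sketched in Python as follows. The function name `extract_version` is hypothetical, and the sample YAML in the usage note is invented for illustration rather than taken from the real defaults file.

```python
import re


def extract_version(defaults_text):
    """Mimic: egrep " +version: " FILE | awk -F: '{print $2}' | tr -d '[:space:]'."""
    fields = []
    for line in defaults_text.splitlines():
        # egrep " +version: " matches one or more spaces followed by "version: ".
        if re.search(r" +version: ", line):
            # awk -F: '{print $2}' prints the second colon-separated field.
            fields.append(line.split(":")[1])
    # tr -d '[:space:]' removes every whitespace character, newlines included,
    # so multiple matching lines would be concatenated.
    return "".join("".join(field.split()) for field in fields)
```

For example, `extract_version("elasticsearch:\n  version: 8.14.3\n")` yields `8.14.3`. The pipeline relies on exactly one indented `version:` line being present; a real YAML parser would be more robust if that ever changes.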

@@ -677,8 +607,6 @@ has_uppercase() {

}

update_elastic_agent() {
local path="${1:-/opt/so/saltstack/default}"
get_elastic_agent_vars "$path"
echo "Checking if Elastic Agent update is necessary..."
download_and_verify "$ELASTIC_AGENT_URL" "$ELASTIC_AGENT_MD5_URL" "$ELASTIC_AGENT_FILE" "$ELASTIC_AGENT_MD5" "$ELASTIC_AGENT_EXPANSION_DIR"
}

@@ -29,7 +29,6 @@ container_list() {

"so-influxdb"
"so-kibana"
"so-kratos"
"so-hydra"
"so-nginx"
"so-pcaptools"
"so-soc"

@@ -54,7 +53,6 @@ container_list() {

"so-kafka"
"so-kibana"
"so-kratos"
"so-hydra"
"so-logstash"
"so-nginx"
"so-pcaptools"

@@ -114,10 +112,6 @@ update_docker_containers() {

container_list
fi

# all the images using ELASTICSEARCHDEFAULTS.elasticsearch.version
# does not include so-elastic-fleet since that container uses so-elastic-agent image
local IMAGES_USING_ES_VERSION=("so-elasticsearch")

rm -rf $SIGNPATH >> "$LOG_FILE" 2>&1
mkdir -p $SIGNPATH >> "$LOG_FILE" 2>&1

@@ -145,36 +139,15 @@ update_docker_containers() {

$PROGRESS_CALLBACK $i
fi

if [[ " ${IMAGES_USING_ES_VERSION[*]} " =~ [[:space:]]${i}[[:space:]] ]]; then
# this is an es container so use version defined in elasticsearch defaults.yaml
local UPDATE_DIR='/tmp/sogh/securityonion'
if [ ! -d "$UPDATE_DIR" ]; then
UPDATE_DIR=/securityonion
fi
local v1=0
local v2=0
if [[ -f "$UPDATE_DIR/salt/elasticsearch/defaults.yaml" ]]; then
v1=$(egrep " +version: " "$UPDATE_DIR/salt/elasticsearch/defaults.yaml" | awk -F: '{print $2}' | tr -d '[:space:]')
fi
if [[ -f "$DEFAULT_SALT_DIR/salt/elasticsearch/defaults.yaml" ]]; then
v2=$(egrep " +version: " "$DEFAULT_SALT_DIR/salt/elasticsearch/defaults.yaml" | awk -F: '{print $2}' | tr -d '[:space:]')
fi
local highest_es_version=$(compare_es_versions "$v1" "$v2")
local image=$i:$highest_es_version$IMAGE_TAG_SUFFIX
local sig_url=https://sigs.securityonion.net/es-$highest_es_version/$image.sig
else
# this is not an es container so use the so version for the version
local image=$i:$VERSION$IMAGE_TAG_SUFFIX
local sig_url=https://sigs.securityonion.net/$VERSION/$image.sig
fi
# Pull down the trusted docker image
local image=$i:$VERSION$IMAGE_TAG_SUFFIX
run_check_net_err \
"docker pull $CONTAINER_REGISTRY/$IMAGEREPO/$image" \
"Could not pull $image, please ensure connectivity to $CONTAINER_REGISTRY" >> "$LOG_FILE" 2>&1

# Get signature
run_check_net_err \
"curl --retry 5 --retry-delay 60 -A '$CURLTYPE/$CURRENTVERSION/$OS/$(uname -r)' $sig_url --output $SIGNPATH/$image.sig" \
"curl --retry 5 --retry-delay 60 -A '$CURLTYPE/$CURRENTVERSION/$OS/$(uname -r)' https://sigs.securityonion.net/$VERSION/$i:$VERSION$IMAGE_TAG_SUFFIX.sig --output $SIGNPATH/$image.sig" \
"Could not pull signature file for $image, please ensure connectivity to https://sigs.securityonion.net " \
noretry >> "$LOG_FILE" 2>&1
# Dump our hash values

@@ -95,8 +95,6 @@ if [[ $EXCLUDE_STARTUP_ERRORS == 'Y' ]]; then
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|shutdown process" # server not yet ready (logstash waiting on elastic)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|contain valid certificates" # server not yet ready (logstash waiting on elastic)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|failedaction" # server not yet ready (logstash waiting on elastic)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|block in start_workers" # server not yet ready (logstash waiting on elastic)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|block in buffer_initialize" # server not yet ready (logstash waiting on elastic)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|no route to host" # server not yet ready
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|not running" # server not yet ready
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|unavailable" # server not yet ready
@@ -125,7 +123,6 @@ if [[ $EXCLUDE_STARTUP_ERRORS == 'Y' ]]; then
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|tls handshake error" # Docker registry container when new node comes onlines
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Unable to get license information" # Logstash trying to contact ES before it's ready
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|process already finished" # Telegraf script finished just as the auto kill timeout kicked in
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|No shard available" # Typical error when making a query before ES has finished loading all indices
fi

if [[ $EXCLUDE_FALSE_POSITIVE_ERRORS == 'Y' ]]; then
@@ -150,8 +147,6 @@ if [[ $EXCLUDE_FALSE_POSITIVE_ERRORS == 'Y' ]]; then
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|status 200" # false positive (request successful, contained error string in content)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|app_layer.error" # false positive (suricata 7) in stats.log e.g. app_layer.error.imap.parser | Total | 0
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|is not an ip string literal" # false positive (Open Canary logging out blank IP addresses)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|syncing rule" # false positive (rule sync log line includes rule name which can contain 'error')
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|request_unauthorized" # false positive (login failures to Hydra result in an 'error' log)
fi

if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then
@@ -175,7 +170,6 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|cannot join on an empty table" # InfluxDB flux query, import nodes
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|exhausting result iterator" # InfluxDB flux query mismatched table results (temporary data issue)
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|failed to finish run" # InfluxDB rare error, self-recoverable
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Unable to gather disk name" # InfluxDB known error, can't read disks because the container doesn't have them mounted
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|iteration"
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|communication packets"
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|use of closed"
@@ -207,12 +201,6 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Unknown column" # Elastalert errors from running EQL queries
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|parsing_exception" # Elastalert EQL parsing issue. Temp.
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|context deadline exceeded"
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Error running query:" # Specific issues with detection rules
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|detect-parse" # Suricata encountering a malformed rule
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|integrity check failed" # Detections: Exclude false positive due to automated testing
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|syncErrors" # Detections: Not an actual error
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Initialized license manager" # SOC log: before fields.status was changed to fields.licenseStatus
EXCLUDED_ERRORS="$EXCLUDED_ERRORS|from NIC checksum offloading" # zeek reporter.log
fi

RESULT=0
@@ -248,11 +236,6 @@ exclude_log "playbook.log" # Playbook is removed as of 2.4.70, logs may still be
exclude_log "mysqld.log" # MySQL is removed as of 2.4.70, logs may still be on disk
exclude_log "soctopus.log" # Soctopus is removed as of 2.4.70, logs may still be on disk
exclude_log "agentstatus.log" # ignore this log since it tracks agents in error state
exclude_log "detections_runtime-status_yara.log" # temporarily ignore this log until Detections is more stable
exclude_log "/nsm/kafka/data/" # ignore Kafka data directory from log check.

# Include Zeek reporter.log to detect errors after running known good pcap(s) through sensor
echo "/nsm/zeek/spool/logger/reporter.log" >> /tmp/log_check_files

for log_file in $(cat /tmp/log_check_files); do
status "Checking log file $log_file"

@@ -1,98 +0,0 @@
#!/bin/bash
#
# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0."

set -e
# This script is intended to be used in the case the ISO install did not properly setup TPM decrypt for LUKS partitions at boot.
if [ -z $NOROOT ]; then
# Check for prerequisites
if [ "$(id -u)" -ne 0 ]; then
echo "This script must be run using sudo!"
exit 1
fi
fi
ENROLL_TPM=N

while [[ $# -gt 0 ]]; do
case $1 in
--enroll-tpm)
ENROLL_TPM=Y
;;
*)
echo "Usage: $0 [options]"
echo ""
echo "where options are:"
echo " --enroll-tpm for when TPM enrollment was not selected during ISO install."
echo ""
exit 1
;;
esac
shift
done

check_for_tpm() {
echo -n "Checking for TPM: "
if [ -d /sys/class/tpm/tpm0 ]; then
echo -e "tpm0 found."
TPM="yes"
# Check if TPM is using sha1 or sha256
if [ -d /sys/class/tpm/tpm0/pcr-sha1 ]; then
echo -e "TPM is using sha1.\n"
TPM_PCR="sha1"
elif [ -d /sys/class/tpm/tpm0/pcr-sha256 ]; then
echo -e "TPM is using sha256.\n"
TPM_PCR="sha256"
fi
else
echo -e "No TPM found.\n"
exit 1
fi
}

check_for_luks_partitions() {
echo "Checking for LUKS partitions"
for part in $(lsblk -o NAME,FSTYPE -ln | grep crypto_LUKS | awk '{print $1}'); do
echo "Found LUKS partition: $part"
LUKS_PARTITIONS+=("$part")
done
if [ ${#LUKS_PARTITIONS[@]} -eq 0 ]; then
echo -e "No LUKS partitions found.\n"
exit 1
fi
echo ""
}

enroll_tpm_in_luks() {
read -s -p "Enter the LUKS passphrase used during ISO install: " LUKS_PASSPHRASE
echo ""
for part in "${LUKS_PARTITIONS[@]}"; do
echo "Enrolling TPM for LUKS device: /dev/$part"
if [ "$TPM_PCR" == "sha1" ]; then
clevis luks bind -d /dev/$part tpm2 '{"pcr_bank":"sha1","pcr_ids":"7"}' <<< $LUKS_PASSPHRASE
elif [ "$TPM_PCR" == "sha256" ]; then
clevis luks bind -d /dev/$part tpm2 '{"pcr_bank":"sha256","pcr_ids":"7"}' <<< $LUKS_PASSPHRASE
fi
done
}

regenerate_tpm_enrollment_token() {
for part in "${LUKS_PARTITIONS[@]}"; do
clevis luks regen -d /dev/$part -s 1 -q
done
}

check_for_tpm
check_for_luks_partitions

if [[ $ENROLL_TPM == "Y" ]]; then
enroll_tpm_in_luks
else
regenerate_tpm_enrollment_token
fi

echo "Running dracut"
dracut -fv
echo -e "\nTPM configuration complete. Reboot the system to verify the TPM is correctly decrypting the LUKS partition(s) at boot.\n"
@@ -10,7 +10,7 @@
. /usr/sbin/so-common
. /usr/sbin/so-image-common

REPLAYIFACE=${REPLAYIFACE:-"{{salt['pillar.get']('sensor:interface', '')}}"}
REPLAYIFACE=${REPLAYIFACE:-$(lookup_pillar interface sensor)}
REPLAYSPEED=${REPLAYSPEED:-10}

mkdir -p /opt/so/samples
@@ -89,7 +89,6 @@ function suricata() {
-v ${LOG_PATH}:/var/log/suricata/:rw \
-v ${NSM_PATH}/:/nsm/:rw \
-v "$PCAP:/input.pcap:ro" \
-v /dev/null:/nsm/suripcap:rw \
-v /opt/so/conf/suricata/bpf:/etc/suricata/bpf:ro \
{{ MANAGER }}:5000/{{ IMAGEREPO }}/so-suricata:{{ VERSION }} \
--runmode single -k none -r /input.pcap > $LOG_PATH/console.log 2>&1
@@ -248,7 +247,7 @@ fi
START_OLDEST_SLASH=$(echo $START_OLDEST | sed -e 's/-/%2F/g')
END_NEWEST_SLASH=$(echo $END_NEWEST | sed -e 's/-/%2F/g')
if [[ $VALID_PCAPS_COUNT -gt 0 ]] || [[ $SKIPPED_PCAPS_COUNT -gt 0 ]]; then
URL="https://{{ URLBASE }}/#/dashboards?q=$HASH_FILTERS%20%7C%20groupby%20event.module*%20%7C%20groupby%20-sankey%20event.module*%20event.dataset%20%7C%20groupby%20event.dataset%20%7C%20groupby%20source.ip%20%7C%20groupby%20destination.ip%20%7C%20groupby%20destination.port%20%7C%20groupby%20network.protocol%20%7C%20groupby%20rule.name%20rule.category%20event.severity_label%20%7C%20groupby%20dns.query.name%20%7C%20groupby%20file.mime_type%20%7C%20groupby%20http.virtual_host%20http.uri%20%7C%20groupby%20notice.note%20notice.message%20notice.sub_message%20%7C%20groupby%20ssl.server_name%20%7C%20groupby%20source_geo.organization_name%20source.geo.country_name%20%7C%20groupby%20destination_geo.organization_name%20destination.geo.country_name&t=${START_OLDEST_SLASH}%2000%3A00%3A00%20AM%20-%20${END_NEWEST_SLASH}%2000%3A00%3A00%20AM&z=UTC"
URL="https://{{ URLBASE }}/#/dashboards?q=$HASH_FILTERS%20%7C%20groupby%20-sankey%20event.dataset%20event.category%2a%20%7C%20groupby%20-pie%20event.category%20%7C%20groupby%20-bar%20event.module%20%7C%20groupby%20event.dataset%20%7C%20groupby%20event.module%20%7C%20groupby%20event.category%20%7C%20groupby%20observer.name%20%7C%20groupby%20source.ip%20%7C%20groupby%20destination.ip%20%7C%20groupby%20destination.port&t=${START_OLDEST_SLASH}%2000%3A00%3A00%20AM%20-%20${END_NEWEST_SLASH}%2000%3A00%3A00%20AM&z=UTC"

status "Import complete!"
status

@@ -9,9 +9,6 @@

. /usr/sbin/so-common

software_raid=("SOSMN" "SOSMN-DE02" "SOSSNNV" "SOSSNNV-DE02" "SOS10k-DE02" "SOS10KNV" "SOS10KNV-DE02" "SOS10KNV-DE02" "SOS2000-DE02" "SOS-GOFAST-LT-DE02" "SOS-GOFAST-MD-DE02" "SOS-GOFAST-HV-DE02")
hardware_raid=("SOS1000" "SOS1000F" "SOSSN7200" "SOS5000" "SOS4000")

{%- if salt['grains.get']('sosmodel', '') %}
{%- set model = salt['grains.get']('sosmodel') %}
model={{ model }}
@@ -19,42 +16,33 @@ model={{ model }}
if [[ $model =~ ^(SO2AMI01|SO2AZI01|SO2GCI01)$ ]]; then
exit 0
fi

for i in "${software_raid[@]}"; do
if [[ "$model" == $i ]]; then
is_softwareraid=true
is_hwraid=false
break
fi
done

for i in "${hardware_raid[@]}"; do
if [[ "$model" == $i ]]; then
is_softwareraid=false
is_hwraid=true
break
fi
done

{%- else %}
echo "This is not an appliance"
exit 0
{%- endif %}
if [[ $model =~ ^(SOS10K|SOS500|SOS1000|SOS1000F|SOS4000|SOSSN7200|SOSSNNV|SOSMN)$ ]]; then
is_bossraid=true
fi
if [[ $model =~ ^(SOSSNNV|SOSMN)$ ]]; then
is_swraid=true
fi
if [[ $model =~ ^(SOS10K|SOS500|SOS1000|SOS1000F|SOS4000|SOSSN7200)$ ]]; then
is_hwraid=true
fi

check_nsm_raid() {
PERCCLI=$(/opt/raidtools/perccli/perccli64 /c0/v0 show|grep RAID|grep Optl)
MEGACTL=$(/opt/raidtools/megasasctl |grep optimal)
if [[ "$model" == "SOS500" || "$model" == "SOS500-DE02" ]]; then
#This doesn't have raid
HWRAID=0
else

if [[ $APPLIANCE == '1' ]]; then
if [[ -n $PERCCLI ]]; then
HWRAID=0
elif [[ -n $MEGACTL ]]; then
HWRAID=0
else
HWRAID=1
fi
fi

fi

}
@@ -62,27 +50,17 @@ check_nsm_raid() {
check_boss_raid() {
MVCLI=$(/usr/local/bin/mvcli info -o vd |grep status |grep functional)
MVTEST=$(/usr/local/bin/mvcli info -o vd | grep "No adapter")
BOSSNVMECLI=$(/usr/local/bin/mnv_cli info -o vd -i 0 | grep Functional)

# Is this NVMe Boss Raid?
if [[ "$model" =~ "-DE02" ]]; then
if [[ -n $BOSSNVMECLI ]]; then
# Check to see if this is a SM based system
if [[ -z $MVTEST ]]; then
if [[ -n $MVCLI ]]; then
BOSSRAID=0
else
BOSSRAID=1
fi
else
# Check to see if this is a SM based system
if [[ -z $MVTEST ]]; then
if [[ -n $MVCLI ]]; then
BOSSRAID=0
else
BOSSRAID=1
fi
else
# This doesn't have boss raid so lets make it 0
BOSSRAID=0
fi
# This doesn't have boss raid so lets make it 0
BOSSRAID=0
fi
}

@@ -101,13 +79,14 @@ SWRAID=0
BOSSRAID=0
HWRAID=0

if [[ "$is_hwraid" == "true" ]]; then
if [[ $is_hwraid ]]; then
check_nsm_raid
check_boss_raid
fi
if [[ "$is_softwareraid" == "true" ]]; then
if [[ $is_bossraid ]]; then
check_boss_raid
fi
if [[ $is_swraid ]]; then
check_software_raid
check_boss_raid
fi

sum=$(($SWRAID + $BOSSRAID + $HWRAID))

@@ -51,14 +51,6 @@ docker:
custom_bind_mounts: []
extra_hosts: []
extra_env: []
'so-hydra':
final_octet: 30
port_bindings:
- 0.0.0.0:4444:4444
- 0.0.0.0:4445:4445
custom_bind_mounts: []
extra_hosts: []
extra_env: []
'so-logstash':
final_octet: 29
port_bindings:
@@ -82,7 +74,6 @@ docker:
- 443:443
- 8443:8443
- 7788:7788
- 7789:7789
custom_bind_mounts: []
extra_hosts: []
extra_env: []
@@ -189,8 +180,6 @@ docker:
custom_bind_mounts: []
extra_hosts: []
extra_env: []
ulimits:
- memlock=524288000
'so-zeek':
final_octet: 99
custom_bind_mounts: []
@@ -201,7 +190,6 @@ docker:
port_bindings:
- 0.0.0.0:9092:9092
- 0.0.0.0:9093:9093
- 0.0.0.0:8778:8778
custom_bind_mounts: []
extra_hosts: []
extra_env: []

@@ -20,41 +20,41 @@ dockergroup:
dockerheldpackages:
pkg.installed:
- pkgs:
- containerd.io: 1.7.21-1
- docker-ce: 5:27.2.0-1~debian.12~bookworm
- docker-ce-cli: 5:27.2.0-1~debian.12~bookworm
- docker-ce-rootless-extras: 5:27.2.0-1~debian.12~bookworm
- containerd.io: 1.6.21-1
- docker-ce: 5:24.0.3-1~debian.12~bookworm
- docker-ce-cli: 5:24.0.3-1~debian.12~bookworm
- docker-ce-rootless-extras: 5:24.0.3-1~debian.12~bookworm
- hold: True
- update_holds: True
{% elif grains.oscodename == 'jammy' %}
dockerheldpackages:
pkg.installed:
- pkgs:
- containerd.io: 1.7.21-1
- docker-ce: 5:27.2.0-1~ubuntu.22.04~jammy
- docker-ce-cli: 5:27.2.0-1~ubuntu.22.04~jammy
- docker-ce-rootless-extras: 5:27.2.0-1~ubuntu.22.04~jammy
- containerd.io: 1.6.21-1
- docker-ce: 5:24.0.2-1~ubuntu.22.04~jammy
- docker-ce-cli: 5:24.0.2-1~ubuntu.22.04~jammy
- docker-ce-rootless-extras: 5:24.0.2-1~ubuntu.22.04~jammy
- hold: True
- update_holds: True
{% else %}
dockerheldpackages:
pkg.installed:
- pkgs:
- containerd.io: 1.7.21-1
- docker-ce: 5:27.2.0-1~ubuntu.20.04~focal
- docker-ce-cli: 5:27.2.0-1~ubuntu.20.04~focal
- docker-ce-rootless-extras: 5:27.2.0-1~ubuntu.20.04~focal
- containerd.io: 1.4.9-1
- docker-ce: 5:20.10.8~3-0~ubuntu-focal
- docker-ce-cli: 5:20.10.5~3-0~ubuntu-focal
- docker-ce-rootless-extras: 5:20.10.5~3-0~ubuntu-focal
- hold: True
- update_holds: True
{% endif %}
{% endif %}
{% else %}
dockerheldpackages:
pkg.installed:
- pkgs:
- containerd.io: 1.7.21-3.1.el9
- docker-ce: 3:27.2.0-1.el9
- docker-ce-cli: 1:27.2.0-1.el9
- docker-ce-rootless-extras: 27.2.0-1.el9
- containerd.io: 1.6.21-3.1.el9
- docker-ce: 24.0.4-1.el9
- docker-ce-cli: 24.0.4-1.el9
- docker-ce-rootless-extras: 24.0.4-1.el9
- hold: True
- update_holds: True
{% endif %}

@@ -45,7 +45,6 @@ docker:
so-influxdb: *dockerOptions
so-kibana: *dockerOptions
so-kratos: *dockerOptions
so-hydra: *dockerOptions
so-logstash: *dockerOptions
so-nginx: *dockerOptions
so-nginx-fleet-node: *dockerOptions
@@ -64,42 +63,6 @@ docker:
so-elastic-agent: *dockerOptions
so-telegraf: *dockerOptions
so-steno: *dockerOptions
so-suricata:
final_octet:
description: Last octet of the container IP address.
helpLink: docker.html
readonly: True
advanced: True
global: True
port_bindings:
description: List of port bindings for the container.
helpLink: docker.html
advanced: True
multiline: True
forcedType: "[]string"
custom_bind_mounts:
description: List of custom local volume bindings.
advanced: True
helpLink: docker.html
multiline: True
forcedType: "[]string"
extra_hosts:
description: List of additional host entries for the container.
advanced: True
helpLink: docker.html
multiline: True
forcedType: "[]string"
extra_env:
description: List of additional ENV entries for the container.
advanced: True
helpLink: docker.html
multiline: True
forcedType: "[]string"
ulimits:
description: Ulimits for the container, in bytes.
advanced: True
helpLink: docker.html
multiline: True
forcedType: "[]string"
so-suricata: *dockerOptions
so-zeek: *dockerOptions
so-kafka: *dockerOptions
@@ -82,36 +82,6 @@ elastasomodulesync:
- group: 933
- makedirs: True

elastacustomdir:
file.directory:
- name: /opt/so/conf/elastalert/custom
- user: 933
- group: 933
- makedirs: True

elastacustomsync:
file.recurse:
- name: /opt/so/conf/elastalert/custom
- source: salt://elastalert/files/custom
- user: 933
- group: 933
- makedirs: True
- file_mode: 660
- show_changes: False

elastapredefinedsync:
file.recurse:
- name: /opt/so/conf/elastalert/predefined
- source: salt://elastalert/files/predefined
- user: 933
- group: 933
- makedirs: True
- template: jinja
- file_mode: 660
- context:
elastalert: {{ ELASTALERTMERGED }}
- show_changes: False

elastaconf:
file.managed:
- name: /opt/so/conf/elastalert/elastalert_config.yaml

@@ -1,6 +1,5 @@
elastalert:
enabled: False
alerter_parameters: ""
config:
rules_folder: /opt/elastalert/rules/
scan_subdirectories: true

@@ -30,8 +30,6 @@ so-elastalert:
- /opt/so/rules/elastalert:/opt/elastalert/rules/:ro
- /opt/so/log/elastalert:/var/log/elastalert:rw
- /opt/so/conf/elastalert/modules/:/opt/elastalert/modules/:ro
- /opt/so/conf/elastalert/predefined/:/opt/elastalert/predefined/:ro
- /opt/so/conf/elastalert/custom/:/opt/elastalert/custom/:ro
- /opt/so/conf/elastalert/elastalert_config.yaml:/opt/elastalert/config.yaml:ro
{% if DOCKER.containers['so-elastalert'].custom_bind_mounts %}
{% for BIND in DOCKER.containers['so-elastalert'].custom_bind_mounts %}

@@ -1 +0,0 @@
THIS IS A PLACEHOLDER FILE

38

salt/elastalert/files/modules/so/playbook-es.py

Normal file

@@ -0,0 +1,38 @@
# -*- coding: utf-8 -*-

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.


from time import gmtime, strftime
import requests,json
from elastalert.alerts import Alerter

import urllib3
urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

class PlaybookESAlerter(Alerter):
"""
Use matched data to create alerts in elasticsearch
"""

required_options = set(['play_title','play_url','sigma_level'])

def alert(self, matches):
for match in matches:
today = strftime("%Y.%m.%d", gmtime())
timestamp = strftime("%Y-%m-%d"'T'"%H:%M:%S"'.000Z', gmtime())
headers = {"Content-Type": "application/json"}

creds = None