Mirror of https://github.com/Security-Onion-Solutions/securityonion.git, synced 2026-04-25 05:57:49 +02:00

Compare commits

1358 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| a13b3f305a | |||

| 38089c6662 | |||

| 2d863f09eb | |||

| 37b98ba188 | |||

| 65d1e57ccd | |||

| 9ae32e2bd6 | |||

| 6e8f31e083 | |||

| 3c5cd941c7 | |||

| 2ea2a4d0a7 | |||

| 90102b1148 | |||

| ec81cbd70d | |||

| 59c0109c91 | |||

| 9af2a731ca | |||

| 9b656ebbc0 | |||

| 9d3744aa25 | |||

| 9fddd56c96 | |||

| 89c4f58296 | |||

| 0ba1e7521a | |||

| 36747cf940 | |||

| 118088c35f | |||

| 63373710b4 | |||

| 209da766ba | |||

| 433cde0f9e | |||

| 9fe9256a0f | |||

| 014aeffb2a | |||

| 3b86b60207 | |||

| 0f52530d07 | |||

| 726ec72350 | |||

| 560ec9106d | |||

| a51acfc314 | |||

| 78950ebfbb | |||

| d3ae2b03f0 | |||

| dd1fa51eb5 | |||

| 682289ef23 | |||

| 593cdbd060 | |||

| 4ed0ba5040 | |||

| 2472d6a727 | |||

| 18e31a4490 | |||

| 2caca92082 | |||

| abf74e0ae4 | |||

| dc7ce5ba8f | |||

| 6b5343f582 | |||

| ca6276b922 | |||

| 3e4136e641 | |||

| 15b8e1a753 | |||

| b7197bbd16 | |||

| 8966617508 | |||

| 9319c3f2e1 | |||

| d4fbf7d6a6 | |||

| e78fcbc6cb | |||

| 27b70cbf68 | |||

| ffb54135d1 | |||

| d40a8927c3 | |||

| 9172e10dba | |||

| 1907ea805c | |||

| 80598d7f8d | |||

| 13c3e7f5ff | |||

| d4389d5057 | |||

| cf2233bbb6 | |||

| 3847863b3d | |||

| 3368789b43 | |||

| 1bc7bbc76e | |||

| e108bb9bcd | |||

| 5414b0756c | |||

| 11c827927c | |||

| 3054b8dcb9 | |||

| 399758cd5f | |||

| 1c8a8c460c | |||

| ab28cee7cf | |||

| 5a3c1f0373 | |||

| 435da77388 | |||

| da2910e36f | |||

| eb512d9aa2 | |||

| 03f5e44be7 | |||

| f153c1125d | |||

| 99b61b5e1d | |||

| 8036df4b20 | |||

| aab55c8cf6 | |||

| f3c5d26a4e | |||

| 64776936cc | |||

| c17b324108 | |||

| 72e1cbbfb6 | |||

| f102351052 | |||

| ac28f90af3 | |||

| f6c6204555 | |||

| 9873121000 | |||

| 5630b353c4 | |||

| 04ed5835ae | |||

| 407cb2a537 | |||

| b520c1abb7 | |||

| 25b11c35fb | |||

| ef0301d364 | |||

| e694019027 | |||

| 22ebb2faf6 | |||

| 0d5ed2e835 | |||

| 8ab1769d70 | |||

| 6692fffb9b | |||

| 23414599ee | |||

| 8b3a38f573 | |||

| 9ec4322bf4 | |||

| 7037fc52f8 | |||

| 0e047cffad | |||

| 44b086a028 | |||

| 4e2eb86b36 | |||

| 1cbf60825d | |||

| 2d13bf1a61 | |||

| 968fee3488 | |||

| da51fd59a0 | |||

| 3fa0a98830 | |||

| e7bef745eb | |||

| 82b335ed04 | |||

| f35f42c83d | |||

| 4adaddf13f | |||

| b6579d7d45 | |||

| 87a5d20ac9 | |||

| 2875a7a2e5 | |||

| f27ebc47c1 | |||

| 63b4bdcebe | |||

| ba3660d0da | |||

| 83265d9d6c | |||

| 527a6ba454 | |||

| f84b0a3219 | |||

| ae6997a6b7 | |||

| 9d59e4250f | |||

| 48d9c14563 | |||

| 29b64eadd4 | |||

| 5dd5f9fc1c | |||

| 44c926ba8d | |||

| 6a55a8e5c0 | |||

| 64bad0a9cf | |||

| b6dd347eb8 | |||

| a89508f1ae | |||

| ed7b674fbb | |||

| 0c2a4cbaba | |||

| 57562ad5e3 | |||

| 95581f505a | |||

| 599de60dc8 | |||

| 77101fec12 | |||

| 069d32be1a | |||

| e78e6b74ed | |||

| 16217912db | |||

| 635ddc9b21 | |||

| 18d8f0d448 | |||

| 1c42d70d30 | |||

| 282f13a774 | |||

| f867be9e04 | |||

| 4939447764 | |||

| 5a59975cb8 | |||

| 20f3cedc01 | |||

| e563d71856 | |||

| 1ca78fd297 | |||

| e76ee718e0 | |||

| 5c90a5f27e | |||

| bee429fe29 | |||

| ecbb353d68 | |||

| ed21b94c28 | |||

| 2a282a29c3 | |||

| bc09b418ca | |||

| 6f6db61a69 | |||

| 9fce80dba3 | |||

| abfec85e28 | |||

| 9aa655365b | |||

| aa56085758 | |||

| 9a3760951a | |||

| 4c8373452d | |||

| 0bb5db2e72 | |||

| 2dbc7d8485 | |||

| 858e884ec2 | |||

| 4672eeb99b | |||

| aa824e7b6c | |||

| bb2a1b9521 | |||

| 3a22ef8e86 | |||

| 54080c42fe | |||

| a1fa87c150 | |||

| 0c553633b1 | |||

| 12486599e0 | |||

| 3c16218c5a | |||

| f9850025ea | |||

| 65b76d72ca | |||

| afca15f444 | |||

| 65b9843f14 | |||

| 653e2d8205 | |||

| bbaf6df914 | |||

| bc182c1c43 | |||

| fe9b934af6 | |||

| 373298430b | |||

| 4a18eb02f3 | |||

| 0aab3e185e | |||

| b1fb05dd28 | |||

| 9437a47946 | |||

| bdf4f6190d | |||

| f24a3a51ce | |||

| ba6043392c | |||

| 60eb1611ea | |||

| 3ef6ea9155 | |||

| 2b38bc778d | |||

| e334d44c95 | |||

| 39662ccf14 | |||

| fd69d1c714 | |||

| 63eebdf6ac | |||

| e19845e41d | |||

| c1190064ad | |||

| 4f94d953c9 | |||

| 71a83c1fe9 | |||

| 5553be02ac | |||

| b20fad2839 | |||

| 16edca7834 | |||

| 2545f9907f | |||

| 4efc951eaf | |||

| d75191d679 | |||

| ee667a48c9 | |||

| 067a83a87c | |||

| d84dbf9535 | |||

| d71254ad29 | |||

| de7b7ff989 | |||

| 510900e640 | |||

| 00483018ca | |||

| 9416a14971 | |||

| c9faa1a340 | |||

| 9bda01bd29 | |||

| eead0c42d4 | |||

| 741e6039c1 | |||

| db09b465bd | |||

| a59f2ded38 | |||

| e2fe04dadc | |||

| 563bf2ff3a | |||

| 07eeb4e2a0 | |||

| 5dc5b99b05 | |||

| ba69c67dc2 | |||

| d1d5f8a2b6 | |||

| 48324911ce | |||

| 4b0126a2e7 | |||

| 8a3c2e7242 | |||

| f55c1a4078 | |||

| c4d81a249a | |||

| 4c9d172721 | |||

| 36a936d3d6 | |||

| d6164446c6 | |||

| bb7a918a16 | |||

| be254b15f2 | |||

| 83e1e3efdc | |||

| 7c48f9d6ec | |||

| f2947de0ca | |||

| d07c46f27e | |||

| 47e418a441 | |||

| 87b1207ac0 | |||

| a86cbaa6fa | |||

| c68cd6cf33 | |||

| 3071a1de41 | |||

| e75d0c8094 | |||

| 14c685ab10 | |||

| 54082858dc | |||

| 4b7e7978ef | |||

| 066de70638 | |||

| 19c6796927 | |||

| 77c9b4fb54 | |||

| 3104137190 | |||

| c8b65ecca0 | |||

| 555c881235 | |||

| 0ac9a1f9cc | |||

| 3c0554a42c | |||

| 0b19179630 | |||

| 30a14f8aaf | |||

| 877fc36013 | |||

| a892adb66f | |||

| a49b05661d | |||

| 266fc4e866 | |||

| b738325880 | |||

| ad7821391d | |||

| 1b0c146b54 | |||

| 1848a835f5 | |||

| 23cc75c68d | |||

| 17fcf12608 | |||

| 6a8737e9a2 | |||

| 9543058a2c | |||

| b66cd82110 | |||

| 41ebb403ca | |||

| c94436fcbd | |||

| a59eda319e | |||

| 8a76975d8c | |||

| 737da45e7f | |||

| df1bf8e67b | |||

| f95757c551 | |||

| 5e46138961 | |||

| dc8aa4d923 | |||

| 1d3e39b6bd | |||

| 9ad7303cf2 | |||

| b1daa22dfc | |||

| 49c4edbcbe | |||

| f4c3103f84 | |||

| a2aea5530b | |||

| 01234f87f9 | |||

| 5d4186ac07 | |||

| 425ca35a22 | |||

| fe5ca3a0c8 | |||

| 7fad710ca1 | |||

| 8d6c2600c9 | |||

| 38c7ea0801 | |||

| abe0a9ec27 | |||

| f0f8513370 | |||

| bffd24e0d5 | |||

| 71cbab8fcc | |||

| 6816d06710 | |||

| d19615f743 | |||

| 894e009b95 | |||

| 1a4515fc8a | |||

| 31696803e1 | |||

| e715dfa354 | |||

| c723a09107 | |||

| 8cf3ceeb71 | |||

| 921fc95668 | |||

| 9e42fb927d | |||

| 87d72e852c | |||

| ba2782c5e7 | |||

| 9169fca9f8 | |||

| 1028fb1346 | |||

| 6846487909 | |||

| 2cc0c4c0ac | |||

| 5a5b643155 | |||

| e97bec2bc1 | |||

| 78db64a419 | |||

| 55d32c5b98 | |||

| 333213d1dd | |||

| 03b16a5582 | |||

| 20c76abac4 | |||

| 4158e18675 | |||

| f0c391e801 | |||

| 922a77ac55 | |||

| a62f96595c | |||

| fb8a79e112 | |||

| 782a3eccfe | |||

| 2c996fe7ad | |||

| 0c177ec923 | |||

| 41f00c0aa1 | |||

| 05b30771c5 | |||

| e3249c8e4c | |||

| a0b6e1076f | |||

| 85bb5a327c | |||

| 68f5c9965a | |||

| 727d0443a2 | |||

| b915cea52f | |||

| d98a1d5ae5 | |||

| 6f5bb136ff | |||

| 695ec149f1 | |||

| 50103aebb3 | |||

| 6f81e234cd | |||

| 7732435b64 | |||

| 2cf36f1e8f | |||

| 43d63a3187 | |||

| 37116a9bdd | |||

| 6297a2632b | |||

| 5cc752f128 | |||

| 68d95cd1cb | |||

| 1a68c3cd24 | |||

| 40294e2762 | |||

| 87eec4ae88 | |||

| 676696b24a | |||

| da27fce95f | |||

| 8acc37a7d1 | |||

| 5f1b467e64 | |||

| fe7fb7f54d | |||

| 577bfac886 | |||

| 468b6e4831 | |||

| c75d209d7f | |||

| b29b264d5c | |||

| c99e7da5a7 | |||

| 60d66b973c | |||

| 304830d2ee | |||

| d7285d69a7 | |||

| 7cdd1f89d7 | |||

| b7cab1d118 | |||

| f03a472ee5 | |||

| c7a0801eed | |||

| 5e0015e9ac | |||

| 5a72c558cb | |||

| a6e907f76c | |||

| a3f79850fe | |||

| 2d3eb22057 | |||

| 8437fcd94c | |||

| 1b25db4573 | |||

| f8ed2e6e8e | |||

| f22c61a0a2 | |||

| 5069d1163c | |||

| 31edf2e8ea | |||

| 6b8893ded5 | |||

| 1f8b7bda89 | |||

| b9204cbe99 | |||

| 59233d6550 | |||

| 1ac72e5b24 | |||

| 7805ca8beb | |||

| 47b2481cdd | |||

| fa933d3f53 | |||

| 6f7914f3c4 | |||

| 0c9e230294 | |||

| f4dc73a206 | |||

| 437c9cab68 | |||

| 6da96a733f | |||

| 82796370ce | |||

| 8c16feb772 | |||

| ce1f363424 | |||

| e8860a7d2c | |||

| beb26596fd | |||

| 6a5ff04804 | |||

| ff3bb11fbb | |||

| 8be5082b60 | |||

| 5faa4f0a30 | |||

| da7770a900 | |||

| 8178338971 | |||

| 79ed17b506 | |||

| fa1d53a309 | |||

| a41b0dbfea | |||

| d28375b304 | |||

| 07c0b539d7 | |||

| d18ebd6e36 | |||

| 5a642b151b | |||

| 0aa4ea3e87 | |||

| efcef90ead | |||

| af56aa4f16 | |||

| d5257468eb | |||

| a3b0db7949 | |||

| 5f509eb2d8 | |||

| a38d561684 | |||

| 4b559ec182 | |||

| 0b209d69e5 | |||

| 2785587840 | |||

| 9f95306458 | |||

| 55bed0771b | |||

| 0b5ee49873 | |||

| 1646459052 | |||

| 8ec003d89f | |||

| 224f0606c2 | |||

| 910125f13a | |||

| 5eca1acbeb | |||

| d551faeb16 | |||

| 6a6afeef75 | |||

| 869f60ccaa | |||

| 12c82d2812 | |||

| a2b50c6d40 | |||

| ab7ae6cddd | |||

| 7a9a12ae3d | |||

| b49a296276 | |||

| 9b9321d23a | |||

| 1922ad95d5 | |||

| 11493cb615 | |||

| 0def41f03c | |||

| 1c191e426f | |||

| de98baaad4 | |||

| df0e19ff80 | |||

| d22d864ba6 | |||

| 898b352af9 | |||

| 76a8e315b7 | |||

| edaf695463 | |||

| 53fcac4a02 | |||

| 44054ba95f | |||

| 10aa77977e | |||

| 8e90658856 | |||

| 965d0543f4 | |||

| e353855855 | |||

| c54217a8cb | |||

| 710b3bac3d | |||

| 8a90579df7 | |||

| 39c8766914 | |||

| 694ea743cc | |||

| 3d9e7d1e97 | |||

| ca71c00f1c | |||

| 2f2394dca2 | |||

| fee4c20912 | |||

| 03342fd477 | |||

| 6dbff3b9df | |||

| 2f375b89a8 | |||

| f67ac80c56 | |||

| b06a35099f | |||

| 087099b9b6 | |||

| 04fe2ca996 | |||

| bdb5748b44 | |||

| 1cbe5580a6 | |||

| b57674a7cc | |||

| 53bd7bcc29 | |||

| 6787b97c6a | |||

| 0d43f9aaf4 | |||

| 40540f47bf | |||

| 24e05c9491 | |||

| 02c9465dfb | |||

| a4d484ea47 | |||

| c9d650f4c8 | |||

| 9de8814412 | |||

| 35e7659904 | |||

| ed1d2d0a8b | |||

| 903de330c2 | |||

| 8621352701 | |||

| 564ab105ba | |||

| b637e27c8d | |||

| d31ea4097d | |||

| c277b7acfa | |||

| 97a9e0989d | |||

| 6bdccec6b1 | |||

| 35945ed224 | |||

| 7319d7ae9b | |||

| 8b38cbe8cf | |||

| 35ea084466 | |||

| c89582ffb6 | |||

| d6db94a4d4 | |||

| e2acf027a9 | |||

| d6d8ba7479 | |||

| 41a4321b03 | |||

| 2ae049071d | |||

| e82df53997 | |||

| 273e78da94 | |||

| 446376395e | |||

| a13001dce0 | |||

| 8819e1d4d6 | |||

| 1baea3bcd5 | |||

| 1c37c05824 | |||

| cd1db36c13 | |||

| 5898c9ef31 | |||

| 951f04c265 | |||

| 4b069d91ab | |||

| 34ab949dfc | |||

| 59191008a0 | |||

| 17a04a75c9 | |||

| 7561ec0512 | |||

| 884d669ae9 | |||

| 8a88b16b9e | |||

| 6545ae588d | |||

| 5ab54fcfc5 | |||

| ae4befe377 | |||

| 0c320e3501 | |||

| 933f4fa6c8 | |||

| d80c88f613 | |||

| 6d2e851a43 | |||

| 209aae50bc | |||

| eef1b40436 | |||

| 34db6fb823 | |||

| eeaf077baf | |||

| 120d21c0da | |||

| 6fc988740d | |||

| 66457ad8f8 | |||

| 69670c481d | |||

| cae011babb | |||

| 02ea939abc | |||

| be028aa23e | |||

| 24b7f7a7ce | |||

| 12cce111db | |||

| add72d7a5c | |||

| c7a1d4758b | |||

| 8436b647dd | |||

| 387ce22385 | |||

| cc3c28135d | |||

| 6b6724afcf | |||

| c37a179a3c | |||

| 77e6ee3c36 | |||

| 3e71663669 | |||

| d519369c6f | |||

| 883d9560a0 | |||

| 984971c63c | |||

| 6adef20a06 | |||

| cb8faf7c5f | |||

| 740723ecd6 | |||

| d70371c540 | |||

| b6986d5c61 | |||

| 02e6e11be7 | |||

| d26484fe1a | |||

| 12d10d7d42 | |||

| 7ea37ac2dd | |||

| 7aae72cfcf | |||

| ec427cde08 | |||

| c2efd7ef64 | |||

| 77c58e665e | |||

| 9530901d1d | |||

| e83afa3e30 | |||

| 70fb28a8b3 | |||

| 8355432356 | |||

| 2247cafe5f | |||

| 85a8da6331 | |||

| ddabab253c | |||

| 2e42eddbc2 | |||

| 07a590dda8 | |||

| ec8eac3430 | |||

| 05b84327b8 | |||

| 0607532e4a | |||

| 3018886f72 | |||

| e02bdffe34 | |||

| 5073d62ee8 | |||

| e2ff48164b | |||

| 43832f9c34 | |||

| 5da5a04025 | |||

| 25b51135fc | |||

| aa91c1fef2 | |||

| 801a5a6824 | |||

| f63c26b7f2 | |||

| 336a40d646 | |||

| bb0cfc5253 | |||

| 106aaa9c3e | |||

| ff7db0be63 | |||

| b96d3473f2 | |||

| fb27e7c479 | |||

| 261acee8a0 | |||

| a9585b2a7f | |||

| 62fa15c63e | |||

| e995576b1d | |||

| d247c9d704 | |||

| b21b545756 | |||

| 5e8748c436 | |||

| e2cca917c1 | |||

| d8700137d2 | |||

| 2c42d4b19e | |||

| a3c7e40c40 | |||

| 94fe456e28 | |||

| 662db41857 | |||

| 7623dd20b9 | |||

| 2b323ab661 | |||

| 8de01625a8 | |||

| d0d7ab57ca | |||

| f4cbe20ddf | |||

| 0d92a1594a | |||

| daaead618e | |||

| 19469205e1 | |||

| cae9e6230f | |||

| 6c4c815683 | |||

| 6769386c86 | |||

| 36272efda7 | |||

| 6b97d07a89 | |||

| da82395dcf | |||

| b5e5bd57ad | |||

| ad4fb52b81 | |||

| 4e849ecc90 | |||

| 7e37cd0f05 | |||

| 3952c1a9b7 | |||

| c13c37f406 | |||

| 9240c3c6f0 | |||

| 2aa01280e7 | |||

| 1675b787bf | |||

| 4866eb2315 | |||

| f785fb2772 | |||

| 8c9f863808 | |||

| 1751e35121 | |||

| 6676afc7de | |||

| 699ea1ac3e | |||

| 90fdb9c465 | |||

| 48291f5271 | |||

| 3a41b090c1 | |||

| 139b36b189 | |||

| 6ddf887342 | |||

| 6ba9e057a9 | |||

| 6600484f8e | |||

| b02c38175c | |||

| 4497f6561f | |||

| 0fc03baf58 | |||

| fb81c6e2e3 | |||

| ad28ea275f | |||

| 41951659ec | |||

| 451a4784a1 | |||

| 1b7095fa81 | |||

| 89d789fe0f | |||

| 49055e260f | |||

| a465039887 | |||

| b60cf29598 | |||

| 0e09d73aa0 | |||

| 520a5671ca | |||

| fc824359ed | |||

| 7caa7cec6b | |||

| 0695140f83 | |||

| ed1e2c8908 | |||

| 594900a8d4 | |||

| 6894fa4e4d | |||

| 2334d82d36 | |||

| c0a2ea3138 | |||

| d4acb1a33a | |||

| 5de9e5baf4 | |||

| 3a34da354f | |||

| 469390696e | |||

| 0a4a48b61e | |||

| 58a63e0765 | |||

| 251bc6f45e | |||

| b84d997f87 | |||

| b5bccc5e05 | |||

| b4e5ac9796 | |||

| 2db95fe1b4 | |||

| 934b0f45a1 | |||

| a88227d13f | |||

| 21a7b76352 | |||

| 03082339ca | |||

| 8f6226b531 | |||

| 2c4eccd7e0 | |||

| fa57494694 | |||

| 3f1741e75a | |||

| 48331ce35b | |||

| c2ac60b82e | |||

| fedfbe9fec | |||

| 9947f9def4 | |||

| c205438771 | |||

| 8cde05807c | |||

| 2ac0aba916 | |||

| af003cc2a1 | |||

| 0d4f6b4fe6 | |||

| 7093254439 | |||

| bd7644a557 | |||

| 90b740a997 | |||

| 5547a1b7ab | |||

| 1b90fd8581 | |||

| bbdf7bb5a7 | |||

| fb8ad71b27 | |||

| e43b7607bb | |||

| a265c06e31 | |||

| 2aa954cb0a | |||

| 73812b11a3 | |||

| 38ab426470 | |||

| d0a6881c2c | |||

| c7c4e65df1 | |||

| 49b150797d | |||

| 57268ba934 | |||

| 1208915896 | |||

| 42f5ad9939 | |||

| 8e0d895afb | |||

| 998c85e3f8 | |||

| 32f3ee0b01 | |||

| a90aed25fb | |||

| ae14e4870d | |||

| 273a1d7e9c | |||

| b3f8ed7dcd | |||

| ad5a424c03 | |||

| e06787445c | |||

| 8a4f5d6dcb | |||

| 81dd951064 | |||

| c12f138899 | |||

| 884a7041af | |||

| 023008c54c | |||

| 6f7de954d9 | |||

| 46371aaaf5 | |||

| 1fde2e2755 | |||

| 1aad9d1b2f | |||

| 9703e70163 | |||

| f6735207d7 | |||

| e5f76a9c6e | |||

| d1c86cb9ff | |||

| 8ccb24dda2 | |||

| 932054e9da | |||

| 8b35002169 | |||

| f68527d366 | |||

| 81e3d26540 | |||

| 96b60fa39a | |||

| f172a74fbc | |||

| c4be56ec7b | |||

| 96195806ab | |||

| 88bbd3440d | |||

| 495a9c0783 | |||

| 905bc564fc | |||

| f6f387428f | |||

| db5abcb3cf | |||

| 27e310c2a1 | |||

| 236eb0cbcc | |||

| 841d0b4b1f | |||

| 272f97e2d7 | |||

| eac9a3fc86 | |||

| 32dc26f2e7 | |||

| 1b14142e4c | |||

| 2fef1d5fa7 | |||

| 3bbfc3865d | |||

| 6947fd6414 | |||

| d3e5be78fd | |||

| 09e005127e | |||

| d3ea596deb | |||

| d6d315e8d5 | |||

| 58dc073678 | |||

| 8c9186d8dd | |||

| aee842b912 | |||

| 3a5a59af59 | |||

| 8f3a874e61 | |||

| 66dc6274e6 | |||

| 302e580d8f | |||

| 4cf60a6054 | |||

| 8f6d82af97 | |||

| 8ab54dcead | |||

| 9704c8917e | |||

| 540ee156db | |||

| 344e2bf1d0 | |||

| 3441c0684e | |||

| ed560f19d3 | |||

| b3f6012856 | |||

| 9ae26ec866 | |||

| 20aaa79476 | |||

| 2bb77251b0 | |||

| 36791665f3 | |||

| 4d4744a89b | |||

| f3be63051b | |||

| 743ed316f8 | |||

| e4b4bbcfdc | |||

| b6e090f29f | |||

| 25006ed20b | |||

| 4469a93a75 | |||

| 0027016b5a | |||

| 0143e2412d | |||

| 20212414c4 | |||

| 8a63ed5124 | |||

| 096dadf9bd | |||

| b441fe662f | |||

| e5117a343d | |||

| b9d692eb0e | |||

| 36a7f54160 | |||

| 96134684dc | |||

| 374ab0779a | |||

| d0d1cc9106 | |||

| 162a32fd08 | |||

| 9035fa3037 | |||

| b4b87e5620 | |||

| 97c53d70a4 | |||

| 53b4f7bd5c | |||

| 192c8c78c7 | |||

| 62a063dae4 | |||

| 79014a53ec | |||

| e910f04beb | |||

| ef5b63337b | |||

| 799e92e595 | |||

| c835c523a9 | |||

| 9ec1492fad | |||

| 5af1bfe142 | |||

| 482c5324db | |||

| 3c1f1cd50e | |||

| aecd900203 | |||

| 89f5d9f292 | |||

| de43a202a3 | |||

| 6176fa7ca5 | |||

| 9ff27e5b6a | |||

| 5922fc0e45 | |||

| b48e259fee | |||

| b4d85a7bf8 | |||

| 38881231ac | |||

| b2d2a9f0ed | |||

| 32021cf272 | |||

| 4410e136b1 | |||

| 81d4584819 | |||

| f765dc23ea | |||

| 657ef97d17 | |||

| 8f247f962a | |||

| bcbdab1682 | |||

| 5b4ec70ca6 | |||

| ce114a2601 | |||

| 5de59a879a | |||

| a2e6469a38 | |||

| 5c933910aa | |||

| a3c3f08511 | |||

| 9aa58be286 | |||

| db56b3d6a3 | |||

| 7d6182a18f | |||

| 074f84ae4d | |||

| 8ce0d76287 | |||

| 3be3df00d1 | |||

| d99d4756c3 | |||

| 0d83b13585 | |||

| 6505d3e2ce | |||

| 6edfadd18b | |||

| 9552510c7d | |||

| 36ddcfa4e5 | |||

| fcc1337e1a | |||

| 10f9d0f4bd | |||

| edf531739c | |||

| 11d7e66ea0 | |||

| caaedee5a7 | |||

| 1bdd79c578 | |||

| c199acc64e | |||

| a01704a1d7 | |||

| 53f258b08f | |||

| a308a39bbe | |||

| 5c00655ad0 | |||

| 67a608ea56 | |||

| 01d983fc00 | |||

| d6f1bcfdf0 | |||

| f156573f8d | |||

| b3e0e68896 | |||

| 86803f1fb5 | |||

| aad08a830b | |||

| c9db6c0f18 | |||

| d9a9c8738c | |||

| cb0ed9ae6d | |||

| 4f72fca2d7 | |||

| 1dc426b8ce | |||

| 8995012c80 | |||

| 2c4ba2e8b2 | |||

| c42959d040 | |||

| fa6dcd7f83 | |||

| 9c6365aa2f | |||

| 6e4c4febfb | |||

| 732d2aadf8 | |||

| cace817c79 | |||

| e1c361e555 | |||

| 502277b1b7 | |||

| 57f5a22f0f | |||

| 4b18a0e758 | |||

| f6a9a764de | |||

| e65214b097 | |||

| cc47f9a595 | |||

| eb633be437 | |||

| df0dc2e4d1 | |||

| 766f4dd661 | |||

| f53fb69ffb | |||

| ba0ec18a33 | |||

| 79182cecfd | |||

| 8cf82c4b6a | |||

| 78d4586033 | |||

| 02cf1074f2 | |||

| a881cab469 | |||

| 00bd93c026 | |||

| 2c10ad7eec | |||

| 167051af28 | |||

| eb9c5e9af0 | |||

| 2f942a3e37 | |||

| 03f97b309a | |||

| c6a962a46b | |||

| 1ddf45bbbe | |||

| f0c4cebaca | |||

| 87c42ece00 | |||

| 4f8fcd3369 | |||

| 5b2d91b5b5 | |||

| a84322f9b7 | |||

| 2de95bcb63 | |||

| 1e9e2facde | |||

| 592c67d1f2 | |||

| e91dd29cb2 | |||

| 13c9142814 | |||

| ef4f2491f3 | |||

| 645555b990 | |||

| 839275814c | |||

| 9b973e07e2 | |||

| 0027385da9 | |||

| 4ef77f9050 | |||

| debbdec350 | |||

| bf4ac0c2dd | |||

| cb9e7e63db | |||

| 32560af767 | |||

| 1e5ac61ff5 | |||

| 5315c51197 | |||

| 8917f9b9d2 | |||

| c0dc05f26a | |||

| 2aa801d906 | |||

| c192ec9109 | |||

| 7ab31e36af | |||

| 0fd9fb9294 | |||

| 059f80bfc4 | |||

| bab2f7282c | |||

| 02920b5ac9 | |||

| 25b0934cda | |||

| d3c7ea4805 | |||

| 82c3d78672 | |||

| 97b68609bc | |||

| 1d611e618f | |||

| f4b8d385ee | |||

| b7e0923ec4 | |||

| 4930ae4ba6 | |||

| d11479ec5f | |||

| 901e3c4a20 | |||

| 81842462ba | |||

| e15c14cc2e | |||

| f7ddf57f39 | |||

| 47e67fda46 | |||

| 7d0251952c | |||

| 5536f5a8c2 | |||

| 2c932fae9d | |||

| 24445cf36a | |||

| 0feb25c962 | |||

| 3abb4d79ba | |||

| 1df183deb3 | |||

| 77834c1e58 | |||

| d6207705cd | |||

| e4b61aa08d | |||

| 736ff2930d | |||

| 6aff526d9e | |||

| 8101171c97 | |||

| 000507c366 | |||

| 82fdee45aa | |||

| 2419fa43b6 | |||

| acc7619023 | |||

| dcd761ad74 | |||

| 9871ecd223 | |||

| 56a7fdcfcd | |||

| 6325f6db16 | |||

| b253cd45ca | |||

| 1724565331 | |||

| 00a7beaca2 | |||

| c129bba7e5 | |||

| fb298224fc | |||

| 1feed47185 | |||

| 923de356e1 | |||

| cea9af4e01 | |||

| 0f6d894322 | |||

| 9f879164ec | |||

| 1ddc4b6ff8 | |||

| 58f80120bd | |||

| a0e08e4f41 | |||

| 2813d67670 | |||

| c49b134122 | |||

| 48ce377b02 | |||

| 40de01e8c4 | |||

| 2fe88a1e66 | |||

| 214117e0e0 | |||

| bc2d3e43f0 | |||

| b3528b2139 | |||

| 7ecd067e2b | |||

| 576c1d7cc1 | |||

| 6320528263 | |||

| 6528632861 | |||

| 928b3b5471 | |||

| f1c8467e9b | |||

| f5337eba1a | |||

| de28e15805 | |||

| 09ba15f9bb | |||

| ba9892941d | |||

| b381c51246 | |||

| 64726af69c | |||

| 7a4fea7a12 | |||

| db47256cdd | |||

| ba2392997b | |||

| 1a1bcb3526 | |||

| 997e6c141a | |||

| 9a3c997779 | |||

| 53ed4d49c2 | |||

| 0cee5b54a1 | |||

| 3f8e15d16f | |||

| f8f6a1433a | |||

| 83188401c5 | |||

| b01367a294 | |||

| d8e0e320f4 | |||

| b033f0d20f | |||

| b71b4225c4 | |||

| 2a39f5f0b5 | |||

| e27e690bc8 | |||

| 57371ffe5a | |||

| 4440ecd433 | |||

| 277ad61920 | |||

| 0860b1501e | |||

| b06610088a | |||

| aa2f168b73 | |||

| d1f7e5f4a7 | |||

| 05a81596e5 | |||

| 00d1ca0b62 | |||

| dbd4a5bd98 | |||

| 3db34a3346 | |||

| f9890778ad | |||

| e342dae818 | |||

| 64e294ef48 | |||

| 992bbdfac1 | |||

| a4cd695cc8 | |||

| 9f85b3cb4f | |||

| e9fd7d8b8b | |||

| fa1a428133 | |||

| 8e18986671 | |||

| a3b97b40ba | |||

| 634dd9907d | |||

| 1d12dcd243 | |||

| 2ec8d6abf0 | |||

| 98c19e5934 | |||

| 03e7636a18 | |||

| 6ce9561ba7 | |||

| b80dd996cc | |||

| 63cea88c1d | |||

| f41c75c633 | |||

| 20f706f165 | |||

| c74b440922 | |||

| badaab94de | |||

| 2be6c603ab | |||

| 7700a5a1bf | |||

| 687a89e30b | |||

| 06a0492226 | |||

| 4e4034e054 | |||

| 5b06aa518e | |||

| c91fb438bb | |||

| 54c9a3ec71 | |||

| cc1babbea6 | |||

| bde67266d4 | |||

| 1de1e2fdc2 | |||

| 2293574f2e | |||

| 3077c21bd9 | |||

| a52ca6e298 | |||

| 02e1a29f0c | |||

| 1b9ed1c72b | |||

| 9564158c32 | |||

| ce1f75aab6 | |||

| a0ce46e702 | |||

| f501fac9cd | |||

| 8b95edd91a | |||

| c5e5763014 | |||

| 2322ed4b6d | |||

| 38d69701a4 | |||

| 4dc0f06331 | |||

| ec7bcd9b0c | |||

| 24140c4cda | |||

| 6909d3ed14 | |||

| cf5feafb1e | |||

| ebc20a86eb | |||

| e792fbe023 | |||

| 02b619193d | |||

| e5aab3b707 | |||

| 089fcbd0c5 | |||

| 62bafb94f9 | |||

| 9d6fb98e3b | |||

| 7bd9a84aa1 | |||

| 328b714306 | |||

| 2a979197a0 | |||

| 6f7f09f1cd | |||

| f9804c218d | |||

| dfc4498921 | |||

| 9049f9cf03 | |||

| 79a5f3a89f | |||

| c7cb11e919 | |||

| da81d93930 | |||

| 44344612b7 | |||

| 7ac4bc52a3 | |||

| 9aaa33c224 | |||

| a13e6257c3 | |||

| ef18cb3704 | |||

| d5c7eec4ef | |||

| a2c444e03b | |||

| 40c3f9a156 | |||

| bd23d1ab7b | |||

| a1e0041b14 | |||

| 7483dbf442 | |||

| 0f30e787b3 | |||

| 5d50dbb69e | |||

| 867ea5a1ac | |||

| 52cfc59113 | |||

| 789eafa8c2 | |||

| ed712477d6 | |||

| e3cb0a9953 | |||

| 743bbfea35 | |||

| e8a5a5bffb | |||

| a97fa9675b | |||

| 2418d9a096 | |||

| 2a8ed24045 | |||

| f1c91e91b1 | |||

| 5405bc4e20 | |||

| 47a580d110 | |||

| 61a43f7df5 | |||

| 21ffcbf2fd | |||

| 563c0631ba | |||

| 77cbf35625 | |||

| d7972032e4 | |||

| f6dcefe0f8 | |||

| d5a1406095 | |||

| 3d3be6bd29 | |||

| 52fec5fef0 | |||

| ddb776c80e | |||

| 469258ee5e | |||

| 4fec2a18a5 | |||

| c7ed29dfa8 | |||

| 80cbe5f6e8 | |||

| a64eb0ba97 | |||

| dbb1b82e1b | |||

| f34627f709 | |||

| 59451fc4d0 | |||

| dc77b20723 | |||

| 51869ce5b2 | |||

| 98705608a6 | |||

| 8055088d25 | |||

| d0cfaaeb26 | |||

| fbacfce0e4 | |||

| 082704ce1f | |||

| 71b6311edc | |||

| 7e71c60334 | |||

| c5c2600799 | |||

| c6c3cc82e4 | |||

| b17b68034e | |||

| cbd1c05929 | |||

| b14d33ced8 | |||

| a5b1660778 | |||

| d5c4a2887e | |||

| b4b84038ed | |||

| 85ce0bb472 | |||

| b0bd64bc10 | |||

| 17dd21703d | |||

| 767c922083 | |||

| a57ba7e35d | |||

| 81c1678ec7 | |||

| 1593da4597 | |||

| 8359f1983c | |||

| 87a20ffede | |||

| c597766390 | |||

| 3d10a60502 | |||

| 220c534ad4 | |||

| c7604e893e | |||

| b56486d88e | |||

| c99f19251b | |||

| 544fa824ea | |||

| dd034edad6 | |||

| 2419cf86ee | |||

| 61f9573ace | |||

| 7595072e85 | |||

| e60e21d9ff | |||

| b46a5c4b2a | |||

| 40ff2677c4 | |||

| 80b40503fb | |||

| 6a501efa75 | |||

| 1f6463a9bb | |||

| 2d4f4791e0 | |||

| 102906f5dd | |||

| 6c151d3ebd | |||

| 17e6f5b899 | |||

| a38495ce39 | |||

| 38629a7676 | |||

| 9a4ae2b832 | |||

| 3fdcb92dfe | |||

| 725f5414ba | |||

| 73aceb9697 | |||

| 03c89a02ad | |||

| 666d4ea260 | |||

| 4c58aa2ccf | |||

| 26619e5f8d | |||

| 57d90a62f7 | |||

| a8b8a1d0b7 | |||

| e4375a6568 | |||

| b8f9a9a311 | |||

| 3d7f2bc691 | |||

| e799edaf49 | |||

| be003f7ee4 | |||

| 868cb8183c | |||

| b3f94961ea | |||

| 12120e94c8 | |||

| 49a60bac76 | |||

| f07f0775ac | |||

| e93e58fedb | |||

| 8459054ff8 | |||

| 43ec897397 | |||

| 4b73f859d1 | |||

| 969cf25818 | |||

| e25bbd8a0d | |||

| 5b11c41434 | |||

| 99f21ce46f | |||

| 9dc31b6db4 | |||

| 083d96fab2 | |||

| f21e717dcd | |||

| 87e9d2997b | |||

| 288b5ac4d2 | |||

| 533c3b7569 | |||

| 32874d2e9d | |||

| fca7753f73 | |||

| fcdb02d61e | |||

| 4dcc79d245 | |||

| 6c7b4e5492 | |||

| a341f1b7b7 | |||

| 01bd3545d0 | |||

| d823d5dcc9 | |||

| 9fed2ac616 | |||

| d5ab8ff191 | |||

| 2b28283095 | |||

| 499b889b56 | |||

| aa5063c5df | |||

| 9f07388fa4 | |||

| cd674947bb | |||

| 976ad4152d | |||

| 2633f348ac | |||

| 1ab72e9288 | |||

| ef92fba867 | |||

| 36c96c4beb | |||

| d79ad53daf | |||

| 4c4b873eca | |||

| a062939705 | |||

| 3f14885539 | |||

| 393077ba9e | |||

| b0f9585da1 | |||

| 7c8ba04820 | |||

| 31f83c6dee | |||

| 8cccaef664 | |||

| 1944d09978 | |||

| a7d282b412 | |||

| aade62491c | |||

| b901555793 | |||

| debe146dcf | |||

| c8ef8cc88e | |||

| 9bd176621d | |||

| 05baaacc83 | |||

| 9bc44c122f | |||

| 1fdd8acd0c | |||

| 92a6eac976 | |||

| dc227df229 | |||

| ff35a58f3f | |||

| 64fde6b02e | |||

| 1047462898 | |||

| 76ba89c356 | |||

| f3b4ee6a0b | |||

| d6421ee7cc | |||

| 148ef5833e | |||

| a67cbb3276 | |||

| 0485c83388 | |||

| a8d3363a6f | |||

| dba7b84adb | |||

| 2567ceea74 | |||

| 4ec31dbf35 | |||

| e4e326cd06 | |||

| 0d17f4f486 | |||

| 7838393b9f | |||

| c90c72dbba | |||

| 04eb73ac27 | |||

| de082f6100 | |||

| 2c44c8e468 | |||

| 06b60ca96b | |||

| 4d64a9777e | |||

| 26a12477ac | |||

| 43447e5df5 | |||

| c66f595666 | |||

| ad64b873c0 | |||

| c6be0a48a1 | |||

| 5eb0364a98 | |||

| 8d0074c712 | |||

| 3883a89212 | |||

| cfa61a6c26 | |||

| 7f28cdd2a3 | |||

| 9ea3eaafae | |||

| 16249cc80d | |||

| 2589670755 | |||

| 17bc96c3b3 | |||

| b87ee4904f | |||

| 7519a8c39d | |||

| df4bf95b93 | |||

| 602e00058a | |||

| 6aba7b6bcf | |||

| ff7aaa95e1 | |||

| f166919160 | |||

| aecbfd28ee | |||

| b24e3ff6c4 | |||

| cda67b2894 | |||

| 6040c5062b | |||

| d83266c546 | |||

| 6039a1430e | |||

| c2d4e870c8 | |||

| 1faceddc40 | |||

| 471f467e63 | |||

| a0d8be4dc6 | |||

| 035451cdb8 | |||

| af392681e3 | |||

| a0bb6a700a | |||

| ad000550a6 | |||

| 0fc6a74b6d | |||

| 0b96635bcc | |||

| 5b2e39f80d | |||

| a8b6470a14 | |||

| e945f1c38f | |||

| d0dff9572d | |||

| 68e8c159ce | |||

| a8038c90ce | |||

| 91c990e30a | |||

| b6b49c876b | |||

| cf98a95dd1 | |||

| 921e79c56c | |||

| 2cfbf30f05 | |||

| 3e08506c4e | |||

| d4cba6908e | |||

| dfd3456343 | |||

| 3cd1598067 | |||

| 1be86cdf8e | |||

| bdae8d5017 | |||

| d5e17da9d3 | |||

| e4b10aa28c | |||

| 1c1b079058 | |||

| 967a0807ad | |||

| b8d8a5fd6b | |||

| 18a54b86f4 | |||

| 17af095e14 | |||

| a71cbcfc9b | |||

| 29aa6dceed | |||

| 81ee333b07 |

````diff
@@ -1,47 +1,47 @@
-### 2.3.120-20220425 ISO image built on 2022/04/25
+### 2.4.5-20230807 ISO image released on 2023/08/07
 
 
 
 ### Download and Verify
 
-2.3.120-20220425 ISO image:
-https://download.securityonion.net/file/securityonion/securityonion-2.3.120-20220425.iso
+2.4.5-20230807 ISO image:
+https://download.securityonion.net/file/securityonion/securityonion-2.4.5-20230807.iso
 
-MD5: C99729E452B064C471BEF04532F28556
-SHA1: 60BF07D5347C24568C7B793BFA9792E98479CFBF
-SHA256: CD17D0D7CABE21D45FA45E1CF91C5F24EB9608C79FF88480134E5592AFDD696E
+MD5: F83FD635025A3A65B380EAFCEB61A92E
+SHA1: 5864D4CD520617E3328A3D956CAFCC378A8D2D08
+SHA256: D333BAE0DD198DFD80DF59375456D228A4E18A24EDCDB15852CD4CA3F92B69A7
 
 Signature for ISO image:
-https://github.com/Security-Onion-Solutions/securityonion/raw/master/sigs/securityonion-2.3.120-20220425.iso.sig
+https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.5-20230807.iso.sig
 
 Signing key:
-https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/master/KEYS
+https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS
 
 For example, here are the steps you can use on most Linux distributions to download and verify our Security Onion ISO image.
 
 Download and import the signing key:
 ```
-wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/master/KEYS -O - | gpg --import -
+wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS -O - | gpg --import -
 ```
 
 Download the signature file for the ISO:
 ```
-wget https://github.com/Security-Onion-Solutions/securityonion/raw/master/sigs/securityonion-2.3.120-20220425.iso.sig
+wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.5-20230807.iso.sig
 ```
 
 Download the ISO image:
 ```
-wget https://download.securityonion.net/file/securityonion/securityonion-2.3.120-20220425.iso
+wget https://download.securityonion.net/file/securityonion/securityonion-2.4.5-20230807.iso
 ```
 
 Verify the downloaded ISO image using the signature file:
 ```
-gpg --verify securityonion-2.3.120-20220425.iso.sig securityonion-2.3.120-20220425.iso
+gpg --verify securityonion-2.4.5-20230807.iso.sig securityonion-2.4.5-20230807.iso
 ```
 
 The output should show "Good signature" and the Primary key fingerprint should match what's shown below:
 ```
-gpg: Signature made Mon 25 Apr 2022 08:20:40 AM EDT using RSA key ID FE507013
+gpg: Signature made Sat 05 Aug 2023 10:12:46 AM EDT using RSA key ID FE507013
 gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"
 gpg: WARNING: This key is not certified with a trusted signature!
 gpg: There is no indication that the signature belongs to the owner.
````
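The verification page publishes MD5, SHA1, and SHA256 digests next to the download, and these can be checked directly. The sketch below is a minimal example that assumes bash and GNU coreutils `sha256sum`. The function name `verify_sha256` is hypothetical and is not part of Security Onion. Because the digests are published alongside the ISO, this check catches a corrupted download but does not replace the GPG signature check.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helper): compare a file's SHA-256 digest with a
# published value. Assumes GNU coreutils `sha256sum`.
verify_sha256() {
  local file="$1" expected="$2" actual
  if [ ! -r "$file" ]; then
    echo "cannot read: $file" >&2
    return 2
  fi
  # Reading from stdin makes sha256sum print "<digest>  -"; keep the digest.
  actual=$(sha256sum < "$file")
  actual=${actual%% *}
  # Published digests are uppercase hex; sha256sum prints lowercase.
  expected=$(printf '%s' "$expected" | tr '[:upper:]' '[:lower:]')
  if [ "$actual" = "$expected" ]; then
    echo "SHA256 OK: $file"
  else
    echo "SHA256 MISMATCH: $file" >&2
    echo "  expected: $expected" >&2
    echo "  actual:   $actual" >&2
    return 1
  fi
}

# Example:
# verify_sha256 securityonion-2.4.5-20230807.iso \
#   D333BAE0DD198DFD80DF59375456D228A4E18A24EDCDB15852CD4CA3F92B69A7
```

The function returns 0 on a match, 1 on a mismatch, and 2 if the file cannot be read, so it can gate later steps in a script.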

@@ -49,4 +49,4 @@ Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013

|

|||||||

```

|

```

|

||||||

|

|

||||||

Once you've verified the ISO image, you're ready to proceed to our Installation guide:

|

Once you've verified the ISO image, you're ready to proceed to our Installation guide:

|

||||||

https://docs.securityonion.net/en/2.3/installation.html

|

https://docs.securityonion.net/en/2.4/installation.html

|

||||||
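The manual check described above can also be scripted: look for "Good signature", then compare the Primary key fingerprint. The following is a minimal Python sketch. It assumes `gpg` is on the PATH and that the Security Onion signing key has already been imported. `check_gpg_output`, `verify_iso` and `EXPECTED_FINGERPRINT` are hypothetical names and are not part of Security Onion. The fingerprint constant is the one quoted in the diff, with the spaces removed.

```python
import re
import subprocess

# Fingerprint quoted in the verification instructions (spaces removed).
# Hypothetical constant name, used only for this sketch.
EXPECTED_FINGERPRINT = "C804A93D36BE0C733EA196447C1060B7FE507013"


def check_gpg_output(output: str, expected: str = EXPECTED_FINGERPRINT) -> bool:
    """Return True if gpg reported a good signature from the expected primary key."""
    if "Good signature" not in output:
        return False
    match = re.search(r"Primary key fingerprint:\s*([0-9A-Fa-f ]+)", output)
    if match is None:
        return False
    # gpg prints the fingerprint in space-separated groups; compare without spaces.
    found = match.group(1).replace(" ", "").upper()
    return found == expected.upper()


def verify_iso(iso_path: str) -> bool:
    """Run `gpg --verify ISO.sig ISO` and check its report (gpg writes it to stderr)."""
    result = subprocess.run(
        ["gpg", "--verify", iso_path + ".sig", iso_path],
        capture_output=True,
        text=True,
    )
    return result.returncode == 0 and check_gpg_output(result.stderr)
```

Compare the full 40-character fingerprint rather than the short key ID (FE507013), because short IDs are easy to collide. The trust warnings in the sample output do not affect the result. They only mean that the key has not been certified locally.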

@@ -1,20 +1,26 @@

|

|||||||

## Security Onion 2.4

|

## Security Onion 2.4 Release Candidate 2 (RC2)

|

||||||

|

|

||||||

Security Onion 2.4 is here!

|

Security Onion 2.4 Release Candidate 2 (RC2) is here!

|

||||||

|

|

||||||

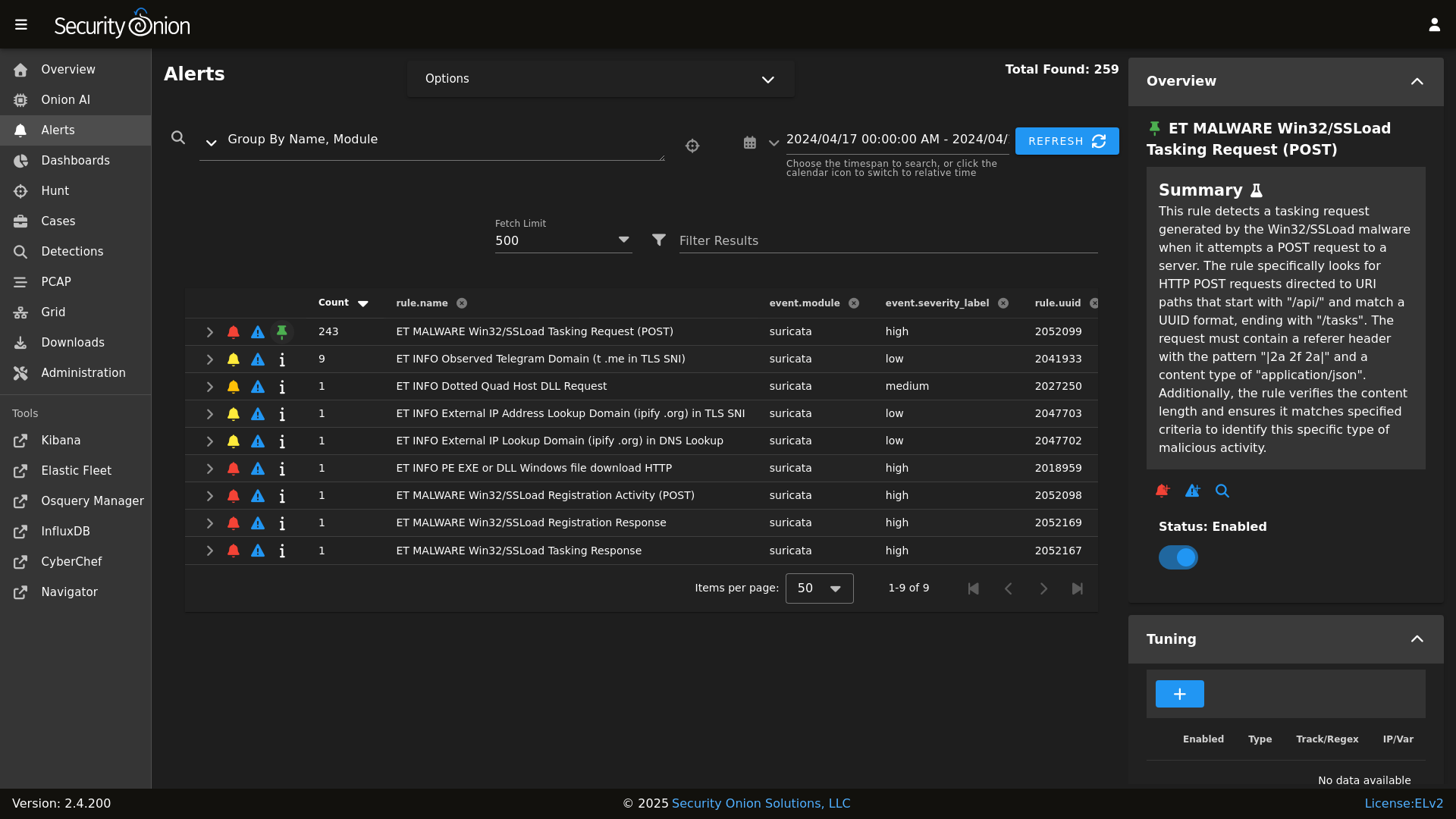

## Screenshots

|

## Screenshots

|

||||||

|

|

||||||

Alerts

|

Alerts

|

||||||

|

|

||||||

|

|

||||||

Dashboards

|

Dashboards

|

||||||

|

|

||||||

|

|

||||||

Hunt

|

Hunt

|

||||||

|

|

||||||

|

|

||||||

Cases

|

PCAP

|

||||||

|

|

||||||

|

|

||||||

|

Grid

|

||||||

|

|

||||||

|

|

||||||

|

Config

|

||||||

|

|

||||||

|

|

||||||

### Release Notes

|

### Release Notes

|

||||||

|

|

||||||

|

|||||||

@@ -1,13 +0,0 @@

|

|||||||

logrotate:

|

|

||||||

conf: |

|

|

||||||

daily

|

|

||||||

rotate 14

|

|

||||||

missingok

|

|

||||||

copytruncate

|

|

||||||

compress

|

|

||||||

create

|

|

||||||

extension .log

|

|

||||||

dateext

|

|

||||||

dateyesterday

|

|

||||||

group_conf: |

|

|

||||||

su root socore

|

|

||||||

@@ -1,42 +0,0 @@

|

|||||||

logstash:

|

|

||||||

pipelines:

|

|

||||||

helix:

|

|

||||||

config:

|

|

||||||

- so/0010_input_hhbeats.conf

|

|

||||||

- so/1033_preprocess_snort.conf

|

|

||||||

- so/1100_preprocess_bro_conn.conf

|

|

||||||

- so/1101_preprocess_bro_dhcp.conf

|

|

||||||

- so/1102_preprocess_bro_dns.conf

|

|

||||||

- so/1103_preprocess_bro_dpd.conf

|

|

||||||

- so/1104_preprocess_bro_files.conf

|

|

||||||

- so/1105_preprocess_bro_ftp.conf

|

|

||||||

- so/1106_preprocess_bro_http.conf

|

|

||||||

- so/1107_preprocess_bro_irc.conf

|

|

||||||

- so/1108_preprocess_bro_kerberos.conf

|

|

||||||

- so/1109_preprocess_bro_notice.conf

|

|

||||||

- so/1110_preprocess_bro_rdp.conf

|

|

||||||

- so/1111_preprocess_bro_signatures.conf

|

|

||||||

- so/1112_preprocess_bro_smtp.conf

|

|

||||||

- so/1113_preprocess_bro_snmp.conf

|

|

||||||

- so/1114_preprocess_bro_software.conf

|

|

||||||

- so/1115_preprocess_bro_ssh.conf

|

|

||||||

- so/1116_preprocess_bro_ssl.conf

|

|

||||||

- so/1117_preprocess_bro_syslog.conf

|

|

||||||

- so/1118_preprocess_bro_tunnel.conf

|

|

||||||

- so/1119_preprocess_bro_weird.conf

|

|

||||||

- so/1121_preprocess_bro_mysql.conf

|

|

||||||

- so/1122_preprocess_bro_socks.conf

|

|

||||||

- so/1123_preprocess_bro_x509.conf

|

|

||||||

- so/1124_preprocess_bro_intel.conf

|

|

||||||

- so/1125_preprocess_bro_modbus.conf

|

|

||||||

- so/1126_preprocess_bro_sip.conf

|

|

||||||

- so/1127_preprocess_bro_radius.conf

|

|

||||||

- so/1128_preprocess_bro_pe.conf

|

|

||||||

- so/1129_preprocess_bro_rfb.conf

|

|

||||||

- so/1130_preprocess_bro_dnp3.conf

|

|

||||||

- so/1131_preprocess_bro_smb_files.conf

|

|

||||||

- so/1132_preprocess_bro_smb_mapping.conf

|

|

||||||

- so/1133_preprocess_bro_ntlm.conf

|

|

||||||

- so/1134_preprocess_bro_dce_rpc.conf

|

|

||||||

- so/8001_postprocess_common_ip_augmentation.conf

|

|

||||||

- so/9997_output_helix.conf.jinja

|

|

||||||

@@ -4,6 +4,7 @@ logstash:

|

|||||||

- 0.0.0.0:3765:3765

|

- 0.0.0.0:3765:3765

|

||||||

- 0.0.0.0:5044:5044

|

- 0.0.0.0:5044:5044

|

||||||

- 0.0.0.0:5055:5055

|

- 0.0.0.0:5055:5055

|

||||||

|

- 0.0.0.0:5056:5056

|

||||||

- 0.0.0.0:5644:5644

|

- 0.0.0.0:5644:5644

|

||||||

- 0.0.0.0:6050:6050

|

- 0.0.0.0:6050:6050

|

||||||

- 0.0.0.0:6051:6051

|

- 0.0.0.0:6051:6051

|

||||||

|

|||||||

@@ -1,8 +0,0 @@

|

|||||||

logstash:

|

|

||||||

pipelines:

|

|

||||||

manager:

|

|

||||||

config:

|

|

||||||

- so/0011_input_endgame.conf

|

|

||||||

- so/0012_input_elastic_agent.conf

|

|

||||||

- so/9999_output_redis.conf.jinja

|

|

||||||

|

|

||||||

@@ -2,7 +2,7 @@

|

|||||||

{% set cached_grains = salt.saltutil.runner('cache.grains', tgt='*') %}

|

{% set cached_grains = salt.saltutil.runner('cache.grains', tgt='*') %}

|

||||||

{% for minionid, ip in salt.saltutil.runner(

|

{% for minionid, ip in salt.saltutil.runner(

|

||||||

'mine.get',

|

'mine.get',

|

||||||

tgt='G@role:so-manager or G@role:so-managersearch or G@role:so-standalone or G@role:so-searchnode or G@role:so-heavynode or G@role:so-receiver or G@role:so-helix ',

|

tgt='G@role:so-manager or G@role:so-managersearch or G@role:so-standalone or G@role:so-searchnode or G@role:so-heavynode or G@role:so-receiver or G@role:so-fleet ',

|

||||||

fun='network.ip_addrs',

|

fun='network.ip_addrs',

|

||||||

tgt_type='compound') | dictsort()

|

tgt_type='compound') | dictsort()

|

||||||

%}

|

%}

|

||||||

|

|||||||

@@ -1,8 +0,0 @@

|

|||||||

logstash:

|

|

||||||

pipelines:

|

|

||||||

receiver:

|

|

||||||

config:

|

|

||||||

- so/0011_input_endgame.conf

|

|

||||||

- so/0012_input_elastic_agent.conf

|

|

||||||

- so/9999_output_redis.conf.jinja

|

|

||||||

|

|

||||||

@@ -1,7 +0,0 @@

|

|||||||

logstash:

|

|

||||||

pipelines:

|

|

||||||

search:

|

|

||||||

config:

|

|

||||||

- so/0900_input_redis.conf.jinja

|

|

||||||

- so/9805_output_elastic_agent.conf.jinja

|

|

||||||

- so/9900_output_endgame.conf.jinja

|

|

||||||

@@ -0,0 +1,14 @@

|

|||||||

|

# Copyright Jason Ertel (github.com/jertel).

|

||||||

|

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||||

|

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||||

|

# https://securityonion.net/license; you may not use this file except in compliance with

|

||||||

|

# the Elastic License 2.0.

|

||||||

|

|

||||||

|

# Note: Per the Elastic License 2.0, the second limitation states:

|

||||||

|

#

|

||||||

|

# "You may not move, change, disable, or circumvent the license key functionality

|

||||||

|

# in the software, and you may not remove or obscure any functionality in the

|

||||||

|

# software that is protected by the license key."

|

||||||

|

|

||||||

|

# This file is generated by Security Onion and contains a list of license-enabled features.

|

||||||

|

features: []

|

||||||

+141

-65

@@ -1,44 +1,26 @@

|

|||||||

base:

|

base:

|

||||||

'*':

|

'*':

|

||||||

- patch.needs_restarting

|

- global.soc_global

|

||||||

- ntp.soc_ntp

|

- global.adv_global

|

||||||

- ntp.adv_ntp

|

|

||||||

- logrotate

|

|

||||||

- docker.soc_docker

|

- docker.soc_docker

|

||||||

- docker.adv_docker

|

- docker.adv_docker

|

||||||

|

- firewall.soc_firewall

|

||||||

|

- firewall.adv_firewall

|

||||||

|

- influxdb.token

|

||||||

|

- logrotate.soc_logrotate

|

||||||

|

- logrotate.adv_logrotate

|

||||||

|

- nginx.soc_nginx

|

||||||

|

- nginx.adv_nginx

|

||||||

|

- node_data.ips

|

||||||

|

- ntp.soc_ntp

|

||||||

|

- ntp.adv_ntp

|

||||||

|

- patch.needs_restarting

|

||||||

|

- patch.soc_patch

|

||||||

|

- patch.adv_patch

|

||||||

- sensoroni.soc_sensoroni

|

- sensoroni.soc_sensoroni

|

||||||

- sensoroni.adv_sensoroni

|

- sensoroni.adv_sensoroni

|

||||||

- telegraf.soc_telegraf

|

- telegraf.soc_telegraf

|

||||||

- telegraf.adv_telegraf

|

- telegraf.adv_telegraf

|

||||||

- influxdb.token

|

|

||||||

- node_data.ips

|

|

||||||

|

|

||||||

'* and not *_eval and not *_import':

|

|

||||||

- logstash.nodes

|

|

||||||

|

|

||||||

'*_eval or *_heavynode or *_sensor or *_standalone or *_import':

|

|

||||||

- match: compound

|

|

||||||

- zeek

|

|

||||||

- bpf.soc_bpf

|

|

||||||

- bpf.adv_bpf

|

|

||||||

|

|

||||||

'*_managersearch or *_heavynode':

|

|

||||||

- match: compound

|

|

||||||

- logstash

|

|

||||||

- logstash.manager

|

|

||||||

- logstash.search

|

|

||||||

- logstash.soc_logstash

|

|

||||||

- logstash.adv_logstash

|

|

||||||

- elasticsearch.index_templates

|

|

||||||

- elasticsearch.soc_elasticsearch

|

|

||||||

- elasticsearch.adv_elasticsearch

|

|

||||||

|

|

||||||

'*_manager':

|

|

||||||

- logstash

|

|

||||||

- logstash.manager

|

|

||||||

- logstash.soc_logstash

|

|

||||||

- logstash.adv_logstash

|

|

||||||

- elasticsearch.index_templates

|

|

||||||

|

|

||||||

'*_manager or *_managersearch':

|

'*_manager or *_managersearch':

|

||||||

- match: compound

|

- match: compound

|

||||||

@@ -49,14 +31,20 @@ base:

|

|||||||

- kibana.secrets

|

- kibana.secrets

|

||||||

{% endif %}

|

{% endif %}

|

||||||

- secrets

|

- secrets

|

||||||

- soc_global

|

|

||||||

- adv_global

|

|

||||||

- manager.soc_manager

|

- manager.soc_manager

|

||||||

- manager.adv_manager

|

- manager.adv_manager

|

||||||

- idstools.soc_idstools

|

- idstools.soc_idstools

|

||||||

- idstools.adv_idstools

|

- idstools.adv_idstools

|

||||||

|

- logstash.nodes

|

||||||

|

- logstash.soc_logstash

|

||||||

|

- logstash.adv_logstash

|

||||||

- soc.soc_soc

|

- soc.soc_soc

|

||||||

- soc.adv_soc

|

- soc.adv_soc

|

||||||

|

- soc.license

|

||||||

|

- soctopus.soc_soctopus

|

||||||

|

- soctopus.adv_soctopus

|

||||||

|

- kibana.soc_kibana

|

||||||

|

- kibana.adv_kibana

|

||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

- kratos.adv_kratos

|

- kratos.adv_kratos

|

||||||

- redis.soc_redis

|

- redis.soc_redis

|

||||||

@@ -65,17 +53,31 @@ base:

|

|||||||

- influxdb.adv_influxdb

|

- influxdb.adv_influxdb

|

||||||

- elasticsearch.soc_elasticsearch

|

- elasticsearch.soc_elasticsearch

|

||||||

- elasticsearch.adv_elasticsearch

|

- elasticsearch.adv_elasticsearch

|

||||||

|

- elasticfleet.soc_elasticfleet

|

||||||

|

- elasticfleet.adv_elasticfleet

|

||||||

|

- elastalert.soc_elastalert

|

||||||

|

- elastalert.adv_elastalert

|

||||||

- backup.soc_backup

|

- backup.soc_backup

|

||||||

- backup.adv_backup

|

- backup.adv_backup

|

||||||

- firewall.soc_firewall

|

- curator.soc_curator

|

||||||

- firewall.adv_firewall

|

- curator.adv_curator

|

||||||

|

- soctopus.soc_soctopus

|

||||||

|

- soctopus.adv_soctopus

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_sensor':

|

'*_sensor':

|

||||||

- healthcheck.sensor

|

- healthcheck.sensor

|

||||||

- soc_global

|

- strelka.soc_strelka

|

||||||

- adv_global

|

- strelka.adv_strelka

|

||||||

|

- zeek.soc_zeek

|

||||||

|

- zeek.adv_zeek

|

||||||

|

- bpf.soc_bpf

|

||||||

|

- bpf.adv_bpf

|

||||||

|

- pcap.soc_pcap

|

||||||

|

- pcap.adv_pcap

|

||||||

|

- suricata.soc_suricata

|

||||||

|

- suricata.adv_suricata

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

@@ -89,15 +91,28 @@ base:

|

|||||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/kibana/secrets.sls') %}

|

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/kibana/secrets.sls') %}

|

||||||

- kibana.secrets

|

- kibana.secrets

|

||||||

{% endif %}

|

{% endif %}

|

||||||

- soc_global

|

|

||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

- elasticsearch.soc_elasticsearch

|

- elasticsearch.soc_elasticsearch

|

||||||

- elasticsearch.adv_elasticsearch

|

- elasticsearch.adv_elasticsearch

|

||||||

|

- elasticfleet.soc_elasticfleet

|

||||||

|

- elasticfleet.adv_elasticfleet

|

||||||

|

- elastalert.soc_elastalert

|

||||||

|

- elastalert.adv_elastalert

|

||||||

- manager.soc_manager

|

- manager.soc_manager

|

||||||

- manager.adv_manager

|

- manager.adv_manager

|

||||||

- idstools.soc_idstools

|

- idstools.soc_idstools

|

||||||

- idstools.adv_idstools

|

- idstools.adv_idstools

|

||||||

- soc.soc_soc

|

- soc.soc_soc

|

||||||

|

- soc.adv_soc

|

||||||

|

- soc.license

|

||||||

|

- soctopus.soc_soctopus

|

||||||

|

- soctopus.adv_soctopus

|

||||||

|

- kibana.soc_kibana

|

||||||

|

- kibana.adv_kibana

|

||||||

|

- strelka.soc_strelka

|

||||||

|

- strelka.adv_strelka

|

||||||

|

- curator.soc_curator

|

||||||

|

- curator.adv_curator

|

||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

- kratos.adv_kratos

|

- kratos.adv_kratos

|

||||||

- redis.soc_redis

|

- redis.soc_redis

|

||||||

@@ -106,15 +121,19 @@ base:

|

|||||||

- influxdb.adv_influxdb

|

- influxdb.adv_influxdb

|

||||||

- backup.soc_backup

|

- backup.soc_backup

|

||||||

- backup.adv_backup

|

- backup.adv_backup

|

||||||

- firewall.soc_firewall

|

- zeek.soc_zeek

|

||||||

- firewall.adv_firewall

|

- zeek.adv_zeek

|

||||||

|

- bpf.soc_bpf

|

||||||

|

- bpf.adv_bpf

|

||||||

|

- pcap.soc_pcap

|

||||||

|

- pcap.adv_pcap

|

||||||

|

- suricata.soc_suricata

|

||||||

|

- suricata.adv_suricata

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_standalone':

|

'*_standalone':

|

||||||

- logstash

|

- logstash.nodes

|

||||||

- logstash.manager

|

|

||||||

- logstash.search

|

|

||||||

- logstash.soc_logstash

|

- logstash.soc_logstash

|

||||||

- logstash.adv_logstash

|

- logstash.adv_logstash

|

||||||

- elasticsearch.index_templates

|

- elasticsearch.index_templates

|

||||||

@@ -126,7 +145,6 @@ base:

|

|||||||

{% endif %}

|

{% endif %}

|

||||||

- secrets

|

- secrets

|

||||||

- healthcheck.standalone

|

- healthcheck.standalone

|

||||||

- soc_global

|

|

||||||

- idstools.soc_idstools

|

- idstools.soc_idstools

|

||||||

- idstools.adv_idstools

|

- idstools.adv_idstools

|

||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

@@ -137,51 +155,82 @@ base:

|

|||||||

- influxdb.adv_influxdb

|

- influxdb.adv_influxdb

|

||||||

- elasticsearch.soc_elasticsearch

|

- elasticsearch.soc_elasticsearch

|

||||||

- elasticsearch.adv_elasticsearch

|

- elasticsearch.adv_elasticsearch

|

||||||

|

- elasticfleet.soc_elasticfleet

|

||||||

|

- elasticfleet.adv_elasticfleet

|

||||||

|

- elastalert.soc_elastalert

|

||||||

|

- elastalert.adv_elastalert

|

||||||

- manager.soc_manager

|

- manager.soc_manager

|

||||||

- manager.adv_manager

|

- manager.adv_manager

|

||||||

- soc.soc_soc

|

- soc.soc_soc

|

||||||

|

- soc.adv_soc

|

||||||

|

- soc.license

|

||||||

|

- soctopus.soc_soctopus

|

||||||

|

- soctopus.adv_soctopus

|

||||||

|

- kibana.soc_kibana

|

||||||

|

- kibana.adv_kibana

|

||||||

|

- strelka.soc_strelka

|

||||||

|

- strelka.adv_strelka

|

||||||

|

- curator.soc_curator

|

||||||

|

- curator.adv_curator

|

||||||

- backup.soc_backup

|

- backup.soc_backup

|

||||||

- backup.adv_backup

|

- backup.adv_backup

|

||||||

- firewall.soc_firewall

|

- zeek.soc_zeek

|

||||||

- firewall.adv_firewall

|

- zeek.adv_zeek

|

||||||

|

- bpf.soc_bpf

|

||||||

|

- bpf.adv_bpf

|

||||||

|

- pcap.soc_pcap

|

||||||

|

- pcap.adv_pcap

|

||||||

|

- suricata.soc_suricata

|

||||||

|

- suricata.adv_suricata

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_heavynode':

|

'*_heavynode':

|

||||||

- elasticsearch.auth

|

- elasticsearch.auth

|

||||||

- soc_global

|

- logstash.nodes

|

||||||

|

- logstash.soc_logstash

|

||||||

|

- logstash.adv_logstash

|

||||||

|

- elasticsearch.soc_elasticsearch

|

||||||

|

- elasticsearch.adv_elasticsearch

|

||||||

|

- curator.soc_curator

|

||||||

|

- curator.adv_curator

|

||||||

- redis.soc_redis

|

- redis.soc_redis

|

||||||

|

- redis.adv_redis

|

||||||

|

- zeek.soc_zeek

|

||||||

|

- zeek.adv_zeek

|

||||||

|

- bpf.soc_bpf

|

||||||

|

- bpf.adv_bpf

|

||||||

|

- pcap.soc_pcap

|

||||||

|

- pcap.adv_pcap

|

||||||

|

- suricata.soc_suricata

|

||||||

|

- suricata.adv_suricata

|

||||||

|

- strelka.soc_strelka

|

||||||

|

- strelka.adv_strelka

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_idh':

|

'*_idh':

|

||||||

- soc_global

|

|

||||||

- adv_global

|

|

||||||

- idh.soc_idh

|

- idh.soc_idh

|

||||||

- idh.adv_idh

|

- idh.adv_idh

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_searchnode':

|

'*_searchnode':

|

||||||

- logstash

|

- logstash.nodes

|

||||||

- logstash.search

|

|

||||||

- logstash.soc_logstash

|

- logstash.soc_logstash

|

||||||

- logstash.adv_logstash

|

- logstash.adv_logstash

|

||||||

- elasticsearch.index_templates

|

|

||||||

- elasticsearch.soc_elasticsearch

|

- elasticsearch.soc_elasticsearch

|

||||||

- elasticsearch.adv_elasticsearch

|

- elasticsearch.adv_elasticsearch

|

||||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

||||||

- elasticsearch.auth

|

- elasticsearch.auth

|

||||||

{% endif %}

|

{% endif %}

|

||||||

- redis.soc_redis

|

- redis.soc_redis

|

||||||

- soc_global

|

- redis.adv_redis

|

||||||

- adv_global

|

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_receiver':

|

'*_receiver':

|

||||||

- logstash

|

- logstash.nodes

|

||||||

- logstash.receiver

|

|

||||||

- logstash.soc_logstash

|

- logstash.soc_logstash

|

||||||

- logstash.adv_logstash

|

- logstash.adv_logstash

|

||||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

||||||

@@ -189,8 +238,6 @@ base:

|

|||||||

{% endif %}

|

{% endif %}

|

||||||

- redis.soc_redis

|

- redis.soc_redis

|

||||||

- redis.adv_redis

|

- redis.adv_redis

|

||||||

- soc_global

|

|

||||||

- adv_global

|

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

@@ -206,11 +253,21 @@ base:

|

|||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

- elasticsearch.soc_elasticsearch

|

- elasticsearch.soc_elasticsearch

|

||||||

- elasticsearch.adv_elasticsearch

|

- elasticsearch.adv_elasticsearch

|

||||||

|

- elasticfleet.soc_elasticfleet

|

||||||

|

- elasticfleet.adv_elasticfleet

|

||||||

|

- elastalert.soc_elastalert

|

||||||

|

- elastalert.adv_elastalert

|

||||||

- manager.soc_manager

|

- manager.soc_manager

|

||||||

- manager.adv_manager

|

- manager.adv_manager

|

||||||

- soc.soc_soc

|

- soc.soc_soc

|

||||||

- soc_global

|

- soc.adv_soc

|

||||||

- adv_global

|

- soc.license

|

||||||

|

- soctopus.soc_soctopus

|

||||||

|

- soctopus.adv_soctopus

|

||||||

|

- kibana.soc_kibana

|

||||||

|

- kibana.adv_kibana

|

||||||

|

- curator.soc_curator

|

||||||

|

- curator.adv_curator

|

||||||

- backup.soc_backup

|

- backup.soc_backup

|

||||||

- backup.adv_backup

|

- backup.adv_backup

|

||||||

- kratos.soc_kratos

|

- kratos.soc_kratos

|

||||||

@@ -219,11 +276,30 @@ base:

|

|||||||

- redis.adv_redis

|

- redis.adv_redis

|

||||||

- influxdb.soc_influxdb

|

- influxdb.soc_influxdb

|

||||||

- influxdb.adv_influxdb

|

- influxdb.adv_influxdb

|

||||||

- firewall.soc_firewall

|

- zeek.soc_zeek

|

||||||

- firewall.adv_firewall

|

- zeek.adv_zeek

|

||||||

|

- bpf.soc_bpf

|

||||||

|

- bpf.adv_bpf

|

||||||

|

- pcap.soc_pcap

|

||||||

|

- pcap.adv_pcap

|

||||||

|

- suricata.soc_suricata

|

||||||

|

- suricata.adv_suricata

|

||||||

|

- strelka.soc_strelka

|

||||||

|

- strelka.adv_strelka

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

'*_workstation':

|

'*_fleet':

|

||||||

|

- backup.soc_backup

|

||||||

|

- backup.adv_backup

|

||||||

|

- logstash.nodes

|

||||||

|

- logstash.soc_logstash

|

||||||

|

- logstash.adv_logstash

|

||||||

|

- elasticfleet.soc_elasticfleet

|

||||||

|

- elasticfleet.adv_elasticfleet

|

||||||

|

- minions.{{ grains.id }}

|

||||||

|

- minions.adv_{{ grains.id }}

|

||||||

|

|

||||||

|

'*_desktop':

|

||||||

- minions.{{ grains.id }}

|

- minions.{{ grains.id }}

|

||||||

- minions.adv_{{ grains.id }}

|

- minions.adv_{{ grains.id }}

|

||||||

|

|||||||
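The pillar top file above assigns SLS files by matching minion IDs against keys such as '*_sensor'. By default Salt treats each key as a glob. `- match: compound` turns a key into a compound expression such as '*_manager or *_managersearch'. The following simplified Python approximation is a rough illustration only. It assumes that minion IDs end in the node role, as these patterns imply. It handles only bare globs joined by `and`, `or` and `not`. Salt's real compound matcher also supports typed targets, like the `G@role:...` expression in the mine.get call, as well as parentheses. This sketch supports neither. `matches_compound`, `targets_for` and `NEW_TARGETS` are hypothetical names.

```python
from fnmatch import fnmatchcase


def matches_glob(minion_id: str, pattern: str) -> bool:
    """Shell-style glob match on the minion ID (case-sensitive)."""
    return fnmatchcase(minion_id, pattern)


def matches_compound(minion_id: str, expr: str) -> bool:
    """Evaluate globs joined by 'and', 'or', 'not' (precedence: not > and > or).

    Simplified: no parentheses and no typed matchers such as G@ or I@.
    """
    # Split the token stream on 'or'; each clause is an 'and' chain.
    clauses = [[]]
    for tok in expr.split():
        if tok == "or":
            clauses.append([])
        else:
            clauses[-1].append(tok)

    def eval_clause(clause):
        if not clause:
            return False
        result = True
        negate = False
        for tok in clause:
            if tok == "and":
                continue
            if tok == "not":
                negate = not negate
                continue
            value = matches_glob(minion_id, tok)
            # value != negate flips the match when 'not' preceded this glob.
            result = result and (value != negate)
            negate = False
        return result

    return any(eval_clause(c) for c in clauses)


# A few keys from the new pillar top file; True means the key uses '- match: compound'.
NEW_TARGETS = {
    "*": False,
    "*_manager or *_managersearch": True,
    "*_sensor": False,
    "*_searchnode": False,
    "*_fleet": False,
}


def targets_for(minion_id: str, targets=NEW_TARGETS):
    """List the top-file keys (in file order) that would select this minion."""
    matched = []
    for expr, compound in targets.items():
        ok = matches_compound(minion_id, expr) if compound else matches_glob(minion_id, expr)
        if ok:
            matched.append(expr)
    return matched
```

For example, `targets_for("so01_managersearch")` selects '*' and '*_manager or *_managersearch'. It does not match '*_manager' on its own, because a glob has to match the whole ID.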

@@ -3,14 +3,14 @@ import subprocess

|

|||||||

|

|

||||||

def check():

|

def check():

|

||||||

|

|

||||||

os = __grains__['os']

|

osfam = __grains__['os_family']

|

||||||

retval = 'False'

|

retval = 'False'

|

||||||

|

|

||||||

if os == 'Ubuntu':

|

if osfam == 'Debian':

|

||||||

if path.exists('/var/run/reboot-required'):

|

if path.exists('/var/run/reboot-required'):

|

||||||

retval = 'True'

|

retval = 'True'

|

||||||

|

|

||||||

elif os == 'Rocky':

|

elif osfam == 'RedHat':

|

||||||

cmd = 'needs-restarting -r > /dev/null 2>&1'

|

cmd = 'needs-restarting -r > /dev/null 2>&1'

|

||||||

|

|

||||||

try:

|

try:

|

||||||

|

|||||||
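The change above switches the reboot check from the `os` grain ('Ubuntu' / 'Rocky') to `os_family` ('Debian' / 'RedHat'). Any distribution that reports one of those families now takes the same branch. The diff cuts off at `try:`, so the Python sketch below fills in the rest under a stated assumption: the RedHat branch runs `needs-restarting -r` and treats exit code 1 as "reboot required", which is how that command reports a pending reboot. Grains are passed in as a plain dict instead of Salt's injected `__grains__`. `needs_restart`, `flag_file` and `run` are hypothetical names, added so the sketch can run outside Salt.

```python
import subprocess
from os import path


def needs_restart(grains: dict, flag_file: str = "/var/run/reboot-required",
                  run=subprocess.run) -> str:
    """Return 'True' or 'False' as strings, mirroring the retval values in the diff."""
    osfam = grains.get("os_family")
    retval = "False"
    if osfam == "Debian":
        # Debian-family systems create this flag file when a reboot is pending.
        if path.exists(flag_file):
            retval = "True"
    elif osfam == "RedHat":
        cmd = "needs-restarting -r > /dev/null 2>&1"
        try:
            # Assumption: exit code 1 means a full reboot is required.
            result = run(cmd, shell=True)
            if result.returncode == 1:
                retval = "True"
        except OSError:
            # Leave retval as 'False' if the command cannot be started.
            pass
    return retval
```

Injecting `run` and `flag_file` keeps the OS-family logic testable without rebooting anything or depending on the host's package tools.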

@@ -3,16 +3,6 @@

|

|||||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||||

# Elastic License 2.0.

|

# Elastic License 2.0.

|

||||||

|

|

||||||

|

|

||||||

{% set ZEEKVER = salt['pillar.get']('global:mdengine', '') %}

|

|

||||||

{% set PLAYBOOK = salt['pillar.get']('manager:playbook', '0') %}

|

|

||||||

{% set ELASTALERT = salt['pillar.get']('elastalert:enabled', True) %}

|

|

||||||

{% set ELASTICSEARCH = salt['pillar.get']('elasticsearch:enabled', True) %}

|

|

||||||

{% set KIBANA = salt['pillar.get']('kibana:enabled', True) %}

|

|

||||||

{% set LOGSTASH = salt['pillar.get']('logstash:enabled', True) %}

|

|

||||||

{% set CURATOR = salt['pillar.get']('curator:enabled', True) %}

|

|

||||||

{% set REDIS = salt['pillar.get']('redis:enabled', True) %}

|

|

||||||

{% set STRELKA = salt['pillar.get']('strelka:enabled', '0') %}

|

|

||||||

{% set ISAIRGAP = salt['pillar.get']('global:airgap', False) %}

|

{% set ISAIRGAP = salt['pillar.get']('global:airgap', False) %}

|

||||||

{% import_yaml 'salt/minion.defaults.yaml' as saltversion %}

|

{% import_yaml 'salt/minion.defaults.yaml' as saltversion %}

|

||||||

{% set saltversion = saltversion.salt.minion.version %}

|

{% set saltversion = saltversion.salt.minion.version %}

|

||||||

@@ -35,6 +25,7 @@

|

|||||||

'soc',

|

'soc',

|

||||||

'kratos',

|

'kratos',

|

||||||

'elasticfleet',

|

'elasticfleet',

|

||||||

|

'elastic-fleet-package-registry',

|

||||||

'firewall',

|

'firewall',

|

||||||

'idstools',

|

'idstools',

|

||||||

'suricata.manager',

|

'suricata.manager',

|

||||||

@@ -55,23 +46,7 @@

|

|||||||

'pcap',

|

'pcap',

|

||||||

'suricata',

|

'suricata',

|

||||||

'healthcheck',

|

'healthcheck',

|

||||||

'schedule',

|

'elasticagent',

|

||||||

'tcpreplay',

|

|

||||||

'docker_clean'

|

|

||||||

],

|

|

||||||

'so-helixsensor': [

|

|

||||||

'salt.master',

|

|

||||||

'ca',

|

|

||||||

'ssl',

|

|

||||||

'registry',

|

|

||||||

'telegraf',

|

|

||||||

'firewall',

|

|

||||||

'idstools',

|

|

||||||

'suricata.manager',

|

|

||||||

'zeek',

|

|

||||||

'redis',

|

|

||||||

'elasticsearch',

|

|

||||||

'logstash',

|

|

||||||

'schedule',

|

'schedule',

|

||||||

'tcpreplay',

|

'tcpreplay',

|

||||||

'docker_clean'

|

'docker_clean'

|

||||||

@@ -105,7 +80,8 @@

|

|||||||

'schedule',

|

'schedule',

|

||||||

'tcpreplay',

|

'tcpreplay',

|

||||||

'docker_clean',

|

'docker_clean',

|

||||||

'elasticfleet'

|

'elasticfleet',

|

||||||

|

'elastic-fleet-package-registry'

|

||||||

],

|

],

|

||||||

'so-manager': [

|

'so-manager': [

|

||||||

'salt.master',

|

'salt.master',

|

||||||

@@ -119,6 +95,7 @@

|

|||||||

'soc',

|

'soc',

|

||||||

'kratos',

|

'kratos',

|

||||||

'elasticfleet',

|

'elasticfleet',

|

||||||

|

'elastic-fleet-package-registry',

|

||||||

'firewall',

|

'firewall',

|

||||||

'idstools',

|

'idstools',

|

||||||

'suricata.manager',

|

'suricata.manager',

|

||||||

@@ -137,6 +114,7 @@

|

|||||||

'influxdb',

|

'influxdb',

|

||||||

'soc',

|

'soc',

|

||||||

'kratos',

|

'kratos',

|

||||||

|

'elastic-fleet-package-registry',

|

||||||

'elasticfleet',