mirror of

https://github.com/Security-Onion-Solutions/securityonion.git

synced 2026-03-18 10:46:29 +01:00

Compare commits

21 Commits

mreeves/re

...

2.4/dev

| Author | SHA1 | Date | |
|---|---|---|---|
| | 9ddd01748c | | |
| | 89e470059e | | |
| | 79b30e43d9 | | |
| | 5cebce32f7 | | |
| | 810681c92e | | |
| | 51f9104d0f | | |
| | 8da5ed673b | | |
| | 83ba40b548 | | |
| | 7de8528b34 | | |
| | e6bd57e08d | | |
| | 06664440ad | | |
| | bd31f2898b | | |
| | 5bf9d92b52 | | |
| | 48c369ed11 | | |
| | 7fec2d59a7 | | |
| | a0ad589c3a | | |
| | 0bd54e2835 | | |
| | 58f5c56b72 | | |
| | 6472c610d0 | | |
| | 179c1ea7f7 | | |
| | db964cad21 | | |

4

.github/DISCUSSION_TEMPLATE/2-4.yml

vendored


@@ -2,11 +2,13 @@ body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion are you asking about?

|

||||

description: Which version of Security Onion 2.4.x are you asking about?

|

||||

options:

|

||||

-

|

||||

- 2.4.10

|

||||

|

||||

177

.github/DISCUSSION_TEMPLATE/3-0.yml

vendored


@@ -1,177 +0,0 @@

|

||||

body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion are you asking about?

|

||||

options:

|

||||

-

|

||||

- 3.0.0

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Method

|

||||

description: How did you install Security Onion?

|

||||

options:

|

||||

-

|

||||

- Security Onion ISO image

|

||||

- Cloud image (Amazon, Azure, Google)

|

||||

- Network installation on Oracle 9 (unsupported)

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Description

|

||||

description: >

|

||||

Is this discussion about installation, configuration, upgrading, or other?

|

||||

options:

|

||||

-

|

||||

- installation

|

||||

- configuration

|

||||

- upgrading

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Type

|

||||

description: >

|

||||

When you installed, did you choose Import, Eval, Standalone, Distributed, or something else?

|

||||

options:

|

||||

-

|

||||

- Import

|

||||

- Eval

|

||||

- Standalone

|

||||

- Distributed

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Location

|

||||

description: >

|

||||

Is this deployment in the cloud, on-prem with Internet access, or airgap?

|

||||

options:

|

||||

-

|

||||

- cloud

|

||||

- on-prem with Internet access

|

||||

- airgap

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Hardware Specs

|

||||

description: >

|

||||

Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://securityonion.net/docs/hardware?

|

||||

options:

|

||||

-

|

||||

- Meets minimum requirements

|

||||

- Exceeds minimum requirements

|

||||

- Does not meet minimum requirements

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: CPU

|

||||

description: How many CPU cores do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: RAM

|

||||

description: How much RAM do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /

|

||||

description: How much storage do you have for the / partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /nsm

|

||||

description: How much storage do you have for the /nsm partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Collection

|

||||

description: >

|

||||

Are you collecting network traffic from a tap or span port?

|

||||

options:

|

||||

-

|

||||

- tap

|

||||

- span port

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Speeds

|

||||

description: >

|

||||

How much network traffic are you monitoring?

|

||||

options:

|

||||

-

|

||||

- Less than 1Gbps

|

||||

- 1Gbps to 10Gbps

|

||||

- more than 10Gbps

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Status

|

||||

description: >

|

||||

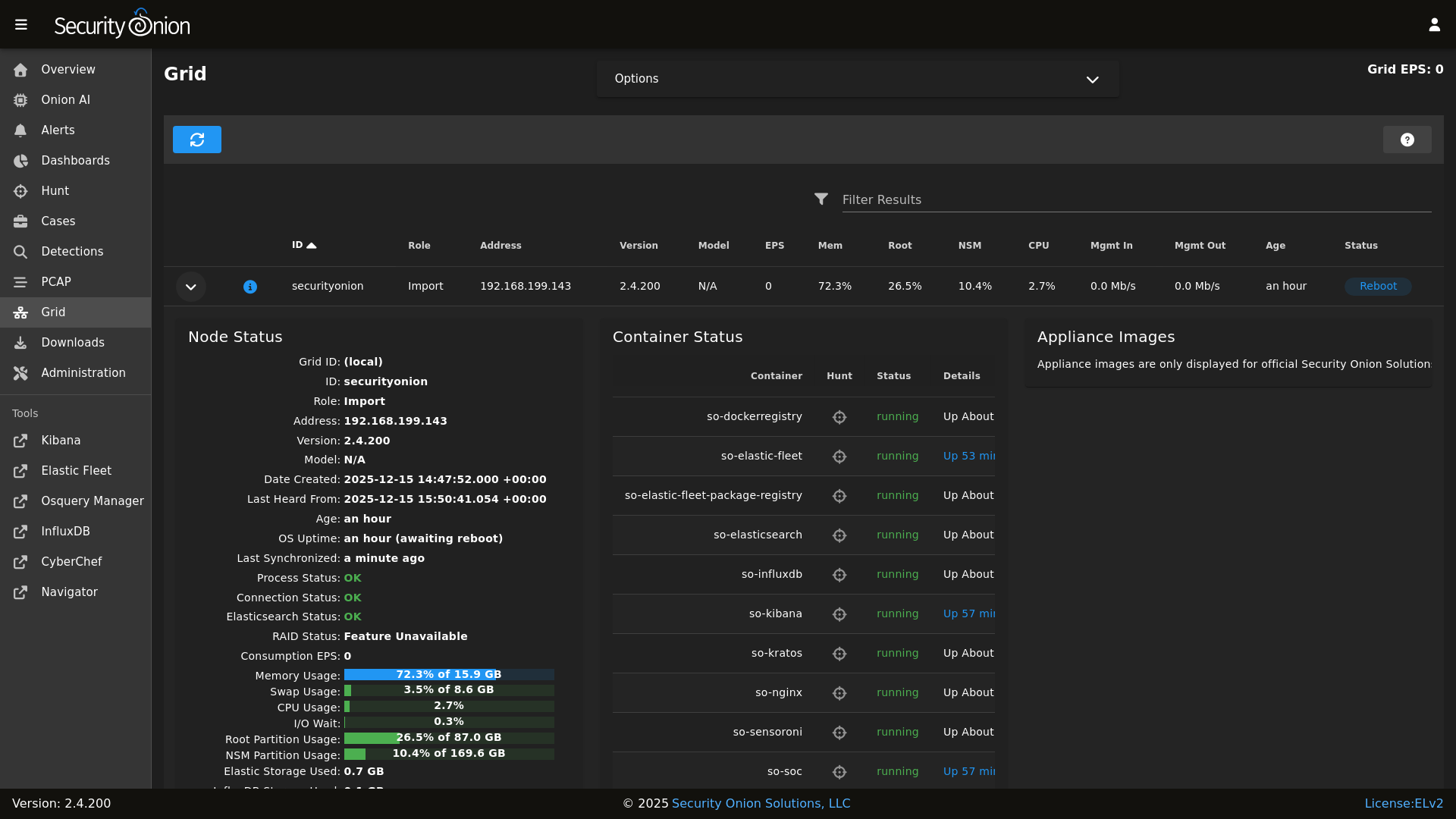

Does SOC Grid show all services on all nodes as running OK?

|

||||

options:

|

||||

-

|

||||

- Yes, all services on all nodes are running OK

|

||||

- No, one or more services are failed (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Salt Status

|

||||

description: >

|

||||

Do you get any failures when you run "sudo salt-call state.highstate"?

|

||||

options:

|

||||

-

|

||||

- Yes, there are salt failures (please provide detail below)

|

||||

- No, there are no failures

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Logs

|

||||

description: >

|

||||

Are there any additional clues in /opt/so/log/?

|

||||

options:

|

||||

-

|

||||

- Yes, there are additional clues in /opt/so/log/ (please provide detail below)

|

||||

- No, there are no additional clues

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: Detail

|

||||

description: Please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and then provide detailed information to help us help you.

|

||||

placeholder: |-

|

||||

STOP! Before typing, please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 in their entirety!

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

validations:

|

||||

required: true

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: Guidelines

|

||||

options:

|

||||

- label: I have read the discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and assert that I have followed the guidelines.

|

||||

required: true

|

||||

@@ -1,17 +1,17 @@

|

||||

### 2.4.210-20260302 ISO image released on 2026/03/02

|

||||

### 2.4.211-20260312 ISO image released on 2026/03/12

|

||||

|

||||

|

||||

### Download and Verify

|

||||

|

||||

2.4.210-20260302 ISO image:

|

||||

https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso

|

||||

2.4.211-20260312 ISO image:

|

||||

https://download.securityonion.net/file/securityonion/securityonion-2.4.211-20260312.iso

|

||||

|

||||

MD5: 575F316981891EBED2EE4E1F42A1F016

|

||||

SHA1: 600945E8823221CBC5F1C056084A71355308227E

|

||||

SHA256: A6AA6471125F07FA6E2796430E94BEAFDEF728E833E9728FDFA7106351EBC47E

|

||||

MD5: 7082210AE9FF4D2634D71EAD4DC8F7A3

|

||||

SHA1: F76E08C47FD786624B2385B4235A3D61A4C3E9DC

|

||||

SHA256: CE6E61788DFC492E4897EEDC139D698B2EDBEB6B631DE0043F66E94AF8A0FF4E

|

||||

|

||||

Signature for ISO image:

|

||||

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig

|

||||

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.211-20260312.iso.sig

|

||||

|

||||

Signing key:

|

||||

https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

|

||||
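The listing above publishes MD5, SHA1, and SHA256 digests next to each ISO link. As a minimal sketch of checking a download against the SHA256 value, assuming GNU `sha256sum` is installed: `verify_sha256` is a hypothetical helper name, not something shipped with Security Onion, and the expected digest must be copied from the listing for the ISO you actually downloaded.

```shell
# verify_sha256 FILE EXPECTED
# Hypothetical helper: succeeds when FILE's SHA256 digest equals EXPECTED.
# The comparison ignores case, since the listing prints digests in upper case
# while sha256sum prints lower case.
verify_sha256() {
    actual=$(sha256sum "$1" | awk '{print $1}' | tr '[:lower:]' '[:upper:]')
    expected=$(printf '%s' "$2" | tr '[:lower:]' '[:upper:]')
    [ "$actual" = "$expected" ]
}

# Usage (EXPECTED_SHA256 is a placeholder for the digest from the listing):
# verify_sha256 securityonion-2.4.211-20260312.iso "$EXPECTED_SHA256" && echo "SHA256 OK"
```

A digest match only shows the file was not corrupted in transit; the `gpg --verify` step described in the next hunk is what ties the image to the signing key.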

@@ -25,22 +25,22 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

|

||||

|

||||

Download the signature file for the ISO:

|

||||

```

|

||||

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig

|

||||

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.211-20260312.iso.sig

|

||||

```

|

||||

|

||||

Download the ISO image:

|

||||

```

|

||||

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso

|

||||

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.211-20260312.iso

|

||||

```

|

||||

|

||||

Verify the downloaded ISO image using the signature file:

|

||||

```

|

||||

gpg --verify securityonion-2.4.210-20260302.iso.sig securityonion-2.4.210-20260302.iso

|

||||

gpg --verify securityonion-2.4.211-20260312.iso.sig securityonion-2.4.211-20260312.iso

|

||||

```

|

||||

|

||||

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:

|

||||

```

|

||||

gpg: Signature made Mon 02 Mar 2026 11:55:24 AM EST using RSA key ID FE507013

|

||||

gpg: Signature made Wed 11 Mar 2026 03:05:09 PM EDT using RSA key ID FE507013

|

||||

gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"

|

||||

gpg: WARNING: This key is not certified with a trusted signature!

|

||||

gpg: There is no indication that the signature belongs to the owner.

|

||||

|

||||

66

README.md


@@ -1,58 +1,50 @@

|

||||

<p align="center">

|

||||

<img src="https://securityonionsolutions.com/logo/logo-so-onion-dark.svg" width="400" alt="Security Onion Logo">

|

||||

</p>

|

||||

## Security Onion 2.4

|

||||

|

||||

# Security Onion

|

||||

Security Onion 2.4 is here!

|

||||

|

||||

Security Onion is a free and open Linux distribution for threat hunting, enterprise security monitoring, and log management. It includes a comprehensive suite of tools designed to work together to provide visibility into your network and host activity.

|

||||

## Screenshots

|

||||

|

||||

## ✨ Features

|

||||

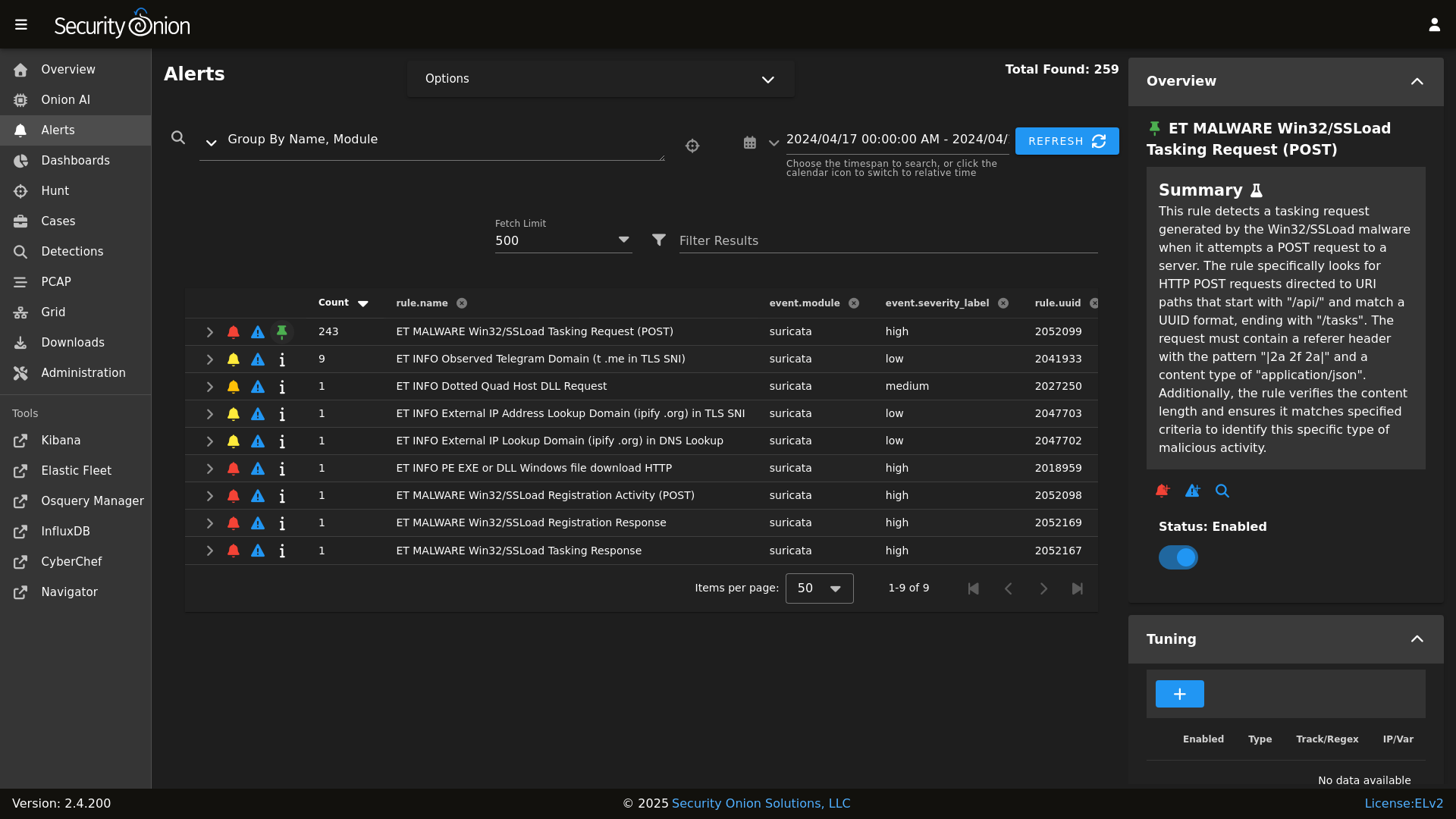

Alerts

|

||||

|

||||

|

||||

Security Onion includes everything you need to monitor your network and host systems:

|

||||

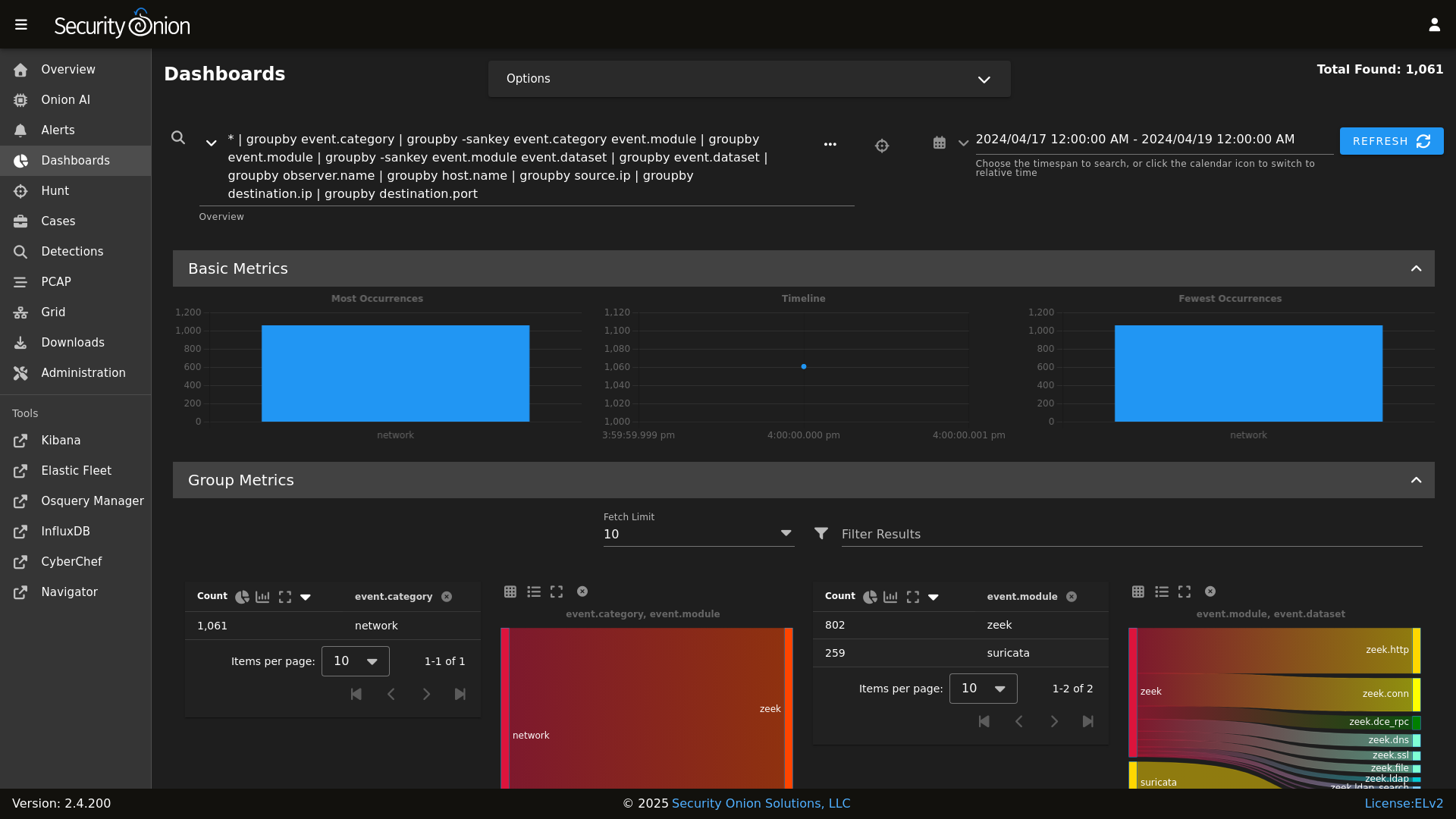

Dashboards

|

||||

|

||||

|

||||

* **Security Onion Console (SOC)**: A unified web interface for analyzing security events and managing your grid.

|

||||

* **Elastic Stack**: Powerful search backed by Elasticsearch.

|

||||

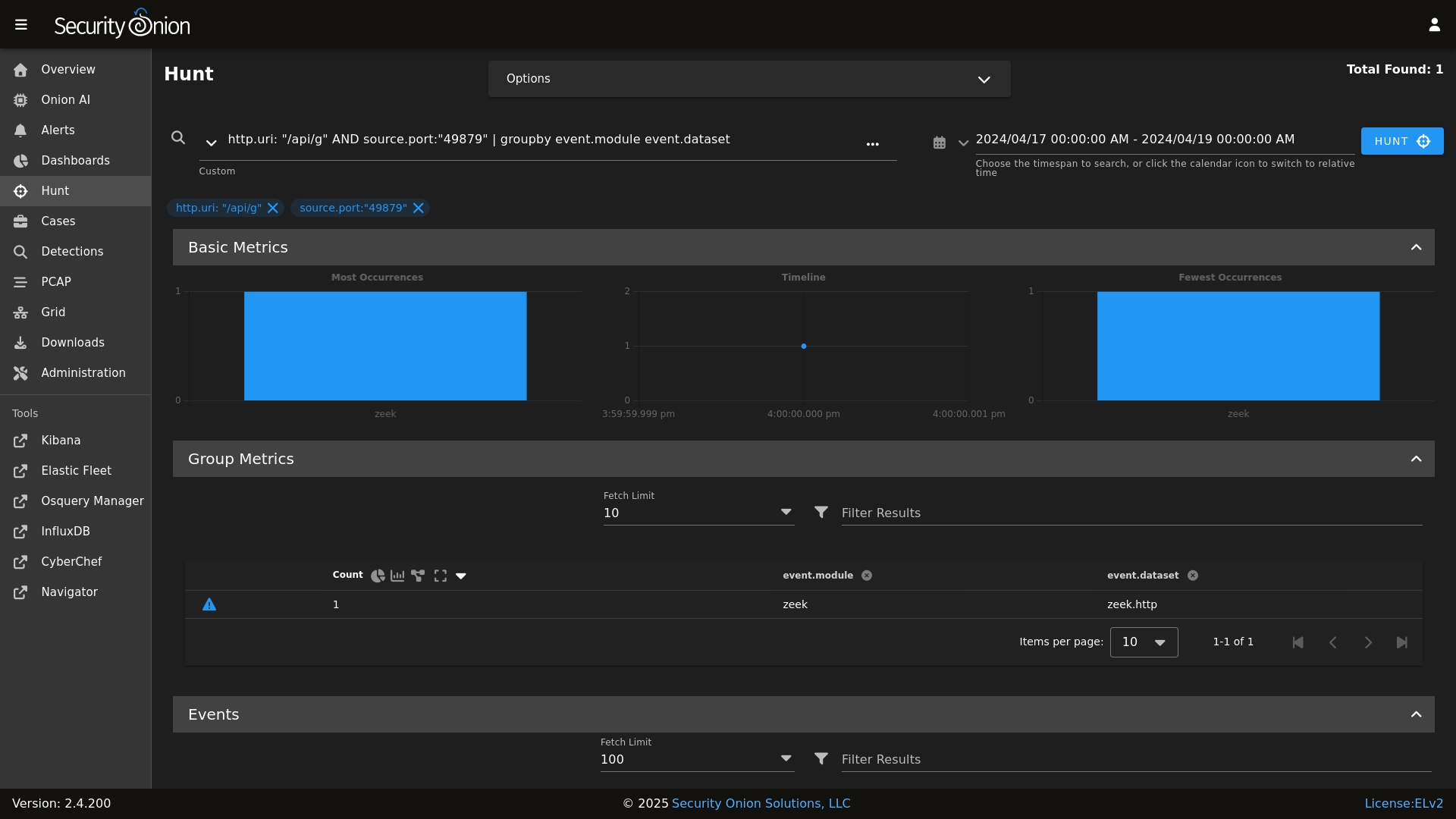

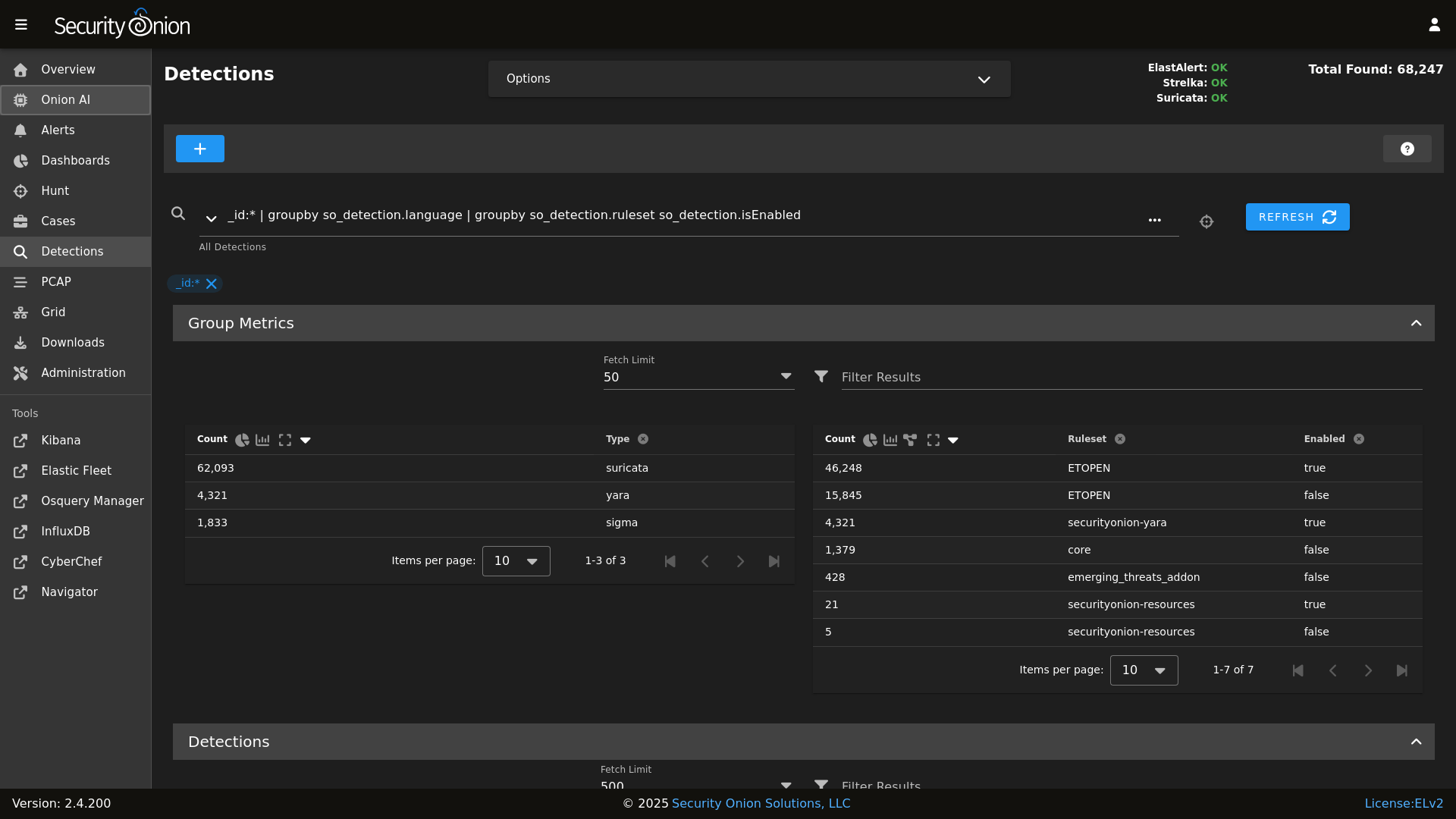

* **Intrusion Detection**: Network-based IDS with Suricata and host-based monitoring with Elastic Fleet.

|

||||

* **Network Metadata**: Detailed network metadata generated by Zeek or Suricata.

|

||||

* **Full Packet Capture**: Retain and analyze raw network traffic with Suricata PCAP.

|

||||

Hunt

|

||||

|

||||

|

||||

## ⭐ Security Onion Pro

|

||||

Detections

|

||||

|

||||

|

||||

For organizations and enterprises requiring advanced capabilities, **Security Onion Pro** offers additional features designed for scale and efficiency:

|

||||

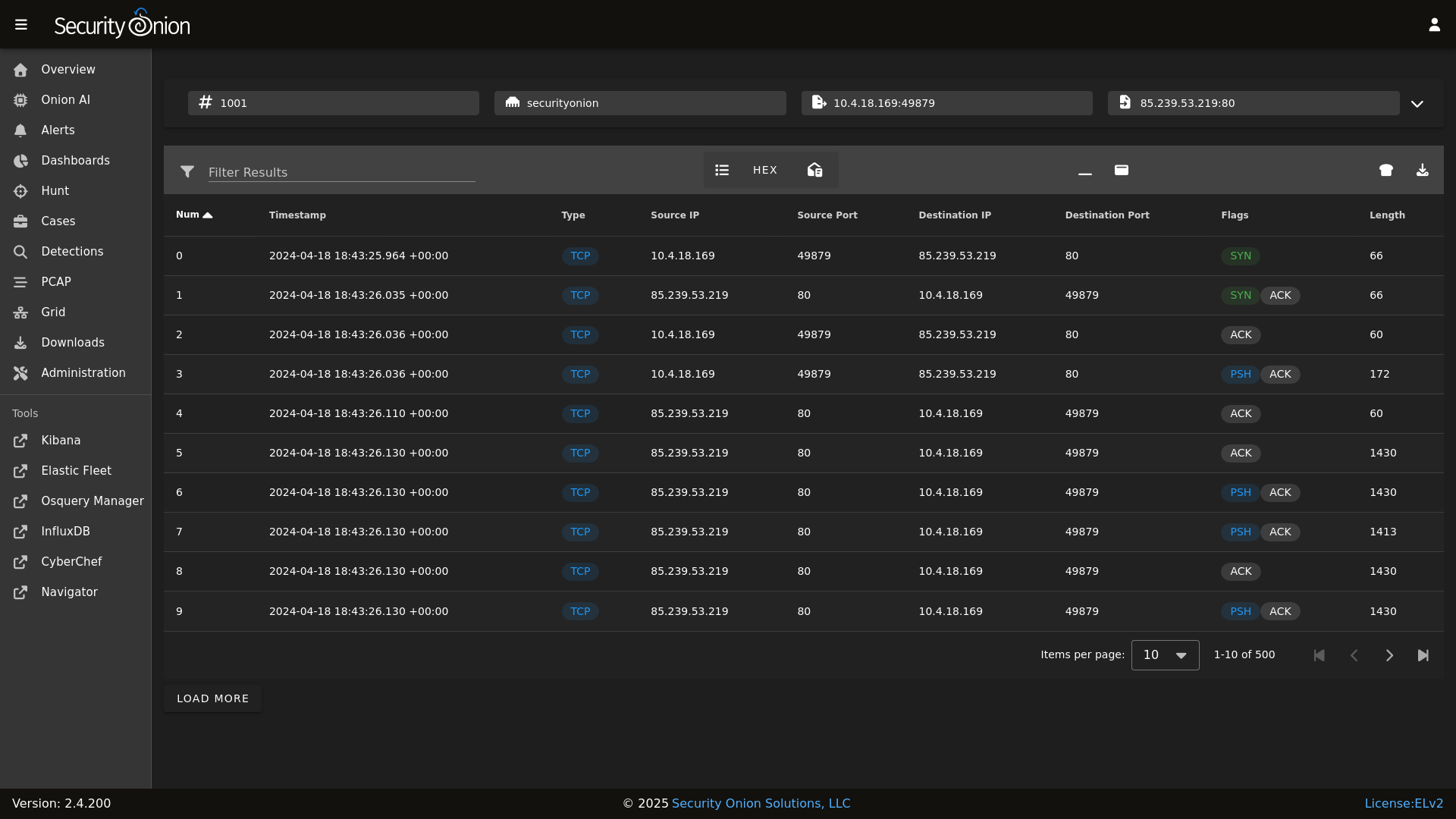

PCAP

|

||||

|

||||

|

||||

* **Onion AI**: Leverage powerful AI-driven insights to accelerate your analysis and investigations.

|

||||

* **Enterprise Features**: Enhanced tools and integrations tailored for enterprise-grade security operations.

|

||||

Grid

|

||||

|

||||

|

||||

For more information, visit the [Security Onion Pro](https://securityonionsolutions.com/pro) page.

|

||||

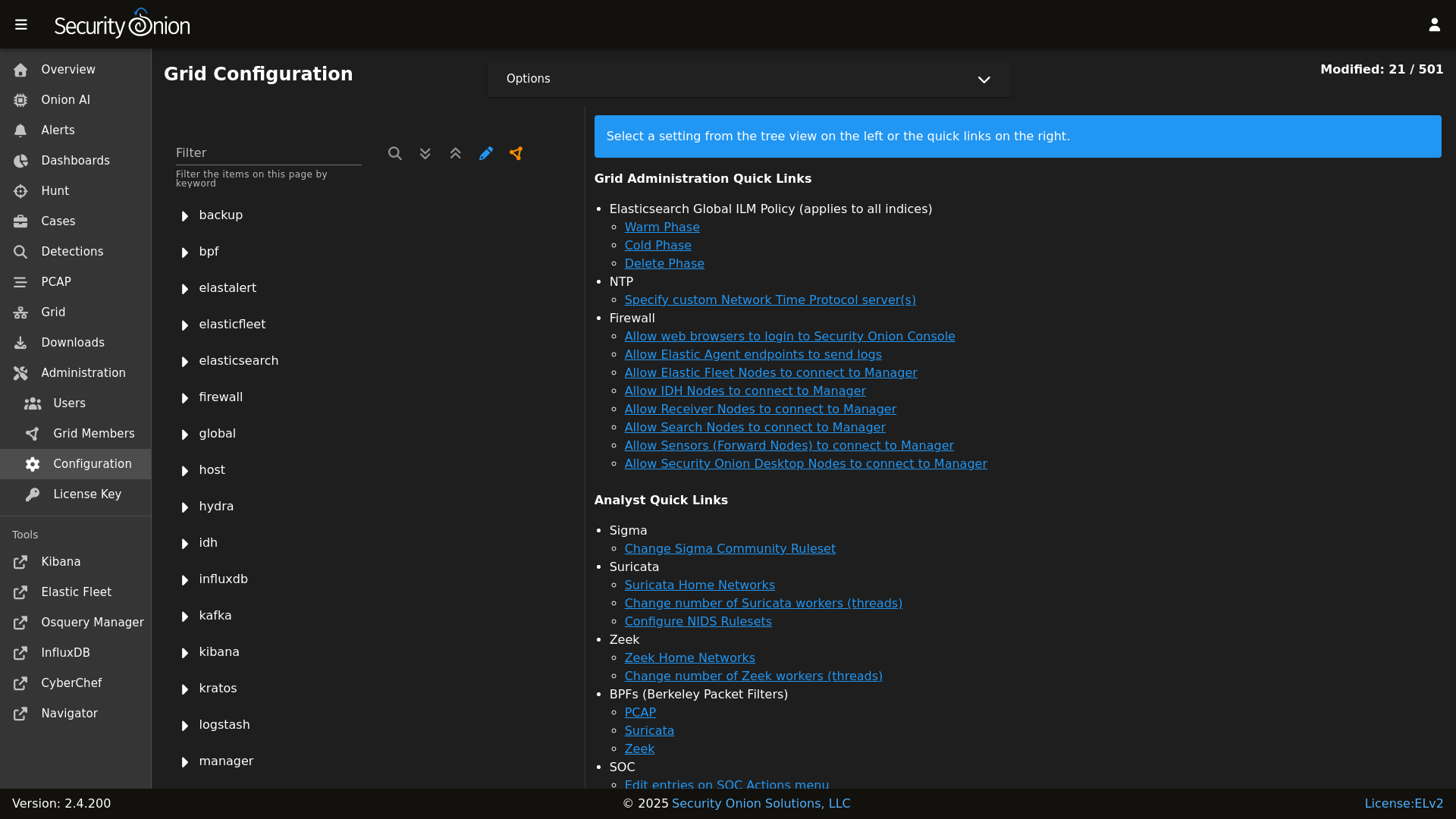

Config

|

||||

|

||||

|

||||

## ☁️ Cloud Deployment

|

||||

### Release Notes

|

||||

|

||||

Security Onion is available and ready to deploy in the **AWS**, **Azure**, and **Google Cloud (GCP)** marketplaces.

|

||||

https://securityonion.net/docs/release-notes

|

||||

|

||||

## 🚀 Getting Started

|

||||

### Requirements

|

||||

|

||||

| Goal | Resource |
| :--- | :--- |
| **Download** | [Security Onion ISO](https://securityonion.net/docs/download) |
| **Requirements** | [Hardware Guide](https://securityonion.net/docs/hardware) |
| **Install** | [Installation Instructions](https://securityonion.net/docs/installation) |
| **What's New** | [Release Notes](https://securityonion.net/docs/release-notes) |

|

||||

https://securityonion.net/docs/hardware

|

||||

|

||||

## 📖 Documentation & Support

|

||||

### Download

|

||||

|

||||

For more detailed information, please visit our [Documentation](https://docs.securityonion.net).

|

||||

https://securityonion.net/docs/download

|

||||

|

||||

* **FAQ**: [Frequently Asked Questions](https://securityonion.net/docs/faq)

|

||||

* **Community**: [Discussions & Support](https://securityonion.net/docs/community-support)

|

||||

* **Training**: [Official Training](https://securityonion.net/training)

|

||||

### Installation

|

||||

|

||||

## 🤝 Contributing

|

||||

https://securityonion.net/docs/installation

|

||||

|

||||

We welcome contributions! Please see our [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines on how to get involved.

|

||||

### FAQ

|

||||

|

||||

## 🛡️ License

|

||||

https://securityonion.net/docs/faq

|

||||

|

||||

Security Onion is licensed under the terms of the license found in the [LICENSE](LICENSE) file.

|

||||

### Feedback

|

||||

|

||||

---

|

||||

*Built with 🧅 by Security Onion Solutions.*

|

||||

https://securityonion.net/docs/community-support

|

||||

|

||||

@@ -4,7 +4,6 @@

| Version | Supported |
| ------- | ------------------ |
| 3.x | :white_check_mark: |
| 2.4.x | :white_check_mark: |
| 2.3.x | :x: |
| 16.04.x | :x: |

@@ -87,6 +87,8 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -132,6 +134,8 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -181,6 +185,8 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

@@ -203,6 +209,8 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- strelka.soc_strelka

|

||||

@@ -289,6 +297,8 @@ base:

|

||||

- zeek.adv_zeek

|

||||

- bpf.soc_bpf

|

||||

- bpf.adv_bpf

|

||||

- pcap.soc_pcap

|

||||

- pcap.adv_pcap

|

||||

- suricata.soc_suricata

|

||||

- suricata.adv_suricata

|

||||

- strelka.soc_strelka

|

||||

|

||||

@@ -38,6 +38,7 @@

|

||||

] %}

|

||||

|

||||

{% set sensor_states = [

|

||||

'pcap',

|

||||

'suricata',

|

||||

'healthcheck',

|

||||

'tcpreplay',

|

||||

|

||||

@@ -1,15 +1,21 @@

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

{% set PCAP_BPF_STATUS = 0 %}

|

||||

{% set STENO_BPF_COMPILED = "" %}

|

||||

|

||||

{% if GLOBALS.pcap_engine == "TRANSITION" %}

|

||||

{% set PCAPBPF = ["ip and host 255.255.255.1 and port 1"] %}

|

||||

{% else %}

|

||||

{% import_yaml 'bpf/defaults.yaml' as BPFDEFAULTS %}

|

||||

{% set BPFMERGED = salt['pillar.get']('bpf', BPFDEFAULTS.bpf, merge=True) %}

|

||||

{% import 'bpf/macros.jinja' as MACROS %}

|

||||

{{ MACROS.remove_comments(BPFMERGED, 'pcap') }}

|

||||

{% set PCAPBPF = BPFMERGED.pcap %}

|

||||

{% endif %}

|

||||

|

||||

{% if PCAPBPF %}

|

||||

{% set PCAP_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + PCAPBPF|join(" "),cwd='/root') %}

|

||||

{% if PCAP_BPF_CALC['retcode'] == 0 %}

|

||||

{% set PCAP_BPF_STATUS = 1 %}

|

||||

{% set STENO_BPF_COMPILED = ",\\\"--filter=" + PCAP_BPF_CALC['stdout'] + "\\\"" %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

|

||||

@@ -3,6 +3,8 @@

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}

|

||||

|

||||

{% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}

|

||||

{% if SOC_GLOBAL.global.airgap %}

|

||||

{% set UPDATE_DIR='/tmp/soagupdate/SecurityOnion' %}

|

||||

@@ -118,3 +120,23 @@ copy_bootstrap-salt_sbin:

|

||||

- source: {{UPDATE_DIR}}/salt/salt/scripts/bootstrap-salt.sh

|

||||

- force: True

|

||||

- preserve: True

|

||||

|

||||

{# this is added in 2.4.120 to remove salt repo files pointing to saltproject.io to accommodate the move to Broadcom and new bootstrap-salt script #}

|

||||

{% if salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1 %}

|

||||

{% set saltrepofile = '/etc/yum.repos.d/salt.repo' %}

|

||||

{% if grains.os_family == 'Debian' %}

|

||||

{% set saltrepofile = '/etc/apt/sources.list.d/salt.list' %}

|

||||

{% endif %}

|

||||

remove_saltproject_io_repo_manager:

|

||||

file.absent:

|

||||

- name: {{ saltrepofile }}

|

||||

{% endif %}

|

||||

|

||||

{% else %}

|

||||

fix_23_soup_sbin:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /usr/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

fix_23_soup_salt:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /opt/so/saltstack/default/salt/common/tools/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

{% endif %}

|

||||

|

||||
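The guard `salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1` in the hunk above removes the old Salt repo file only when the installed version sorts before 2.4.120. The same "strictly older than" test can be sketched in plain shell, assuming a `sort` that supports `-V` (version sort, as in GNU coreutils). `version_lt` is a hypothetical helper name used only for illustration.

```shell
# version_lt A B
# Hypothetical helper: succeeds when version A sorts strictly before version B.
# sort -V compares dotted components numerically, so 2.4.20 sorts before 2.4.120
# (a plain string sort would order them the other way round).
version_lt() {
    [ "$1" != "$2" ] &&
        [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

# Usage:
# version_lt "$SOVERSION" 2.4.120 && echo "older than 2.4.120: clean up the repo file"
```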

@@ -16,7 +16,7 @@

|

||||

|

||||

if [ "$#" -lt 2 ]; then

|

||||

cat 1>&2 <<EOF

|

||||

$0 compiles a BPF expression to be passed to PCAP to apply a socket filter.

|

||||

$0 compiles a BPF expression to be passed to stenotype to apply a socket filter.

|

||||

Its first argument is the interface (link type is required) and all other arguments

|

||||

are passed to TCPDump.

|

||||

|

||||

|

||||
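The usage text above says the script compiles a BPF expression by handing it to tcpdump, and the Jinja hunk before it passes the script's stdout to stenotype as `--filter=`. The body of `so-bpf-compile` is not shown in this diff, so the following is only a sketch of one plausible encoding step, assuming it follows the pattern of stenographer's `compile_bpf.sh`: each instruction line from `tcpdump -ddd` (four decimal fields: code, jt, jf, k) is packed into 16 hex digits. `encode_bpf` is a hypothetical name.

```shell
# encode_bpf
# Hypothetical helper: reads "code jt jf k" decimal lines on stdin and prints
# them as one hex string (4 + 2 + 2 + 8 hex digits per instruction).
encode_bpf() {
    while read -r code jt jf k; do
        printf '%04x%02x%02x%08x' "$code" "$jt" "$jf" "$k"
    done
    echo
}

# Usage on a sensor (the first line of `tcpdump -ddd` output is an instruction
# count, so it is skipped with tail):
# tcpdump -i "$interface" -ddd "$@" | tail -n +2 | encode_bpf
```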

@@ -333,8 +333,8 @@ get_elastic_agent_vars() {

|

||||

|

||||

if [ -f "$defaultsfile" ]; then

|

||||

ELASTIC_AGENT_TARBALL_VERSION=$(egrep " +version: " $defaultsfile | awk -F: '{print $2}' | tr -d '[:space:]')

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent

|

||||

@@ -349,16 +349,21 @@ get_random_value() {

|

||||

}

|

||||

|

||||

gpg_rpm_import() {

|

||||

if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then

|

||||

local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"

|

||||

else

|

||||

local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"

|

||||

if [[ $is_oracle ]]; then

|

||||

if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then

|

||||

local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"

|

||||

else

|

||||

local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"

|

||||

fi

|

||||

RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')

|

||||

for RPMKEY in "${RPMKEYS[@]}"; do

|

||||

rpm --import $RPMKEYSLOC/$RPMKEY

|

||||

echo "Imported $RPMKEY"

|

||||

done

|

||||

elif [[ $is_rpm ]]; then

|

||||

echo "Importing the security onion GPG key"

|

||||

rpm --import ../salt/repo/client/files/oracle/keys/securityonion.pub

|

||||

fi

|

||||

RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')

|

||||

for RPMKEY in "${RPMKEYS[@]}"; do

|

||||

rpm --import $RPMKEYSLOC/$RPMKEY

|

||||

echo "Imported $RPMKEY"

|

||||

done

|

||||

}

|

||||

|

||||

header() {

|

||||

@@ -610,19 +615,69 @@ salt_minion_count() {

|

||||

}

|

||||

|

||||

set_os() {

|

||||

if [ -f /etc/redhat-release ] && grep -q "Red Hat Enterprise Linux release 9" /etc/redhat-release && [ -f /etc/oracle-release ]; then

|

||||

OS=oracle

|

||||

OSVER=9

|

||||

is_oracle=true

|

||||

is_rpm=true

|

||||

if [ -f /etc/redhat-release ]; then

|

||||

if grep -q "Rocky Linux release 9" /etc/redhat-release; then

|

||||

OS=rocky

|

||||

OSVER=9

|

||||

is_rocky=true

|

||||

is_rpm=true

|

||||

elif grep -q "CentOS Stream release 9" /etc/redhat-release; then

|

||||

OS=centos

|

||||

OSVER=9

|

||||

is_centos=true

|

||||

is_rpm=true

|

||||

elif grep -q "AlmaLinux release 9" /etc/redhat-release; then

|

||||

OS=alma

|

||||

OSVER=9

|

||||

is_alma=true

|

||||

is_rpm=true

|

||||

elif grep -q "Red Hat Enterprise Linux release 9" /etc/redhat-release; then

|

||||

if [ -f /etc/oracle-release ]; then

|

||||

OS=oracle

|

||||

OSVER=9

|

||||

is_oracle=true

|

||||

is_rpm=true

|

||||

else

|

||||

OS=rhel

|

||||

OSVER=9

|

||||

is_rhel=true

|

||||

is_rpm=true

|

||||

fi

|

||||

fi

|

||||

cron_service_name="crond"

|

||||

elif [ -f /etc/os-release ]; then

|

||||

if grep -q "UBUNTU_CODENAME=focal" /etc/os-release; then

|

||||

OSVER=focal

|

||||

UBVER=20.04

|

||||

OS=ubuntu

|

||||

is_ubuntu=true

|

||||

is_deb=true

|

||||

elif grep -q "UBUNTU_CODENAME=jammy" /etc/os-release; then

|

||||

OSVER=jammy

|

||||

UBVER=22.04

|

||||

OS=ubuntu

|

||||

is_ubuntu=true

|

||||

is_deb=true

|

||||

elif grep -q "VERSION_CODENAME=bookworm" /etc/os-release; then

|

||||

OSVER=bookworm

|

||||

DEBVER=12

|

||||

is_debian=true

|

||||

OS=debian

|

||||

is_deb=true

|

||||

fi

|

||||

cron_service_name="cron"

|

||||

fi

|

||||

cron_service_name="crond"

|

||||

}

|

||||

|

||||

set_minionid() {

|

||||

MINIONID=$(lookup_grain id)

|

||||

}

|

||||

|

||||

set_palette() {

|

||||

if [[ $is_deb ]]; then

|

||||

update-alternatives --set newt-palette /etc/newt/palette.original

|

||||

fi

|

||||

}

|

||||

|

||||

set_version() {

|

||||

CURRENTVERSION=0.0.0

|

||||

|

||||
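The longer `set_os` variant in this hunk checks `/etc/redhat-release` for each supported distribution and, when the file reports Red Hat Enterprise Linux 9, uses the presence of `/etc/oracle-release` to tell Oracle Linux apart from RHEL. A reduced sketch of that decision order follows. `detect_os` and its root-directory argument are hypothetical, added so the logic can be exercised against a scratch directory; the Debian and Ubuntu branch is left out for brevity.

```shell
# detect_os ROOT
# Hypothetical helper: prints "<os> <version>" based on release files under ROOT
# (pass an empty string to inspect the real /etc).
detect_os() {
    rel="$1/etc/redhat-release"
    [ -f "$rel" ] || { echo "unknown"; return 1; }
    if grep -q "Rocky Linux release 9" "$rel"; then
        echo "rocky 9"
    elif grep -q "CentOS Stream release 9" "$rel"; then
        echo "centos 9"
    elif grep -q "AlmaLinux release 9" "$rel"; then
        echo "alma 9"
    elif grep -q "Red Hat Enterprise Linux release 9" "$rel"; then
        # Same release string for both; oracle-release decides which one it is.
        if [ -f "$1/etc/oracle-release" ]; then echo "oracle 9"; else echo "rhel 9"; fi
    else
        echo "unknown"; return 1
    fi
}
```

Printing a value instead of setting globals such as `OS` and `is_rpm` is a choice made for this sketch; the function in the diff sets those variables directly.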

@@ -32,6 +32,7 @@ container_list() {

|

||||

"so-nginx"

|

||||

"so-pcaptools"

|

||||

"so-soc"

|

||||

"so-steno"

|

||||

"so-suricata"

|

||||

"so-telegraf"

|

||||

"so-zeek"

|

||||

@@ -57,6 +58,7 @@ container_list() {

|

||||

"so-pcaptools"

|

||||

"so-redis"

|

||||

"so-soc"

|

||||

"so-steno"

|

||||

"so-strelka-backend"

|

||||

"so-strelka-manager"

|

||||

"so-suricata"

|

||||

@@ -69,6 +71,7 @@ container_list() {

|

||||

"so-logstash"

|

||||

"so-nginx"

|

||||

"so-redis"

|

||||

"so-steno"

|

||||

"so-suricata"

|

||||

"so-soc"

|

||||

"so-telegraf"

|

||||

|

||||

@@ -179,6 +179,7 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|salt-minion-check" # bug in early 2.4 place Jinja script in non-jinja salt dir causing cron output errors

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|monitoring.metrics" # known issue with elastic agent casting the field incorrectly if an integer value shows up before a float

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|repodownload.conf" # known issue with reposync on pre-2.4.20

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|missing versions record" # stenographer corrupt index

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|soc.field." # known ingest type collisions issue with earlier versions of SO

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|error parsing signature" # Malformed Suricata rule, from upstream provider

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|sticky buffer has no matches" # Non-critical Suricata error

|

||||

|

||||

@@ -55,22 +55,19 @@ if [ $SKIP -ne 1 ]; then

|

||||

fi

|

||||

|

||||

delete_pcap() {

|

||||

PCAP_DATA="/nsm/suripcap/"

|

||||

[ -d $PCAP_DATA ] && rm -rf $PCAP_DATA/*

|

||||

PCAP_DATA="/nsm/pcap/"

|

||||

[ -d $PCAP_DATA ] && so-pcap-stop && rm -rf $PCAP_DATA/* && so-pcap-start

|

||||

}

|

||||

delete_suricata() {

|

||||

SURI_LOG="/nsm/suricata/"

|

||||

[ -d $SURI_LOG ] && rm -rf $SURI_LOG/*

|

||||

[ -d $SURI_LOG ] && so-suricata-stop && rm -rf $SURI_LOG/* && so-suricata-start

|

||||

}

|

||||

delete_zeek() {

|

||||

ZEEK_LOG="/nsm/zeek/logs/"

|

||||

[ -d $ZEEK_LOG ] && so-zeek-stop && rm -rf $ZEEK_LOG/* && so-zeek-start

|

||||

}

|

||||

|

||||

so-suricata-stop

|

||||

delete_pcap

|

||||

delete_suricata

|

||||

delete_zeek

|

||||

so-suricata-start

|

||||

|

||||

|

||||

|

||||

@@ -23,6 +23,7 @@ if [ $# -ge 1 ]; then

|

||||

fi

|

||||

|

||||

case $1 in

|

||||

"steno") docker stop so-steno && docker rm so-steno && salt-call state.apply pcap queue=True;;

|

||||

"elastic-fleet") docker stop so-elastic-fleet && docker rm so-elastic-fleet && salt-call state.apply elasticfleet queue=True;;

|

||||

*) docker stop so-$1 ; docker rm so-$1 ; salt-call state.apply $1 queue=True;;

|

||||

esac

|

||||

|

||||

@@ -72,7 +72,7 @@ clean() {

|

||||

done

|

||||

fi

|

||||

|

||||

## Clean up extracted pcaps

|

||||

## Clean up extracted pcaps from Steno

|

||||

PCAPS='/nsm/pcapout'

|

||||

OLDEST_PCAP=$(find $PCAPS -type f -printf '%T+ %p\n' | sort -n | head -n 1)

|

||||

if [ -z "$OLDEST_PCAP" -o "$OLDEST_PCAP" == ".." -o "$OLDEST_PCAP" == "." ]; then

|

||||

|

||||

@@ -23,6 +23,7 @@ if [ $# -ge 1 ]; then

|

||||

|

||||

case $1 in

|

||||

"all") salt-call state.highstate queue=True;;

|

||||

"steno") if docker ps | grep -q so-$1; then printf "\n$1 is already running!\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply pcap queue=True; fi ;;

|

||||

"elastic-fleet") if docker ps | grep -q so-$1; then printf "\n$1 is already running!\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply elasticfleet queue=True; fi ;;

|

||||

*) if docker ps | grep -E -q '^so-$1$'; then printf "\n$1 is already running\n\n"; else docker rm so-$1 >/dev/null 2>&1 ; salt-call state.apply $1 queue=True; fi ;;

|

||||

esac

|

||||

|

||||
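One detail in the catch-all branch above is worth a note: the pattern `'^so-$1$'` is single-quoted, so the shell passes `$1` to `grep` literally, and default `docker ps` output begins each line with a container ID, not a name. A hedged sketch of an exact-name check is shown below. `container_listed` is a hypothetical helper that reads one name per line on stdin, which pairs with the `--format '{{.Names}}'` option of `docker ps`.

```shell
# container_listed NAME
# Hypothetical helper: succeeds when NAME appears as a whole line on stdin.
# -F treats NAME as a fixed string, -x requires a whole-line match, -q is quiet.
container_listed() {
    grep -Fxq -- "$1"
}

# Usage on a host with Docker:
# if docker ps --format '{{.Names}}' | container_listed "so-$1"; then
#     printf "\n$1 is already running\n\n"
# fi
```

The whole-line match also avoids treating `so-soc` as running merely because a longer name containing it is listed.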

@@ -174,6 +174,11 @@ docker:

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

'so-steno':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

extra_hosts: []

|

||||

extra_env: []

|

||||

'so-suricata':

|

||||

final_octet: 99

|

||||

custom_bind_mounts: []

|

||||

|

||||

@@ -62,6 +62,7 @@ docker:

|

||||

so-idh: *dockerOptions

|

||||

so-elastic-agent: *dockerOptions

|

||||

so-telegraf: *dockerOptions

|

||||

so-steno: *dockerOptions

|

||||

so-suricata:

|

||||

final_octet:

|

||||

description: Last octet of the container IP address.

|

||||

|

||||

@@ -1,3 +1,3 @@

|

||||

global:

|

||||

pcapengine: SURICATA

|

||||

pcapengine: STENO

|

||||

pipeline: REDIS

|

||||

@@ -18,11 +18,13 @@ global:

|

||||

regexFailureMessage: You must enter either ZEEK or SURICATA.

|

||||

global: True

|

||||

pcapengine:

|

||||

description: Which engine to use for generating pcap. Currently only SURICATA is supported.

|

||||

regex: ^(SURICATA)$

|

||||

description: Which engine to use for generating pcap. Options are STENO, SURICATA or TRANSITION.

|

||||

regex: ^(STENO|SURICATA|TRANSITION)$

|

||||

options:

|

||||

- STENO

|

||||

- SURICATA

|

||||

regexFailureMessage: You must enter either SURICATA.

|

||||

- TRANSITION

|

||||

regexFailureMessage: You must enter either STENO, SURICATA or TRANSITION.

|

||||

global: True

|

||||

ids:

|

||||

description: Which IDS engine to use. Currently only Suricata is supported.

|

||||

|

||||
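The `pcapengine` setting in this hunk is constrained by the anchored regex `^(STENO|SURICATA|TRANSITION)$`, so values must match one of the three names exactly, in upper case. A small sketch of the same check in shell, where `valid_pcapengine` is a hypothetical helper name:

```shell
# valid_pcapengine VALUE
# Hypothetical helper: succeeds when VALUE matches the regex from the diff.
valid_pcapengine() {
    printf '%s\n' "$1" | grep -Eq '^(STENO|SURICATA|TRANSITION)$'
}

# Usage:
# valid_pcapengine "$engine" || echo "You must enter either STENO, SURICATA or TRANSITION."
```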

27

salt/influxdb/templates/alarm_steno_packet_loss.json

Normal file


@@ -0,0 +1,27 @@

|

||||

[{

|

||||

"apiVersion": "influxdata.com/v2alpha1",

|

||||

"kind": "CheckThreshold",

|

||||

"metadata": {

|

||||

"name": "steno-packet-loss"

|

||||

},

|

||||

"spec": {

|

||||

"description": "Triggers when the average percent of packet loss is above the defined threshold. To tune this alert, modify the value for the appropriate alert level.",

|

||||

"every": "1m",

|

||||

"name": "Stenographer Packet Loss",

|

||||

"query": "from(bucket: \"telegraf/so_short_term\")\n |\u003e range(start: v.timeRangeStart, stop: v.timeRangeStop)\n |\u003e filter(fn: (r) =\u003e r[\"_measurement\"] == \"stenodrop\")\n |\u003e filter(fn: (r) =\u003e r[\"_field\"] == \"drop\")\n |\u003e aggregateWindow(every: 1m, fn: mean, createEmpty: false)\n |\u003e yield(name: \"mean\")",

|

||||

"status": "active",

|

||||

"statusMessageTemplate": "Stenographer Packet Loss on node ${r.host} has reached the ${ r._level } threshold. The current packet loss is ${ r.drop }%.",

|

||||

"thresholds": [

|

||||

{

|

||||

"level": "CRIT",

|

||||

"type": "greater",

|

||||

"value": 5

|

||||

},

|

||||

{

|

||||

"level": "WARN",

|

||||

"type": "greater",

|

||||

"value": 3

|

||||

}

|

||||

]

|

||||

}

|

||||

}]

|

||||
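The template above defines two `greater` thresholds on the mean packet-loss percentage: CRIT above 5 and WARN above 3. As a quick way to reason about which level a given reading would reach, here is a sketch that applies the same comparisons. `loss_level` is a hypothetical helper, and "OK" is used here simply to mean that neither threshold was exceeded.

```shell
# loss_level PERCENT
# Hypothetical helper: prints CRIT, WARN, or OK for a packet-loss percentage,
# using strict "greater than" comparisons as in the template. awk is used so
# that decimal readings such as 3.5 compare correctly.
loss_level() {
    awk -v v="$1" 'BEGIN {
        if (v > 5)      print "CRIT"
        else if (v > 3) print "WARN"
        else            print "OK"
    }'
}

# Usage:
# loss_level 4.2
```

Because the comparisons are strict, a reading of exactly 5 is still WARN and exactly 3 is still OK.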

File diff suppressed because one or more lines are too long

@@ -180,6 +180,16 @@ logrotate:

|

||||

- extension .log

|

||||

- dateext

|

||||

- dateyesterday

|

||||

/opt/so/log/stenographer/*_x_log:

|

||||

- daily

|

||||

- rotate 14

|

||||

- missingok

|

||||

- copytruncate

|

||||

- compress

|

||||

- create

|

||||

- extension .log

|

||||

- dateext

|

||||

- dateyesterday

|

||||

/opt/so/log/salt/so-salt-minion-check:

|

||||

- daily

|

||||

- rotate 14

|

||||

|

||||

@@ -112,6 +112,13 @@ logrotate:

|

||||

multiline: True

|

||||

global: True

|

||||

forcedType: "[]string"

|

||||

"/opt/so/log/stenographer/*_x_log":

|

||||

description: List of logrotate options for this file.

|

||||

title: /opt/so/log/stenographer/*.log

|

||||

advanced: True

|

||||

multiline: True

|

||||

global: True

|

||||

forcedType: "[]string"

|

||||

"/opt/so/log/salt/so-salt-minion-check":

|

||||

description: List of logrotate options for this file.

|

||||

title: /opt/so/log/salt/so-salt-minion-check

|

||||

|

||||

@@ -1,2 +1,2 @@

|

||||

https://repo.securityonion.net/file/so-repo/prod/3/oracle/9

|

||||

https://repo-alt.securityonion.net/prod/3/oracle/9

|

||||

https://repo.securityonion.net/file/so-repo/prod/2.4/oracle/9

|

||||

https://repo-alt.securityonion.net/prod/2.4/oracle/9

|

||||

@@ -462,14 +462,19 @@ function add_sensor_to_minion() {

|

||||

echo " lb_procs: '$CORECOUNT'"

|

||||

echo "suricata:"

|

||||

echo " enabled: True "

|

||||

echo " pcap:"

|

||||

echo " enabled: True"

|

||||

if [[ $is_pcaplimit ]]; then

|

||||

echo " pcap:"

|

||||

echo " maxsize: $MAX_PCAP_SPACE"

|

||||

fi

|

||||

echo " config:"

|

||||

echo " af-packet:"

|

||||

echo " threads: '$CORECOUNT'"

|

||||

echo "pcap:"

|

||||

echo " enabled: True"

|

||||

if [[ $is_pcaplimit ]]; then

|

||||

echo " config:"

|

||||

echo " diskfreepercentage: $DFREEPERCENT"

|

||||

fi

|

||||

echo " "

|

||||

} >> $PILLARFILE

|

||||

if [ $? -ne 0 ]; then

|

||||

|

||||

@@ -143,7 +143,7 @@ show_usage() {

|

||||

echo " -v Show verbose output (files changed/added/deleted)"

|

||||

echo " -vv Show very verbose output (includes file diffs)"

|

||||

echo " --test Test mode - show what would change without making changes"

|

||||

echo " branch Git branch to checkout (default: 3/main)"

|

||||

echo " branch Git branch to checkout (default: 2.4/main)"

|

||||

echo ""

|

||||

echo "Examples:"

|

||||

echo " $0 # Normal operation"

|

||||

@@ -193,7 +193,7 @@ done

|

||||

|

||||

# Set default branch if not provided

|

||||

if [ -z "$BRANCH" ]; then

|

||||

BRANCH=3/main

|

||||

BRANCH=2.4/main

|

||||

fi

|

||||

|

||||

got_root

|

||||

|

||||

@@ -256,7 +256,7 @@ def replacelistobject(args):

|

||||

def addKey(content, key, value):

|

||||

pieces = key.split(".", 1)

|

||||

if len(pieces) > 1:

|

||||

if pieces[0] not in content or content[pieces[0]] is None:

|

||||

if not pieces[0] in content:

|

||||

content[pieces[0]] = {}

|

||||

addKey(content[pieces[0]], pieces[1], value)

|

||||

elif key in content:

|

||||

@@ -346,12 +346,7 @@ def get(args):

|

||||

print(f"Key '{key}' not found by so-yaml.py", file=sys.stderr)

|

||||

return 2

|

||||

|

||||

if isinstance(output, bool):

|

||||

print(str(output).lower())

|

||||

elif isinstance(output, (dict, list)):

|

||||

print(yaml.safe_dump(output).strip())

|

||||

else:

|

||||

print(output)

|

||||

print(yaml.safe_dump(output))

|

||||

return 0

|

||||

|

||||

|

||||

|

||||

@@ -393,7 +393,7 @@ class TestRemove(unittest.TestCase):

|

||||

|

||||

result = soyaml.get([filename, "key1.child2.deep1"])

|

||||

self.assertEqual(result, 0)

|

||||

self.assertEqual("45\n", mock_stdout.getvalue())

|

||||

self.assertIn("45\n...", mock_stdout.getvalue())

|

||||

|

||||

def test_get_str(self):

|

||||

with patch('sys.stdout', new=StringIO()) as mock_stdout:

|

||||

@@ -404,18 +404,7 @@ class TestRemove(unittest.TestCase):

|

||||

|

||||

result = soyaml.get([filename, "key1.child2.deep1"])

|

||||

self.assertEqual(result, 0)

|

||||

self.assertEqual("hello\n", mock_stdout.getvalue())

|

||||

|

||||

def test_get_bool(self):

|

||||

with patch('sys.stdout', new=StringIO()) as mock_stdout:

|

||||

filename = "/tmp/so-yaml_test-get.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123, child2: { deep1: 45 } }, key2: false, key3: [e,f,g]}")

|

||||

file.close()

|

||||

|

||||

result = soyaml.get([filename, "key2"])

|

||||

self.assertEqual(result, 0)

|

||||

self.assertEqual("false\n", mock_stdout.getvalue())

|

||||

self.assertIn("hello\n...", mock_stdout.getvalue())

|

||||

|

||||

def test_get_list(self):

|

||||

with patch('sys.stdout', new=StringIO()) as mock_stdout:

|

||||

|

||||

File diff suppressed because it is too large


184

salt/manager/tools/sbin/soupto3

Executable file


@@ -0,0 +1,184 @@

|

||||

#!/bin/bash

|

||||

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

|

||||

. /usr/sbin/so-common

|

||||

|

||||

UPDATE_URL=https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/refs/heads/3/main/VERSION

|

||||

|

||||

# Check if already running version 3

|

||||

CURRENT_VERSION=$(cat /etc/soversion 2>/dev/null)

|

||||

if [[ "$CURRENT_VERSION" =~ ^3\. ]]; then

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " Already Running Security Onion 3"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " This system is already running Security Onion $CURRENT_VERSION."

|

||||

echo " Use 'soup' to update within the 3.x release line."

|

||||

echo ""

|

||||

exit 0

|

||||

fi

|

||||

|

||||

echo ""

|

||||

echo "Checking PCAP settings."

|

||||

echo ""

|

||||

|

||||

# Check pcapengine setting - must be SURICATA before upgrading to version 3

|

||||

PCAP_ENGINE=$(lookup_pillar "pcapengine")

|

||||

|

||||

PCAP_DELETED=false

|

||||

|

||||

prompt_delete_pcap() {

|

||||

read -rp " Would you like to delete all remaining Stenographer PCAP data? (y/N): " DELETE_PCAP

|

||||

if [[ "$DELETE_PCAP" =~ ^[Yy]$ ]]; then

|

||||

echo ""

|

||||

echo " WARNING: This will permanently delete all Stenographer PCAP data"

|

||||

echo " on all nodes. This action cannot be undone."

|

||||

echo ""

|

||||

read -rp " Are you sure? (y/N): " CONFIRM_DELETE

|

||||

if [[ "$CONFIRM_DELETE" =~ ^[Yy]$ ]]; then

|

||||

echo ""

|

||||

echo " Deleting Stenographer PCAP data on all nodes..."

|

||||

salt '*' cmd.run "rm -rf /nsm/pcap/* && rm -rf /nsm/pcapindex/*"

|

||||

echo " Done."

|

||||

PCAP_DELETED=true

|

||||

else

|

||||

echo ""

|

||||

echo " Delete cancelled."

|

||||

fi

|

||||

fi

|

||||

}

|

||||

|

||||

pcapengine_not_changed() {

|

||||

echo ""

|

||||

echo " PCAP engine must be set to SURICATA before upgrading to Security Onion 3."

|

||||

echo " You can change this in SOC by navigating to:"

|

||||

echo " Configuration -> global -> pcapengine"

|

||||

}

|

||||

|

||||

prompt_change_engine() {

|

||||

local current_engine=$1

|

||||

echo ""

|

||||

read -rp " Would you like to change the PCAP engine to SURICATA now? (y/N): " CHANGE_ENGINE

|

||||

if [[ "$CHANGE_ENGINE" =~ ^[Yy]$ ]]; then

|

||||

if [[ "$PCAP_DELETED" != "true" ]]; then

|

||||

echo ""

|

||||

echo " WARNING: Stenographer PCAP data was not deleted. If you proceed,"

|

||||

echo " this data will no longer be accessible through SOC and will never"

|

||||

echo " be automatically deleted. You will need to manually remove it later."

|

||||

echo ""

|

||||

read -rp " Continue with changing pcapengine to SURICATA? (y/N): " CONFIRM_CHANGE

|

||||

if [[ ! "$CONFIRM_CHANGE" =~ ^[Yy]$ ]]; then

|

||||

pcapengine_not_changed

|

||||

return 1

|

||||

fi

|

||||

fi

|

||||

echo ""

|

||||

echo " Updating PCAP engine to SURICATA..."

|

||||

so-yaml.py replace /opt/so/saltstack/local/pillar/global/soc_global.sls global.pcapengine SURICATA

|

||||

echo " Done."

|

||||

return 0

|

||||

else

|

||||

pcapengine_not_changed

|

||||

return 1

|

||||

fi

|

||||

}

|

||||

|

||||

case "$PCAP_ENGINE" in

|

||||

SURICATA)

|

||||

echo "PCAP engine settings OK."

|

||||

;;

|

||||

TRANSITION|STENO)

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " PCAP Engine Check Failed"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " Your PCAP engine is currently set to $PCAP_ENGINE."

|

||||

echo ""

|

||||

echo " Before upgrading to Security Onion 3, Stenographer PCAP data must be"

|

||||

echo " removed and the PCAP engine must be set to SURICATA."

|

||||

echo ""

|

||||

echo " To check remaining Stenographer PCAP usage, run:"

|

||||

echo " salt '*' cmd.run 'du -sh /nsm/pcap'"

|

||||

echo ""

|

||||

|

||||

prompt_delete_pcap

|

||||

if ! prompt_change_engine "$PCAP_ENGINE"; then

|

||||

echo ""

|

||||

exit 1

|

||||

fi

|

||||

;;

|

||||

*)

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " PCAP Engine Check Failed"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " Unable to determine the PCAP engine setting (got: '$PCAP_ENGINE')."

|

||||

echo " Please ensure the PCAP engine is set to SURICATA."

|

||||

echo " In SOC, navigate to Configuration -> global -> pcapengine"

|

||||

echo " and change the value to SURICATA."

|

||||

echo ""

|

||||

exit 1

|

||||

;;

|

||||

esac

|

||||

|

||||

echo ""

|

||||

echo "Checking Versions."

|

||||

echo ""

|

||||

|

||||

# Check if Security Onion 3 has been released

|

||||

VERSION=$(curl -sSf "$UPDATE_URL" 2>/dev/null)

|

||||

|

||||

if [[ -z "$VERSION" ]]; then

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " Unable to Check Version"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " Could not retrieve version information from:"

|

||||

echo " $UPDATE_URL"

|

||||

echo ""

|

||||

echo " Please check your network connection and try again."

|

||||

echo ""

|

||||

exit 1

|

||||

fi

|

||||

|

||||

if [[ "$VERSION" == "UNRELEASED" ]]; then

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " Security Onion 3 Not Available"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " Security Onion 3 has not been released yet."

|

||||

echo ""

|

||||

echo " Please check back later or visit https://securityonion.net for updates."

|

||||

echo ""

|

||||

exit 1

|

||||

fi

|

||||

|

||||

# Validate version format (e.g., 3.0.2)

|

||||

if [[ ! "$VERSION" =~ ^[0-9]+\.[0-9]+\.[0-9]+$ ]]; then

|

||||

echo ""

|

||||

echo "========================================================================="

|

||||

echo " Invalid Version"

|

||||

echo "========================================================================="

|

||||

echo ""

|

||||

echo " Received unexpected version format: '$VERSION'"

|

||||

echo ""

|

||||

echo " Please check back later or visit https://securityonion.net for updates."

|

||||

echo ""

|

||||

exit 1

|

||||

fi

|

||||

|

||||

echo "Security Onion 3 ($VERSION) is available. Upgrading..."

|

||||

echo ""

|

||||

|

||||

# All checks passed - proceed with upgrade

|

||||

BRANCH=3/main soup
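The version gate at the end of soupto3 applies three checks in a fixed order before handing off to `soup`. The following Python sketch restates that order for readers who want to reason about it outside of bash. The function name `classify_version` and the returned labels are hypothetical; only the regular expression and the `UNRELEASED` sentinel come from the script.

```python
import re

# Same pattern the script applies to the fetched VERSION string (e.g. 3.0.2).
VERSION_RE = re.compile(r"[0-9]+\.[0-9]+\.[0-9]+")


def classify_version(version: str) -> str:
    """Hypothetical helper: mirror the order of soupto3's checks on VERSION."""
    if not version:
        return "unavailable"  # curl failed or returned nothing
    if version == "UNRELEASED":
        return "unreleased"   # Security Onion 3 has not been published yet
    if not VERSION_RE.fullmatch(version):
        return "invalid"      # unexpected format, script exits 1
    return "ok"               # script would run: BRANCH=3/main soup


if __name__ == "__main__":
    for candidate in ("", "UNRELEASED", "3.0", "3.0.2"):
        print(repr(candidate), classify_version(candidate))
```

`fullmatch` stands in for the `^...$` anchors of the bash `=~` test, so a value such as `3.0.2-rc1` is rejected in both.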

|

||||

@@ -3,7 +3,6 @@ nginx:

|

||||

external_suricata: False

|

||||

ssl:

|

||||

replace_cert: False

|

||||

alt_names: []

|

||||

config:

|

||||

throttle_login_burst: 12

|

||||

throttle_login_rate: 20

|

||||

|

||||

@@ -60,8 +60,6 @@ http {

|

||||

{%- endif %}

|

||||

|

||||

{%- if GLOBALS.is_manager %}

|

||||

{%- set all_names = [GLOBALS.hostname, GLOBALS.url_base] + NGINXMERGED.ssl.alt_names %}

|

||||

{%- set full_server_name = all_names | unique | join(' ') %}

|

||||

|

||||

server {

|

||||

listen 80 default_server;

|

||||

@@ -71,7 +69,7 @@ http {

|

||||

|

||||

server {

|

||||

listen 8443;

|

||||

server_name {{ full_server_name }};

|

||||

server_name {{ GLOBALS.url_base }};

|

||||

root /opt/socore/html;

|

||||

location /artifacts/ {

|

||||

try_files $uri =206;

|

||||

@@ -114,7 +112,7 @@ http {

|

||||

|

||||

server {

|

||||

listen 7788;

|

||||

server_name {{ full_server_name }};

|

||||

server_name {{ GLOBALS.url_base }};

|

||||

root /nsm/rules;

|

||||

location / {

|

||||

allow all;

|

||||

@@ -130,7 +128,7 @@ http {

|

||||

server {

|

||||

listen 7789 ssl;

|

||||

http2 on;

|

||||

server_name {{ full_server_name }};

|

||||

server_name {{ GLOBALS.url_base }};

|

||||

root /surirules;

|

||||

|

||||

add_header Content-Security-Policy "default-src 'self' 'unsafe-inline' 'unsafe-eval' https: data: blob: wss:; frame-ancestors 'self'";

|

||||

@@ -163,7 +161,7 @@ http {

|

||||

server {

|

||||

listen 443 ssl;

|

||||

http2 on;

|

||||

server_name {{ full_server_name }};

|

||||

server_name {{ GLOBALS.url_base }};

|

||||

root /opt/socore/html;

|

||||

index index.html;

|

||||

|

||||

@@ -387,13 +385,15 @@ http {

|

||||

error_page 429 = @error429;

|

||||

|

||||

location @error401 {

|

||||

if ($request_uri ~* (^/api/.*|^/connect/.*|^/oauth2/.*)) {

|

||||

if ($request_uri ~* (^/connect/.*|^/oauth2/.*)) {

|

||||

return 401;

|

||||

}

|

||||

|

||||

add_header Strict-Transport-Security "max-age=31536000; includeSubDomains";

|

||||

if ($request_uri ~* ^/(?!(^/api/.*))) {

|

||||

add_header Strict-Transport-Security "max-age=31536000; includeSubDomains";

|

||||

}

|

||||

|

||||

if ($request_uri ~* ^/(?!(login|auth|oauth2|$))) {

|

||||

if ($request_uri ~* ^/(?!(api/|login|auth|oauth2|$))) {

|

||||

add_header Set-Cookie "AUTH_REDIRECT=$request_uri;Path=/;Max-Age=14400";

|

||||

}

|

||||

return 302 /auth/self-service/login/browser;
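The negative lookahead in the `AUTH_REDIRECT` condition is easy to misread, so here is a small Python sketch of what it accepts. It uses the variant of the pattern that includes `api/`, which is one of the two versions shown in this hunk. The helper name `sets_auth_redirect_cookie` is hypothetical, and Python's `re` module is assumed to behave like nginx's PCRE engine for this particular pattern.

```python
import re

# One of the two patterns shown in the hunk; nginx's "~*" means case-insensitive.
REDIRECT_COOKIE_RE = re.compile(r"^/(?!(api/|login|auth|oauth2|$))", re.IGNORECASE)


def sets_auth_redirect_cookie(request_uri: str) -> bool:
    """Hypothetical helper: True when the pattern would match request_uri."""
    return REDIRECT_COOKIE_RE.search(request_uri) is not None


if __name__ == "__main__":
    for uri in ("/", "/login", "/api/jobs", "/oauth2/callback", "/dashboard"):
        print(uri, sets_auth_redirect_cookie(uri))
```

Under these assumptions only URIs outside the listed prefixes (and other than the bare `/`) would receive the redirect cookie before the 302 to the login page.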

|

||||

|

||||

@@ -30,12 +30,6 @@ nginx:

|

||||

advanced: True

|

||||

global: True

|

||||

helpLink: nginx.html

|

||||

alt_names:

|

||||

description: Provide a list of alternate names to allow remote systems the ability to refer to the SOC API as another hostname.

|

||||

global: True

|

||||

forcedType: '[]string'

|

||||

multiline: True

|

||||

helpLink: nginx.html

|

||||

config:

|

||||

throttle_login_burst:

|

||||

description: Number of login requests that can burst without triggering request throttling. Higher values allow more repeated login attempts. Values greater than zero are required in order to provide a usable login flow.

|

||||

|

||||

@@ -49,17 +49,6 @@ managerssl_key:

|

||||

- docker_container: so-nginx

|

||||

|

||||

# Create a cert for the reverse proxy

|

||||

{% set san_list = [GLOBALS.hostname, GLOBALS.node_ip, GLOBALS.url_base] + NGINXMERGED.ssl.alt_names %}

|

||||

{% set unique_san_list = san_list | unique %}

|

||||

{% set managerssl_san_list = [] %}

|

||||

{% for item in unique_san_list %}

|

||||

{% if item | ipaddr %}

|

||||

{% do managerssl_san_list.append("IP:" + item) %}

|

||||

{% else %}

|

||||

{% do managerssl_san_list.append("DNS:" + item) %}

|

||||

{% endif %}

|

||||

{% endfor %}

|

||||

{% set managerssl_san = managerssl_san_list | join(', ') %}

|

||||

managerssl_crt:

|

||||

x509.certificate_managed:

|

||||

- name: /etc/pki/managerssl.crt

|

||||

@@ -67,7 +56,7 @@ managerssl_crt:

|

||||

- signing_policy: managerssl

|

||||

- private_key: /etc/pki/managerssl.key

|

||||

- CN: {{ GLOBALS.hostname }}

|

||||

- subjectAltName: {{ managerssl_san }}

|

||||

- subjectAltName: "DNS:{{ GLOBALS.hostname }}, IP:{{ GLOBALS.node_ip }}, DNS:{{ GLOBALS.url_base }}"

|

||||

- days_remaining: 7

|

||||

- days_valid: 820

|

||||

- backup: True
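The Jinja loop in this file that builds `managerssl_san` deduplicates the candidate names and prefixes each one with `IP:` or `DNS:`. A minimal Python sketch of the same idea follows. The function name `build_san` and the sample hostnames are hypothetical, and Python's `ipaddress` module stands in for Salt's `ipaddr` filter, which may differ in edge cases.

```python
import ipaddress


def build_san(names):
    """Hypothetical helper: build a subjectAltName string from hostnames and IPs."""
    entries = []
    # dict.fromkeys keeps first-seen order while dropping exact duplicates.
    for item in dict.fromkeys(names):
        try:
            ipaddress.ip_address(item)
            entries.append("IP:" + item)
        except ValueError:
            # Anything that does not parse as an IP address is treated as a DNS name.
            entries.append("DNS:" + item)
    return ", ".join(entries)


if __name__ == "__main__":
    print(build_san(["manager", "10.0.0.5", "manager", "soc.example.com"]))
```

With no alternate names configured, the result reduces to the hostname, node IP, and URL base, which is the shape of the hard-coded `subjectAltName` line shown in the hunk.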

|

||||

|

||||

22

salt/pcap/ca.sls

Normal file


@@ -0,0 +1,22 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'allowed_states.map.jinja' import allowed_states %}

|

||||

{% if sls.split('.')[0] in allowed_states or sls in allowed_states%}

|

||||

|

||||

stenoca:

|

||||

file.directory:

|

||||

- name: /opt/so/conf/steno/certs

|

||||

- user: 941

|

||||

- group: 939

|

||||

- makedirs: True

|

||||

|

||||

{% else %}

|

||||

|

||||

{{sls}}_state_not_allowed:

|

||||

test.fail_without_changes:

|

||||

- name: {{sls}}_state_not_allowed

|

||||

|

||||

{% endif %}

|

||||

@@ -1,59 +0,0 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

|

||||

{% if GLOBALS.is_sensor %}

|

||||

|

||||

delete_so-steno_so-status.conf:

|

||||

file.line:

|

||||

- name: /opt/so/conf/so-status/so-status.conf

|

||||

- mode: delete

|

||||

- match: so-steno

|

||||

|

||||

remove_stenographer_user:

|

||||

user.absent:

|

||||

- name: stenographer

|

||||

- force: True

|

||||

|

||||

remove_stenographer_log_dir:

|

||||

file.absent:

|

||||

- name: /opt/so/log/stenographer

|

||||

|

||||

remove_stenoloss_script:

|

||||

file.absent:

|

||||

- name: /opt/so/conf/telegraf/scripts/stenoloss.sh

|

||||

|

||||

remove_steno_conf_dir:

|

||||

file.absent:

|

||||

- name: /opt/so/conf/steno

|

||||

|

||||

remove_so_pcap_export:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-pcap-export

|

||||

|

||||

remove_so_pcap_restart:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-pcap-restart

|

||||

|

||||

remove_so_pcap_start:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-pcap-start

|

||||

|

||||

remove_so_pcap_stop:

|

||||

file.absent:

|

||||

- name: /usr/sbin/so-pcap-stop

|

||||

|

||||

so-steno:

|

||||

docker_container.absent:

|

||||

- force: True

|

||||

|

||||

{% else %}

|

||||

|

||||

{{sls}}.non_sensor_node:

|

||||

test.show_notification:

|

||||

- text: "Stenographer cleanup not applicable on non-sensor nodes."

|

||||

|

||||

{% endif %}

|

||||

13

salt/pcap/config.map.jinja

Normal file


@@ -0,0 +1,13 @@

{# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
   or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
   https://securityonion.net/license; you may not use this file except in compliance with the
   Elastic License 2.0. #}

{% from 'vars/globals.map.jinja' import GLOBALS %}
{% import_yaml 'pcap/defaults.yaml' as PCAPDEFAULTS %}
{% set PCAPMERGED = salt['pillar.get']('pcap', PCAPDEFAULTS.pcap, merge=True) %}

{# disable stenographer if the pcap engine is set to SURICATA #}
{% if GLOBALS.pcap_engine == "SURICATA" %}
  {% do PCAPMERGED.update({'enabled': False}) %}
{% endif %}

|

||||
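To make the effect of config.map.jinja concrete, the sketch below overlays pillar values on the defaults and then forces `enabled` to `False` when the engine is `SURICATA`. The helper names `merge_defaults` and `effective_pcap_config` are hypothetical, and the merge rule (pillar values take precedence, dictionaries merge recursively) is an assumption about how `pillar.get` with `merge=True` behaves, not a copy of Salt's code.

```python
import copy


def merge_defaults(pillar: dict, defaults: dict) -> dict:
    """Recursively overlay pillar values on a copy of defaults (assumed merge rule)."""
    merged = copy.deepcopy(defaults)
    for key, value in pillar.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_defaults(value, merged[key])
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def effective_pcap_config(pillar_pcap: dict, defaults: dict, pcap_engine: str) -> dict:
    """Hypothetical equivalent of PCAPMERGED after the SURICATA override."""
    merged = merge_defaults(pillar_pcap, defaults)
    if pcap_engine == "SURICATA":
        merged["enabled"] = False  # Stenographer is disabled for this engine
    return merged


if __name__ == "__main__":
    defaults = {"enabled": False, "config": {"diskfreepercentage": 10, "blocks": 2048}}
    pillar = {"enabled": True, "config": {"diskfreepercentage": 20}}
    print(effective_pcap_config(pillar, defaults, "STENO"))
    print(effective_pcap_config(pillar, defaults, "SURICATA"))
```

The override runs after the merge, so a pillar that sets `enabled: True` still ends up disabled when the engine is `SURICATA`.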

87

salt/pcap/config.sls

Normal file


@@ -0,0 +1,87 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'allowed_states.map.jinja' import allowed_states %}

|

||||

{% if sls.split('.')[0] in allowed_states %}

|

||||

|

||||

{% from 'vars/globals.map.jinja' import GLOBALS %}

|

||||

{% from "pcap/config.map.jinja" import PCAPMERGED %}

|

||||

{% from 'bpf/pcap.map.jinja' import PCAPBPF, PCAP_BPF_STATUS, PCAP_BPF_CALC, STENO_BPF_COMPILED %}

|

||||

|

||||

# PCAP Section

|

||||

stenographergroup:

|

||||

group.present:

|

||||

- name: stenographer

|

||||

- gid: 941

|

||||

|

||||

stenographer:

|

||||

user.present:

|

||||

- uid: 941

|

||||

- gid: 941

|

||||

- home: /opt/so/conf/steno

|

||||

|

||||

stenoconfdir:

|

||||

file.directory:

|

||||

- name: /opt/so/conf/steno

|

||||

- user: 941

|

||||

- group: 939

|

||||

- makedirs: True

|

||||

|

||||

pcap_sbin:

|

||||

file.recurse:

|

||||

- name: /usr/sbin

|

||||

- source: salt://pcap/tools/sbin

|

||||

- user: 939

|

||||

- group: 939

|

||||

- file_mode: 755

|

||||

|

||||

{% if PCAPBPF and not PCAP_BPF_STATUS %}

|

||||

stenoPCAPbpfcompilationfailure:

|

||||

test.configurable_test_state:

|

||||

- changes: False

|

||||

- result: False

|

||||

- comment: "BPF Syntax Error - Discarding Specified BPF. Error: {{ PCAP_BPF_CALC['stderr'] }}"

|

||||

{% endif %}

|

||||

|

||||

stenoconf:

|

||||

file.managed:

|

||||

- name: /opt/so/conf/steno/config

|

||||

- source: salt://pcap/files/config.jinja

|

||||

- user: stenographer

|

||||

- group: stenographer

|

||||

- mode: 644

|

||||

- template: jinja

|

||||

- defaults:

|

||||

PCAPMERGED: {{ PCAPMERGED }}

|

||||

STENO_BPF_COMPILED: "{{ STENO_BPF_COMPILED }}"

|

||||

|

||||

pcaptmpdir:

|

||||

file.directory:

|

||||

- name: /nsm/pcaptmp

|

||||

- user: 941

|

||||

- group: 941

|

||||

- makedirs: True

|

||||

|

||||

pcapindexdir:

|

||||

file.directory:

|

||||

- name: /nsm/pcapindex

|

||||

- user: 941

|

||||

- group: 941

|

||||

- makedirs: True

|

||||

|

||||

stenolog:

|

||||

file.directory:

|

||||

- name: /opt/so/log/stenographer

|

||||

- user: 941

|

||||

- group: 941

|

||||

- makedirs: True

|

||||

|

||||

{% else %}

|

||||

|

||||

{{sls}}_state_not_allowed:

|

||||

test.fail_without_changes:

|

||||

- name: {{sls}}_state_not_allowed

|

||||

|

||||

{% endif %}

|

||||

11

salt/pcap/defaults.yaml

Normal file


@@ -0,0 +1,11 @@

pcap:
  enabled: False
  config:
    maxdirectoryfiles: 30000
    diskfreepercentage: 10
    blocks: 2048
    preallocate_file_mb: 4096
    aiops: 128
    pin_to_cpu: False
    cpus_to_pin_to: []
    disks: []

|

||||

27

salt/pcap/disabled.sls

Normal file


@@ -0,0 +1,27 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% from 'allowed_states.map.jinja' import allowed_states %}

|

||||

{% if sls.split('.')[0] in allowed_states %}

|

||||

|

||||

include:

|

||||

- pcap.sostatus

|

||||

|

||||

so-steno:

|

||||

docker_container.absent:

|

||||

- force: True

|

||||

|

||||

so-steno_so-status.disabled:

|