mirror of

https://github.com/Security-Onion-Solutions/securityonion.git

synced 2026-05-10 21:30:30 +02:00

merge


@@ -2,13 +2,11 @@ body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion 2.4.x are you asking about?

|

||||

description: Which version of Security Onion are you asking about?

|

||||

options:

|

||||

-

|

||||

- 2.4.10

|

||||

@@ -35,6 +33,7 @@ body:

|

||||

- 2.4.200

|

||||

- 2.4.201

|

||||

- 2.4.210

|

||||

- 3.0.0

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

@@ -96,7 +95,7 @@ body:

|

||||

attributes:

|

||||

label: Hardware Specs

|

||||

description: >

|

||||

Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://docs.securityonion.net/en/2.4/hardware.html?

|

||||

Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://securityonion.net/docs/hardware?

|

||||

options:

|

||||

-

|

||||

- Meets minimum requirements

|

||||

|

||||

+12

-12

@@ -1,17 +1,17 @@

|

||||

### 2.4.201-20260114 ISO image released on 2026/1/15

|

||||

### 2.4.210-20260302 ISO image released on 2026/03/02

|

||||

|

||||

|

||||

### Download and Verify

|

||||

|

||||

2.4.201-20260114 ISO image:

|

||||

https://download.securityonion.net/file/securityonion/securityonion-2.4.201-20260114.iso

|

||||

2.4.210-20260302 ISO image:

|

||||

https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso

|

||||

|

||||

MD5: 20E926E433203798512EF46E590C89B9

|

||||

SHA1: 779E4084A3E1A209B494493B8F5658508B6014FA

|

||||

SHA256: 3D10E7C885AEC5C5D4F4E50F9644FF9728E8C0A2E36EBB8C96B32569685A7C40

|

||||

MD5: 575F316981891EBED2EE4E1F42A1F016

|

||||

SHA1: 600945E8823221CBC5F1C056084A71355308227E

|

||||

SHA256: A6AA6471125F07FA6E2796430E94BEAFDEF728E833E9728FDFA7106351EBC47E

|

||||

|

||||

Signature for ISO image:

|

||||

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.201-20260114.iso.sig

|

||||

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig

|

||||

|

||||

Signing key:

|

||||

https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

|

||||
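The published checksums can also be checked locally, alongside the GPG verification. The following is a minimal Python sketch, assuming the ISO has been downloaded into the current directory. `sha256_of` and `matches_published` are hypothetical helper names, and the expected value is the SHA256 listed above for the 2.4.210-20260302 image. A matching checksum only indicates the file arrived intact; the signature check is still what ties the image to the signing key.

```python
import hashlib
from pathlib import Path

# SHA256 published above for securityonion-2.4.210-20260302.iso.
EXPECTED_SHA256 = "A6AA6471125F07FA6E2796430E94BEAFDEF728E833E9728FDFA7106351EBC47E"


def sha256_of(path, chunk_size=1024 * 1024):
    """Return the uppercase hex SHA256 of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest().upper()


def matches_published(path, expected=EXPECTED_SHA256):
    """Compare case-insensitively, since tools differ in hex casing."""
    return sha256_of(path) == expected.strip().upper()


if __name__ == "__main__":
    iso = Path("securityonion-2.4.210-20260302.iso")  # assumed download location
    if iso.exists():
        print("checksum OK" if matches_published(iso) else "checksum MISMATCH")
    else:
        print(f"{iso} not found")
```

Reading in chunks keeps memory use flat for a multi-gigabyte image.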

@@ -25,22 +25,22 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

|

||||

|

||||

Download the signature file for the ISO:

|

||||

```

|

||||

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.201-20260114.iso.sig

|

||||

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig

|

||||

```

|

||||

|

||||

Download the ISO image:

|

||||

```

|

||||

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.201-20260114.iso

|

||||

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso

|

||||

```

|

||||

|

||||

Verify the downloaded ISO image using the signature file:

|

||||

```

|

||||

gpg --verify securityonion-2.4.201-20260114.iso.sig securityonion-2.4.201-20260114.iso

|

||||

gpg --verify securityonion-2.4.210-20260302.iso.sig securityonion-2.4.210-20260302.iso

|

||||

```

|

||||

|

||||

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:

|

||||

```

|

||||

gpg: Signature made Wed 14 Jan 2026 05:23:39 PM EST using RSA key ID FE507013

|

||||

gpg: Signature made Mon 02 Mar 2026 11:55:24 AM EST using RSA key ID FE507013

|

||||

gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"

|

||||

gpg: WARNING: This key is not certified with a trusted signature!

|

||||

gpg: There is no indication that the signature belongs to the owner.

|

||||

@@ -50,4 +50,4 @@ Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013

|

||||

If it fails to verify, try downloading again. If it still fails to verify, try downloading from another computer or another network.

|

||||

|

||||

Once you've verified the ISO image, you're ready to proceed to our Installation guide:

|

||||

https://docs.securityonion.net/en/2.4/installation.html

|

||||

https://securityonion.net/docs/installation

|

||||

|

||||

@@ -1,50 +1,58 @@

|

||||

## Security Onion 2.4

|

||||

<p align="center">

|

||||

<img src="https://securityonionsolutions.com/logo/logo-so-onion-dark.svg" width="400" alt="Security Onion Logo">

|

||||

</p>

|

||||

|

||||

Security Onion 2.4 is here!

|

||||

# Security Onion

|

||||

|

||||

## Screenshots

|

||||

Security Onion is a free and open Linux distribution for threat hunting, enterprise security monitoring, and log management. It includes a comprehensive suite of tools designed to work together to provide visibility into your network and host activity.

|

||||

|

||||

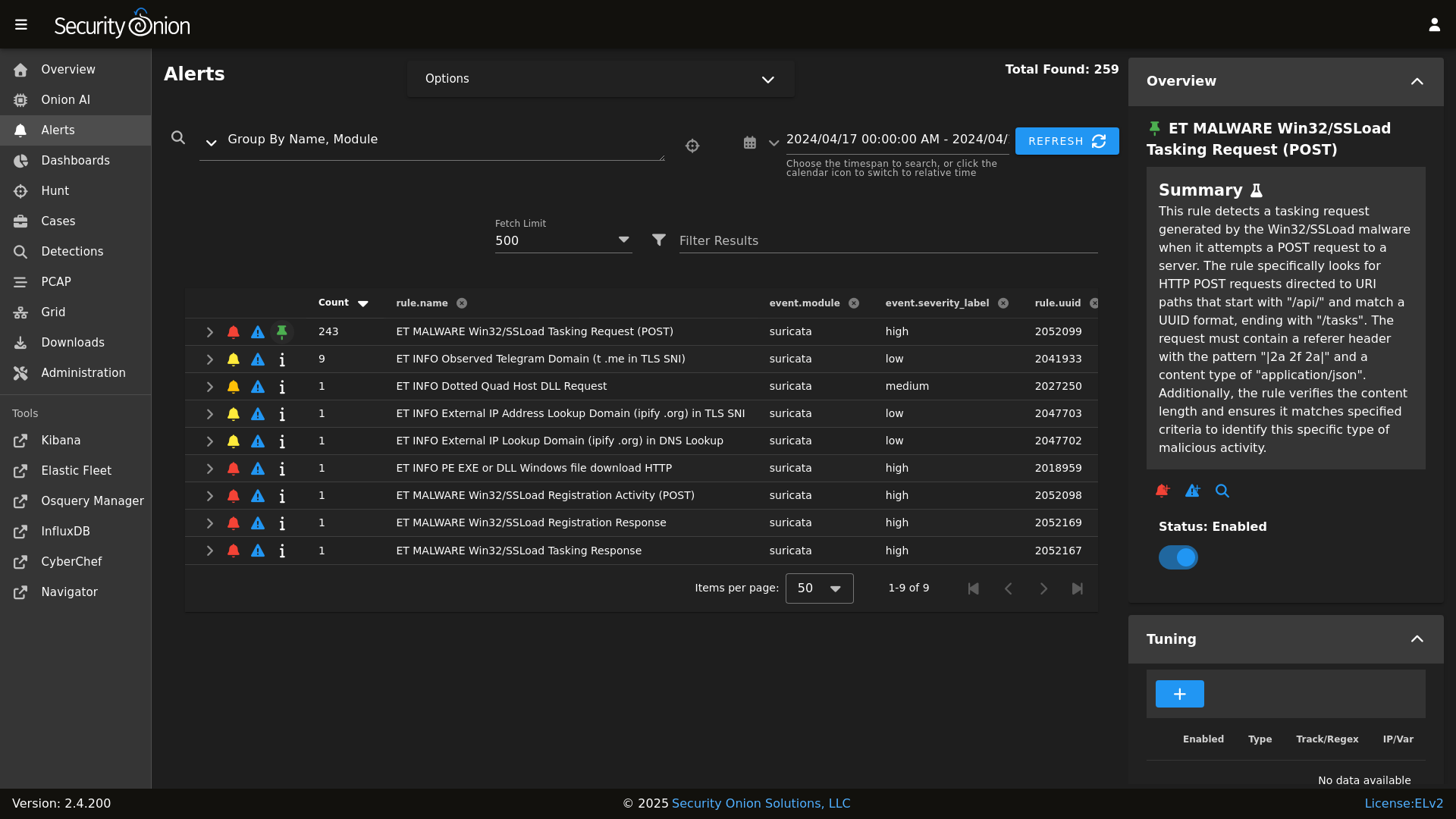

Alerts

|

||||

|

||||

## ✨ Features

|

||||

|

||||

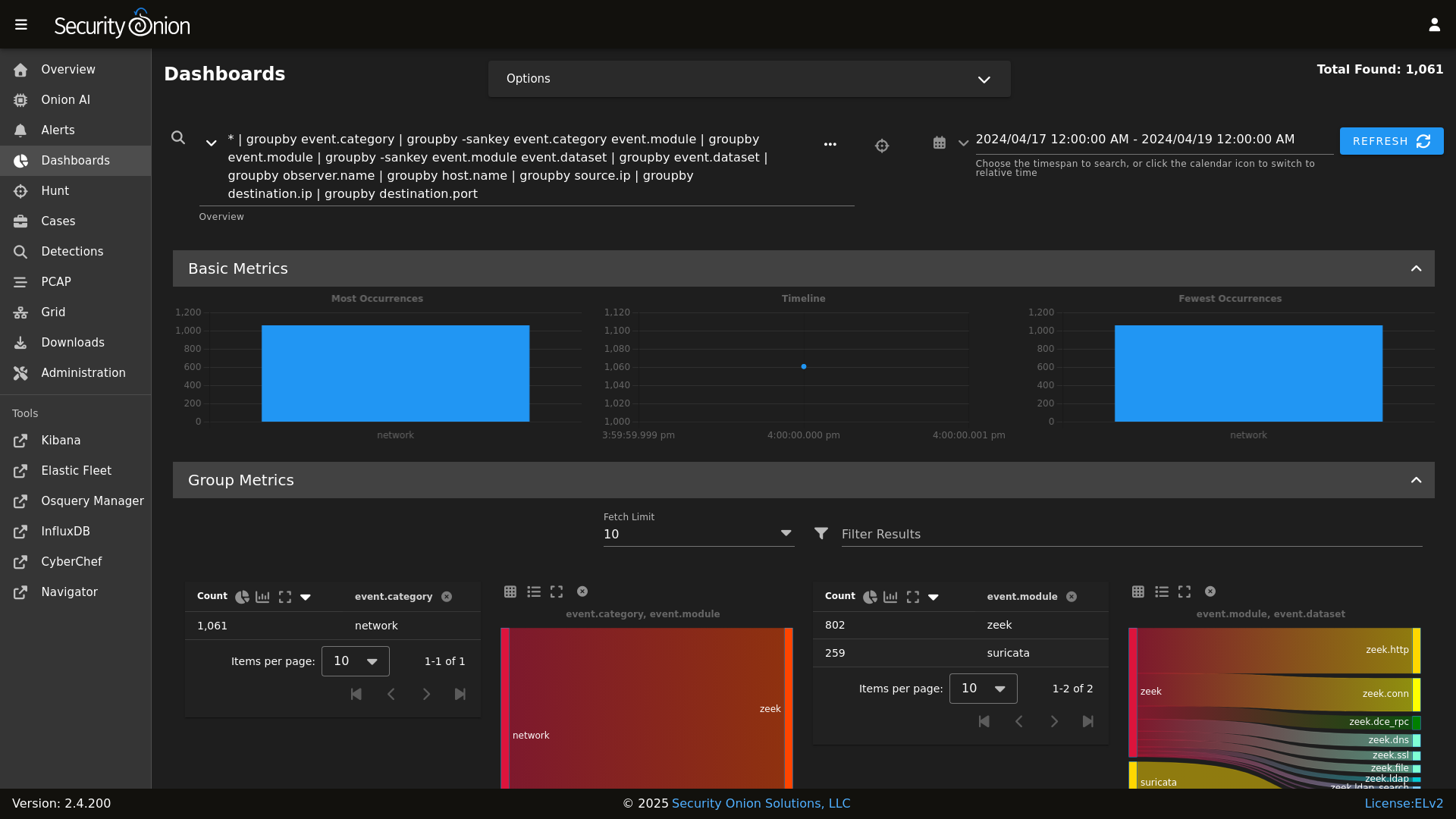

Dashboards

|

||||

|

||||

Security Onion includes everything you need to monitor your network and host systems:

|

||||

|

||||

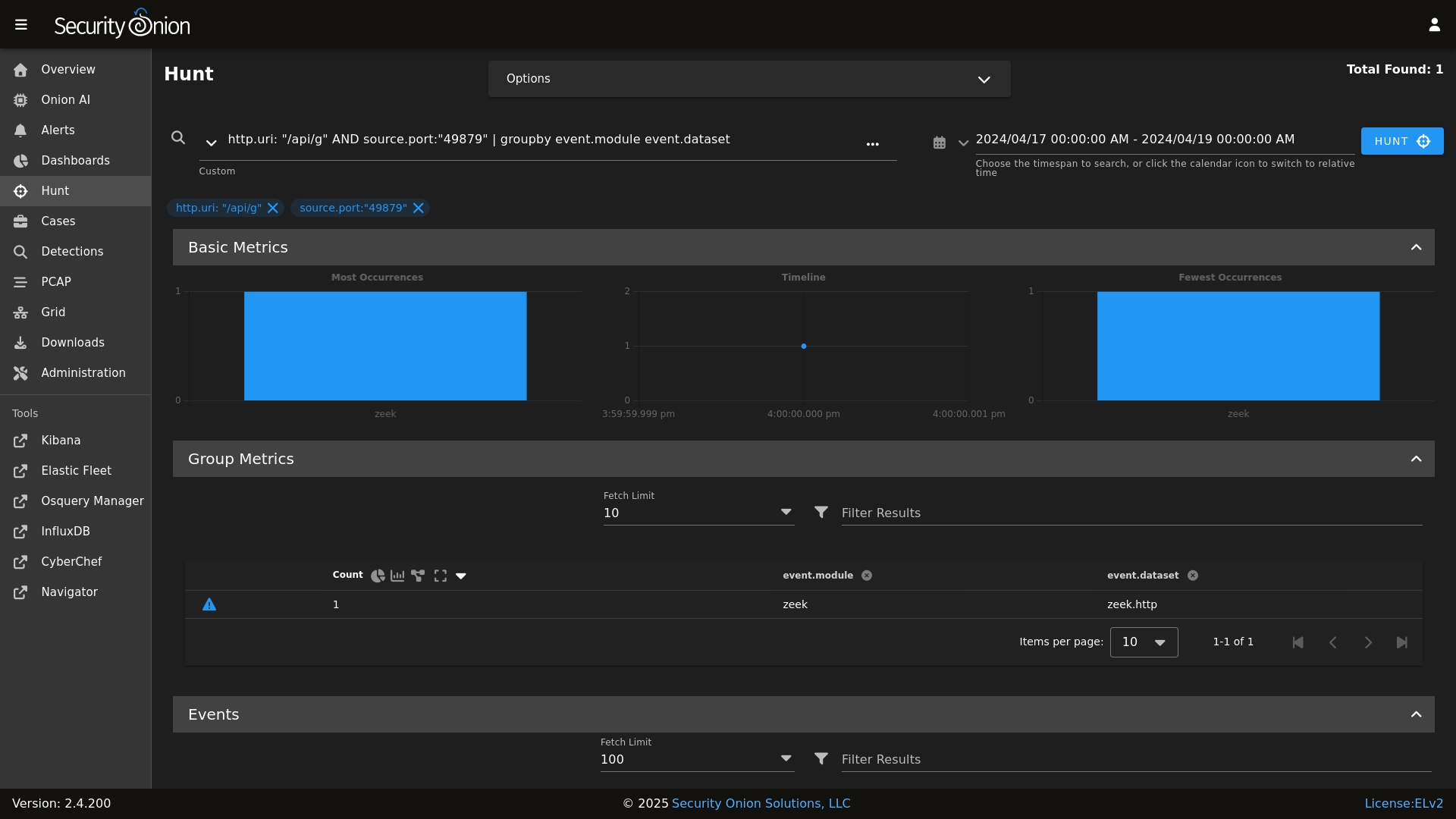

Hunt

|

||||

|

||||

* **Security Onion Console (SOC)**: A unified web interface for analyzing security events and managing your grid.

|

||||

* **Elastic Stack**: Powerful search backed by Elasticsearch.

|

||||

* **Intrusion Detection**: Network-based IDS with Suricata and host-based monitoring with Elastic Fleet.

|

||||

* **Network Metadata**: Detailed network metadata generated by Zeek or Suricata.

|

||||

* **Full Packet Capture**: Retain and analyze raw network traffic with Suricata PCAP.

|

||||

|

||||

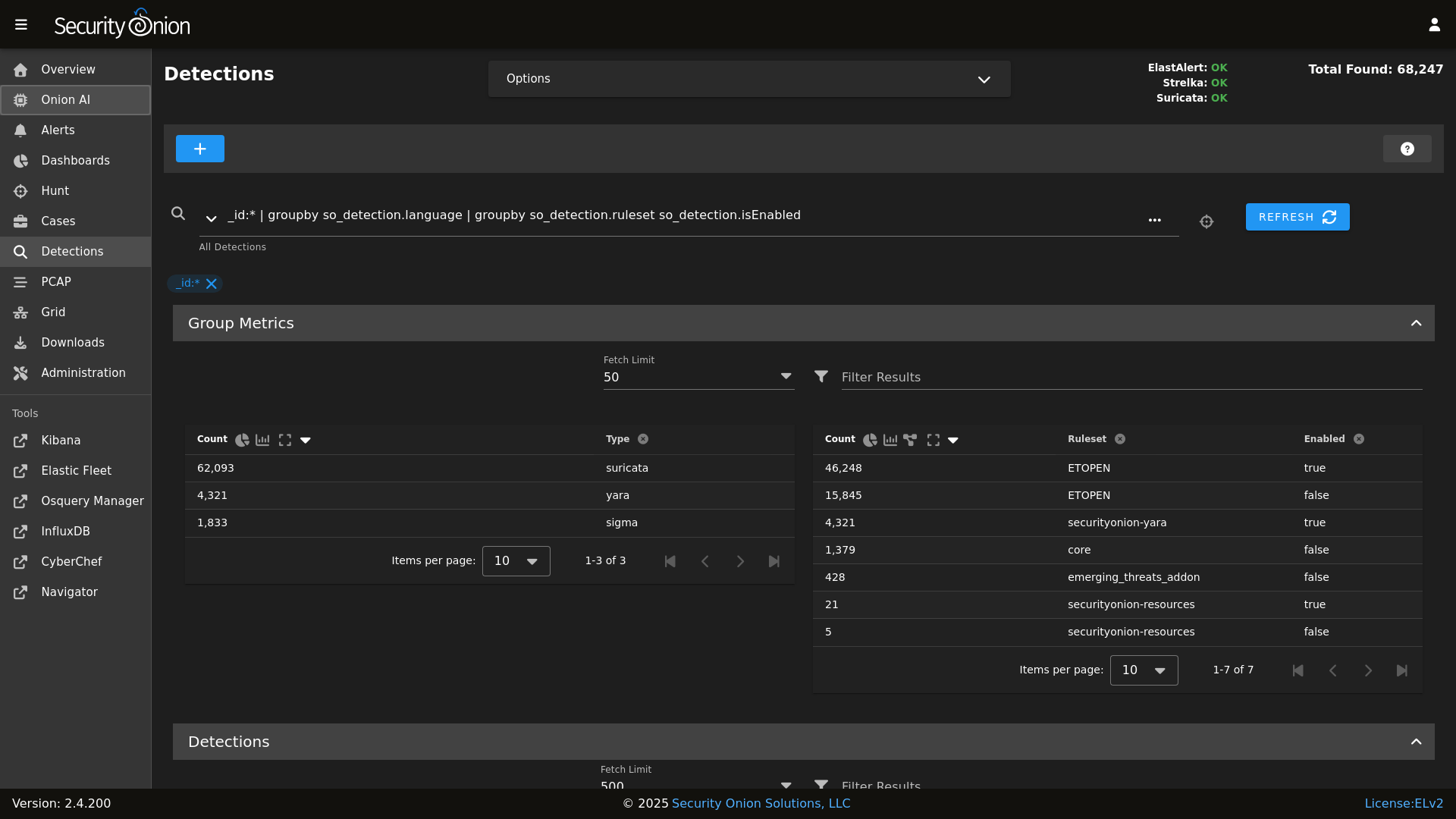

Detections

|

||||

|

||||

## ⭐ Security Onion Pro

|

||||

|

||||

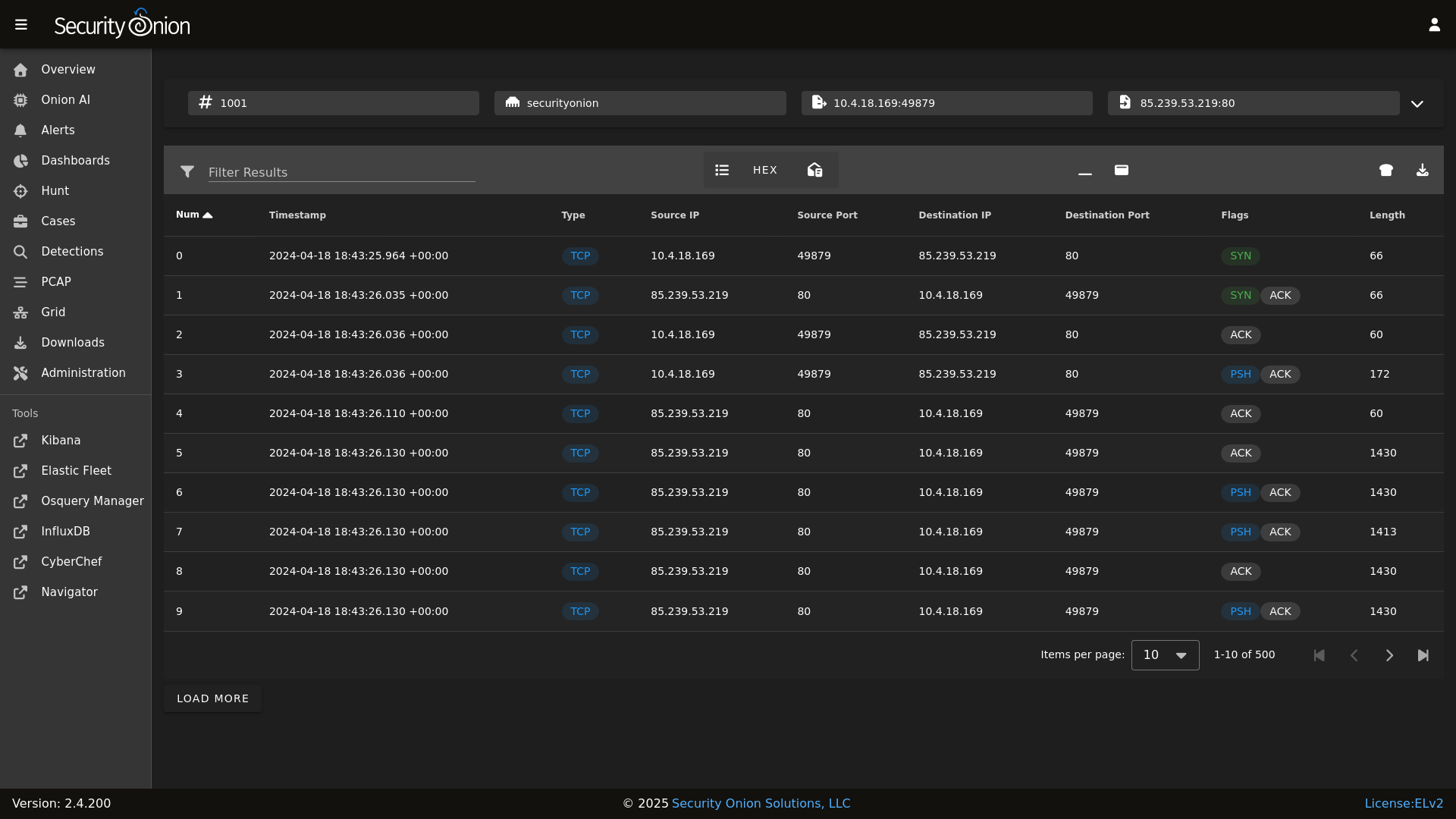

PCAP

|

||||

|

||||

For organizations and enterprises requiring advanced capabilities, **Security Onion Pro** offers additional features designed for scale and efficiency:

|

||||

|

||||

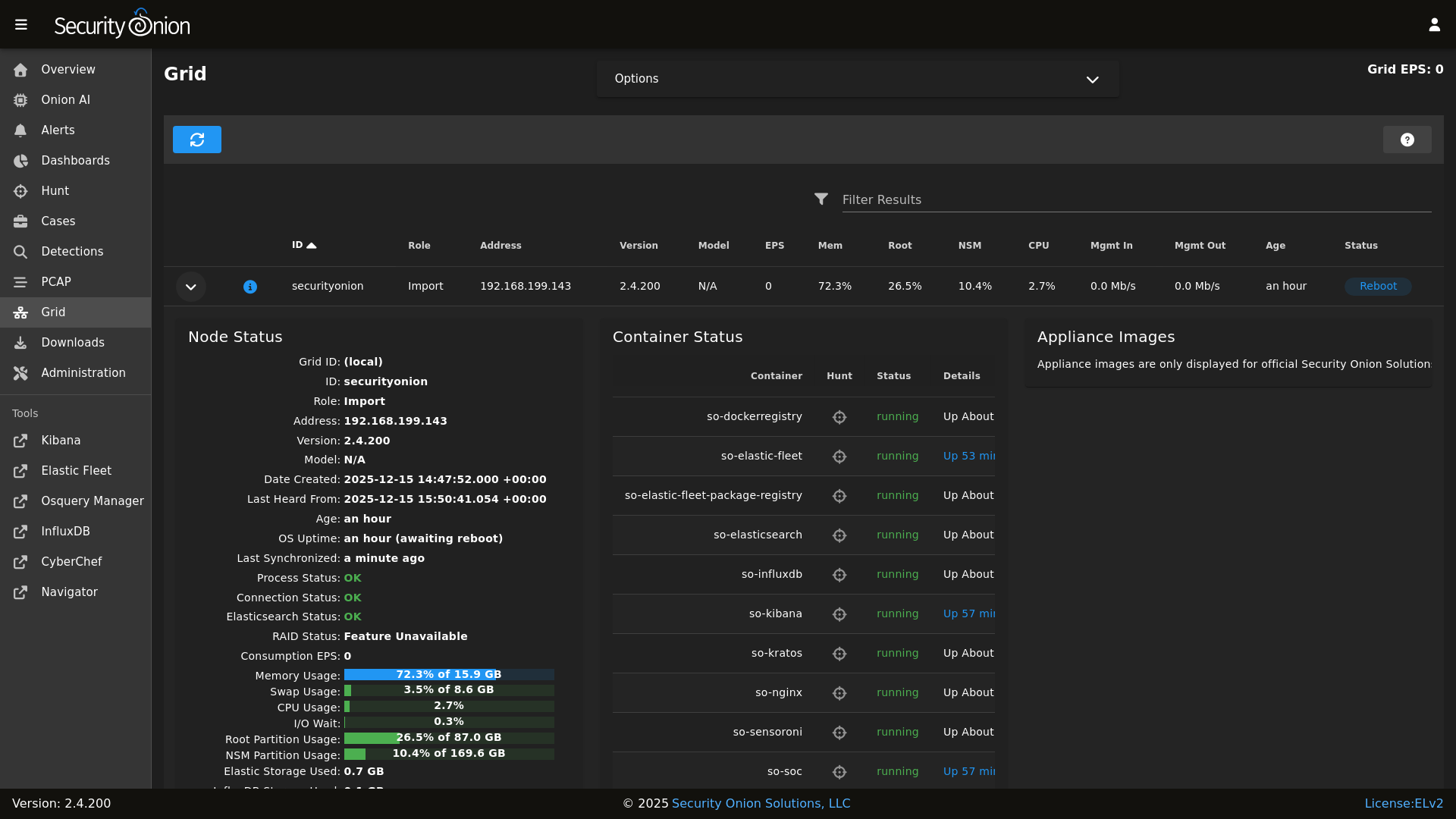

Grid

|

||||

|

||||

* **Onion AI**: Leverage powerful AI-driven insights to accelerate your analysis and investigations.

|

||||

* **Enterprise Features**: Enhanced tools and integrations tailored for enterprise-grade security operations.

|

||||

|

||||

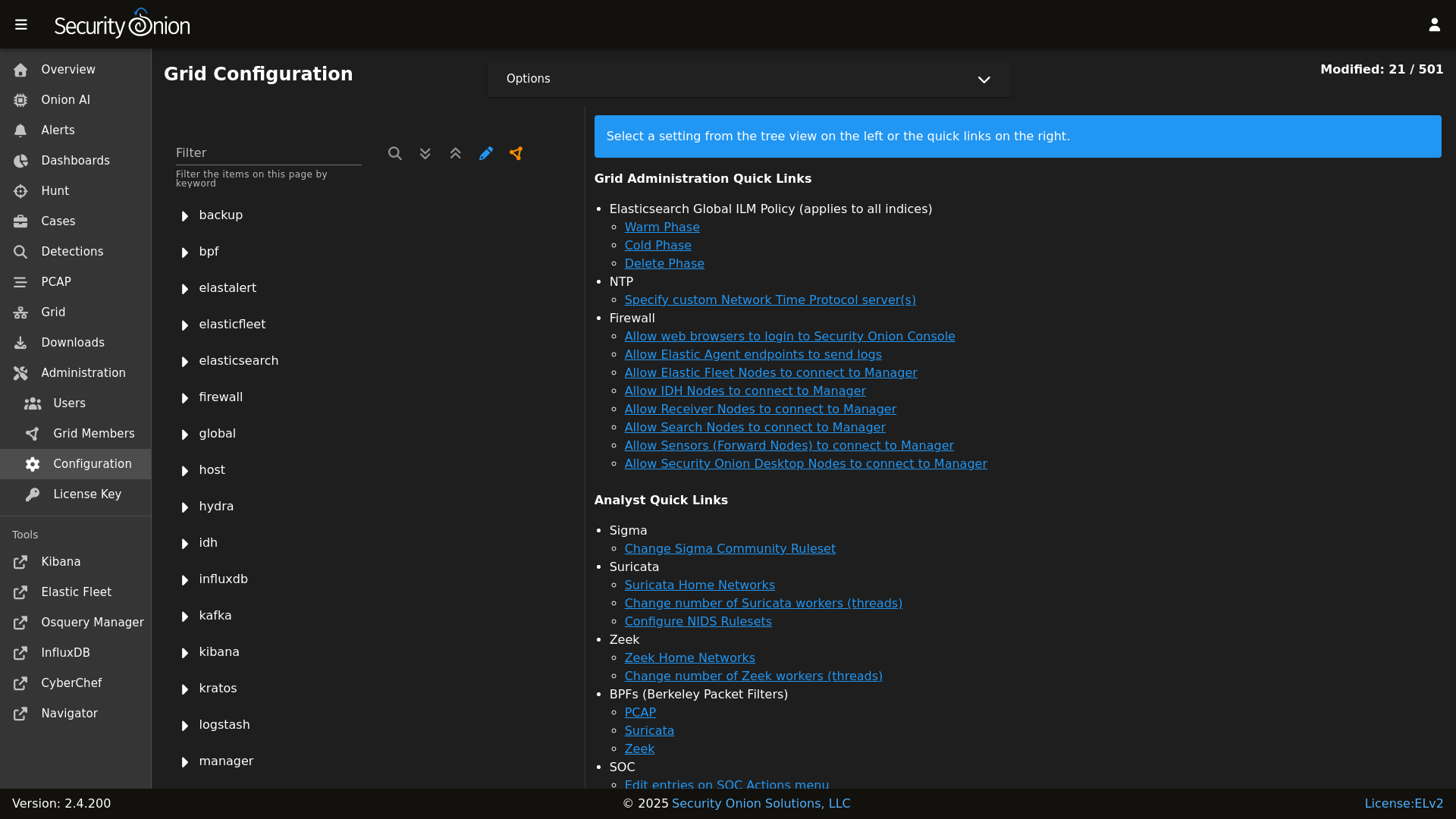

Config

|

||||

|

||||

For more information, visit the [Security Onion Pro](https://securityonionsolutions.com/pro) page.

|

||||

|

||||

### Release Notes

|

||||

## ☁️ Cloud Deployment

|

||||

|

||||

https://docs.securityonion.net/en/2.4/release-notes.html

|

||||

Security Onion is available and ready to deploy in the **AWS**, **Azure**, and **Google Cloud (GCP)** marketplaces.

|

||||

|

||||

### Requirements

|

||||

## 🚀 Getting Started

|

||||

|

||||

https://docs.securityonion.net/en/2.4/hardware.html

|

||||

| Goal | Resource |

|

||||

| :--- | :--- |

|

||||

| **Download** | [Security Onion ISO](https://securityonion.net/docs/download) |

|

||||

| **Requirements** | [Hardware Guide](https://securityonion.net/docs/hardware) |

|

||||

| **Install** | [Installation Instructions](https://securityonion.net/docs/installation) |

|

||||

| **What's New** | [Release Notes](https://securityonion.net/docs/release-notes) |

|

||||

|

||||

### Download

|

||||

## 📖 Documentation & Support

|

||||

|

||||

https://docs.securityonion.net/en/2.4/download.html

|

||||

For more detailed information, please visit our [Documentation](https://docs.securityonion.net).

|

||||

|

||||

### Installation

|

||||

* **FAQ**: [Frequently Asked Questions](https://securityonion.net/docs/faq)

|

||||

* **Community**: [Discussions & Support](https://securityonion.net/docs/community-support)

|

||||

* **Training**: [Official Training](https://securityonion.net/training)

|

||||

|

||||

https://docs.securityonion.net/en/2.4/installation.html

|

||||

## 🤝 Contributing

|

||||

|

||||

### FAQ

|

||||

We welcome contributions! Please see our [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines on how to get involved.

|

||||

|

||||

https://docs.securityonion.net/en/2.4/faq.html

|

||||

## 🛡️ License

|

||||

|

||||

### Feedback

|

||||

Security Onion is licensed under the terms of the license found in the [LICENSE](LICENSE) file.

|

||||

|

||||

https://docs.securityonion.net/en/2.4/community-support.html

|

||||

---

|

||||

*Built with 🧅 by Security Onion Solutions.*

|

||||

|

||||

@@ -4,6 +4,7 @@
 
 | Version | Supported          |
 | ------- | ------------------ |
+| 3.x     | :white_check_mark: |
 | 2.4.x   | :white_check_mark: |
 | 2.3.x   | :x:                |
 | 16.04.x | :x:                |


@@ -1,10 +1,12 @@
 {% macro remove_comments(bpfmerged, app) %}
 
 {# remove comments from the bpf #}
+{% set app_list = [] %}
 {% for bpf in bpfmerged[app] %}
-{% if bpf.strip().startswith('#') %}
-{% do bpfmerged[app].pop(loop.index0) %}
+{% if not bpf.strip().startswith('#') %}
+{% do app_list.append(bpf) %}
 {% endif %}
 {% endfor %}
+{% do bpfmerged.update({app: app_list}) %}
 
 {% endmacro %}

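The change to `remove_comments` above swaps in-place removal for building a new list. One likely motivation is easiest to see outside Jinja. The sketch below is a plain-Python analogue, not the macro itself: `remove_by_pop` and `remove_by_rebuild` are hypothetical names, and it assumes a Jinja `for` loop over a list reacts to mutation the same way a Python list iterator does.

```python
def remove_by_pop(lines):
    """Old approach: pop by index while iterating over the same list."""
    lines = list(lines)  # work on a copy so the caller's list is untouched
    for index, line in enumerate(lines):
        if line.strip().startswith("#"):
            # Removing here shifts later items left, so the item that
            # slides into this position is never examined.
            lines.pop(index)
    return lines


def remove_by_rebuild(lines):
    """New approach: collect the lines to keep into a fresh list."""
    return [line for line in lines if not line.strip().startswith("#")]


sample = ["# first comment", "# second comment", "port 53"]
print(remove_by_pop(sample))
print(remove_by_rebuild(sample))
```

With two adjacent comment lines, the pop-based version leaves the second comment in place, while the rebuild-based version drops both.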

@@ -13,7 +13,7 @@
 {% endif %}
 
 {% if PCAPBPF %}
-{% set PCAP_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ PCAPBPF|join(" "), cwd='/root') %}
+{% set PCAP_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + PCAPBPF|join(" "),cwd='/root') %}
 {% if PCAP_BPF_CALC['retcode'] == 0 %}
 {% set PCAP_BPF_STATUS = 1 %}
 {% set STENO_BPF_COMPILED = ",\\\"--filter=" + PCAP_BPF_CALC['stdout'] + "\\\"" %}

@@ -9,7 +9,7 @@
 {% set SURICATABPF = BPFMERGED.suricata %}
 
 {% if SURICATABPF %}
-{% set SURICATA_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ SURICATABPF|join(" "), cwd='/root') %}
+{% set SURICATA_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + SURICATABPF|join(" "),cwd='/root') %}
 {% if SURICATA_BPF_CALC['retcode'] == 0 %}
 {% set SURICATA_BPF_STATUS = 1 %}
 {% endif %}

@@ -9,7 +9,7 @@
 {% set ZEEKBPF = BPFMERGED.zeek %}
 
 {% if ZEEKBPF %}
-{% set ZEEK_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ ZEEKBPF|join(" "), cwd='/root') %}
+{% set ZEEK_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + ZEEKBPF|join(" "),cwd='/root') %}
 {% if ZEEK_BPF_CALC['retcode'] == 0 %}
 {% set ZEEK_BPF_STATUS = 1 %}
 {% endif %}


@@ -3,8 +3,6 @@

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

|

||||

{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}

|

||||

|

||||

{% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}

|

||||

{% if SOC_GLOBAL.global.airgap %}

|

||||

{% set UPDATE_DIR='/tmp/soagupdate/SecurityOnion' %}

|

||||

@@ -120,23 +118,3 @@ copy_bootstrap-salt_sbin:

|

||||

- source: {{UPDATE_DIR}}/salt/salt/scripts/bootstrap-salt.sh

|

||||

- force: True

|

||||

- preserve: True

|

||||

|

||||

{# this is added in 2.4.120 to remove salt repo files pointing to saltproject.io to accomodate the move to broadcom and new bootstrap-salt script #}

|

||||

{% if salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1 %}

|

||||

{% set saltrepofile = '/etc/yum.repos.d/salt.repo' %}

|

||||

{% if grains.os_family == 'Debian' %}

|

||||

{% set saltrepofile = '/etc/apt/sources.list.d/salt.list' %}

|

||||

{% endif %}

|

||||

remove_saltproject_io_repo_manager:

|

||||

file.absent:

|

||||

- name: {{ saltrepofile }}

|

||||

{% endif %}

|

||||

|

||||

{% else %}

|

||||

fix_23_soup_sbin:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /usr/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

fix_23_soup_salt:

|

||||

cmd.run:

|

||||

- name: curl -s -f -o /opt/so/saltstack/defalt/salt/common/tools/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup

|

||||

{% endif %}

|

||||

|

||||

@@ -10,7 +10,7 @@

|

||||

cat << EOF

|

||||

|

||||

so-checkin will run a full salt highstate to apply all salt states. If a highstate is already running, this request will be queued and so it may pause for a few minutes before you see any more output. For more information about so-checkin and salt, please see:

|

||||

https://docs.securityonion.net/en/2.4/salt.html

|

||||

https://securityonion.net/docs/salt

|

||||

|

||||

EOF

|

||||

|

||||

|

||||

@@ -10,7 +10,7 @@

|

||||

# and since this same logic is required during installation, it's included in this file.

|

||||

|

||||

DEFAULT_SALT_DIR=/opt/so/saltstack/default

|

||||

DOC_BASE_URL="https://docs.securityonion.net/en/2.4"

|

||||

DOC_BASE_URL="https://securityonion.net/docs"

|

||||

|

||||

if [ -z $NOROOT ]; then

|

||||

# Check for prerequisites

|

||||

@@ -333,8 +333,8 @@ get_elastic_agent_vars() {

|

||||

|

||||

if [ -f "$defaultsfile" ]; then

|

||||

ELASTIC_AGENT_TARBALL_VERSION=$(egrep " +version: " $defaultsfile | awk -F: '{print $2}' | tr -d '[:space:]')

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"

|

||||

ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"

|

||||

ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent

|

||||

|

||||

@@ -228,6 +228,7 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|marked for removal" # docker container getting recycled

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|tcp 127.0.0.1:6791: bind: address already in use" # so-elastic-fleet agent restarting. Seen starting w/ 8.18.8 https://github.com/elastic/kibana/issues/201459

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|TransformTask\] \[logs-(tychon|aws_billing|microsoft_defender_endpoint).*user so_kibana lacks the required permissions \[logs-\1" # Known issue with 3 integrations using kibana_system role vs creating unique api creds with proper permissions.

|

||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|manifest unknown" # appears in so-dockerregistry log for so-tcpreplay following docker upgrade to 29.2.1-1

|

||||

fi

|

||||

|

||||

RESULT=0

|

||||

|

||||

@@ -6,7 +6,7 @@

|

||||

# Elastic License 2.0.

|

||||

|

||||

source /usr/sbin/so-common

|

||||

doc_desktop_url="$DOC_BASE_URL/desktop.html"

|

||||

doc_desktop_url="$DOC_BASE_URL/desktop"

|

||||

|

||||

{# we only want the script to install the desktop if it is OEL -#}

|

||||

{% if grains.os == 'OEL' -%}

|

||||

|

||||

+13

-13

@@ -20,20 +20,20 @@ dockergroup:

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 1.7.21-1

|

||||

- docker-ce: 5:27.2.0-1~debian.12~bookworm

|

||||

- docker-ce-cli: 5:27.2.0-1~debian.12~bookworm

|

||||

- docker-ce-rootless-extras: 5:27.2.0-1~debian.12~bookworm

|

||||

- containerd.io: 2.2.1-1~debian.12~bookworm

|

||||

- docker-ce: 5:29.2.1-1~debian.12~bookworm

|

||||

- docker-ce-cli: 5:29.2.1-1~debian.12~bookworm

|

||||

- docker-ce-rootless-extras: 5:29.2.1-1~debian.12~bookworm

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% elif grains.oscodename == 'jammy' %}

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 1.7.21-1

|

||||

- docker-ce: 5:27.2.0-1~ubuntu.22.04~jammy

|

||||

- docker-ce-cli: 5:27.2.0-1~ubuntu.22.04~jammy

|

||||

- docker-ce-rootless-extras: 5:27.2.0-1~ubuntu.22.04~jammy

|

||||

- containerd.io: 2.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce-cli: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- docker-ce-rootless-extras: 5:29.2.1-1~ubuntu.22.04~jammy

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% else %}

|

||||

@@ -51,10 +51,10 @@ dockerheldpackages:

|

||||

dockerheldpackages:

|

||||

pkg.installed:

|

||||

- pkgs:

|

||||

- containerd.io: 1.7.21-3.1.el9

|

||||

- docker-ce: 3:27.2.0-1.el9

|

||||

- docker-ce-cli: 1:27.2.0-1.el9

|

||||

- docker-ce-rootless-extras: 27.2.0-1.el9

|

||||

- containerd.io: 2.2.1-1.el9

|

||||

- docker-ce: 3:29.2.1-1.el9

|

||||

- docker-ce-cli: 1:29.2.1-1.el9

|

||||

- docker-ce-rootless-extras: 29.2.1-1.el9

|

||||

- hold: True

|

||||

- update_holds: True

|

||||

{% endif %}

|

||||

@@ -117,4 +117,4 @@ sos_docker_net:

|

||||

com.docker.network.bridge.enable_ip_masquerade: 'true'

|

||||

com.docker.network.bridge.enable_icc: 'true'

|

||||

com.docker.network.bridge.host_binding_ipv4: '0.0.0.0'

|

||||

- unless: 'docker network ls | grep sobridge'

|

||||

- unless: ip l | grep sobridge

|

||||

|

||||

@@ -17,7 +17,7 @@

|

||||

"paths": [

|

||||

"/nsm/suricata/eve*.json"

|

||||

],

|

||||

"data_stream.dataset": "filestream.generic",

|

||||

"data_stream.dataset": "suricata",

|

||||

"pipeline": "suricata.common",

|

||||

"parsers": "#- ndjson:\n# target: \"\"\n# message_key: msg\n#- multiline:\n# type: count\n# count_lines: 3\n",

|

||||

"exclude_files": [

|

||||

@@ -41,4 +41,4 @@

|

||||

}

|

||||

},

|

||||

"force": true

|

||||

}

|

||||

}

|

||||

|

||||

@@ -858,6 +858,8 @@ elasticsearch:

|

||||

composed_of:

|

||||

- agent-mappings

|

||||

- dtc-agent-mappings

|

||||

- event-mappings

|

||||

- file-mappings

|

||||

- host-mappings

|

||||

- dtc-host-mappings

|

||||

- http-mappings

|

||||

|

||||

@@ -81,6 +81,14 @@

|

||||

"ignore_missing": true

|

||||

}

|

||||

},

|

||||

{

|

||||

"rename": {

|

||||

"field": "file",

|

||||

"target_field": "file.path",

|

||||

"ignore_failure": true,

|

||||

"ignore_missing": true

|

||||

}

|

||||

},

|

||||

{

|

||||

"pipeline": {

|

||||

"name": "common"

|

||||

|

||||

@@ -84,13 +84,6 @@ elasticsearch:

|

||||

custom008: *pipelines

|

||||

custom009: *pipelines

|

||||

custom010: *pipelines

|

||||

managed_integrations:

|

||||

description: List of integrations to add into SOC config UI. Enter the full or partial integration name. Eg. 1password, 1pass

|

||||

forcedType: "[]string"

|

||||

multiline: True

|

||||

global: True

|

||||

advanced: True

|

||||

helpLink: elasticsearch.html

|

||||

index_settings:

|

||||

global_overrides:

|

||||

index_template:

|

||||

|

||||

@@ -1,91 +1,103 @@

|

||||

{

|

||||

"_meta": {

|

||||

"documentation": "https://www.elastic.co/guide/en/ecs/current/ecs-dns.html",

|

||||

"ecs_version": "1.12.2"

|

||||

},

|

||||

"template": {

|

||||

"mappings": {

|

||||

"properties": {

|

||||

"dns": {

|

||||

"properties": {

|

||||

"answers": {

|

||||

"properties": {

|

||||

"class": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"data": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"name": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"ttl": {

|

||||

"type": "long"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

"_meta": {

|

||||

"documentation": "https://www.elastic.co/guide/en/ecs/current/ecs-dns.html",

|

||||

"ecs_version": "1.12.2"

|

||||

},

|

||||

"template": {

|

||||

"mappings": {

|

||||

"properties": {

|

||||

"dns": {

|

||||

"properties": {

|

||||

"answers": {

|

||||

"properties": {

|

||||

"class": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"data": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"name": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"ttl": {

|

||||

"type": "long"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

},

|

||||

"type": "object"

|

||||

},

|

||||

"header_flags": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"id": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"op_code": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"question": {

|

||||

"properties": {

|

||||

"class": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"name": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"registered_domain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"subdomain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"top_level_domain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

}

|

||||

},

|

||||

"query": {

|

||||

"properties" :{

|

||||

"type":{

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"type_name": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

}

|

||||

},

|

||||

"resolved_ip": {

|

||||

"type": "ip"

|

||||

},

|

||||

"response_code": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

}

|

||||

}

|

||||

},

|

||||

"type": "object"

|

||||

},

|

||||

"header_flags": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"id": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"op_code": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"question": {

|

||||

"properties": {

|

||||

"class": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"name": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"registered_domain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"subdomain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"top_level_domain": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

}

|

||||

},

|

||||

"resolved_ip": {

|

||||

"type": "ip"

|

||||

},

|

||||

"response_code": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"type": {

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

@@ -15,6 +15,13 @@

|

||||

"ignore_above": 1024,

|

||||

"type": "keyword"

|

||||

},

|

||||

"bytes": {

|

||||

"properties": {

|

||||

"missing": {

|

||||

"type": "long"

|

||||

}

|

||||

}

|

||||

},

|

||||

"code_signature": {

|

||||

"properties": {

|

||||

"digest_algorithm": {

|

||||

|

||||

@@ -32,7 +32,7 @@ global:

|

||||

readonly: True

|

||||

advanced: True

|

||||

url_base:

|

||||

description: Used for handling of authentication cookies.

|

||||

description: The base URL for the Security Onion Console. Must be accessible by all nodes in the grid, as well as all analysts. Also used for handling of authentication cookies. Can be an IP address or a hostname/FQDN. Do not include protocol (http/https) or port number.

|

||||

global: True

|

||||

airgap:

|

||||

description: Airgapped systems do not have network connectivity to the internet. This setting represents how this grid was configured during initial setup. While it is technically possible to manually switch systems between airgap and non-airgap, there are some nuances and additional steps involved. For that reason this setting is marked read-only. Contact your support representative for guidance if there is a need to change this setting.

|

||||

|

||||

File diff suppressed because one or more lines are too long

@@ -1,2 +1,2 @@

|

||||

https://repo.securityonion.net/file/so-repo/prod/2.4/oracle/9

|

||||

https://repo-alt.securityonion.net/prod/2.4/oracle/9

|

||||

https://repo.securityonion.net/file/so-repo/prod/3/oracle/9

|

||||

https://repo-alt.securityonion.net/prod/3/oracle/9

|

||||

@@ -4,7 +4,7 @@

|

||||

# Elastic License 2.0.

|

||||

|

||||

{# Managed elasticsearch/soc_elasticsearch.yaml file for adding integration configuration items to UI #}

|

||||

{% set managed_integrations = salt['pillar.get']('elasticsearch:managed_integrations', []) %}

|

||||

{% set managed_integrations = salt['pillar.get']('manager:managed_integrations', []) %}

|

||||

{% if managed_integrations and salt['file.file_exists']('/opt/so/state/esfleet_package_components.json') and salt['file.file_exists']('/opt/so/state/esfleet_component_templates.json') %}

|

||||

{% from 'elasticfleet/integration-defaults.map.jinja' import ADDON_INTEGRATION_DEFAULTS %}

|

||||

{% set addon_integration_keys = ADDON_INTEGRATION_DEFAULTS.keys() %}

|

||||

|

||||

@@ -78,3 +78,10 @@ manager:

|

||||

advanced: True

|

||||

helpLink: elastic-fleet.html

|

||||

forcedType: int

|

||||

managed_integrations:

|

||||

description: List of integrations to add into SOC config UI. Enter the full or partial integration name. Eg. 1password, 1pass

|

||||

forcedType: "[]string"

|

||||

multiline: True

|

||||

global: True

|

||||

advanced: True

|

||||

helpLink: elasticsearch.html

|

||||

@@ -143,7 +143,7 @@ show_usage() {

|

||||

echo " -v Show verbose output (files changed/added/deleted)"

|

||||

echo " -vv Show very verbose output (includes file diffs)"

|

||||

echo " --test Test mode - show what would change without making changes"

|

||||

echo " branch Git branch to checkout (default: 2.4/main)"

|

||||

echo " branch Git branch to checkout (default: 3/main)"

|

||||

echo ""

|

||||

echo "Examples:"

|

||||

echo " $0 # Normal operation"

|

||||

@@ -193,7 +193,7 @@ done

|

||||

|

||||

# Set default branch if not provided

|

||||

if [ -z "$BRANCH" ]; then

|

||||

BRANCH=2.4/main

|

||||

BRANCH=3/main

|

||||

fi

|

||||

|

||||

got_root

|

||||

|

||||

@@ -9,6 +9,7 @@ import os
 import sys
 import time
 import yaml
+import json
 
 lockFile = "/tmp/so-yaml.lock"
 

@@ -16,19 +17,24 @@ lockFile = "/tmp/so-yaml.lock"
 def showUsage(args):
     print('Usage: {} <COMMAND> <YAML_FILE> [ARGS...]'.format(sys.argv[0]), file=sys.stderr)
     print(' General commands:', file=sys.stderr)
-    print(' append - Append a list item to a yaml key, if it exists and is a list. Requires KEY and LISTITEM args.', file=sys.stderr)
-    print(' removelistitem - Remove a list item from a yaml key, if it exists and is a list. Requires KEY and LISTITEM args.', file=sys.stderr)
-    print(' add - Add a new key and set its value. Fails if key already exists. Requires KEY and VALUE args.', file=sys.stderr)
-    print(' get - Displays (to stdout) the value stored in the given key. Requires KEY arg.', file=sys.stderr)
-    print(' remove - Removes a yaml key, if it exists. Requires KEY arg.', file=sys.stderr)
-    print(' replace - Replaces (or adds) a new key and set its value. Requires KEY and VALUE args.', file=sys.stderr)
-    print(' help - Prints this usage information.', file=sys.stderr)
+    print(' append - Append a list item to a yaml key, if it exists and is a list. Requires KEY and LISTITEM args.', file=sys.stderr)
+    print(' appendlistobject - Append an object to a yaml list key. Requires KEY and JSON_OBJECT args.', file=sys.stderr)
+    print(' removelistitem - Remove a list item from a yaml key, if it exists and is a list. Requires KEY and LISTITEM args.', file=sys.stderr)
+    print(' replacelistobject - Replace a list object based on a condition. Requires KEY, CONDITION_FIELD, CONDITION_VALUE, and JSON_OBJECT args.', file=sys.stderr)
+    print(' add - Add a new key and set its value. Fails if key already exists. Requires KEY and VALUE args.', file=sys.stderr)
+    print(' get - Displays (to stdout) the value stored in the given key. Requires KEY arg.', file=sys.stderr)
+    print(' remove - Removes a yaml key, if it exists. Requires KEY arg.', file=sys.stderr)
+    print(' replace - Replaces (or adds) a new key and set its value. Requires KEY and VALUE args.', file=sys.stderr)
+    print(' help - Prints this usage information.', file=sys.stderr)
     print('', file=sys.stderr)
     print(' Where:', file=sys.stderr)
-    print(' YAML_FILE - Path to the file that will be modified. Ex: /opt/so/conf/service/conf.yaml', file=sys.stderr)
-    print(' KEY - YAML key, does not support \' or " characters at this time. Ex: level1.level2', file=sys.stderr)
-    print(' VALUE - Value to set for a given key. Can be a literal value or file:<path> to load from a YAML file.', file=sys.stderr)
-    print(' LISTITEM - Item to append to a given key\'s list value. Can be a literal value or file:<path> to load from a YAML file.', file=sys.stderr)
+    print(' YAML_FILE - Path to the file that will be modified. Ex: /opt/so/conf/service/conf.yaml', file=sys.stderr)
+    print(' KEY - YAML key, does not support \' or " characters at this time. Ex: level1.level2', file=sys.stderr)
+    print(' VALUE - Value to set for a given key. Can be a literal value or file:<path> to load from a YAML file.', file=sys.stderr)
+    print(' LISTITEM - Item to append to a given key\'s list value. Can be a literal value or file:<path> to load from a YAML file.', file=sys.stderr)
+    print(' JSON_OBJECT - JSON string representing an object to append to a list.', file=sys.stderr)
+    print(' CONDITION_FIELD - Field name to match in list items (e.g., "name").', file=sys.stderr)
+    print(' CONDITION_VALUE - Value to match for the condition field.', file=sys.stderr)
     sys.exit(1)
 
 

@@ -122,6 +128,52 @@ def append(args):

|

||||

return 0

|

||||

|

||||

|

||||

def appendListObjectItem(content, key, listObject):

|

||||

pieces = key.split(".", 1)

|

||||

if len(pieces) > 1:

|

||||

appendListObjectItem(content[pieces[0]], pieces[1], listObject)

|

||||

else:

|

||||

try:

|

||||

if not isinstance(content[key], list):

|

||||

raise AttributeError("Value is not a list")

|

||||

content[key].append(listObject)

|

||||

except AttributeError:

|

||||

print("The existing value for the given key is not a list. No action was taken on the file.", file=sys.stderr)

|

||||

return 1

|

||||

except KeyError:

|

||||

print("The key provided does not exist. No action was taken on the file.", file=sys.stderr)

|

||||

return 1

|

||||

|

||||

|

||||

def appendlistobject(args):

|

||||

if len(args) != 3:

|

||||

print('Missing filename, key arg, or JSON object to append', file=sys.stderr)

|

||||

showUsage(None)

|

||||

return 1

|

||||

|

||||

filename = args[0]

|

||||

key = args[1]

|

||||

jsonString = args[2]

|

||||

|

||||

try:

|

||||

# Parse the JSON string into a Python object (verified to be a dictionary below)

|

||||

listObject = json.loads(jsonString)

|

||||

except json.JSONDecodeError as e:

|

||||

print(f'Invalid JSON string: {e}', file=sys.stderr)

|

||||

return 1

|

||||

|

||||

# Verify that the parsed content is a dictionary (object)

|

||||

if not isinstance(listObject, dict):

|

||||

print('The JSON string must represent an object (dictionary), not an array or primitive value.', file=sys.stderr)

|

||||

return 1

|

||||

|

||||

content = loadYaml(filename)

|

||||

appendListObjectItem(content, key, listObject)

|

||||

writeYaml(filename, content)

|

||||

|

||||

return 0

|

||||

|

||||

|

||||

def removelistitem(args):

|

||||

if len(args) != 3:

|

||||

print('Missing filename, key arg, or list item to remove', file=sys.stderr)

|

||||
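The `appendlistobject` path added in this hunk can be sketched in memory, without `loadYaml`/`writeYaml`. This is a minimal illustration under assumptions, not the code from `so-yaml.py`: the function name `append_list_object` and the sample document are hypothetical, and unlike the hunk above it returns a status code for every failure case.

```python
import json


def append_list_object(content, key, json_string):
    """Append a JSON object to the list stored at a dotted key.

    Hypothetical helper: returns 0 on success and 1 on any failure.
    """
    try:
        obj = json.loads(json_string)
    except json.JSONDecodeError:
        return 1
    # Only JSON objects (dicts) are accepted, not arrays or primitives.
    if not isinstance(obj, dict):
        return 1

    # Walk the parent keys, then look up the final key.
    *parents, leaf = key.split(".")
    node = content
    try:
        for part in parents:
            node = node[part]
        target = node[leaf]
    except (KeyError, TypeError):
        return 1

    if not isinstance(target, list):
        return 1
    target.append(obj)
    return 0


# Sample data mirrors the shape used in the nested test case.
doc = {"key1": {"child1": [{"name": "a", "id": 1}]}, "key2": False}
status = append_list_object(doc, "key1.child1", '{"name": "b", "id": 2}')
print(status, doc["key1"]["child1"])
```

Returning a status from every branch lets a caller skip the file write when nothing was appended.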

@@ -139,6 +191,68 @@ def removelistitem(args):

|

||||

return 0

|

||||

|

||||

|

||||

def replaceListObjectByCondition(content, key, conditionField, conditionValue, newObject):

|

||||

pieces = key.split(".", 1)

|

||||

if len(pieces) > 1:

|

||||

return replaceListObjectByCondition(content[pieces[0]], pieces[1], conditionField, conditionValue, newObject)

|

||||

else:

|

||||

try:

|

||||

if not isinstance(content[key], list):

|

||||

raise AttributeError("Value is not a list")

|

||||

|

||||

# Find and replace the item that matches the condition

|

||||

found = False

|

||||

for i, item in enumerate(content[key]):

|

||||

if isinstance(item, dict) and item.get(conditionField) == conditionValue:

|

||||

content[key][i] = newObject

|

||||

found = True

|

||||

break

|

||||

|

||||

if not found:

|

||||

print(f"No list item found with {conditionField}={conditionValue}. No action was taken on the file.", file=sys.stderr)

|

||||

return 1

|

||||

|

||||

except AttributeError:

|

||||

print("The existing value for the given key is not a list. No action was taken on the file.", file=sys.stderr)

|

||||

return 1

|

||||

except KeyError:

|

||||

print("The key provided does not exist. No action was taken on the file.", file=sys.stderr)

|

||||

return 1

|

||||

|

||||

|

||||

def replacelistobject(args):

|

||||

if len(args) != 5:

|

||||

print('Missing filename, key arg, condition field, condition value, or JSON object', file=sys.stderr)

|

||||

showUsage(None)

|

||||

return 1

|

||||

|

||||

filename = args[0]

|

||||

key = args[1]

|

||||

conditionField = args[2]

|

||||

conditionValue = args[3]

|

||||

jsonString = args[4]

|

||||

|

||||

try:

|

||||

# Parse the JSON string into a Python object (verified to be a dictionary below)

|

||||

newObject = json.loads(jsonString)

|

||||

except json.JSONDecodeError as e:

|

||||

print(f'Invalid JSON string: {e}', file=sys.stderr)

|

||||

return 1

|

||||

|

||||

# Verify that the parsed content is a dictionary (object)

|

||||

if not isinstance(newObject, dict):

|

||||

print('The JSON string must represent an object (dictionary), not an array or primitive value.', file=sys.stderr)

|

||||

return 1

|

||||

|

||||

content = loadYaml(filename)

|

||||

result = replaceListObjectByCondition(content, key, conditionField, conditionValue, newObject)

|

||||

|

||||

if result != 1:

|

||||

writeYaml(filename, content)

|

||||

|

||||

return result if result is not None else 0

|

||||

|

||||

|

||||

def addKey(content, key, value):

|

||||

pieces = key.split(".", 1)

|

||||

if len(pieces) > 1:

|

||||
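One consequence of the matching logic in `replaceListObjectByCondition` is worth illustrating: the condition value arrives from the command line as a string and is compared with `==`, so a YAML value stored as an unquoted number would not match. The sketch below is a simplified stand-in (the name `replace_by_condition` is hypothetical) that operates directly on a list rather than a dotted key.

```python
def replace_by_condition(items, field, value, new_object):
    """Replace the first dict in items whose field equals value.

    Hypothetical helper: returns True if an item was replaced.
    """
    for i, item in enumerate(items):
        if isinstance(item, dict) and item.get(field) == value:
            items[i] = new_object
            return True
    return False


# CLI arguments are strings, so the condition value is compared as a string.
quoted = [{"id": "1", "status": "active"}]    # YAML: id: '1'
unquoted = [{"id": 1, "status": "active"}]    # YAML: id: 1

r1 = replace_by_condition(quoted, "id", "1", {"id": "1", "status": "updated"})
r2 = replace_by_condition(unquoted, "id", "1", {"id": 1, "status": "updated"})
print(r1, r2)  # True False
```

This is presumably why the tests labelled as numeric condition values store the ids as quoted strings.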

@@ -229,7 +343,7 @@ def get(args):

|

||||

content = loadYaml(filename)

|

||||

output = getKeyValue(content, key)

|

||||

if output is None:

|

||||

print("Not found", file=sys.stderr)

|

||||

print(f"Key '{key}' not found by so-yaml.py", file=sys.stderr)

|

||||

return 2

|

||||

|

||||

print(yaml.safe_dump(output))

|

||||

@@ -247,7 +361,9 @@ def main():

|

||||

"help": showUsage,

|

||||

"add": add,

|

||||

"append": append,

|

||||

"appendlistobject": appendlistobject,

|

||||

"removelistitem": removelistitem,

|

||||

"replacelistobject": replacelistobject,

|

||||

"get": get,

|

||||

"remove": remove,

|

||||

"replace": replace,

|

||||

|

||||

@@ -580,3 +580,340 @@ class TestRemoveListItem(unittest.TestCase):

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The existing value for the given key is not a list. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

|

||||

class TestAppendListObject(unittest.TestCase):

|

||||

|

||||

def test_appendlistobject_missing_arg(self):

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "help"]

|

||||

soyaml.appendlistobject(["file", "key"])

|

||||

sysmock.assert_called()

|

||||

self.assertIn("Missing filename, key arg, or JSON object to append", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123 }, key2: [{name: item1, value: 10}]}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"name": "item2", "value": 20}'

|

||||

soyaml.appendlistobject([filename, "key2", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n child1: 123\nkey2:\n- name: item1\n value: 10\n- name: item2\n value: 20\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_appendlistobject_nested(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: [{name: a, id: 1}], child2: abc }, key2: false}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"name": "b", "id": 2}'

|

||||

soyaml.appendlistobject([filename, "key1.child1", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

# YAML doesn't guarantee key order in dictionaries, so check for content

|

||||

self.assertIn("child1:", actual)

|

||||

self.assertIn("name: a", actual)

|

||||

self.assertIn("id: 1", actual)

|

||||

self.assertIn("name: b", actual)

|

||||

self.assertIn("id: 2", actual)

|

||||

self.assertIn("child2: abc", actual)

|

||||

self.assertIn("key2: false", actual)

|

||||

|

||||

def test_appendlistobject_nested_deep(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123, child2: { deep1: 45, deep2: [{x: 1}] } }, key2: false}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"x": 2, "y": 3}'

|

||||

soyaml.appendlistobject([filename, "key1.child2.deep2", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n child1: 123\n child2:\n deep1: 45\n deep2:\n - x: 1\n - x: 2\n y: 3\nkey2: false\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_appendlistobject_invalid_json(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

result = soyaml.appendlistobject([filename, "key1", "{invalid json"])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("Invalid JSON string:", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_not_dict(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

# Try to append an array instead of an object

|

||||

result = soyaml.appendlistobject([filename, "key1", "[1, 2, 3]"])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("The JSON string must represent an object (dictionary)", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_not_dict_primitive(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

# Try to append a primitive value

|

||||

result = soyaml.appendlistobject([filename, "key1", "123"])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("The JSON string must represent an object (dictionary)", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_key_noexist(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "appendlistobject", filename, "key2", '{"name": "item2"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The key provided does not exist. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_key_noexist_deep(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: [{name: a}] }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "appendlistobject", filename, "key1.child2", '{"name": "b"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The key provided does not exist. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_key_nonlist(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123 }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "appendlistobject", filename, "key1", '{"name": "item"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The existing value for the given key is not a list. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_appendlistobject_key_nonlist_deep(self):

|

||||

filename = "/tmp/so-yaml_test-appendlistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123, child2: { deep1: 45 } }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "appendlistobject", filename, "key1.child2.deep1", '{"name": "item"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The existing value for the given key is not a list. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

|

||||

class TestReplaceListObject(unittest.TestCase):

|

||||

|

||||

def test_replacelistobject_missing_arg(self):

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "help"]

|

||||

soyaml.replacelistobject(["file", "key", "field"])

|

||||

sysmock.assert_called()

|

||||

self.assertIn("Missing filename, key arg, condition field, condition value, or JSON object", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1, value: 10}, {name: item2, value: 20}]}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"name": "item2", "value": 25, "extra": "field"}'

|

||||

soyaml.replacelistobject([filename, "key1", "name", "item2", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n- name: item1\n value: 10\n- extra: field\n name: item2\n value: 25\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_replacelistobject_nested(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: [{id: '1', status: active}, {id: '2', status: inactive}] }}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"id": "2", "status": "active", "updated": true}'

|

||||

soyaml.replacelistobject([filename, "key1.child1", "id", "2", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n child1:\n - id: '1'\n status: active\n - id: '2'\n status: active\n updated: true\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_replacelistobject_nested_deep(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123, child2: { deep1: 45, deep2: [{name: a, val: 1}, {name: b, val: 2}] } }}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"name": "b", "val": 99}'

|

||||

soyaml.replacelistobject([filename, "key1.child2.deep2", "name", "b", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n child1: 123\n child2:\n deep1: 45\n deep2:\n - name: a\n val: 1\n - name: b\n val: 99\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_replacelistobject_invalid_json(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

result = soyaml.replacelistobject([filename, "key1", "name", "item1", "{invalid json"])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("Invalid JSON string:", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_not_dict(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

result = soyaml.replacelistobject([filename, "key1", "name", "item1", "[1, 2, 3]"])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("The JSON string must represent an object (dictionary)", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_condition_not_found(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1, value: 10}, {name: item2, value: 20}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

json_obj = '{"name": "item3", "value": 30}'

|

||||

result = soyaml.replacelistobject([filename, "key1", "name", "item3", json_obj])

|

||||

self.assertEqual(result, 1)

|

||||

self.assertIn("No list item found with name=item3", mock_stderr.getvalue())

|

||||

|

||||

# Verify file was not modified

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

self.assertIn("item1", actual)

|

||||

self.assertIn("item2", actual)

|

||||

self.assertNotIn("item3", actual)

|

||||

|

||||

def test_replacelistobject_key_noexist(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1}]}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "replacelistobject", filename, "key2", "name", "item1", '{"name": "item2"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The key provided does not exist. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_key_noexist_deep(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: [{name: a}] }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "replacelistobject", filename, "key1.child2", "name", "a", '{"name": "b"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The key provided does not exist. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_key_nonlist(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123 }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "replacelistobject", filename, "key1", "name", "item", '{"name": "item"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The existing value for the given key is not a list. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_key_nonlist_deep(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: { child1: 123, child2: { deep1: 45 } }}")

|

||||

file.close()

|

||||

|

||||

with patch('sys.exit', new=MagicMock()) as sysmock:

|

||||

with patch('sys.stderr', new=StringIO()) as mock_stderr:

|

||||

sys.argv = ["cmd", "replacelistobject", filename, "key1.child2.deep1", "name", "item", '{"name": "item"}']

|

||||

soyaml.main()

|

||||

sysmock.assert_called()

|

||||

self.assertEqual("The existing value for the given key is not a list. No action was taken on the file.\n", mock_stderr.getvalue())

|

||||

|

||||

def test_replacelistobject_string_condition_value(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{name: item1, value: 10}, {name: item2, value: 20}]}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"name": "item1", "value": 15}'

|

||||

soyaml.replacelistobject([filename, "key1", "name", "item1", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n- name: item1\n value: 15\n- name: item2\n value: 20\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

def test_replacelistobject_numeric_condition_value(self):

|

||||

filename = "/tmp/so-yaml_test-replacelistobject.yaml"

|

||||

file = open(filename, "w")

|

||||

file.write("{key1: [{id: '1', status: active}, {id: '2', status: inactive}]}")

|

||||

file.close()

|

||||

|

||||

json_obj = '{"id": "1", "status": "updated"}'

|

||||

soyaml.replacelistobject([filename, "key1", "id", "1", json_obj])

|

||||

|

||||

file = open(filename, "r")

|

||||

actual = file.read()

|

||||

file.close()

|

||||

|

||||

expected = "key1:\n- id: '1'\n status: updated\n- id: '2'\n status: inactive\n"

|

||||

self.assertEqual(actual, expected)

|

||||

|

||||

@@ -52,7 +52,7 @@ check_err() {

|

||||

;;

|

||||

28)

|

||||

echo 'No space left on device'

|

||||

echo "Likely ran out of space on disk, please review hardware requirements for Security Onion: $DOC_BASE_URL/hardware.html"

|

||||

echo "Likely ran out of space on disk, please review hardware requirements for Security Onion: $DOC_BASE_URL/hardware"

|

||||

;;

|

||||

30)

|

||||

echo 'Read-only file system'

|

||||

@@ -701,6 +701,21 @@ post_to_2.4.210() {

|

||||

echo "Regenerating Elastic Agent Installers"

|

||||

/sbin/so-elastic-agent-gen-installers

|

||||

|

||||

# migrate elasticsearch:managed_integrations pillar to manager:managed_integrations

|

||||

if managed_integrations=$(/usr/sbin/so-yaml.py get /opt/so/saltstack/local/pillar/elasticsearch/soc_elasticsearch.sls elasticsearch.managed_integrations 2>/dev/null); then

|

||||

local managed_integrations_old_pillar="/tmp/elasticsearch-managed_integrations.yaml"

|

||||

|

||||

echo "Migrating managed_integrations pillar"

|

||||

echo -e "$managed_integrations" > "$managed_integrations_old_pillar"

|

||||

|

||||

/usr/sbin/so-yaml.py add /opt/so/saltstack/local/pillar/manager/soc_manager.sls manager.managed_integrations file:$managed_integrations_old_pillar > /dev/null 2>&1

|

||||

|

||||

/usr/sbin/so-yaml.py remove /opt/so/saltstack/local/pillar/elasticsearch/soc_elasticsearch.sls elasticsearch.managed_integrations

|

||||

fi

|

||||

|

||||

# Remove so-rule-update script left behind by the idstools removal in 2.4.200

|

||||

rm -f /usr/sbin/so-rule-update

|

||||

|

||||

POSTVERSION=2.4.210

|

||||

}

|

||||

|

||||
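The pillar migration in `post_to_2.4.210` follows a get, add, remove sequence. A rough in-memory sketch of that sequence on plain dictionaries is shown below. The helper names, the sample integration names, and the assumption that `add` creates missing parent keys are all illustrative, not taken from `so-yaml.py`.

```python
def get_key(content, dotted):
    """Return the value at a dotted key, or None if any part is missing."""
    node = content
    for part in dotted.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node


def add_key(content, dotted, value):
    """Add a new key; return 1 without changes if it already exists."""
    *parents, leaf = dotted.split(".")
    node = content
    for part in parents:
        # Assumption: missing parent keys are created as empty mappings.
        node = node.setdefault(part, {})
    if leaf in node:
        return 1
    node[leaf] = value
    return 0


def remove_key(content, dotted):
    """Remove a dotted key if it exists."""
    *parents, leaf = dotted.split(".")
    node = content
    for part in parents:
        node = node.get(part, {})
    node.pop(leaf, None)


def migrate(old_pillar, new_pillar):
    """Move elasticsearch.managed_integrations to manager.managed_integrations."""
    value = get_key(old_pillar, "elasticsearch.managed_integrations")
    if value is None:
        return False  # nothing to migrate, mirroring the failed `get`
    add_key(new_pillar, "manager.managed_integrations", value)
    # As in the shell code, the old key is removed regardless of the add result.
    remove_key(old_pillar, "elasticsearch.managed_integrations")
    return True


# Sample values are made up for illustration.
old = {"elasticsearch": {"managed_integrations": ["endpoint", "osquery"], "other": 1}}
new = {"manager": {}}
migrated = migrate(old, new)
print(migrated, old, new)
```

Because `add` fails when the key already exists, rerunning the migration would leave an existing `manager.managed_integrations` value untouched.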

@@ -988,7 +1003,9 @@ up_to_2.4.210() {

|

||||

# Elastic Update for this release, so download Elastic Agent files

|

||||

determine_elastic_agent_upgrade

|

||||

create_ca_pillar

|

||||

|

||||

# This state is used to deal with the breaking change introduced in 3006.17 - https://docs.saltproject.io/en/3006/topics/releases/3006.17.html

|

||||

# This is the only way the state is called so we can use concurrent=True

|

||||

salt-call state.apply salt.master.add_minimum_auth_version --file-root=$UPDATE_DIR/salt --local concurrent=True

|

||||

INSTALLEDVERSION=2.4.210

|

||||

}

|

||||

|

||||

@@ -1036,7 +1053,7 @@ used and enables informed prioritization of future development.

|

||||

|

||||

Adjust this setting at any time via the SOC Configuration screen.

|

||||

|

||||

Documentation: https://docs.securityonion.net/en/2.4/telemetry.html

|

||||

Documentation: https://securityonion.net/docs/telemetry

|

||||

|

||||

ASSIST_EOF

|

||||

|

||||

@@ -1184,7 +1201,7 @@ suricata_idstools_removal_pre() {

|

||||

install -d -o 939 -g 939 -m 755 /opt/so/conf/soc/fingerprints

|

||||

install -o 939 -g 939 -m 644 /dev/null /opt/so/conf/soc/fingerprints/suricataengine.syncBlock

|

||||

cat > /opt/so/conf/soc/fingerprints/suricataengine.syncBlock << EOF

|

||||

Suricata ruleset sync is blocked until this file is removed. **CRITICAL** Make sure that you have manually added any custom Suricata rulesets via SOC config before removing this file - review the documentation for more details: https://docs.securityonion.net/en/2.4/nids.html#sync-block

|

||||

Suricata ruleset sync is blocked until this file is removed. **CRITICAL** Make sure that you have manually added any custom Suricata rulesets via SOC config before removing this file - review the documentation for more details: https://securityonion.net/docs/nids

|

||||

EOF

|

||||

|

||||

# Remove possible symlink & create salt local rules dir

|

||||

@@ -1750,7 +1767,7 @@ verify_es_version_compatibility() {

|

||||

fi

|

||||

|

||||

echo -e "\n##############################################################################################################################\n"

|

||||

echo "A previously required intermediate Elasticsearch upgrade was detected. Verifying that all Searchnodes/Heavynodes have successfully upgraded Elasticsearch to $es_required_version_statefile_value before proceeding with soup to avoid potential data loss!"

|

||||

echo "A previously required intermediate Elasticsearch upgrade was detected. Verifying that all Searchnodes/Heavynodes have successfully upgraded Elasticsearch to $es_required_version_statefile_value before proceeding with soup to avoid potential data loss! This command can take up to an hour to complete."

|

||||

timeout --foreground 4000 bash "$es_verification_script" "$es_required_version_statefile_value" "$statefile"

|

||||

if [[ $? -ne 0 ]]; then

|

||||

echo -e "\n!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!\n"

|

||||

@@ -1808,6 +1825,25 @@ verify_es_version_compatibility() {

|

||||

|

||||

}

|

||||

|

||||

wait_for_salt_minion_with_restart() {

|

||||

local minion="$1"

|

||||

local max_wait="${2:-60}"

|

||||

local interval="${3:-3}"

|

||||

local logfile="$4"

|

||||

|

||||

wait_for_salt_minion "$minion" "$max_wait" "$interval" "$logfile"

|

||||

local result=$?

|

||||

|

||||

if [[ $result -ne 0 ]]; then

|

||||

echo "$(date '+%a %d %b %Y %H:%M:%S.%6N') - salt-minion not ready, attempting restart..."

|

||||

systemctl_func "restart" "salt-minion"

|

||||