mirror of

https://github.com/Security-Onion-Solutions/securityonion.git

synced 2026-04-01 10:21:51 +02:00

Merge pull request #14778 from Security-Onion-Solutions/vlb2

hardware virtualization

4

.github/.gitleaks.toml

vendored

@@ -536,11 +536,11 @@ secretGroup = 4

|

||||

|

||||

[allowlist]

|

||||

description = "global allow lists"

|

||||

-regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''']
+regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''', '''.*:.*StrelkaHexDump.*''', '''.*:.*PLACEHOLDER.*''', '''ssl_.*password''', '''integration_key\s=\s"so-logs-"''']

|

||||

paths = [

|

||||

'''gitleaks.toml''',

|

||||

'''(.*?)(jpg|gif|doc|pdf|bin|svg|socket)$''',

|

||||

'''(go.mod|go.sum)$''',

|

||||

|

||||

'''salt/nginx/files/enterprise-attack.json'''

|

||||

'''(.*?)whl$

|

||||

]
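To get a feel for what the longer `regexes` line lets through, the sketch below runs its patterns with Python's `re` module. This is only an approximation: gitleaks evaluates allowlist patterns with Go's regexp engine, and what it matches them against depends on the gitleaks version and configuration. The helper name `is_allowlisted` and the sample strings are invented for illustration.

```python
import re

# Patterns copied from the longer `regexes` line in the hunk above.
ALLOWLIST = [
    r"219-09-9999",
    r"078-05-1120",
    r"(9[0-9]{2}|666)-\d{2}-\d{4}",
    r"RPM-GPG-KEY.*",
    r".*:.*StrelkaHexDump.*",
    r".*:.*PLACEHOLDER.*",
    r"ssl_.*password",
    r'integration_key\s=\s"so-logs-"',
]
COMPILED = [re.compile(pattern) for pattern in ALLOWLIST]


def is_allowlisted(text):
    """Return True if any allowlist pattern occurs anywhere in text.

    Hypothetical helper: it approximates an allowlist check and is not
    part of gitleaks.
    """
    return any(rx.search(text) for rx in COMPILED)


if __name__ == "__main__":
    # Sample strings are made up for this sketch.
    samples = [
        "ssl_keystore_password: changeme",
        'integration_key = "so-logs-"',
        "api_token: PLACEHOLDER",
        "123-45-6789",
    ]
    for sample in samples:
        print(sample, "->", is_allowlisted(sample))
```

Patterns such as `ssl_.*password` are broad, so it can be worth running a few real configuration lines through a check like this before widening an allowlist.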

|

||||

|

||||

199

.github/DISCUSSION_TEMPLATE/2-4.yml

vendored

Normal file

@@ -0,0 +1,199 @@

|

||||

body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Version

|

||||

description: Which version of Security Onion 2.4.x are you asking about?

|

||||

options:

|

||||

-

|

||||

- 2.4.10

|

||||

- 2.4.20

|

||||

- 2.4.30

|

||||

- 2.4.40

|

||||

- 2.4.50

|

||||

- 2.4.60

|

||||

- 2.4.70

|

||||

- 2.4.80

|

||||

- 2.4.90

|

||||

- 2.4.100

|

||||

- 2.4.110

|

||||

- 2.4.111

|

||||

- 2.4.120

|

||||

- 2.4.130

|

||||

- 2.4.140

|

||||

- 2.4.141

|

||||

- 2.4.150

|

||||

- 2.4.160

|

||||

- 2.4.170

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Method

|

||||

description: How did you install Security Onion?

|

||||

options:

|

||||

-

|

||||

- Security Onion ISO image

|

||||

- Cloud image (Amazon, Azure, Google)

|

||||

- Network installation on Red Hat derivative like Oracle, Rocky, Alma, etc. (unsupported)

|

||||

- Network installation on Ubuntu (unsupported)

|

||||

- Network installation on Debian (unsupported)

|

||||

- Other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Description

|

||||

description: >

|

||||

Is this discussion about installation, configuration, upgrading, or other?

|

||||

options:

|

||||

-

|

||||

- installation

|

||||

- configuration

|

||||

- upgrading

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Installation Type

|

||||

description: >

|

||||

When you installed, did you choose Import, Eval, Standalone, Distributed, or something else?

|

||||

options:

|

||||

-

|

||||

- Import

|

||||

- Eval

|

||||

- Standalone

|

||||

- Distributed

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Location

|

||||

description: >

|

||||

Is this deployment in the cloud, on-prem with Internet access, or airgap?

|

||||

options:

|

||||

-

|

||||

- cloud

|

||||

- on-prem with Internet access

|

||||

- airgap

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Hardware Specs

|

||||

description: >

|

||||

Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://docs.securityonion.net/en/2.4/hardware.html?

|

||||

options:

|

||||

-

|

||||

- Meets minimum requirements

|

||||

- Exceeds minimum requirements

|

||||

- Does not meet minimum requirements

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: CPU

|

||||

description: How many CPU cores do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: RAM

|

||||

description: How much RAM do you have?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /

|

||||

description: How much storage do you have for the / partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

attributes:

|

||||

label: Storage for /nsm

|

||||

description: How much storage do you have for the /nsm partition?

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Collection

|

||||

description: >

|

||||

Are you collecting network traffic from a tap or span port?

|

||||

options:

|

||||

-

|

||||

- tap

|

||||

- span port

|

||||

- other (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Network Traffic Speeds

|

||||

description: >

|

||||

How much network traffic are you monitoring?

|

||||

options:

|

||||

-

|

||||

- Less than 1Gbps

|

||||

- 1Gbps to 10Gbps

|

||||

- more than 10Gbps

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Status

|

||||

description: >

|

||||

Does SOC Grid show all services on all nodes as running OK?

|

||||

options:

|

||||

-

|

||||

- Yes, all services on all nodes are running OK

|

||||

- No, one or more services are failed (please provide detail below)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Salt Status

|

||||

description: >

|

||||

Do you get any failures when you run "sudo salt-call state.highstate"?

|

||||

options:

|

||||

-

|

||||

- Yes, there are salt failures (please provide detail below)

|

||||

- No, there are no failures

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

attributes:

|

||||

label: Logs

|

||||

description: >

|

||||

Are there any additional clues in /opt/so/log/?

|

||||

options:

|

||||

-

|

||||

- Yes, there are additional clues in /opt/so/log/ (please provide detail below)

|

||||

- No, there are no additional clues

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: Detail

|

||||

description: Please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and then provide detailed information to help us help you.

|

||||

placeholder: |-

|

||||

STOP! Before typing, please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 in their entirety!

|

||||

|

||||

If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support

|

||||

validations:

|

||||

required: true

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: Guidelines

|

||||

options:

|

||||

- label: I have read the discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and assert that I have followed the guidelines.

|

||||

required: true

|

||||

12

.github/ISSUE_TEMPLATE

vendored

@@ -1,12 +0,0 @@

|

||||

PLEASE STOP AND READ THIS INFORMATION!

|

||||

|

||||

If you are creating an issue just to ask a question, you will likely get faster and better responses by posting to our discussions forum instead:

|

||||

https://securityonion.net/discuss

|

||||

|

||||

If you think you have found a possible bug or are observing a behavior that you weren't expecting, use the discussion forum to start a conversation about it instead of creating an issue.

|

||||

|

||||

If you are very familiar with the latest version of the product and are confident you have found a bug in Security Onion, you can continue with creating an issue here, but please make sure you have done the following:

|

||||

- duplicated the issue on a fresh installation of the latest version

|

||||

- provide information about your system and how you installed Security Onion

|

||||

- include relevant log files

|

||||

- include reproduction steps

|

||||

38

.github/ISSUE_TEMPLATE/bug_report.md

vendored

Normal file

@@ -0,0 +1,38 @@

|

||||

---

|

||||

name: Bug report

|

||||

about: This option is for experienced community members to report a confirmed, reproducible bug

|

||||

title: ''

|

||||

labels: ''

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

PLEASE STOP AND READ THIS INFORMATION!

|

||||

|

||||

If you are creating an issue just to ask a question, you will likely get faster and better responses by posting to our discussions forum at https://securityonion.net/discuss.

|

||||

|

||||

If you think you have found a possible bug or are observing a behavior that you weren't expecting, use the discussion forum at https://securityonion.net/discuss to start a conversation about it instead of creating an issue.

|

||||

|

||||

If you are very familiar with the latest version of the product and are confident you have found a bug in Security Onion, you can continue with creating an issue here, but please make sure you have done the following:

|

||||

- duplicated the issue on a fresh installation of the latest version

|

||||

- provide information about your system and how you installed Security Onion

|

||||

- include relevant log files

|

||||

- include reproduction steps

|

||||

|

||||

**Describe the bug**

|

||||

A clear and concise description of what the bug is.

|

||||

|

||||

**To Reproduce**

|

||||

Steps to reproduce the behavior:

|

||||

1. Go to '...'

|

||||

2. Click on '....'

|

||||

3. Scroll down to '....'

|

||||

4. See error

|

||||

|

||||

**Expected behavior**

|

||||

A clear and concise description of what you expected to happen.

|

||||

|

||||

**Screenshots**

|

||||

If applicable, add screenshots to help explain your problem.

|

||||

|

||||

**Additional context**

|

||||

Add any other context about the problem here.

|

||||

5

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file

@@ -0,0 +1,5 @@

|

||||

blank_issues_enabled: false

|

||||

contact_links:

|

||||

- name: Security Onion Discussions

|

||||

url: https://securityonion.com/discussions

|

||||

about: Please ask and answer questions here

|

||||

33

.github/workflows/close-threads.yml

vendored

Normal file

@@ -0,0 +1,33 @@

|

||||

name: 'Close Threads'

|

||||

|

||||

on:

|

||||

schedule:

|

||||

- cron: '50 1 * * *'

|

||||

workflow_dispatch:

|

||||

|

||||

permissions:

|

||||

issues: write

|

||||

pull-requests: write

|

||||

discussions: write

|

||||

|

||||

concurrency:

|

||||

group: lock-threads

|

||||

|

||||

jobs:

|

||||

close-threads:

|

||||

if: github.repository_owner == 'security-onion-solutions'

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

issues: write

|

||||

pull-requests: write

|

||||

steps:

|

||||

- uses: actions/stale@v5

|

||||

with:

|

||||

days-before-issue-stale: -1

|

||||

days-before-issue-close: 60

|

||||

stale-issue-message: "This issue is stale because it has been inactive for an extended period. Stale issues convey that the issue, while important to someone, is not critical enough for the author, or other community members to work on, sponsor, or otherwise shepherd the issue through to a resolution."

|

||||

close-issue-message: "This issue was closed because it has been stale for an extended period. It will be automatically locked in 30 days, after which no further commenting will be available."

|

||||

days-before-pr-stale: 45

|

||||

days-before-pr-close: 60

|

||||

stale-pr-message: "This PR is stale because it has been inactive for an extended period. The longer a PR remains stale the more out of date with the main branch it becomes."

|

||||

close-pr-message: "This PR was closed because it has been stale for an extended period. It will be automatically locked in 30 days. If there is still a commitment to finishing this PR re-open it before it is locked."

|

||||

4

.github/workflows/contrib.yml

vendored

@@ -11,14 +11,14 @@ jobs:

|

||||

steps:

|

||||

- name: "Contributor Check"

|

||||

if: (github.event.comment.body == 'recheck' || github.event.comment.body == 'I have read the CLA Document and I hereby sign the CLA') || github.event_name == 'pull_request_target'

|

||||

-uses: cla-assistant/github-action@v2.1.3-beta
+uses: cla-assistant/github-action@v2.3.1

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

PERSONAL_ACCESS_TOKEN : ${{ secrets.PERSONAL_ACCESS_TOKEN }}

|

||||

with:

|

||||

path-to-signatures: 'signatures_v1.json'

|

||||

path-to-document: 'https://securityonionsolutions.com/cla'

|

||||

-allowlist: dependabot[bot],jertel,dougburks,TOoSmOotH,weslambert,defensivedepth,m0duspwnens
+allowlist: dependabot[bot],jertel,dougburks,TOoSmOotH,defensivedepth,m0duspwnens

|

||||

remote-organization-name: Security-Onion-Solutions

|

||||

remote-repository-name: licensing

|

||||

|

||||

|

||||

26

.github/workflows/lock-threads.yml

vendored

Normal file

@@ -0,0 +1,26 @@

|

||||

name: 'Lock Threads'

|

||||

|

||||

on:

|

||||

schedule:

|

||||

- cron: '50 2 * * *'

|

||||

workflow_dispatch:

|

||||

|

||||

permissions:

|

||||

issues: write

|

||||

pull-requests: write

|

||||

discussions: write

|

||||

|

||||

concurrency:

|

||||

group: lock-threads

|

||||

|

||||

jobs:

|

||||

lock-threads:

|

||||

if: github.repository_owner == 'security-onion-solutions'

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: jertel/lock-threads@main

|

||||

with:

|

||||

include-discussion-currently-open: true

|

||||

discussion-inactive-days: 90

|

||||

issue-inactive-days: 30

|

||||

pr-inactive-days: 30

|

||||

8

.github/workflows/pythontest.yml

vendored

@@ -1,10 +1,6 @@

|

||||

name: python-test

|

||||

|

||||

on:

|

||||

push:

|

||||

paths:

|

||||

- "salt/sensoroni/files/analyzers/**"

|

||||

- "salt/manager/tools/sbin"

|

||||

pull_request:

|

||||

paths:

|

||||

- "salt/sensoroni/files/analyzers/**"

|

||||

@@ -17,7 +13,7 @@ jobs:

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

-python-version: ["3.10"]
+python-version: ["3.13"]

|

||||

python-code-path: ["salt/sensoroni/files/analyzers", "salt/manager/tools/sbin"]

|

||||

|

||||

steps:

|

||||

@@ -36,4 +32,4 @@ jobs:

|

||||

flake8 ${{ matrix.python-code-path }} --show-source --max-complexity=12 --doctests --max-line-length=200 --statistics

|

||||

- name: Test with pytest

|

||||

run: |

|

||||

-pytest ${{ matrix.python-code-path }} --cov=${{ matrix.python-code-path }} --doctest-modules --cov-report=term --cov-fail-under=100 --cov-config=pytest.ini
+PYTHONPATH=${{ matrix.python-code-path }} pytest ${{ matrix.python-code-path }} --cov=${{ matrix.python-code-path }} --doctest-modules --cov-report=term --cov-fail-under=100 --cov-config=pytest.ini

|

||||

|

||||

11

.gitignore

vendored

@@ -1,4 +1,3 @@

|

||||

|

||||

# Created by https://www.gitignore.io/api/macos,windows

|

||||

# Edit at https://www.gitignore.io/?templates=macos,windows

|

||||

|

||||

@@ -67,4 +66,12 @@ __pycache__

|

||||

|

||||

# Analyzer dev/test config files

|

||||

*_dev.yaml

|

||||

site-packages

|

||||

site-packages

|

||||

|

||||

# Project Scope Directory

|

||||

.projectScope/

|

||||

.clinerules

|

||||

cline_docs/

|

||||

|

||||

# vscode settings

|

||||

.vscode/

|

||||

|

||||

@@ -1,18 +1,17 @@

|

||||

-### 2.4.30-20231113 ISO image released on 2023/11/13
+### 2.4.160-20250625 ISO image released on 2025/06/25

|

||||

|

||||

|

||||

### Download and Verify

|

||||

|

||||

-2.4.30-20231113 ISO image:
-https://download.securityonion.net/file/securityonion/securityonion-2.4.30-20231113.iso
+2.4.160-20250625 ISO image:
+https://download.securityonion.net/file/securityonion/securityonion-2.4.160-20250625.iso

|

||||

|

||||

-MD5: 15EB5A74782E4C2D5663D29E275839F6
-SHA1: BBD4A7D77ADDA94B866F1EFED846A83DDFD34D73
-SHA256: 4509EB8E11DB49C6CD3905C74C5525BDB1F773488002179A846E00DE8E499988
+MD5: 78CF5602EFFAB84174C56AD2826E6E4E
+SHA1: FC7EEC3EC95D97D3337501BAA7CA8CAE7C0E15EA
+SHA256: 0ED965E8BEC80EE16AE90A0F0F96A3046CEF2D92720A587278DDDE3B656C01C2

|

||||

|

||||

Signature for ISO image:

|

||||

-https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.30-20231113.iso.sig
+https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.160-20250625.iso.sig

|

||||

|

||||

Signing key:

|

||||

https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

|

||||

@@ -26,27 +25,29 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

|

||||

|

||||

Download the signature file for the ISO:

|

||||

```

|

||||

-wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.30-20231113.iso.sig
+wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.160-20250625.iso.sig

|

||||

```

|

||||

|

||||

Download the ISO image:

|

||||

```

|

||||

-wget https://download.securityonion.net/file/securityonion/securityonion-2.4.30-20231113.iso
+wget https://download.securityonion.net/file/securityonion/securityonion-2.4.160-20250625.iso

|

||||

```

|

||||

|

||||

Verify the downloaded ISO image using the signature file:

|

||||

```

|

||||

-gpg --verify securityonion-2.4.30-20231113.iso.sig securityonion-2.4.30-20231113.iso
+gpg --verify securityonion-2.4.160-20250625.iso.sig securityonion-2.4.160-20250625.iso

|

||||

```

|

||||

|

||||

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:

|

||||

```

|

||||

-gpg: Signature made Mon 13 Nov 2023 09:23:21 AM EST using RSA key ID FE507013
+gpg: Signature made Wed 25 Jun 2025 10:13:33 AM EDT using RSA key ID FE507013

|

||||

gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"

|

||||

gpg: WARNING: This key is not certified with a trusted signature!

|

||||

gpg: There is no indication that the signature belongs to the owner.

|

||||

Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013

|

||||

```

|

||||

|

||||

If it fails to verify, try downloading again. If it still fails to verify, try downloading from another computer or another network.

|

||||

|

||||

Once you've verified the ISO image, you're ready to proceed to our Installation guide:

|

||||

https://docs.securityonion.net/en/2.4/installation.html
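The checksums listed with the download links can be compared against a downloaded image as an extra check alongside `gpg --verify`. The following is a minimal sketch using only Python's standard library; the function names `sha256_of` and `matches_published` are hypothetical, and the path and expected value are whatever you downloaded and whatever is published for that exact release.

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Return the uppercase hex SHA256 digest of the file at path.

    The file is read in chunks so a multi-gigabyte ISO does not need to
    fit in memory.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest().upper()


def matches_published(path, published):
    """Compare a file's SHA256 with a published value.

    Case and surrounding whitespace in the published value are ignored.
    """
    return sha256_of(path) == published.strip().upper()
```

A checksum match only shows that the download is intact. It does not replace the signature check, because only the signature ties the image to the signing key.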

|

||||

|

||||

53

LICENSE

Normal file

@@ -0,0 +1,53 @@

|

||||

Elastic License 2.0 (ELv2)

|

||||

|

||||

Acceptance

|

||||

|

||||

By using the software, you agree to all of the terms and conditions below.

|

||||

|

||||

Copyright License

|

||||

|

||||

The licensor grants you a non-exclusive, royalty-free, worldwide, non-sublicensable, non-transferable license to use, copy, distribute, make available, and prepare derivative works of the software, in each case subject to the limitations and conditions below.

|

||||

|

||||

Limitations

|

||||

|

||||

You may not provide the software to third parties as a hosted or managed service, where the service provides users with access to any substantial set of the features or functionality of the software.

|

||||

|

||||

You may not move, change, disable, or circumvent the license key functionality in the software, and you may not remove or obscure any functionality in the software that is protected by the license key.

|

||||

|

||||

You may not alter, remove, or obscure any licensing, copyright, or other notices of the licensor in the software. Any use of the licensor’s trademarks is subject to applicable law.

|

||||

|

||||

Patents

|

||||

|

||||

The licensor grants you a license, under any patent claims the licensor can license, or becomes able to license, to make, have made, use, sell, offer for sale, import and have imported the software, in each case subject to the limitations and conditions in this license. This license does not cover any patent claims that you cause to be infringed by modifications or additions to the software. If you or your company make any written claim that the software infringes or contributes to infringement of any patent, your patent license for the software granted under these terms ends immediately. If your company makes such a claim, your patent license ends immediately for work on behalf of your company.

|

||||

|

||||

Notices

|

||||

|

||||

You must ensure that anyone who gets a copy of any part of the software from you also gets a copy of these terms.

|

||||

|

||||

If you modify the software, you must include in any modified copies of the software prominent notices stating that you have modified the software.

|

||||

|

||||

No Other Rights

|

||||

|

||||

These terms do not imply any licenses other than those expressly granted in these terms.

|

||||

|

||||

Termination

|

||||

|

||||

If you use the software in violation of these terms, such use is not licensed, and your licenses will automatically terminate. If the licensor provides you with a notice of your violation, and you cease all violation of this license no later than 30 days after you receive that notice, your licenses will be reinstated retroactively. However, if you violate these terms after such reinstatement, any additional violation of these terms will cause your licenses to terminate automatically and permanently.

|

||||

|

||||

No Liability

|

||||

|

||||

As far as the law allows, the software comes as is, without any warranty or condition, and the licensor will not be liable to you for any damages arising out of these terms or the use or nature of the software, under any kind of legal claim.

|

||||

|

||||

Definitions

|

||||

|

||||

The licensor is the entity offering these terms, and the software is the software the licensor makes available under these terms, including any portion of it.

|

||||

|

||||

you refers to the individual or entity agreeing to these terms.

|

||||

|

||||

your company is any legal entity, sole proprietorship, or other kind of organization that you work for, plus all organizations that have control over, are under the control of, or are under common control with that organization. control means ownership of substantially all the assets of an entity, or the power to direct its management and policies by vote, contract, or otherwise. Control can be direct or indirect.

|

||||

|

||||

your licenses are all the licenses granted to you for the software under these terms.

|

||||

|

||||

use means anything you do with the software requiring one of your licenses.

|

||||

|

||||

trademark means trademarks, service marks, and similar rights.

|

||||

13

README.md

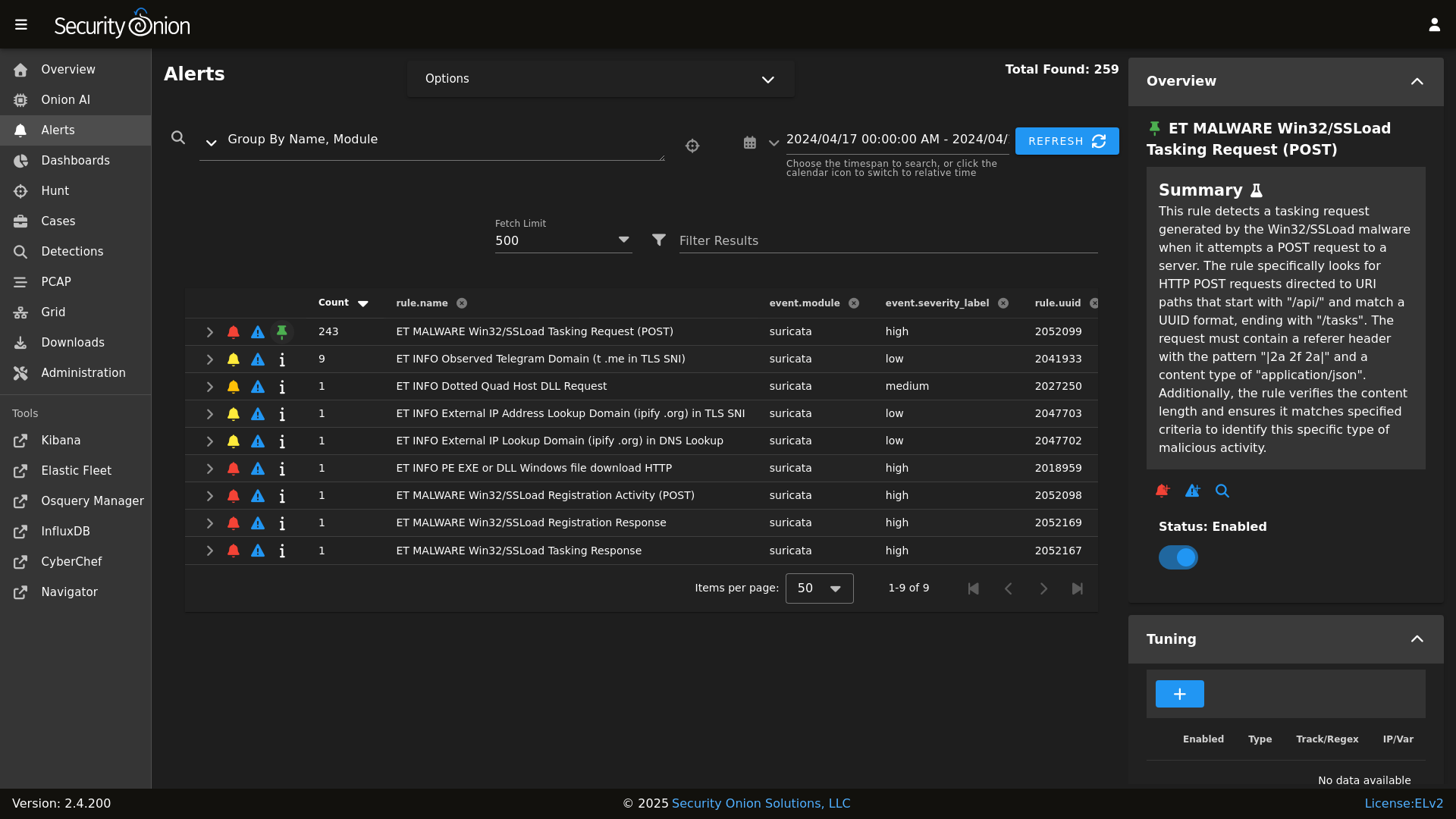

@@ -8,19 +8,22 @@ Alerts

|

||||

|

||||

|

||||

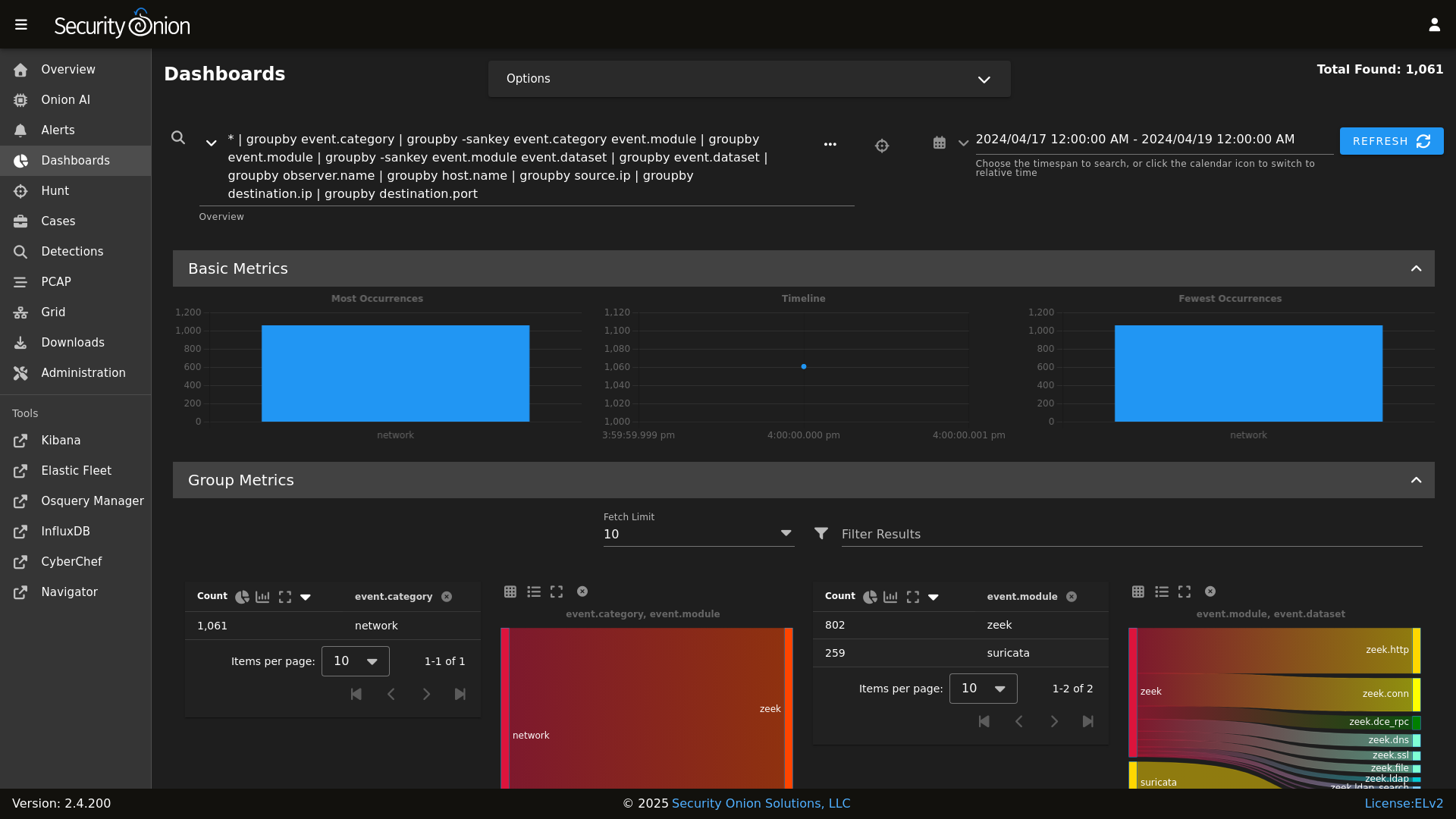

Dashboards

|

||||

|

||||

|

||||

|

||||

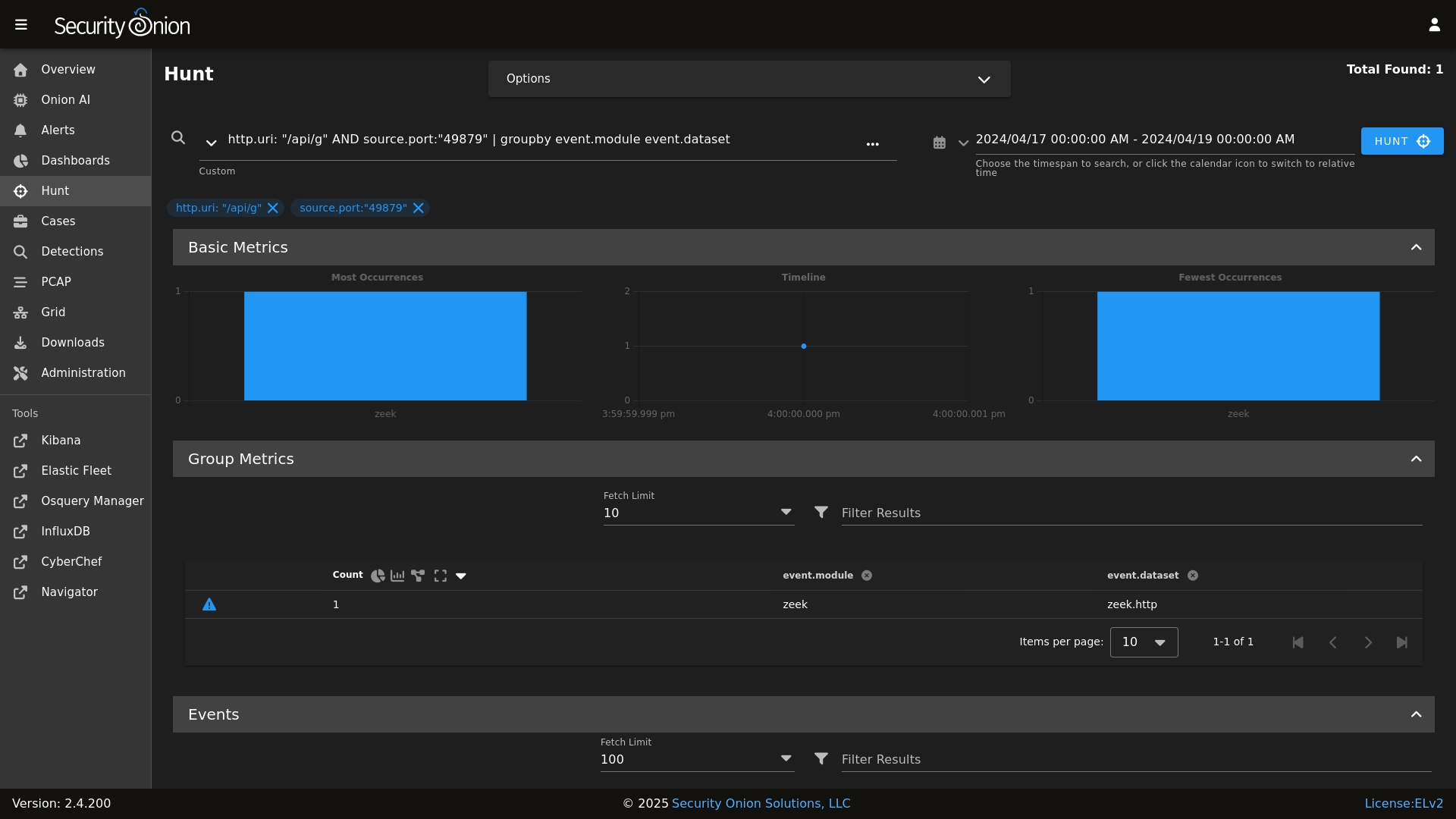

Hunt

|

||||

|

||||

|

||||

|

||||

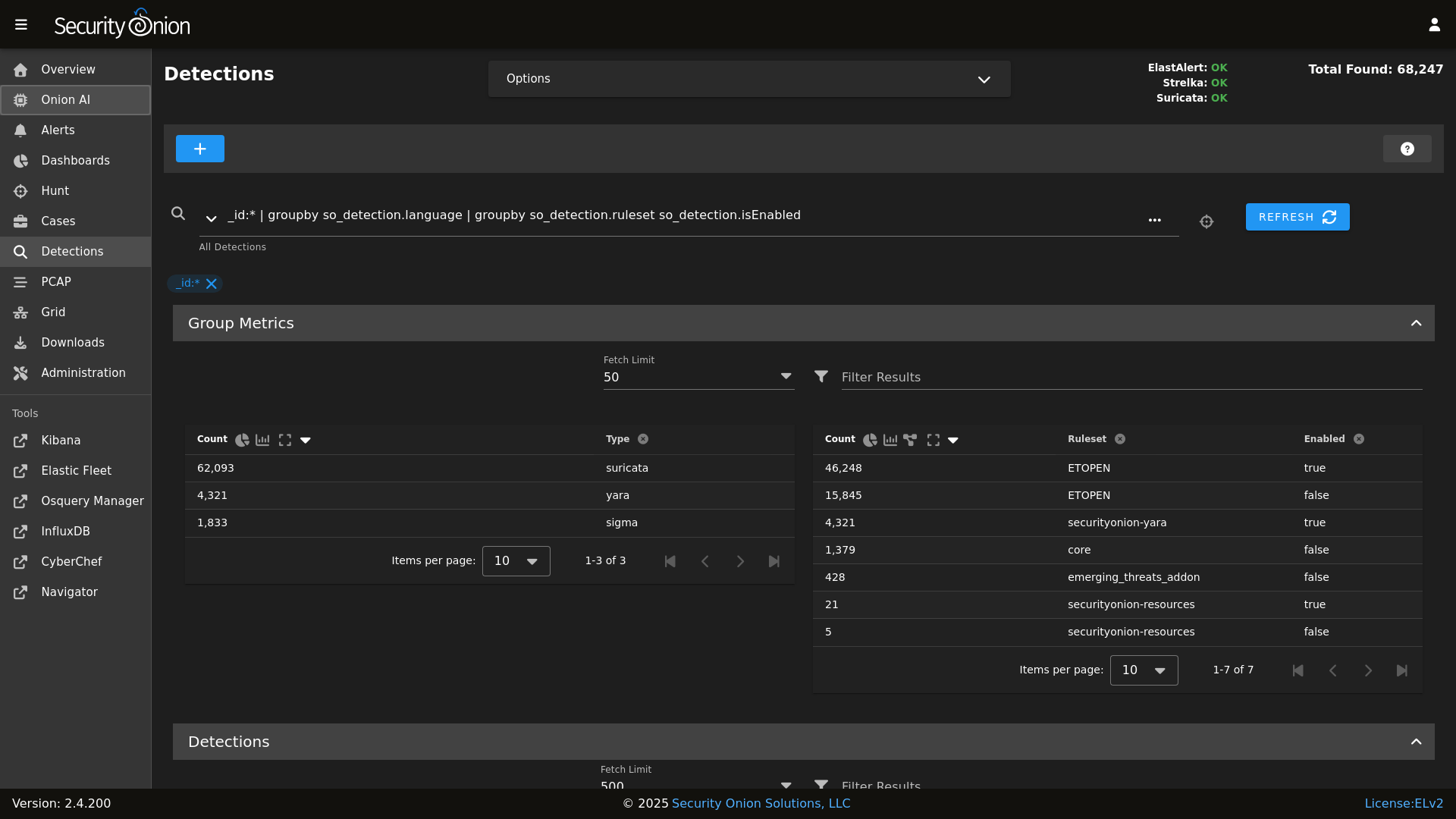

Detections

|

||||

|

||||

|

||||

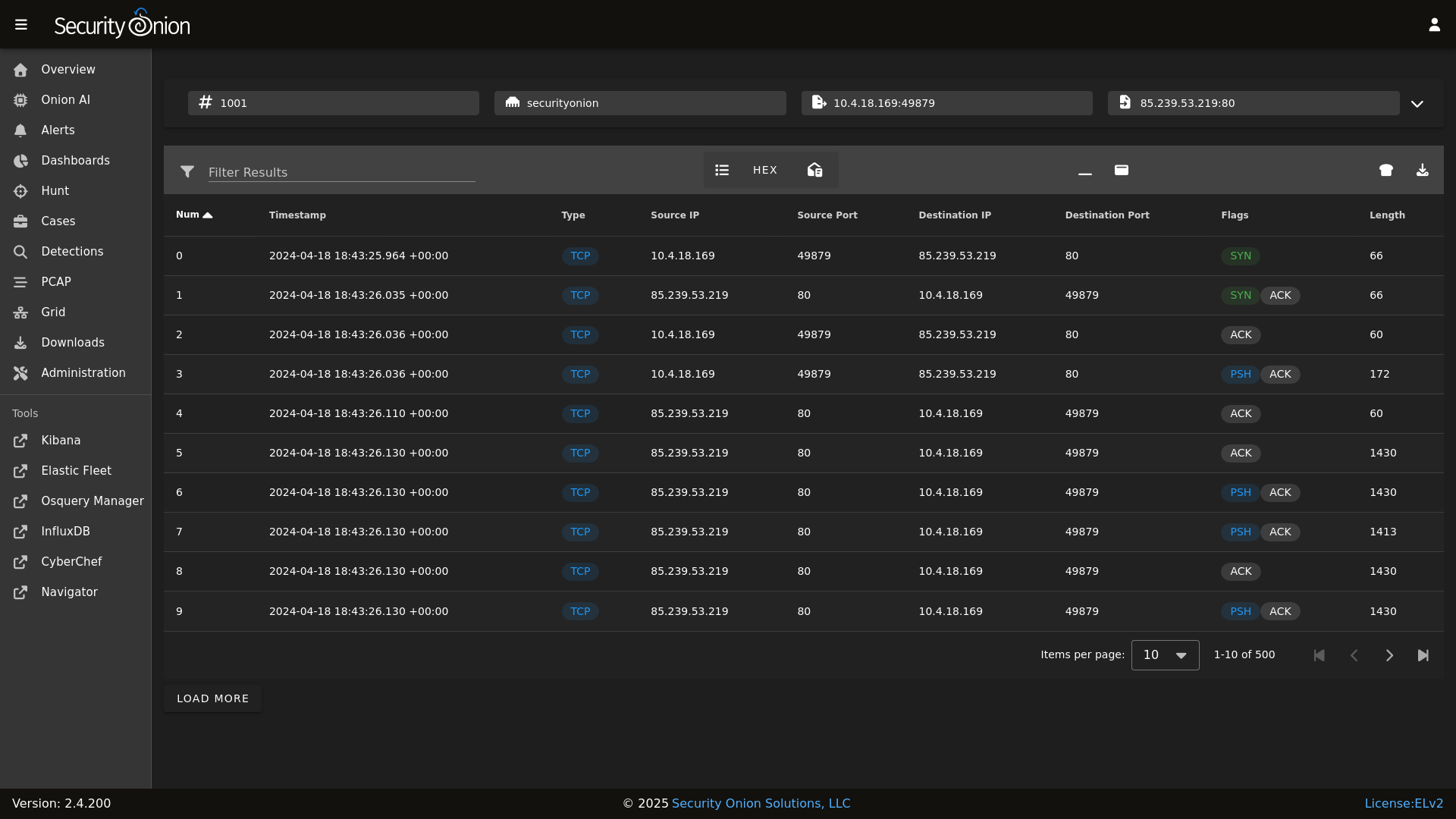

PCAP

|

||||

|

||||

|

||||

|

||||

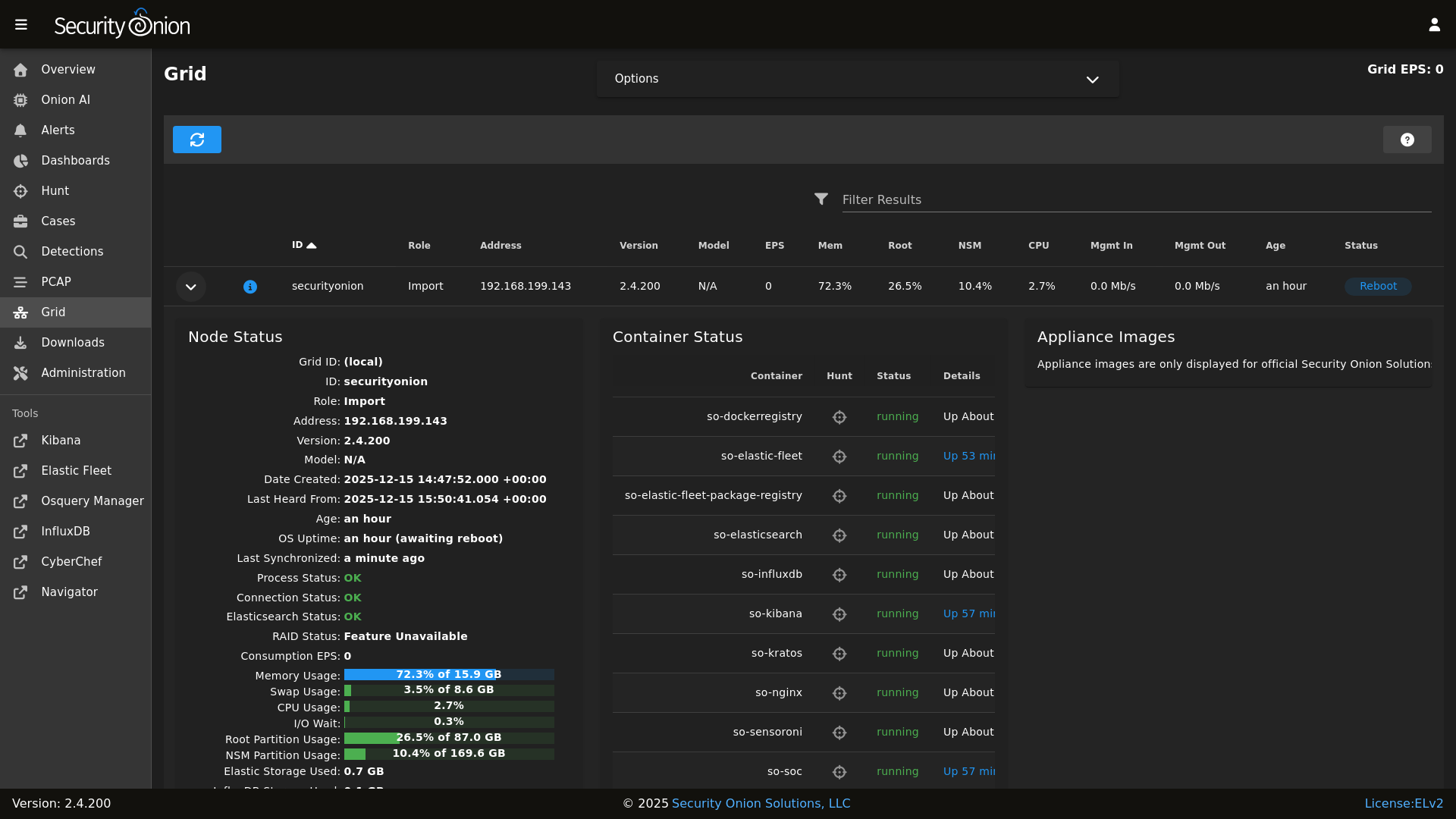

Grid

|

||||

|

||||

|

||||

|

||||

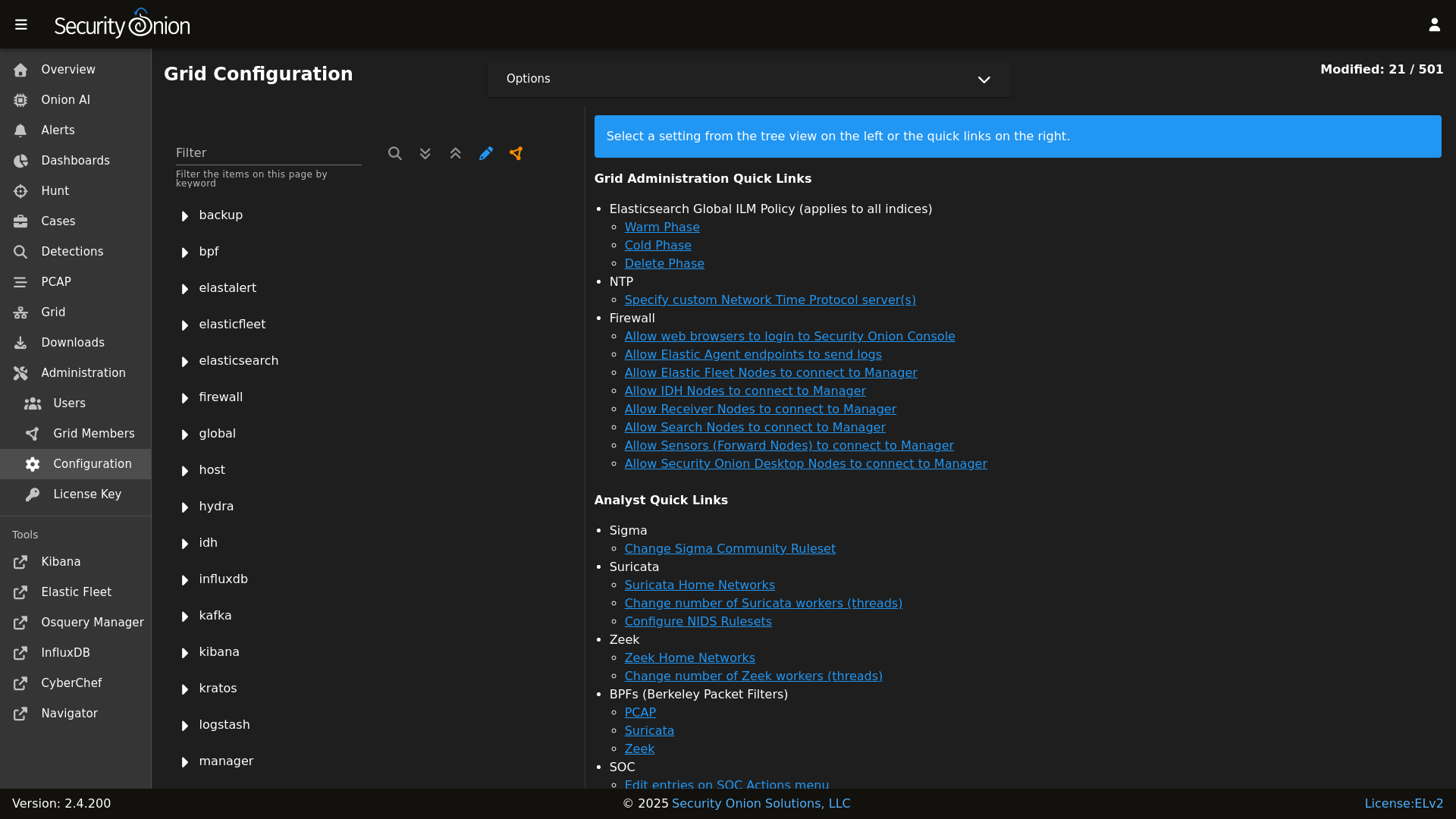

Config

|

||||

|

||||

|

||||

|

||||

### Release Notes

|

||||

|

||||

|

||||

@@ -5,9 +5,11 @@

|

||||

| Version | Supported |

|

||||

| ------- | ------------------ |

|

||||

| 2.4.x | :white_check_mark: |

|

||||

-| 2.3.x | :white_check_mark: |
+| 2.3.x | :x: |

|

||||

| 16.04.x | :x: |

|

||||

|

||||

Security Onion 2.3 has reached End Of Life and is no longer supported.

|

||||

|

||||

Security Onion 16.04 has reached End Of Life and is no longer supported.

|

||||

|

||||

## Reporting a Vulnerability

|

||||

|

||||

BIN

assets/images/screenshots/analyzers/echotrail.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 21 KiB |

BIN

assets/images/screenshots/analyzers/elasticsearch.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 22 KiB |

BIN

assets/images/screenshots/analyzers/sublime.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 12 KiB |

@@ -19,4 +19,4 @@ role:

|

||||

receiver:

|

||||

standalone:

|

||||

searchnode:

|

||||

sensor:

|

||||

sensor:

|

||||

@@ -41,7 +41,8 @@ file_roots:

|

||||

base:

|

||||

- /opt/so/saltstack/local/salt

|

||||

- /opt/so/saltstack/default/salt

|

||||

|

||||

- /nsm/elastic-fleet/artifacts

|

||||

- /opt/so/rules/nids

|

||||

|

||||

# The master_roots setting configures a master-only copy of the file_roots dictionary,

|

||||

# used by the state compiler.

|

||||

|

||||

34

pillar/elasticsearch/nodes.sls

Normal file

@@ -0,0 +1,34 @@

|

||||

{% set node_types = {} %}

|

||||

{% for minionid, ip in salt.saltutil.runner(

|

||||

'mine.get',

|

||||

tgt='elasticsearch:enabled:true',

|

||||

fun='network.ip_addrs',

|

||||

tgt_type='pillar') | dictsort()

|

||||

%}

|

||||

|

||||

# only add a node to the pillar if it returned an ip from the mine

|

||||

{% if ip | length > 0%}

|

||||

{% set hostname = minionid.split('_') | first %}

|

||||

{% set node_type = minionid.split('_') | last %}

|

||||

{% if node_type not in node_types.keys() %}

|

||||

{% do node_types.update({node_type: {hostname: ip[0]}}) %}

|

||||

{% else %}

|

||||

{% if hostname not in node_types[node_type] %}

|

||||

{% do node_types[node_type].update({hostname: ip[0]}) %}

|

||||

{% else %}

|

||||

{% do node_types[node_type][hostname].update(ip[0]) %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

{% endfor %}

|

||||

|

||||

|

||||

elasticsearch:

|

||||

nodes:

|

||||

{% for node_type, values in node_types.items() %}

|

||||

{{node_type}}:

|

||||

{% for hostname, ip in values.items() %}

|

||||

{{hostname}}:

|

||||

ip: {{ip}}

|

||||

{% endfor %}

|

||||

{% endfor %}
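The grouping that this template performs can be sketched in plain Python. The sketch assumes the mine data is a mapping of minion ID to a list of IP addresses and that minion IDs look like `hostname_role`. The function name `group_nodes` and the sample data are invented for illustration. Two details differ from the Jinja: `sorted()` is case-sensitive while `dictsort` is not by default, and the template's innermost `else` branch is not reproduced (the first IP seen for a hostname is simply kept).

```python
def group_nodes(mine_data):
    """Group minions by node type, keeping the first IP for each hostname.

    mine_data maps a minion ID such as "so1_manager" to a list of IPs,
    which is the assumed shape of a mine.get result for network.ip_addrs.
    """
    node_types = {}
    for minion_id, ips in sorted(mine_data.items()):
        # Only add a node if the mine returned at least one IP for it.
        if not ips:
            continue
        parts = minion_id.split("_")
        hostname, node_type = parts[0], parts[-1]
        node_types.setdefault(node_type, {}).setdefault(hostname, ips[0])
    return node_types


if __name__ == "__main__":
    # Hypothetical mine data for illustration only.
    sample = {
        "so1_manager": ["10.0.0.1", "10.0.0.2"],
        "search1_searchnode": ["10.0.0.5"],
        "ghost_sensor": [],
    }
    print(group_nodes(sample))
```

Because the hostname is taken from the first `_`-separated field and the node type from the last, a hostname that itself contains an underscore would be truncated under this scheme.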

|

||||

34

pillar/hypervisor/nodes.sls

Normal file

@@ -0,0 +1,34 @@

|

||||

{% set node_types = {} %}

|

||||

{% for minionid, ip in salt.saltutil.runner(

|

||||

'mine.get',

|

||||

tgt='G@role:so-hypervisor or G@role:so-managerhype',

|

||||

fun='network.ip_addrs',

|

||||

tgt_type='compound') | dictsort()

|

||||

%}

|

||||

|

||||

# only add a node to the pillar if it returned an ip from the mine

|

||||

{% if ip | length > 0%}

|

||||

{% set hostname = minionid.split('_') | first %}

|

||||

{% set node_type = minionid.split('_') | last %}

|

||||

{% if node_type not in node_types.keys() %}

|

||||

{% do node_types.update({node_type: {hostname: ip[0]}}) %}

|

||||

{% else %}

|

||||

{% if hostname not in node_types[node_type] %}

|

||||

{% do node_types[node_type].update({hostname: ip[0]}) %}

|

||||

{% else %}

|

||||

{% do node_types[node_type][hostname].update(ip[0]) %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

{% endif %}

|

||||

{% endfor %}

|

||||

|

||||

|

||||

hypervisor:

|

||||

nodes:

|

||||

{% for node_type, values in node_types.items() %}

|

||||

{{node_type}}:

|

||||

{% for hostname, ip in values.items() %}

|

||||

{{hostname}}:

|

||||

ip: {{ip}}

|

||||

{% endfor %}

|

||||

{% endfor %}

|

||||

2

pillar/kafka/nodes.sls

Normal file

@@ -0,0 +1,2 @@

|

||||

kafka:

|

||||

nodes:

|

||||

@@ -1,16 +1,15 @@

|

||||

{% set node_types = {} %}

|

||||

-{% set cached_grains = salt.saltutil.runner('cache.grains', tgt='*') %}

|

||||

{% for minionid, ip in salt.saltutil.runner(

|

||||

'mine.get',

|

||||

-tgt='G@role:so-manager or G@role:so-managersearch or G@role:so-standalone or G@role:so-searchnode or G@role:so-heavynode or G@role:so-receiver or G@role:so-fleet ',
+tgt='logstash:enabled:true',

|

||||

fun='network.ip_addrs',

|

||||

-tgt_type='compound') | dictsort()
+tgt_type='pillar') | dictsort()

|

||||

%}

|

||||

|

||||

# only add a node to the pillar if it returned an ip from the mine

|

||||

{% if ip | length > 0%}

|

||||

-{% set hostname = cached_grains[minionid]['host'] %}
-{% set node_type = minionid.split('_')[1] %}
+{% set hostname = minionid.split('_') | first %}
+{% set node_type = minionid.split('_') | last %}

|

||||

{% if node_type not in node_types.keys() %}

|

||||

{% do node_types.update({node_type: {hostname: ip[0]}}) %}

|

||||

{% else %}

|

||||

|

||||

@@ -24,6 +24,7 @@

|

||||

{% endif %}

|

||||

{% endfor %}

|

||||

|

||||

{% if node_types %}

|

||||

node_data:

|

||||

{% for node_type, host_values in node_types.items() %}

|

||||

{% for hostname, details in host_values.items() %}

|

||||

@@ -33,3 +34,6 @@ node_data:

|

||||

role: {{node_type}}

|

||||

{% endfor %}

|

||||

{% endfor %}

|

||||

{% else %}

|

||||

node_data: False

|

||||

{% endif %}

|

||||

|

||||

34

pillar/redis/nodes.sls

Normal file

@@ -0,0 +1,34 @@

{% set node_types = {} %}
{% for minionid, ip in salt.saltutil.runner(
    'mine.get',
    tgt='redis:enabled:true',
    fun='network.ip_addrs',
    tgt_type='pillar') | dictsort()
%}

# only add a node to the pillar if it returned an ip from the mine
{% if ip | length > 0%}
{% set hostname = minionid.split('_') | first %}
{% set node_type = minionid.split('_') | last %}
{% if node_type not in node_types.keys() %}
{% do node_types.update({node_type: {hostname: ip[0]}}) %}
{% else %}
{% if hostname not in node_types[node_type] %}
{% do node_types[node_type].update({hostname: ip[0]}) %}
{% else %}
{% do node_types[node_type][hostname].update(ip[0]) %}
{% endif %}
{% endif %}
{% endif %}
{% endfor %}


redis:
  nodes:
{% for node_type, values in node_types.items() %}
    {{node_type}}:
{% for hostname, ip in values.items() %}
      {{hostname}}:
        ip: {{ip}}
{% endfor %}
{% endfor %}

|

||||
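The grouping performed by the `pillar/redis/nodes.sls` template above can be sketched in plain Python. This is a minimal illustration, not code from the repository: the function name `group_nodes` and the sample data are hypothetical, and it assumes the mine returns a mapping of minion ID to a list of IP addresses. Unlike the template, it simply keeps the first address seen for a hostname.

```python
def group_nodes(mine_data):
    """Group minion IDs of the form '<hostname>_<role>' by role.

    mine_data is assumed to map a minion ID to a list of IP addresses.
    """
    node_types = {}
    # dictsort() in the template iterates in key order; sorted() mirrors that.
    for minion_id, ips in sorted(mine_data.items()):
        # Only add a node if it returned an IP from the mine.
        if not ips:
            continue
        parts = minion_id.split('_')
        # Mirrors "| first" and "| last" in the template.
        hostname, node_type = parts[0], parts[-1]
        # Keep the first address seen for each hostname (simplifying assumption).
        node_types.setdefault(node_type, {}).setdefault(hostname, ips[0])
    return node_types


# Hypothetical sample data for illustration only.
sample = {
    'so1_manager': ['10.0.0.1'],
    'so2_searchnode': ['10.0.0.2', '10.0.0.3'],
    'so3_searchnode': [],
}
grouped = group_nodes(sample)
```

Because the hostname is taken from the first `_`-separated field and the role from the last, a hostname that itself contains an underscore would be truncated here, just as it would in the template.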

@@ -16,16 +16,24 @@ base:

|

||||

- sensoroni.adv_sensoroni

|

||||

- telegraf.soc_telegraf

|

||||

- telegraf.adv_telegraf

|

||||

- versionlock.soc_versionlock

|

||||

- versionlock.adv_versionlock

|

||||

- soc.license

|

||||

|

||||

'* and not *_desktop':

|

||||

- firewall.soc_firewall

|

||||

- firewall.adv_firewall

|

||||

- nginx.soc_nginx

|

||||

- nginx.adv_nginx

|

||||

- node_data.ips

|

||||

|

||||

'*_manager or *_managersearch':

|

||||

'salt-cloud:driver:libvirt':

|

||||

- match: grain

|

||||

- vm.soc_vm

|

||||

- vm.adv_vm

|

||||

|

||||

'*_manager or *_managersearch or *_managerhype':

|

||||

- match: compound

|

||||

- node_data.ips

|

||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

||||

- elasticsearch.auth

|

||||

{% endif %}

|

||||

@@ -42,17 +50,18 @@ base:

|

||||

- logstash.adv_logstash

|

||||

- soc.soc_soc

|

||||

- soc.adv_soc

|

||||

- soc.license

|

||||

- soctopus.soc_soctopus

|

||||

- soctopus.adv_soctopus

|

||||

- kibana.soc_kibana

|

||||

- kibana.adv_kibana

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- hydra.soc_hydra

|

||||

- hydra.adv_hydra

|

||||

- redis.nodes

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- influxdb.soc_influxdb

|

||||

- influxdb.adv_influxdb

|

||||

- elasticsearch.nodes

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

- elasticfleet.soc_elasticfleet

|

||||

@@ -61,12 +70,15 @@ base:

|

||||

- elastalert.adv_elastalert

|

||||

- backup.soc_backup

|

||||

- backup.adv_backup

|

||||

- curator.soc_curator

|

||||

- curator.adv_curator

|

||||

- soctopus.soc_soctopus

|

||||

- soctopus.adv_soctopus

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- kafka.nodes

|

||||

- kafka.soc_kafka

|

||||

- kafka.adv_kafka

|

||||

- hypervisor.nodes

|

||||

- hypervisor.soc_hypervisor

|

||||

- hypervisor.adv_hypervisor

|

||||

- stig.soc_stig

|

||||

|

||||

'*_sensor':

|

||||

- healthcheck.sensor

|

||||

@@ -82,8 +94,10 @@ base:

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- stig.soc_stig

|

||||

|

||||

'*_eval':

|

||||

- node_data.ips

|

||||

- secrets

|

||||

- healthcheck.eval

|

||||

- elasticsearch.index_templates

|

||||

@@ -94,6 +108,7 @@ base:

|

||||

- kibana.secrets

|

||||

{% endif %}

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

- elasticfleet.soc_elasticfleet

|

||||

@@ -106,17 +121,12 @@ base:

|

||||

- idstools.adv_idstools

|

||||

- soc.soc_soc

|

||||

- soc.adv_soc

|

||||

- soc.license

|

||||

- soctopus.soc_soctopus

|

||||

- soctopus.adv_soctopus

|

||||

- kibana.soc_kibana

|

||||

- kibana.adv_kibana

|

||||

- strelka.soc_strelka

|

||||

- strelka.adv_strelka

|

||||

- curator.soc_curator

|

||||

- curator.adv_curator

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- hydra.soc_hydra

|

||||

- hydra.adv_hydra

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- influxdb.soc_influxdb

|

||||

@@ -135,6 +145,7 @@ base:

|

||||

- minions.adv_{{ grains.id }}

|

||||

|

||||

'*_standalone':

|

||||

- node_data.ips

|

||||

- logstash.nodes

|

||||

- logstash.soc_logstash

|

||||

- logstash.adv_logstash

|

||||

@@ -151,10 +162,14 @@ base:

|

||||

- idstools.adv_idstools

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- hydra.soc_hydra

|

||||

- hydra.adv_hydra

|

||||

- redis.nodes

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- influxdb.soc_influxdb

|

||||

- influxdb.adv_influxdb

|

||||

- elasticsearch.nodes

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

- elasticfleet.soc_elasticfleet

|

||||

@@ -165,15 +180,10 @@ base:

|

||||

- manager.adv_manager

|

||||

- soc.soc_soc

|

||||

- soc.adv_soc

|

||||

- soc.license

|

||||

- soctopus.soc_soctopus

|

||||

- soctopus.adv_soctopus

|

||||

- kibana.soc_kibana

|

||||

- kibana.adv_kibana

|

||||

- strelka.soc_strelka

|

||||

- strelka.adv_strelka

|

||||

- curator.soc_curator

|

||||

- curator.adv_curator

|

||||

- backup.soc_backup

|

||||

- backup.adv_backup

|

||||

- zeek.soc_zeek

|

||||

@@ -186,6 +196,10 @@ base:

|

||||

- suricata.adv_suricata

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- stig.soc_stig

|

||||

- kafka.nodes

|

||||

- kafka.soc_kafka

|

||||

- kafka.adv_kafka

|

||||

|

||||

'*_heavynode':

|

||||

- elasticsearch.auth

|

||||

@@ -194,8 +208,6 @@ base:

|

||||

- logstash.adv_logstash

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

- curator.soc_curator

|

||||

- curator.adv_curator

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- zeek.soc_zeek

|

||||

@@ -221,15 +233,21 @@ base:

|

||||

- logstash.nodes

|

||||

- logstash.soc_logstash

|

||||

- logstash.adv_logstash

|

||||

- elasticsearch.nodes

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

||||

- elasticsearch.auth

|

||||

{% endif %}

|

||||

- redis.nodes

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- stig.soc_stig

|

||||

- kafka.nodes

|

||||

- kafka.soc_kafka

|

||||

- kafka.adv_kafka

|

||||

|

||||

'*_receiver':

|

||||

- logstash.nodes

|

||||

@@ -242,8 +260,11 @@ base:

|

||||

- redis.adv_redis

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- kafka.nodes

|

||||

- kafka.soc_kafka

|

||||

|

||||

'*_import':

|

||||

- node_data.ips

|

||||

- secrets

|

||||

- elasticsearch.index_templates

|

||||

{% if salt['file.file_exists']('/opt/so/saltstack/local/pillar/elasticsearch/auth.sls') %}

|

||||

@@ -253,6 +274,7 @@ base:

|

||||

- kibana.secrets

|

||||

{% endif %}

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- elasticsearch.soc_elasticsearch

|

||||

- elasticsearch.adv_elasticsearch

|

||||

- elasticfleet.soc_elasticfleet

|

||||

@@ -263,17 +285,12 @@ base:

|

||||

- manager.adv_manager

|

||||

- soc.soc_soc

|

||||

- soc.adv_soc

|

||||

- soc.license

|

||||

- soctopus.soc_soctopus

|

||||

- soctopus.adv_soctopus

|

||||

- kibana.soc_kibana

|

||||

- kibana.adv_kibana

|

||||

- curator.soc_curator

|

||||

- curator.adv_curator

|

||||

- backup.soc_backup

|

||||

- backup.adv_backup

|

||||

- kratos.soc_kratos

|

||||

- kratos.adv_kratos

|

||||

- hydra.soc_hydra

|

||||

- hydra.adv_hydra

|

||||

- redis.soc_redis

|

||||

- redis.adv_redis

|

||||

- influxdb.soc_influxdb

|

||||

@@ -292,6 +309,7 @@ base:

|

||||

- minions.adv_{{ grains.id }}

|

||||

|

||||

'*_fleet':

|

||||

- node_data.ips

|

||||

- backup.soc_backup

|

||||

- backup.adv_backup

|

||||

- logstash.nodes

|

||||

@@ -302,6 +320,12 @@ base:

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

|

||||

'*_hypervisor':

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

|

||||

'*_desktop':

|

||||

- minions.{{ grains.id }}

|

||||

- minions.adv_{{ grains.id }}

|

||||

- stig.soc_stig

|

||||

|

||||

|

||||
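The top file targets above, such as `'*_manager or *_managersearch or *_managerhype'` and `'* and not *_desktop'`, select minions by the role suffix of their ID. The following sketch models only that glob-on-ID behaviour using Python's standard `fnmatch` module. It is a simplified stand-in and not Salt's compound matcher, which also supports grains, pillar and other target types; the helper names are hypothetical.

```python
from fnmatch import fnmatchcase

# Glob patterns taken from the compound target in the top file.
MANAGER_PATTERNS = ['*_manager', '*_managersearch', '*_managerhype']


def matches_any(minion_id, patterns):
    """Return True if the minion ID matches at least one glob (an 'or' of globs)."""
    return any(fnmatchcase(minion_id, pattern) for pattern in patterns)


def is_non_desktop(minion_id):
    """Model of '* and not *_desktop': every ID except those ending in _desktop."""
    return not fnmatchcase(minion_id, '*_desktop')
```

With these helpers, an ID such as `so1_managerhype` falls under the manager block, while `so1_sensor` does not, and `ws1_desktop` is excluded from the non-desktop block.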

14

pyci.sh

@@ -15,12 +15,16 @@ TARGET_DIR=${1:-.}

|

||||

|

||||

PATH=$PATH:/usr/local/bin

|

||||

|

||||

if ! which pytest &> /dev/null || ! which flake8 &> /dev/null ; then

|

||||

echo "Missing dependencies. Consider running the following command:"

|

||||

echo " python -m pip install flake8 pytest pytest-cov"

|

||||

if [ ! -d .venv ]; then

|

||||

python -m venv .venv

|

||||

fi

|

||||

|

||||

source .venv/bin/activate

|

||||

|

||||

if ! pip install flake8 pytest pytest-cov pyyaml; then

|

||||

echo "Unable to install dependencies."

|

||||

exit 1

|

||||

fi

|

||||

|

||||

pip install pytest pytest-cov

|

||||

flake8 "$TARGET_DIR" "--config=${HOME_DIR}/pytest.ini"

|

||||

python3 -m pytest "--cov-config=${HOME_DIR}/pytest.ini" "--cov=$TARGET_DIR" --doctest-modules --cov-report=term --cov-fail-under=100 "$TARGET_DIR"

|

||||

python3 -m pytest "--cov-config=${HOME_DIR}/pytest.ini" "--cov=$TARGET_DIR" --doctest-modules --cov-report=term --cov-fail-under=100 "$TARGET_DIR"

|

||||

|

||||

246

salt/_modules/qcow2.py

Normal file

@@ -0,0 +1,246 @@

#!py

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.

"""
Salt module for managing QCOW2 image configurations and VM hardware settings. This module provides functions
for modifying network configurations within QCOW2 images and adjusting virtual machine hardware settings.
It serves as a Salt interface to the so-qcow2-modify-network and so-kvm-modify-hardware scripts.

The module offers two main capabilities:
1. Network Configuration: Modify network settings (DHCP/static IP) within QCOW2 images
2. Hardware Configuration: Adjust VM hardware settings (CPU, memory, PCI passthrough)

This module is intended to work with Security Onion's virtualization infrastructure and is typically
used in conjunction with salt-cloud for VM provisioning and management.
"""

import logging
import subprocess
import shlex

log = logging.getLogger(__name__)

__virtualname__ = 'qcow2'

def __virtual__():
    return __virtualname__

def modify_network_config(image, interface, mode, vm_name, ip4=None, gw4=None, dns4=None, search4=None):
    '''
    Usage:
        salt '*' qcow2.modify_network_config image=<path> interface=<iface> mode=<mode> vm_name=<name> [ip4=<addr>] [gw4=<addr>] [dns4=<servers>] [search4=<domain>]

    Options:
        image
            Path to the QCOW2 image file that will be modified
        interface
            Network interface name to configure (e.g., 'enp1s0')
        mode
            Network configuration mode, either 'dhcp4' or 'static4'
        vm_name
            Full name of the VM (hostname_role)
        ip4
            IPv4 address with CIDR notation (e.g., '192.168.1.10/24')
            Required when mode='static4'
        gw4
            IPv4 gateway address (e.g., '192.168.1.1')
            Required when mode='static4'
        dns4
            Comma-separated list of IPv4 DNS servers (e.g., '8.8.8.8,8.8.4.4')
            Optional for both DHCP and static configurations
        search4
            DNS search domain for IPv4 (e.g., 'example.local')
            Optional for both DHCP and static configurations

    Examples:
        1. **Configure DHCP:**
        ```bash
        salt '*' qcow2.modify_network_config image='/nsm/libvirt/images/sool9/sool9.qcow2' interface='enp1s0' mode='dhcp4' vm_name='sensor1_sensor'
        ```
        This configures enp1s0 to use DHCP for IP assignment

        2. **Configure Static IP:**
        ```bash
        salt '*' qcow2.modify_network_config image='/nsm/libvirt/images/sool9/sool9.qcow2' interface='enp1s0' mode='static4' vm_name='sensor1_sensor' ip4='192.168.1.10/24' gw4='192.168.1.1' dns4='192.168.1.1,8.8.8.8' search4='example.local'
        ```
        This sets a static IP configuration with DNS servers and search domain

    Notes:
        - The QCOW2 image must be accessible and writable by the salt minion
        - The image should not be in use by a running VM when modified
        - Network changes take effect on next VM boot
        - Requires so-qcow2-modify-network script to be installed

    Description:
        This function modifies network configuration within a QCOW2 image file by executing
        the so-qcow2-modify-network script. It supports both DHCP and static IPv4 configuration.
        The script mounts the image, modifies the network configuration files, and unmounts
        safely. All operations are logged for troubleshooting purposes.

    Exit Codes:
        0: Success
        1: Invalid parameters or configuration
        2: Image access or mounting error
        3: Network configuration error
        4: System command error
        255: Unexpected error

    Logging:
        - All operations are logged to the salt minion log
        - Log entries are prefixed with 'qcow2 module:'
        - Error conditions include detailed error messages
        - Success/failure status is logged for verification
    '''

    cmd = ['/usr/sbin/so-qcow2-modify-network', '-I', image, '-i', interface, '-n', vm_name]

    if mode.lower() == 'dhcp4':
        cmd.append('--dhcp4')
    elif mode.lower() == 'static4':
        cmd.append('--static4')
        if not ip4 or not gw4:
            raise ValueError('Both ip4 and gw4 are required for static configuration.')
        cmd.extend(['--ip4', ip4, '--gw4', gw4])
        if dns4:
            cmd.extend(['--dns4', dns4])
        if search4:
            cmd.extend(['--search4', search4])
    else:
        raise ValueError("Invalid mode '{}'. Expected 'dhcp4' or 'static4'.".format(mode))

    log.info('qcow2 module: Executing command: {}'.format(' '.join(shlex.quote(arg) for arg in cmd)))

    try:
        result = subprocess.run(cmd, capture_output=True, text=True, check=False)
        ret = {
            'retcode': result.returncode,
            'stdout': result.stdout,
            'stderr': result.stderr
        }
        if result.returncode != 0:
            log.error('qcow2 module: Script execution failed with return code {}: {}'.format(result.returncode, result.stderr))
        else:
            log.info('qcow2 module: Script executed successfully.')
        return ret
    except Exception as e:
        log.error('qcow2 module: An error occurred while executing the script: {}'.format(e))
        raise

def modify_hardware_config(vm_name, cpu=None, memory=None, pci=None, start=False):
    '''
    Usage:
        salt '*' qcow2.modify_hardware_config vm_name=<name> [cpu=<count>] [memory=<size>] [pci=<id>[,<id>...]] [start=<bool>]

    Options:
        vm_name
            Name of the virtual machine to modify
        cpu
            Number of virtual CPUs to assign (positive integer)
            Optional - VM's current CPU count retained if not specified
        memory
            Amount of memory to assign in MiB (positive integer)
            Optional - VM's current memory size retained if not specified
        pci
            PCI hardware ID(s) to passthrough to the VM (e.g., '0000:c7:00.0')
            Multiple devices can be given as a comma-separated string or a list
            Optional - no PCI passthrough if not specified
        start
            Boolean flag to start the VM after modification
            Optional - defaults to False

    Examples:
        1. **Modify CPU and Memory:**
        ```bash
        salt '*' qcow2.modify_hardware_config vm_name='sensor1' cpu=4 memory=8192
        ```
        This assigns 4 CPUs and 8 GiB (8192 MiB) of memory to the VM

        2. **Enable PCI Passthrough:**
        ```bash
        salt '*' qcow2.modify_hardware_config vm_name='sensor1' pci='0000:c7:00.0,0000:c4:00.0' start=True
        ```
        This configures PCI passthrough and starts the VM

        3. **Complete Hardware Configuration:**
        ```bash
        salt '*' qcow2.modify_hardware_config vm_name='sensor1' cpu=8 memory=16384 pci='0000:c7:00.0' start=True
        ```
        This sets CPU, memory, PCI passthrough, and starts the VM

    Notes:
        - VM must be stopped before modification unless only the start flag is set
        - Memory is specified in MiB (1024 MiB = 1 GiB)
        - PCI devices must be available and not in use by the host
        - CPU count should align with host capabilities
        - Requires so-kvm-modify-hardware script to be installed

    Description:
        This function modifies the hardware configuration of a KVM virtual machine using
        the so-kvm-modify-hardware script. It can adjust CPU count, memory allocation,
        and PCI device passthrough. Changes are applied to the VM's libvirt configuration.
        The VM can optionally be started after modifications are complete.

    Exit Codes:
        0: Success
        1: Invalid parameters
        2: VM state error (running when should be stopped)
        3: Hardware configuration error
        4: System command error
        255: Unexpected error

    Logging:
        - All operations are logged to the salt minion log
        - Log entries are prefixed with 'qcow2 module:'
        - Hardware configuration changes are logged
        - Errors include detailed messages
        - Final status of modification is logged
    '''

    cmd = ['/usr/sbin/so-kvm-modify-hardware', '-v', vm_name]

    if cpu is not None:
        if isinstance(cpu, int) and cpu > 0:
            cmd.extend(['-c', str(cpu)])
        else:
            raise ValueError('cpu must be a positive integer.')
    if memory is not None:
        if isinstance(memory, int) and memory > 0:
            cmd.extend(['-m', str(memory)])
        else:
            raise ValueError('memory must be a positive integer.')
    if pci:
        # Handle PCI IDs (can be a single device or comma-separated list)
        if isinstance(pci, str):
            devices = [dev.strip() for dev in pci.split(',') if dev.strip()]
        elif isinstance(pci, list):
            devices = pci
        else:
            devices = [pci]

        # Add each device with its own -p flag
        for device in devices:
            cmd.extend(['-p', str(device)])
    if start:
        cmd.append('-s')

    log.info('qcow2 module: Executing command: {}'.format(' '.join(shlex.quote(arg) for arg in cmd)))

    try:
        result = subprocess.run(cmd, capture_output=True, text=True, check=False)
        ret = {
            'retcode': result.returncode,
            'stdout': result.stdout,
            'stderr': result.stderr
        }
        if result.returncode != 0:
            log.error('qcow2 module: Script execution failed with return code {}: {}'.format(result.returncode, result.stderr))
        else:
            log.info('qcow2 module: Script executed successfully.')
        return ret
    except Exception as e:
        log.error('qcow2 module: An error occurred while executing the script: {}'.format(e))
        raise

|

||||
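The argument handling in `modify_hardware_config` above can be exercised without running the script by isolating the command construction. The sketch below is illustrative only: the helper name `build_hardware_cmd` is hypothetical, it reuses the script path and the `-v`, `-c`, `-m`, `-p` and `-s` flags shown in the module, and it omits the module's input validation and logging.

```python
def build_hardware_cmd(vm_name, cpu=None, memory=None, pci=None, start=False):
    """Build the so-kvm-modify-hardware argument list without executing it."""
    cmd = ['/usr/sbin/so-kvm-modify-hardware', '-v', vm_name]
    if cpu is not None:
        cmd.extend(['-c', str(cpu)])
    if memory is not None:
        cmd.extend(['-m', str(memory)])

    # Normalise pci to a list: a comma-separated string, a list, or a single value.
    if isinstance(pci, str):
        devices = [dev.strip() for dev in pci.split(',') if dev.strip()]
    elif isinstance(pci, list):
        devices = pci
    elif pci:
        devices = [pci]
    else:
        devices = []

    # Each device gets its own -p flag.
    for device in devices:
        cmd.extend(['-p', str(device)])
    if start:
        cmd.append('-s')
    return cmd


cmd = build_hardware_cmd('sensor1', cpu=4, pci='0000:c7:00.0, 0000:c4:00.0', start=True)
```

Passing the arguments to `subprocess.run` as a list, as the module does, avoids shell interpretation of values such as `vm_name`. In the module, `shlex.quote` is applied only to the copy of the command written to the log.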

1092

salt/_runners/setup_hypervisor.py

Normal file

File diff suppressed because it is too large


@@ -1,258 +1,178 @@

|

||||

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

# Elastic License 2.0.

|

||||

{# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||

or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||

https://securityonion.net/license; you may not use this file except in compliance with the

|

||||

Elastic License 2.0. #}

|

||||

|

||||

{% set ISAIRGAP = salt['pillar.get']('global:airgap', False) %}

|

||||

{% import_yaml 'salt/minion.defaults.yaml' as saltversion %}

|

||||

{% set saltversion = saltversion.salt.minion.version %}

|

||||

|

||||

{# this is the list we are returning from this map file, it gets built below #}

|

||||

{% set allowed_states= [] %}

|

||||

{# Define common state groups to reduce redundancy #}

|

||||

{% set base_states = [

|

||||

'common',

|

||||

'patch.os.schedule',

|

||||

'motd',

|

||||

'salt.minion-check',

|

||||

'sensoroni',

|

||||

'salt.lasthighstate',

|

||||

'salt.minion'

|

||||

] %}

|

||||

|

||||

{% set ssl_states = [

|

||||

'ssl',

|

||||

'telegraf',

|

||||

'firewall',

|

||||

'schedule',

|

||||

'docker_clean'

|

||||

] %}

|

||||

|

||||

{% set manager_states = [

|

||||

'salt.master',

|

||||

'ca',

|

||||

'registry',

|

||||

'manager',

|

||||

'nginx',

|

||||

'influxdb',

|

||||

'soc',

|

||||

'kratos',

|

||||

'hydra',

|

||||

'elasticfleet',

|

||||

'elastic-fleet-package-registry',

|

||||

'idstools',

|

||||

'suricata.manager',

|

||||

'utility'

|

||||

] %}

|

||||

|

||||

{% set sensor_states = [

|

||||

'pcap',

|

||||

'suricata',

|

||||

'healthcheck',

|

||||

'tcpreplay',

|

||||

'zeek',

|

||||

'strelka'

|

||||

] %}

|

||||

|

||||

{% set kafka_states = [

|

||||

'kafka'

|

||||

] %}

|

||||

|

||||

{% set stig_states = [

|

||||

'stig'

|

||||

] %}

|

||||

|

||||

{% set elastic_stack_states = [

|

||||

'elasticsearch',

|

||||

'elasticsearch.auth',

|

||||

'kibana',

|

||||

'kibana.secrets',

|

||||

'elastalert',

|

||||

'logstash',

|

||||

'redis'

|

||||

] %}

|

||||

|

||||

{# Initialize the allowed_states list #}

|

||||

{% set allowed_states = [] %}

|

||||

|

||||

{% if grains.saltversion | string == saltversion | string %}

|

||||

{# Map role-specific states #}

|

||||

{% set role_states = {

|

||||

'so-eval': (

|

||||

ssl_states +

|

||||

manager_states +

|

||||

sensor_states +

|

||||

elastic_stack_states | reject('equalto', 'logstash') | list

|

||||

),

|

||||

'so-heavynode': (

|

||||

ssl_states +

|

||||

sensor_states +

|

||||

['elasticagent', 'elasticsearch', 'logstash', 'redis', 'nginx']

|

||||

),

|

||||

'so-idh': (

|

||||

ssl_states +

|

||||

['idh']

|

||||

),

|

||||

'so-import': (

|

||||

ssl_states +

|

||||

manager_states +

|

||||

sensor_states | reject('equalto', 'strelka') | reject('equalto', 'healthcheck') | list +

|

||||

['elasticsearch', 'elasticsearch.auth', 'kibana', 'kibana.secrets', 'strelka.manager']

|

||||

),

|

||||

'so-manager': (

|

||||

ssl_states +

|

||||

manager_states +

|

||||

['salt.cloud', 'libvirt.packages', 'libvirt.ssh.users', 'strelka.manager'] +

|

||||

stig_states +

|

||||

kafka_states +

|

||||

elastic_stack_states

|

||||

),

|

||||

'so-managerhype': (

|

||||

ssl_states +

|

||||

manager_states +

|

||||

['salt.cloud', 'strelka.manager', 'hypervisor', 'libvirt'] +

|

||||

stig_states +

|

||||

kafka_states +

|

||||

elastic_stack_states

|

||||

),

|

||||

'so-managersearch': (

|

||||

ssl_states +

|

||||

manager_states +

|

||||

['strelka.manager'] +

|

||||

stig_states +

|

||||

kafka_states +

|

||||

elastic_stack_states

|

||||

),

|

||||

'so-searchnode': (

|

||||

ssl_states +

|

||||