mirror of https://github.com/Security-Onion-Solutions/securityonion.git

synced 2026-03-24 13:32:37 +01:00

Compare commits: customulim ... TOoSmOotH-

1 commit

| Author | SHA1 | Date |
|---|---|---|
| | cef71b41e2 | |

`.github/DISCUSSION_TEMPLATE/2-4.yml` (vendored, 9 lines changed)

```diff
@@ -2,11 +2,13 @@ body:
   - type: markdown
     attributes:
       value: |
+        ⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️
+
         If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support
   - type: dropdown
     attributes:
       label: Version
-      description: Which version of Security Onion are you asking about?
+      description: Which version of Security Onion 2.4.x are you asking about?
       options:
         -
         - 2.4.10
```

```diff
@@ -31,9 +33,6 @@ body:
         - 2.4.180
         - 2.4.190
         - 2.4.200
-        - 2.4.201
-        - 2.4.210
-        - 2.4.211
         - Other (please provide detail below)
     validations:
       required: true
```

```diff
@@ -95,7 +94,7 @@ body:
     attributes:
       label: Hardware Specs
       description: >
-        Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://securityonion.net/docs/hardware?
+        Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://docs.securityonion.net/en/2.4/hardware.html?
       options:
         -
         - Meets minimum requirements
```

`.github/DISCUSSION_TEMPLATE/3-0.yml` (vendored, 177 lines changed)

```diff
@@ -1,177 +0,0 @@
-body:
-  - type: markdown
-    attributes:
-      value: |
-        If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support
-  - type: dropdown
-    attributes:
-      label: Version
-      description: Which version of Security Onion are you asking about?
-      options:
-        -
-        - 3.0.0
-        - Other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Installation Method
-      description: How did you install Security Onion?
-      options:
-        -
-        - Security Onion ISO image
-        - Cloud image (Amazon, Azure, Google)
-        - Network installation on Oracle 9 (unsupported)
-        - Other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Description
-      description: >
-        Is this discussion about installation, configuration, upgrading, or other?
-      options:
-        -
-        - installation
-        - configuration
-        - upgrading
-        - other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Installation Type
-      description: >
-        When you installed, did you choose Import, Eval, Standalone, Distributed, or something else?
-      options:
-        -
-        - Import
-        - Eval
-        - Standalone
-        - Distributed
-        - other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Location
-      description: >
-        Is this deployment in the cloud, on-prem with Internet access, or airgap?
-      options:
-        -
-        - cloud
-        - on-prem with Internet access
-        - airgap
-        - other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Hardware Specs
-      description: >
-        Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://securityonion.net/docs/hardware?
-      options:
-        -
-        - Meets minimum requirements
-        - Exceeds minimum requirements
-        - Does not meet minimum requirements
-        - other (please provide detail below)
-    validations:
-      required: true
-  - type: input
-    attributes:
-      label: CPU
-      description: How many CPU cores do you have?
-    validations:
-      required: true
-  - type: input
-    attributes:
-      label: RAM
-      description: How much RAM do you have?
-    validations:
-      required: true
-  - type: input
-    attributes:
-      label: Storage for /
-      description: How much storage do you have for the / partition?
-    validations:
-      required: true
-  - type: input
-    attributes:
-      label: Storage for /nsm
-      description: How much storage do you have for the /nsm partition?
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Network Traffic Collection
-      description: >
-        Are you collecting network traffic from a tap or span port?
-      options:
-        -
-        - tap
-        - span port
-        - other (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Network Traffic Speeds
-      description: >
-        How much network traffic are you monitoring?
-      options:
-        -
-        - Less than 1Gbps
-        - 1Gbps to 10Gbps
-        - more than 10Gbps
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Status
-      description: >
-        Does SOC Grid show all services on all nodes as running OK?
-      options:
-        -
-        - Yes, all services on all nodes are running OK
-        - No, one or more services are failed (please provide detail below)
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Salt Status
-      description: >
-        Do you get any failures when you run "sudo salt-call state.highstate"?
-      options:
-        -
-        - Yes, there are salt failures (please provide detail below)
-        - No, there are no failures
-    validations:
-      required: true
-  - type: dropdown
-    attributes:
-      label: Logs
-      description: >
-        Are there any additional clues in /opt/so/log/?
-      options:
-        -
-        - Yes, there are additional clues in /opt/so/log/ (please provide detail below)
-        - No, there are no additional clues
-    validations:
-      required: true
-  - type: textarea
-    attributes:
-      label: Detail
-      description: Please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and then provide detailed information to help us help you.
-      placeholder: |-
-        STOP! Before typing, please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 in their entirety!
-
-        If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support
-    validations:
-      required: true
-  - type: checkboxes
-    attributes:
-      label: Guidelines
-      options:
-        - label: I have read the discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and assert that I have followed the guidelines.
-          required: true
```

`.github/workflows/pythontest.yml` (vendored, 2 lines changed)

```diff
@@ -13,7 +13,7 @@ jobs:
     strategy:
       fail-fast: false
       matrix:
-        python-version: ["3.14"]
+        python-version: ["3.13"]
         python-code-path: ["salt/sensoroni/files/analyzers", "salt/manager/tools/sbin"]
 
     steps:
```

```diff
@@ -1,17 +1,17 @@
-### 2.4.210-20260302 ISO image released on 2026/03/02
+### 2.4.201-20260114 ISO image released on 2026/1/15
 
 
 ### Download and Verify
 
-2.4.210-20260302 ISO image:
-https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso
+2.4.201-20260114 ISO image:
+https://download.securityonion.net/file/securityonion/securityonion-2.4.201-20260114.iso
 
-MD5: 575F316981891EBED2EE4E1F42A1F016
-SHA1: 600945E8823221CBC5F1C056084A71355308227E
-SHA256: A6AA6471125F07FA6E2796430E94BEAFDEF728E833E9728FDFA7106351EBC47E
+MD5: 20E926E433203798512EF46E590C89B9
+SHA1: 779E4084A3E1A209B494493B8F5658508B6014FA
+SHA256: 3D10E7C885AEC5C5D4F4E50F9644FF9728E8C0A2E36EBB8C96B32569685A7C40
 
 Signature for ISO image:
-https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig
+https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.201-20260114.iso.sig
 
 Signing key:
 https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS
```

````diff
@@ -25,22 +25,22 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.
 
 Download the signature file for the ISO:
 ```
-wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.210-20260302.iso.sig
+wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.201-20260114.iso.sig
 ```
 
 Download the ISO image:
 ```
-wget https://download.securityonion.net/file/securityonion/securityonion-2.4.210-20260302.iso
+wget https://download.securityonion.net/file/securityonion/securityonion-2.4.201-20260114.iso
 ```
 
 Verify the downloaded ISO image using the signature file:
 ```
-gpg --verify securityonion-2.4.210-20260302.iso.sig securityonion-2.4.210-20260302.iso
+gpg --verify securityonion-2.4.201-20260114.iso.sig securityonion-2.4.201-20260114.iso
 ```
 
 The output should show "Good signature" and the Primary key fingerprint should match what's shown below:
 ```
-gpg: Signature made Mon 02 Mar 2026 11:55:24 AM EST using RSA key ID FE507013
+gpg: Signature made Wed 14 Jan 2026 05:23:39 PM EST using RSA key ID FE507013
 gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"
 gpg: WARNING: This key is not certified with a trusted signature!
 gpg: There is no indication that the signature belongs to the owner.
````

```diff
@@ -50,4 +50,4 @@ Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013
 If it fails to verify, try downloading again. If it still fails to verify, try downloading from another computer or another network.
 
 Once you've verified the ISO image, you're ready to proceed to our Installation guide:
-https://securityonion.net/docs/installation
+https://docs.securityonion.net/en/2.4/installation.html
```
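Alongside the GPG signature, the release notes publish MD5, SHA1, and SHA256 values for the image. A minimal Python sketch of checking a downloaded ISO against the published SHA256 value follows; the function names are illustrative and not part of any Security Onion tooling. A matching hash only shows that the file equals the published value; the `gpg --verify` step is what ties the image to the signing key.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash the file in 1 MiB chunks so a multi-gigabyte ISO never has to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, published_hex):
    """Compare against a published hash; lowercased because the notes print it in uppercase."""
    return hmac.compare_digest(sha256_of_file(path), published_hex.strip().lower())
```

For example, assuming the file on disk is an intact copy of the 2.4.201 image, `matches_published("securityonion-2.4.201-20260114.iso", "3D10E7C885AEC5C5D4F4E50F9644FF9728E8C0A2E36EBB8C96B32569685A7C40")` should return `True`.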

66

README.md

66

README.md

@@ -1,58 +1,50 @@

|

|||||||

<p align="center">

|

## Security Onion 2.4

|

||||||

<img src="https://securityonionsolutions.com/logo/logo-so-onion-dark.svg" width="400" alt="Security Onion Logo">

|

|

||||||

</p>

|

|

||||||

|

|

||||||

# Security Onion

|

Security Onion 2.4 is here!

|

||||||

|

|

||||||

Security Onion is a free and open Linux distribution for threat hunting, enterprise security monitoring, and log management. It includes a comprehensive suite of tools designed to work together to provide visibility into your network and host activity.

|

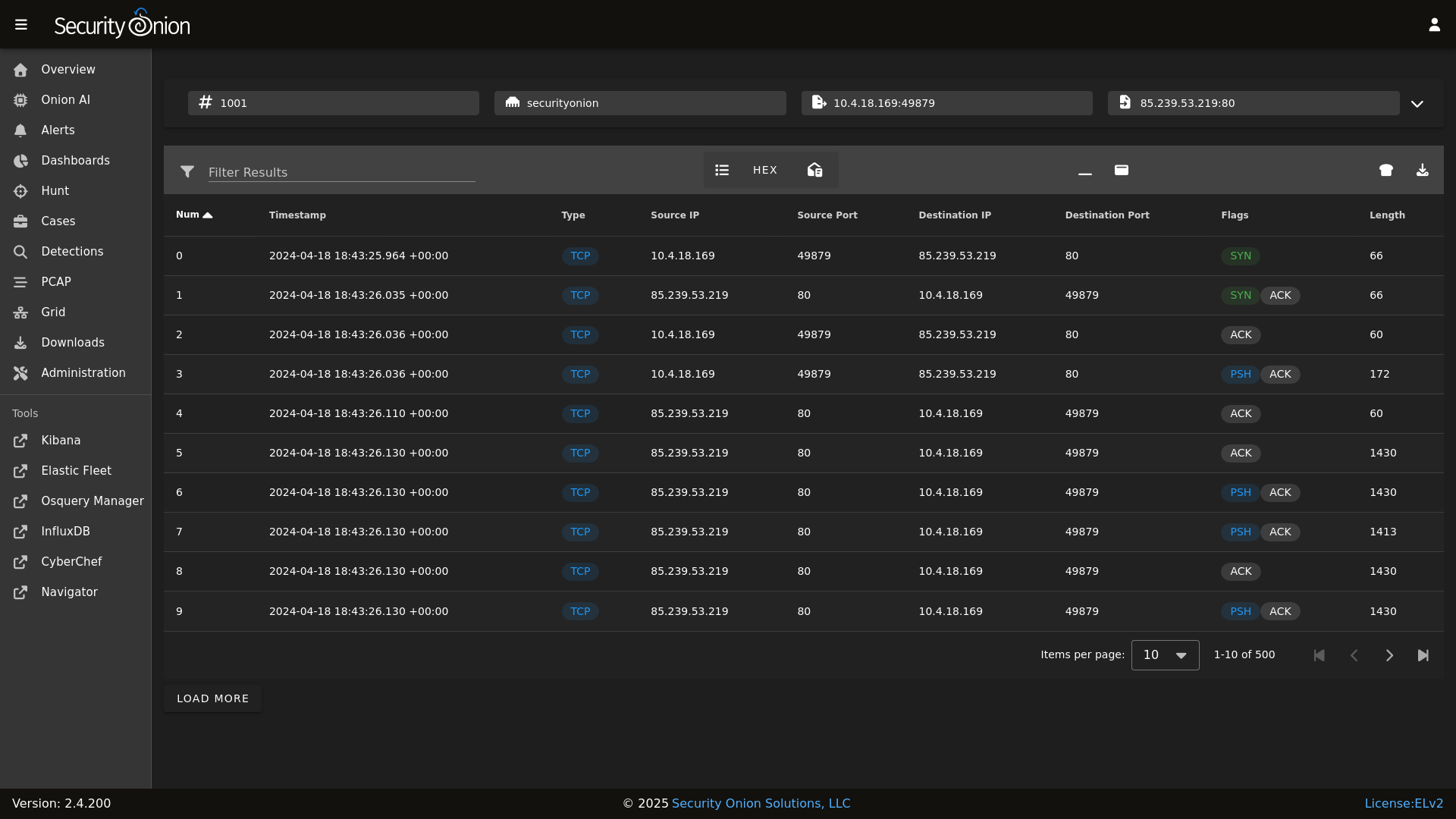

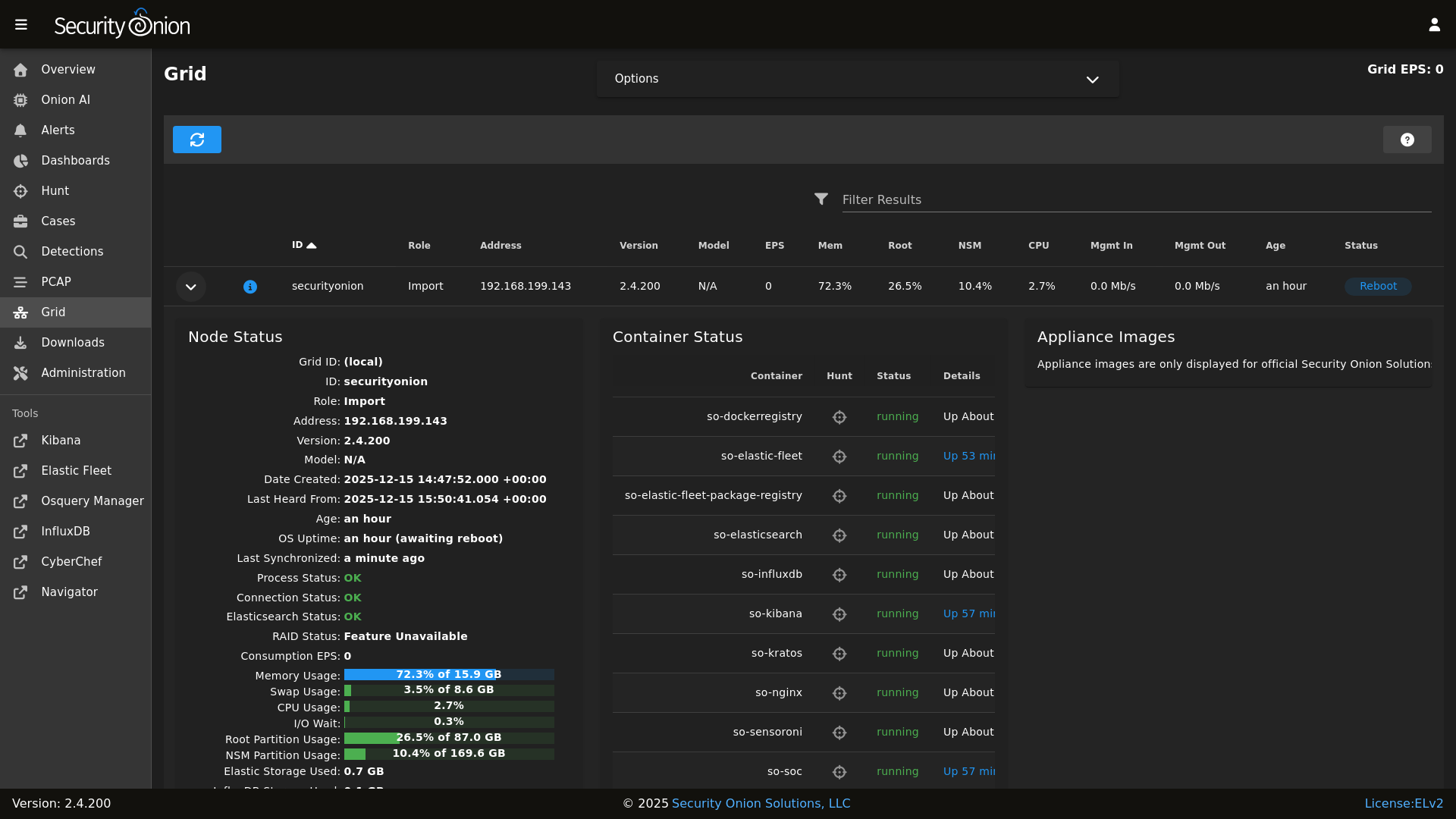

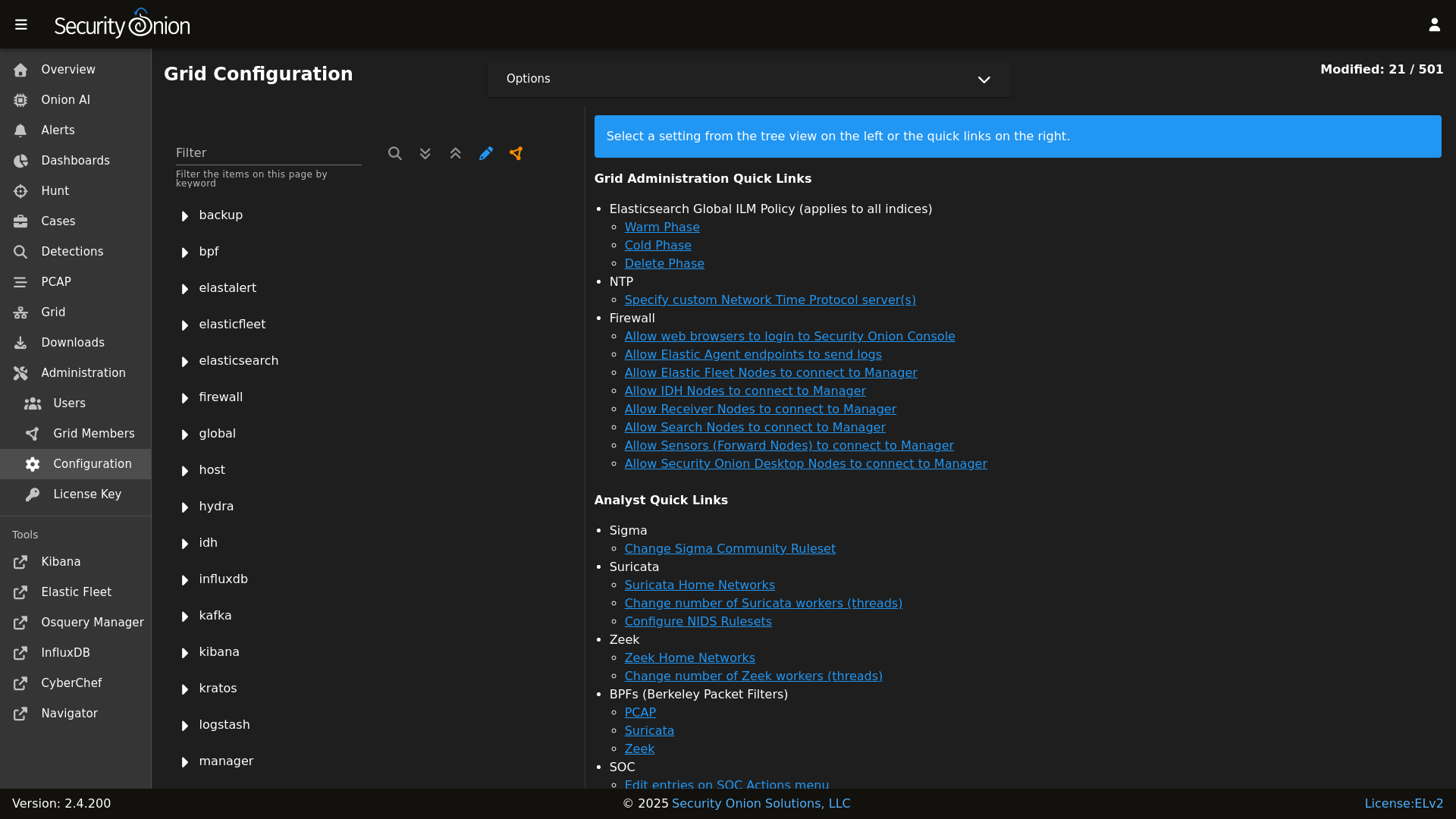

## Screenshots

|

||||||

|

|

||||||

## ✨ Features

|

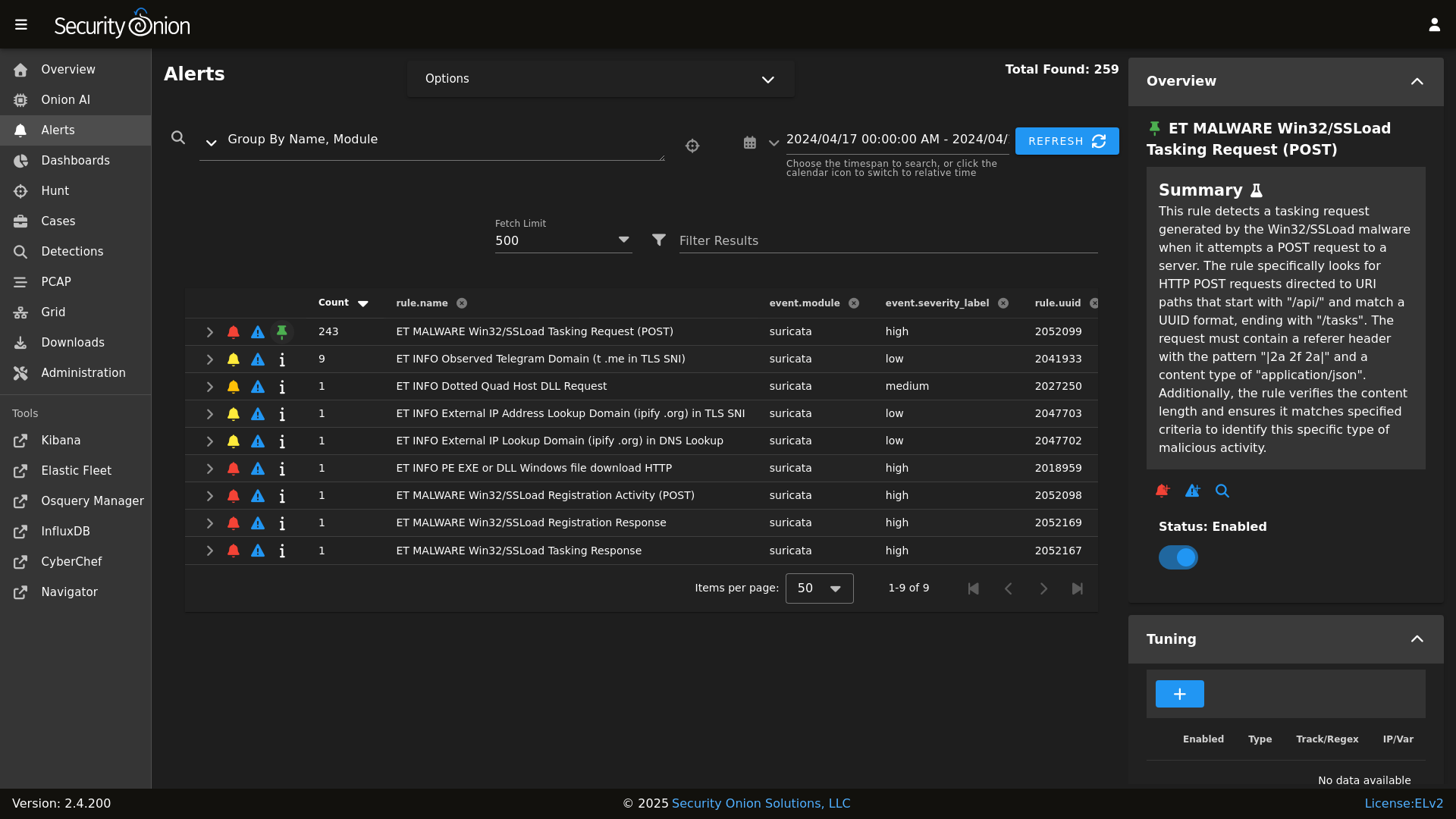

Alerts

|

||||||

|

|

||||||

|

|

||||||

Security Onion includes everything you need to monitor your network and host systems:

|

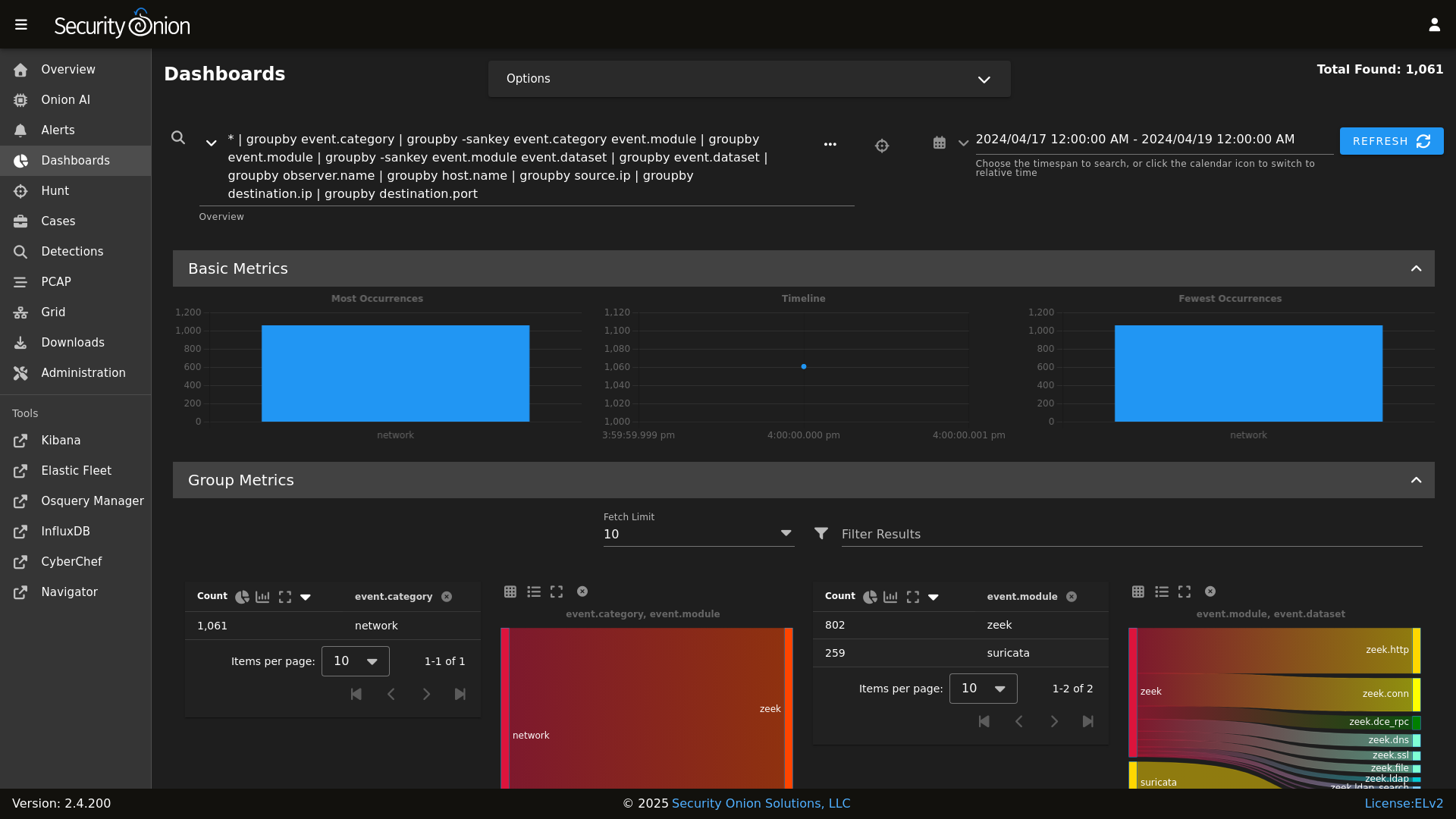

Dashboards

|

||||||

|

|

||||||

|

|

||||||

* **Security Onion Console (SOC)**: A unified web interface for analyzing security events and managing your grid.

|

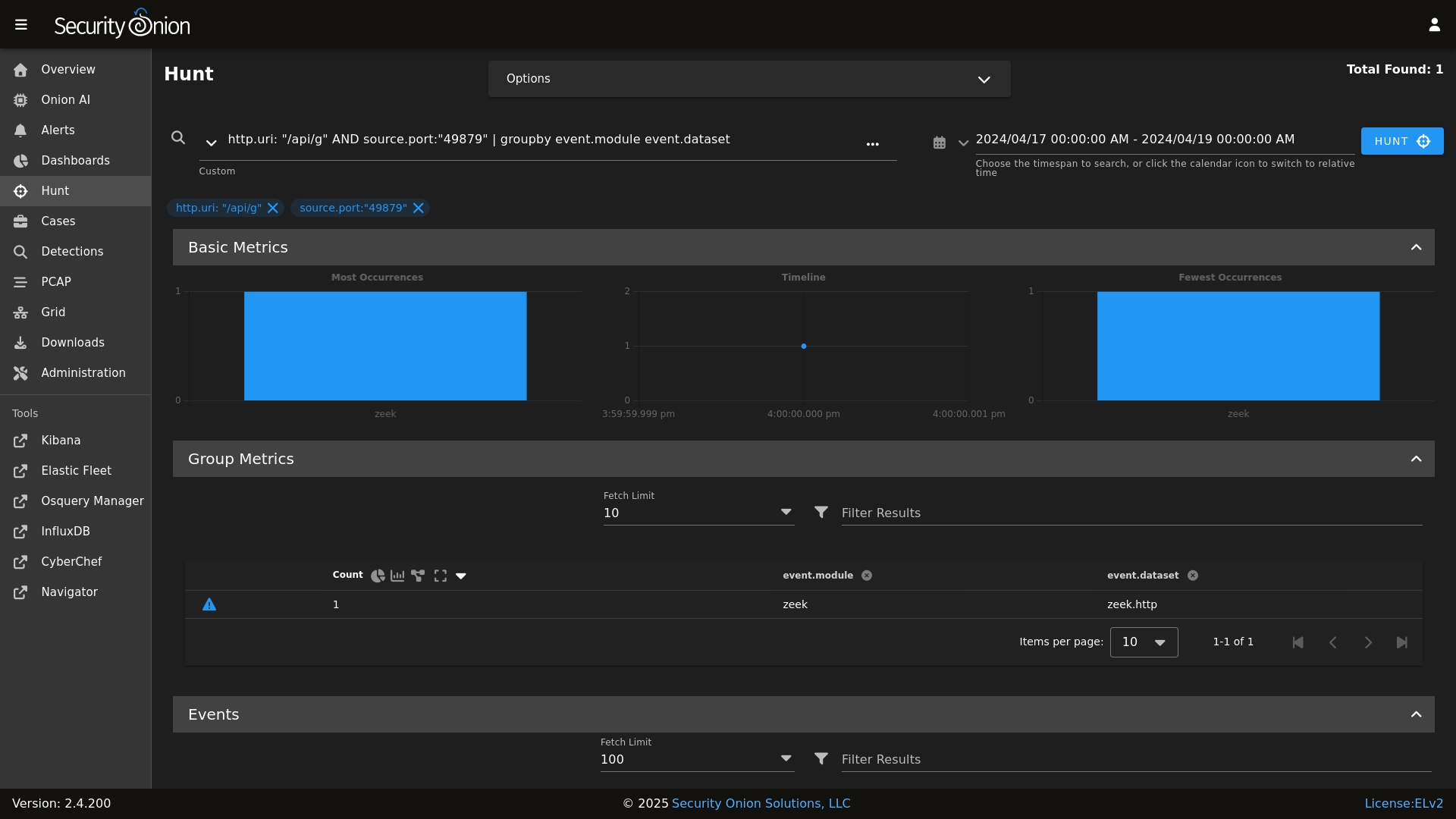

Hunt

|

||||||

* **Elastic Stack**: Powerful search backed by Elasticsearch.

|

|

||||||

* **Intrusion Detection**: Network-based IDS with Suricata and host-based monitoring with Elastic Fleet.

|

|

||||||

* **Network Metadata**: Detailed network metadata generated by Zeek or Suricata.

|

|

||||||

* **Full Packet Capture**: Retain and analyze raw network traffic with Suricata PCAP.

|

|

||||||

|

|

||||||

## ⭐ Security Onion Pro

|

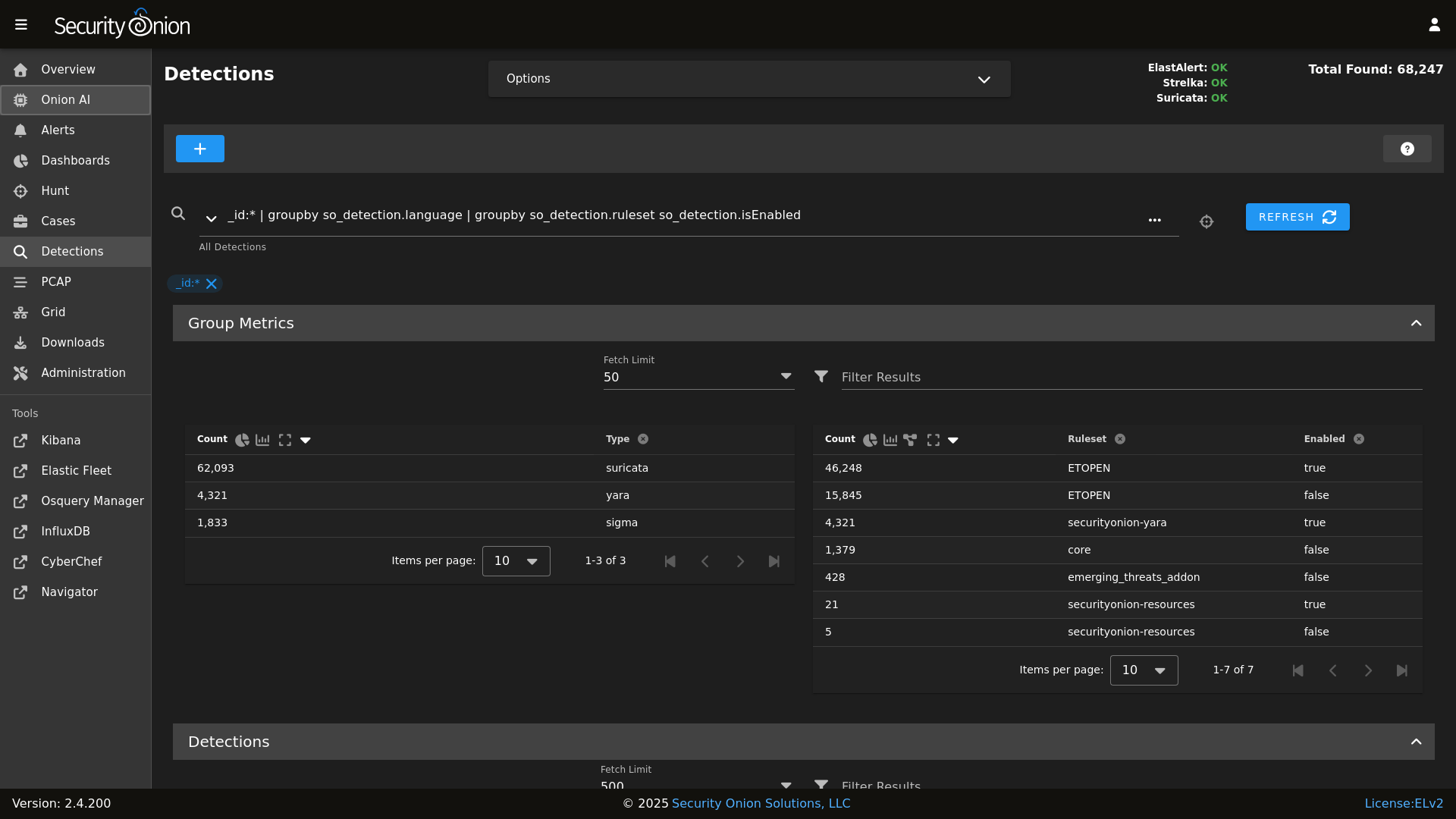

Detections

|

||||||

|

|

||||||

|

|

||||||

For organizations and enterprises requiring advanced capabilities, **Security Onion Pro** offers additional features designed for scale and efficiency:

|

PCAP

|

||||||

|

|

||||||

|

|

||||||

* **Onion AI**: Leverage powerful AI-driven insights to accelerate your analysis and investigations.

|

Grid

|

||||||

* **Enterprise Features**: Enhanced tools and integrations tailored for enterprise-grade security operations.

|

|

||||||

|

|

||||||

For more information, visit the [Security Onion Pro](https://securityonionsolutions.com/pro) page.

|

Config

|

||||||

|

|

||||||

|

|

||||||

## ☁️ Cloud Deployment

|

### Release Notes

|

||||||

|

|

||||||

Security Onion is available and ready to deploy in the **AWS**, **Azure**, and **Google Cloud (GCP)** marketplaces.

|

https://docs.securityonion.net/en/2.4/release-notes.html

|

||||||

|

|

||||||

## 🚀 Getting Started

|

### Requirements

|

||||||

|

|

||||||

| Goal | Resource |

|

https://docs.securityonion.net/en/2.4/hardware.html

|

||||||

| :--- | :--- |

|

|

||||||

| **Download** | [Security Onion ISO](https://securityonion.net/docs/download) |

|

|

||||||

| **Requirements** | [Hardware Guide](https://securityonion.net/docs/hardware) |

|

|

||||||

| **Install** | [Installation Instructions](https://securityonion.net/docs/installation) |

|

|

||||||

| **What's New** | [Release Notes](https://securityonion.net/docs/release-notes) |

|

|

||||||

|

|

||||||

## 📖 Documentation & Support

|

### Download

|

||||||

|

|

||||||

For more detailed information, please visit our [Documentation](https://docs.securityonion.net).

|

https://docs.securityonion.net/en/2.4/download.html

|

||||||

|

|

||||||

* **FAQ**: [Frequently Asked Questions](https://securityonion.net/docs/faq)

|

### Installation

|

||||||

* **Community**: [Discussions & Support](https://securityonion.net/docs/community-support)

|

|

||||||

* **Training**: [Official Training](https://securityonion.net/training)

|

|

||||||

|

|

||||||

## 🤝 Contributing

|

https://docs.securityonion.net/en/2.4/installation.html

|

||||||

|

|

||||||

We welcome contributions! Please see our [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines on how to get involved.

|

### FAQ

|

||||||

|

|

||||||

## 🛡️ License

|

https://docs.securityonion.net/en/2.4/faq.html

|

||||||

|

|

||||||

Security Onion is licensed under the terms of the license found in the [LICENSE](LICENSE) file.

|

### Feedback

|

||||||

|

|

||||||

---

|

https://docs.securityonion.net/en/2.4/community-support.html

|

||||||

*Built with 🧅 by Security Onion Solutions.*

|

|

||||||

|

|||||||

```diff
@@ -4,7 +4,6 @@
 
 | Version | Supported |
 | ------- | ------------------ |
-| 3.x | :white_check_mark: |
 | 2.4.x | :white_check_mark: |
 | 2.3.x | :x: |
 | 16.04.x | :x: |
```

```diff
@@ -1,2 +0,0 @@
-ca:
-  server:
```

```diff
@@ -1,6 +1,5 @@
 base:
   '*':
-    - ca
     - global.soc_global
     - global.adv_global
     - docker.soc_docker
```

```diff
@@ -87,6 +86,8 @@ base:
     - zeek.adv_zeek
     - bpf.soc_bpf
     - bpf.adv_bpf
+    - pcap.soc_pcap
+    - pcap.adv_pcap
     - suricata.soc_suricata
     - suricata.adv_suricata
     - minions.{{ grains.id }}
```

```diff
@@ -132,6 +133,8 @@ base:
     - zeek.adv_zeek
     - bpf.soc_bpf
     - bpf.adv_bpf
+    - pcap.soc_pcap
+    - pcap.adv_pcap
     - suricata.soc_suricata
     - suricata.adv_suricata
     - minions.{{ grains.id }}
```

```diff
@@ -181,6 +184,8 @@ base:
     - zeek.adv_zeek
     - bpf.soc_bpf
     - bpf.adv_bpf
+    - pcap.soc_pcap
+    - pcap.adv_pcap
     - suricata.soc_suricata
     - suricata.adv_suricata
     - minions.{{ grains.id }}
```

```diff
@@ -203,6 +208,8 @@ base:
     - zeek.adv_zeek
     - bpf.soc_bpf
     - bpf.adv_bpf
+    - pcap.soc_pcap
+    - pcap.adv_pcap
     - suricata.soc_suricata
     - suricata.adv_suricata
     - strelka.soc_strelka
```

```diff
@@ -289,6 +296,8 @@ base:
     - zeek.adv_zeek
     - bpf.soc_bpf
     - bpf.adv_bpf
+    - pcap.soc_pcap
+    - pcap.adv_pcap
     - suricata.soc_suricata
     - suricata.adv_suricata
     - strelka.soc_strelka
```

```diff
@@ -1,14 +1,24 @@
+from os import path
 import subprocess
 
 def check():
 
+  osfam = __grains__['os_family']
   retval = 'False'
 
-  cmd = 'needs-restarting -r > /dev/null 2>&1'
+  if osfam == 'Debian':
+    if path.exists('/var/run/reboot-required'):
+      retval = 'True'
 
-  try:
-    needs_restarting = subprocess.check_call(cmd, shell=True)
-  except subprocess.CalledProcessError:
-    retval = 'True'
+  elif osfam == 'RedHat':
+    cmd = 'needs-restarting -r > /dev/null 2>&1'
+
+    try:
+      needs_restarting = subprocess.check_call(cmd, shell=True)
+    except subprocess.CalledProcessError:
+      retval = 'True'
+
+  else:
+    retval = 'Unsupported OS: %s' % os
 
   return retval
```
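The added lines branch on the `os_family` grain: Debian-family hosts check for the `/var/run/reboot-required` flag file, RedHat-family hosts run `needs-restarting -r`. A standalone Python sketch of the same branching follows, assuming the grain value is passed in as an argument (Salt injects `__grains__` only into loaded modules); `flag_path` is a hypothetical parameter added so the Debian branch can be exercised. One difference is deliberate: in the hunk's `else` branch, `'Unsupported OS: %s' % os` refers to `os`, which `from os import path` does not bind, so that branch would probably raise `NameError`; the sketch formats the grain value instead.

```python
import os.path
import subprocess


def reboot_required(os_family, flag_path="/var/run/reboot-required"):
    """Return 'True', 'False', or an 'Unsupported OS' message, mirroring check()."""
    retval = "False"
    if os_family == "Debian":
        # Debian-family systems create this flag file when an update needs a reboot.
        if os.path.exists(flag_path):
            retval = "True"
    elif os_family == "RedHat":
        # `needs-restarting -r` exits non-zero when a reboot is needed; through the
        # shell, a missing binary also yields a non-zero exit and therefore 'True'.
        try:
            subprocess.check_call("needs-restarting -r > /dev/null 2>&1", shell=True)
        except subprocess.CalledProcessError:
            retval = "True"
    else:
        # Report the grain value rather than an unbound name.
        retval = "Unsupported OS: %s" % os_family
    return retval
```

Like the original, the function returns strings rather than booleans, so callers that compare against `'True'` keep working.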

```diff
@@ -15,7 +15,11 @@
   'salt.minion-check',
   'sensoroni',
   'salt.lasthighstate',
-  'salt.minion',
+  'salt.minion'
+] %}
+
+{% set ssl_states = [
+  'ssl',
   'telegraf',
   'firewall',
   'schedule',
```

```diff
@@ -24,7 +28,7 @@
 
 {% set manager_states = [
   'salt.master',
-  'ca.server',
+  'ca',
   'registry',
   'manager',
   'nginx',
```

```diff
@@ -38,6 +42,7 @@
 ] %}
 
 {% set sensor_states = [
+  'pcap',
   'suricata',
   'healthcheck',
   'tcpreplay',
```

```diff
@@ -70,24 +75,28 @@
 {# Map role-specific states #}
 {% set role_states = {
   'so-eval': (
+    ssl_states +
     manager_states +
     sensor_states +
-    elastic_stack_states | reject('equalto', 'logstash') | list +
-    ['logstash.ssl']
+    elastic_stack_states | reject('equalto', 'logstash') | list
   ),
   'so-heavynode': (
+    ssl_states +
     sensor_states +
     ['elasticagent', 'elasticsearch', 'logstash', 'redis', 'nginx']
   ),
   'so-idh': (
+    ssl_states +
     ['idh']
   ),
   'so-import': (
+    ssl_states +
     manager_states +
     sensor_states | reject('equalto', 'strelka') | reject('equalto', 'healthcheck') | list +
-    ['elasticsearch', 'elasticsearch.auth', 'kibana', 'kibana.secrets', 'logstash.ssl', 'strelka.manager']
+    ['elasticsearch', 'elasticsearch.auth', 'kibana', 'kibana.secrets', 'strelka.manager']
   ),
   'so-manager': (
+    ssl_states +
     manager_states +
     ['salt.cloud', 'libvirt.packages', 'libvirt.ssh.users', 'strelka.manager'] +
     stig_states +
```

```diff
@@ -95,6 +104,7 @@
     elastic_stack_states
   ),
   'so-managerhype': (
+    ssl_states +
     manager_states +
     ['salt.cloud', 'strelka.manager', 'hypervisor', 'libvirt'] +
     stig_states +
```

```diff
@@ -102,6 +112,7 @@
     elastic_stack_states
   ),
   'so-managersearch': (
+    ssl_states +
     manager_states +
     ['salt.cloud', 'libvirt.packages', 'libvirt.ssh.users', 'strelka.manager'] +
     stig_states +
```

```diff
@@ -109,10 +120,12 @@
     elastic_stack_states
   ),
   'so-searchnode': (
+    ssl_states +
     ['kafka.ca', 'kafka.ssl', 'elasticsearch', 'logstash', 'nginx'] +
     stig_states
   ),
   'so-standalone': (
+    ssl_states +
     manager_states +
     ['salt.cloud', 'libvirt.packages', 'libvirt.ssh.users'] +
     sensor_states +
```

```diff
@@ -121,24 +134,29 @@
     elastic_stack_states
   ),
   'so-sensor': (
+    ssl_states +
     sensor_states +
     ['nginx'] +
     stig_states
   ),
   'so-fleet': (
+    ssl_states +
     stig_states +
     ['logstash', 'nginx', 'healthcheck', 'elasticfleet']
   ),
   'so-receiver': (
+    ssl_states +
     kafka_states +
     stig_states +
     ['logstash', 'redis']
   ),
   'so-hypervisor': (
+    ssl_states +
     stig_states +
     ['hypervisor', 'libvirt']
   ),
   'so-desktop': (
+    ['ssl', 'docker_clean', 'telegraf'] +
     stig_states
   )
 } %}
```
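In the new map, most roles start from `ssl_states`, and some entries filter a shared list with Jinja's `reject('equalto', ...)` before concatenating. A rough Python analogue of how the new `'so-import'` entry is assembled follows. The three lists hold only the items visible in these hunks (the real definitions continue past them), so the result is illustrative, not the actual state list; `reject_equalto` is a hypothetical helper name.

```python
def reject_equalto(items, value):
    """Python analogue of Jinja's `items | reject('equalto', value) | list`."""
    return [item for item in items if item != value]


# Excerpts only: each list is cut off where its hunk ends.
ssl_states = ["ssl", "telegraf", "firewall", "schedule"]
manager_states = ["salt.master", "ca", "registry", "manager", "nginx"]
sensor_states = ["pcap", "suricata", "healthcheck", "tcpreplay"]

# 'so-import' after the change: ssl_states is prepended and 'logstash.ssl' is gone.
so_import = (
    ssl_states
    + manager_states
    + reject_equalto(reject_equalto(sensor_states, "strelka"), "healthcheck")
    + ["elasticsearch", "elasticsearch.auth", "kibana", "kibana.secrets", "strelka.manager"]
)
```

Because `+` on lists concatenates in order, the role's states keep the SSL-related entries first, followed by the manager, filtered sensor, and role-specific entries.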

```diff
@@ -1,12 +1,10 @@
 {% macro remove_comments(bpfmerged, app) %}
 
   {# remove comments from the bpf #}
-  {% set app_list = [] %}
   {% for bpf in bpfmerged[app] %}
-    {% if not bpf.strip().startswith('#') %}
-      {% do app_list.append(bpf) %}
+    {% if bpf.strip().startswith('#') %}
+      {% do bpfmerged[app].pop(loop.index0) %}
     {% endif %}
   {% endfor %}
-  {% do bpfmerged.update({app: app_list}) %}
 
 {% endmacro %}
```
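The two sides of `remove_comments` drop comment entries differently: the removed lines build a new list of non-comment entries, while the added lines call `pop(loop.index0)` on the list being iterated. A Python analogue of both follows, assuming Jinja's `for` walks the list the way a Python list iterator does (`enumerate` stands in for `loop.index0`). The function names are hypothetical.

```python
def strip_comments_filter(filters):
    """Like the removed lines: collect the non-comment entries into a new list."""
    return [f for f in filters if not f.strip().startswith("#")]


def strip_comments_pop(filters):
    """Like the added lines: pop comment entries by loop counter while iterating."""
    for index0, f in enumerate(filters):
        if f.strip().startswith("#"):
            # Popping shifts later items left, so the iterator skips the next entry.
            filters.pop(index0)
    return filters
```

With two adjacent comment lines, `strip_comments_pop(["# a", "# b", "not port 22"])` leaves `["# b", "not port 22"]` under this model, while the filter version returns `["not port 22"]`. Whether the Jinja macro behaves exactly this way depends on how Jinja's loop iterates, so treat this as an illustration of the hazard rather than a confirmed result.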

```diff
@@ -1,15 +1,21 @@
 {% from 'vars/globals.map.jinja' import GLOBALS %}
 {% set PCAP_BPF_STATUS = 0 %}
+{% set STENO_BPF_COMPILED = "" %}
 
+{% if GLOBALS.pcap_engine == "TRANSITION" %}
+  {% set PCAPBPF = ["ip and host 255.255.255.1 and port 1"] %}
+{% else %}
 {% import_yaml 'bpf/defaults.yaml' as BPFDEFAULTS %}
 {% set BPFMERGED = salt['pillar.get']('bpf', BPFDEFAULTS.bpf, merge=True) %}
 {% import 'bpf/macros.jinja' as MACROS %}
 {{ MACROS.remove_comments(BPFMERGED, 'pcap') }}
 {% set PCAPBPF = BPFMERGED.pcap %}
+{% endif %}
 
 {% if PCAPBPF %}
-  {% set PCAP_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + PCAPBPF|join(" "),cwd='/root') %}
+  {% set PCAP_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ PCAPBPF|join(" "), cwd='/root') %}
   {% if PCAP_BPF_CALC['retcode'] == 0 %}
     {% set PCAP_BPF_STATUS = 1 %}
+    {% set STENO_BPF_COMPILED = ",\\\"--filter=" + PCAP_BPF_CALC['stdout'] + "\\\"" %}
   {% endif %}
 {% endif %}
```
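The PCAP, Suricata, and Zeek hunks all swap `cmd.script`, which fetches `so-bpf-compile` from `salt://common/tools/sbin/` and runs it, for `cmd.run_all` against an installed `/usr/sbin/so-bpf-compile`, then read the result's `retcode` (and, for PCAP, `stdout`). A Python sketch of that pattern follows. `compile_bpf`, `bpf_status`, and the `tool`/`cwd` parameters are hypothetical, added so the logic can be exercised without the real helper; the sketch splits the command with `shlex` and runs it without a shell, which is its own choice, not necessarily how Salt executes the string.

```python
import shlex
import subprocess


def compile_bpf(interface, filters, tool="/usr/sbin/so-bpf-compile", cwd=None):
    """Run the compile helper and return a dict with retcode/stdout/stderr keys."""
    # Same argument layout as the hunks: interface, then the filter entries joined by spaces.
    args = [tool, interface] + shlex.split(" ".join(filters))
    proc = subprocess.run(args, capture_output=True, text=True, cwd=cwd)
    return {
        "retcode": proc.returncode,
        # Trailing newlines are trimmed here for convenience.
        "stdout": proc.stdout.rstrip("\n"),
        "stderr": proc.stderr.rstrip("\n"),
    }


def bpf_status(interface, filters, **kwargs):
    """Return (status, compiled): status is 1 only when compilation exits 0."""
    if not filters:
        return 0, ""
    result = compile_bpf(interface, filters, **kwargs)
    if result["retcode"] == 0:
        return 1, result["stdout"]
    return 0, ""
```

As in the templates, an empty filter list never invokes the helper, and any non-zero exit leaves the status at 0.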

```diff
@@ -9,7 +9,7 @@
 {% set SURICATABPF = BPFMERGED.suricata %}
 
 {% if SURICATABPF %}
-  {% set SURICATA_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + SURICATABPF|join(" "),cwd='/root') %}
+  {% set SURICATA_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ SURICATABPF|join(" "), cwd='/root') %}
   {% if SURICATA_BPF_CALC['retcode'] == 0 %}
     {% set SURICATA_BPF_STATUS = 1 %}
   {% endif %}
```

@@ -9,7 +9,7 @@
 {% set ZEEKBPF = BPFMERGED.zeek %}
 
 {% if ZEEKBPF %}
-{% set ZEEK_BPF_CALC = salt['cmd.script']('salt://common/tools/sbin/so-bpf-compile', GLOBALS.sensor.interface + ' ' + ZEEKBPF|join(" "),cwd='/root') %}
+{% set ZEEK_BPF_CALC = salt['cmd.run_all']('/usr/sbin/so-bpf-compile ' ~ GLOBALS.sensor.interface ~ ' ' ~ ZEEKBPF|join(" "), cwd='/root') %}
 {% if ZEEK_BPF_CALC['retcode'] == 0 %}
 {% set ZEEK_BPF_STATUS = 1 %}
 {% endif %}

4

salt/ca/dirs.sls

Normal file


@@ -0,0 +1,4 @@
+pki_issued_certs:
+  file.directory:
+    - name: /etc/pki/issued_certs
+    - makedirs: True

@@ -3,10 +3,70 @@
 # https://securityonion.net/license; you may not use this file except in compliance with the
 # Elastic License 2.0.
 
+{% from 'allowed_states.map.jinja' import allowed_states %}
+{% if sls in allowed_states %}
 {% from 'vars/globals.map.jinja' import GLOBALS %}
 
+
 include:
-{% if GLOBALS.is_manager %}
-  - ca.server
+  - ca.dirs
+
+/etc/salt/minion.d/signing_policies.conf:
+  file.managed:
+    - source: salt://ca/files/signing_policies.conf
+
+pki_private_key:
+  x509.private_key_managed:
+    - name: /etc/pki/ca.key
+    - keysize: 4096
+    - passphrase:
+    - backup: True
+    {% if salt['file.file_exists']('/etc/pki/ca.key') -%}
+    - prereq:
+      - x509: /etc/pki/ca.crt
+    {%- endif %}
+
+pki_public_ca_crt:
+  x509.certificate_managed:
+    - name: /etc/pki/ca.crt
+    - signing_private_key: /etc/pki/ca.key
+    - CN: {{ GLOBALS.manager }}
+    - C: US
+    - ST: Utah
+    - L: Salt Lake City
+    - basicConstraints: "critical CA:true"
+    - keyUsage: "critical cRLSign, keyCertSign"
+    - extendedkeyUsage: "serverAuth, clientAuth"
+    - subjectKeyIdentifier: hash
+    - authorityKeyIdentifier: keyid:always, issuer
+    - days_valid: 3650
+    - days_remaining: 0
+    - backup: True
+    - replace: False
+    - require:
+      - sls: ca.dirs
+    - timeout: 30
+    - retry:
+        attempts: 5
+        interval: 30
+
+mine_update_ca_crt:
+  module.run:
+    - mine.update: []
+    - onchanges:
+      - x509: pki_public_ca_crt
+
+cakeyperms:
+  file.managed:
+    - replace: False
+    - name: /etc/pki/ca.key
+    - mode: 640
+    - group: 939
+
+{% else %}
+
+{{sls}}_state_not_allowed:
+  test.fail_without_changes:
+    - name: {{sls}}_state_not_allowed
+
 {% endif %}
-  - ca.trustca

@@ -1,3 +0,0 @@
-{% set CA = {
-  'server': pillar.ca.server
-}%}

@@ -1,35 +1,7 @@
-# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
-# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
-# https://securityonion.net/license; you may not use this file except in compliance with the
-# Elastic License 2.0.
-
-{% set setup_running = salt['cmd.retcode']('pgrep -x so-setup') == 0 %}
-
-{% if setup_running%}
-
-include:
-  - ssl.remove
-
-remove_pki_private_key:
+pki_private_key:
   file.absent:
     - name: /etc/pki/ca.key
 
-remove_pki_public_ca_crt:
+pki_public_ca_crt:
   file.absent:
     - name: /etc/pki/ca.crt
-
-remove_trusttheca:
-  file.absent:
-    - name: /etc/pki/tls/certs/intca.crt
-
-remove_pki_public_ca_crt_symlink:
-  file.absent:
-    - name: /opt/so/saltstack/local/salt/ca/files/ca.crt
-
-{% else %}
-
-so-setup_not_running:
-  test.show_notification:
-    - text: "This state is reserved for usage during so-setup."
-
-{% endif %}

@@ -1,63 +0,0 @@
-# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
-# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
-# https://securityonion.net/license; you may not use this file except in compliance with the
-# Elastic License 2.0.
-
-{% from 'allowed_states.map.jinja' import allowed_states %}
-{% if sls in allowed_states %}
-{% from 'vars/globals.map.jinja' import GLOBALS %}
-
-pki_private_key:
-  x509.private_key_managed:
-    - name: /etc/pki/ca.key
-    - keysize: 4096
-    - passphrase:
-    - backup: True
-    {% if salt['file.file_exists']('/etc/pki/ca.key') -%}
-    - prereq:
-      - x509: /etc/pki/ca.crt
-    {%- endif %}
-
-pki_public_ca_crt:
-  x509.certificate_managed:
-    - name: /etc/pki/ca.crt
-    - signing_private_key: /etc/pki/ca.key
-    - CN: {{ GLOBALS.manager }}
-    - C: US
-    - ST: Utah
-    - L: Salt Lake City
-    - basicConstraints: "critical CA:true"
-    - keyUsage: "critical cRLSign, keyCertSign"
-    - extendedkeyUsage: "serverAuth, clientAuth"
-    - subjectKeyIdentifier: hash
-    - authorityKeyIdentifier: keyid:always, issuer
-    - days_valid: 3650
-    - days_remaining: 7
-    - backup: True
-    - replace: False
-    - timeout: 30
-    - retry:
-        attempts: 5
-        interval: 30
-
-pki_public_ca_crt_symlink:
-  file.symlink:
-    - name: /opt/so/saltstack/local/salt/ca/files/ca.crt
-    - target: /etc/pki/ca.crt
-    - require:
-      - x509: pki_public_ca_crt
-
-cakeyperms:
-  file.managed:
-    - replace: False
-    - name: /etc/pki/ca.key
-    - mode: 640
-    - group: 939
-
-{% else %}
-
-{{sls}}_state_not_allowed:
-  test.fail_without_changes:
-    - name: {{sls}}_state_not_allowed
-
-{% endif %}

12

salt/common/files/daemon.json

Normal file


@@ -0,0 +1,12 @@
+{
+  "registry-mirrors": [
+    "https://:5000"
+  ],
+  "bip": "172.17.0.1/24",
+  "default-address-pools": [
+    {
+      "base": "172.17.0.0/24",
+      "size": 24
+    }
+  ]
+}

@@ -20,6 +20,11 @@ kernel.printk:
   sysctl.present:
     - value: "3 4 1 3"
 
+# Remove variables.txt from /tmp - This is temp
+rmvariablesfile:
+  file.absent:
+    - name: /tmp/variables.txt
+
 # Add socore Group
 socoregroup:
   group.present:

@@ -144,13 +149,35 @@ common_sbin_jinja:
       - so-import-pcap
     {% endif %}
 
+{% if GLOBALS.role == 'so-heavynode' %}
+remove_so-pcap-import_heavynode:
+  file.absent:
+    - name: /usr/sbin/so-pcap-import
+
+remove_so-import-pcap_heavynode:
+  file.absent:
+    - name: /usr/sbin/so-import-pcap
+{% endif %}
+
+{% if not GLOBALS.is_manager%}
+# prior to 2.4.50 these scripts were in common/tools/sbin on the manager because of soup and distributed to non managers
+# these two states remove the scripts from non manager nodes
+remove_soup:
+  file.absent:
+    - name: /usr/sbin/soup
+
+remove_so-firewall:
+  file.absent:
+    - name: /usr/sbin/so-firewall
+{% endif %}
+
 so-status_script:
   file.managed:
     - name: /usr/sbin/so-status
     - source: salt://common/tools/sbin/so-status
     - mode: 755
 
-{% if GLOBALS.is_sensor %}
+{% if GLOBALS.role in GLOBALS.sensor_roles %}
 # Add sensor cleanup
 so-sensor-clean:
   cron.present:

@@ -1,5 +1,52 @@
 # we cannot import GLOBALS from vars/globals.map.jinja in this state since it is called in setup.virt.init
 # since it is early in setup of a new VM, the pillars imported in GLOBALS are not yet defined
+{% if grains.os_family == 'Debian' %}
+commonpkgs:
+  pkg.installed:
+    - skip_suggestions: True
+    - pkgs:
+      - apache2-utils
+      - wget
+      - ntpdate
+      - jq
+      - curl
+      - ca-certificates
+      - software-properties-common
+      - apt-transport-https
+      - openssl
+      - netcat-openbsd
+      - sqlite3
+      - libssl-dev
+      - procps
+      - python3-dateutil
+      - python3-docker
+      - python3-packaging
+      - python3-lxml
+      - git
+      - rsync
+      - vim
+      - tar
+      - unzip
+      - bc
+      {% if grains.oscodename != 'focal' %}
+      - python3-rich
+      {% endif %}
+
+{% if grains.oscodename == 'focal' %}
+# since Ubuntu requires an internet connection we can use pip to install modules
+python3-pip:
+  pkg.installed
+
+python-rich:
+  pip.installed:
+    - name: rich
+    - target: /usr/local/lib/python3.8/dist-packages/
+    - require:
+      - pkg: python3-pip
+{% endif %}
+{% endif %}
+
+{% if grains.os_family == 'RedHat' %}
 
 remove_mariadb:
   pkg.removed:

@@ -37,3 +84,5 @@ commonpkgs:
       - unzip
       - wget
       - yum-utils
+
+{% endif %}

@@ -3,6 +3,8 @@
 # https://securityonion.net/license; you may not use this file except in compliance with the
 # Elastic License 2.0.
 
+{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}
+
 {% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}
 {% if SOC_GLOBAL.global.airgap %}
 {% set UPDATE_DIR='/tmp/soagupdate/SecurityOnion' %}

@@ -11,6 +13,14 @@
 {% endif %}
 {% set SOVERSION = salt['file.read']('/etc/soversion').strip() %}
 
+remove_common_soup:
+  file.absent:
+    - name: /opt/so/saltstack/default/salt/common/tools/sbin/soup
+
+remove_common_so-firewall:
+  file.absent:
+    - name: /opt/so/saltstack/default/salt/common/tools/sbin/so-firewall
+
 # This section is used to put the scripts in place in the Salt file system
 # in case a state run tries to overwrite what we do in the next section.
 copy_so-common_common_tools_sbin:

@@ -110,3 +120,23 @@ copy_bootstrap-salt_sbin:
     - source: {{UPDATE_DIR}}/salt/salt/scripts/bootstrap-salt.sh
     - force: True
     - preserve: True
+
+{# this is added in 2.4.120 to remove salt repo files pointing to saltproject.io to accommodate the move to Broadcom and new bootstrap-salt script #}
+{% if salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1 %}
+{% set saltrepofile = '/etc/yum.repos.d/salt.repo' %}
+{% if grains.os_family == 'Debian' %}
+{% set saltrepofile = '/etc/apt/sources.list.d/salt.list' %}
+{% endif %}
+remove_saltproject_io_repo_manager:
+  file.absent:
+    - name: {{ saltrepofile }}
+{% endif %}
+
+{% else %}
+fix_23_soup_sbin:
+  cmd.run:
+    - name: curl -s -f -o /usr/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup
+fix_23_soup_salt:
+  cmd.run:
+    - name: curl -s -f -o /opt/so/saltstack/default/salt/common/tools/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup
+{% endif %}
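The `salt['pkg.version_cmp'](SOVERSION, '2.4.120') == -1` guard above removes the saltproject.io repo file only on installs older than 2.4.120. Versions have to be compared component by component rather than as plain strings, otherwise 2.4.99 would look newer than 2.4.120. A minimal shell sketch of the same "older than" decision, assuming GNU coreutils `sort -V` is available; `version_lt` is a hypothetical helper name and is not part of Security Onion:

```shell
#!/usr/bin/env bash
# version_lt A B -- hypothetical helper, not part of Security Onion.
# Returns 0 when version A is strictly older than version B. GNU `sort -V`
# compares numeric components numerically, so 2.4.99 sorts before 2.4.120.
version_lt() {
  [[ "$1" != "$2" ]] &&
    [[ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" == "$1" ]]
}

# Illustration only: on a real node SOVERSION would come from /etc/soversion.
SOVERSION="2.4.110"
if version_lt "$SOVERSION" "2.4.120"; then
  echo "older than 2.4.120: the saltproject.io repo file would be removed"
fi
```

Returning an exit status instead of printing -1/0/1 keeps the helper usable directly in `if` tests, which is the only question the guard asks.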

@@ -16,7 +16,7 @@
 
 if [ "$#" -lt 2 ]; then
   cat 1>&2 <<EOF
-$0 compiles a BPF expression to be passed to PCAP to apply a socket filter.
+$0 compiles a BPF expression to be passed to stenotype to apply a socket filter.
 Its first argument is the interface (link type is required) and all other arguments
 are passed to TCPDump.
 
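so-bpf-compile passes the filter expression to tcpdump and returns a compiled socket filter. A minimal sketch of one plausible encoding step, assuming the input is `tcpdump -ddd` output (an instruction count, then one `code jt jf k` line in decimal per instruction, mirroring the fields of the kernel's `struct sock_filter`) and assuming the consumer wants every instruction packed as fixed-width hex. `encode_bpf_hex` is a hypothetical name, not the actual script, and the exact format expected by stenographer's `--filter` option should be confirmed against its documentation:

```shell
#!/usr/bin/env bash
# encode_bpf_hex -- hypothetical helper, not the actual so-bpf-compile code.
# Reads `tcpdump -ddd` output on stdin: the first line is the instruction
# count, followed by one "code jt jf k" line (decimal) per BPF instruction.
# Prints all instructions concatenated as hex: 16-bit code, 8-bit jt,
# 8-bit jf and 32-bit k, i.e. 16 hex digits per instruction.
encode_bpf_hex() {
  local count code jt jf k out=""
  read -r count || return 1   # consume the instruction count line
  while read -r code jt jf k; do
    [[ -n "$code" ]] || continue
    out+=$(printf '%04x%02x%02x%08x' "$code" "$jt" "$jf" "$k")
  done
  printf '%s\n' "$out"
}
```

On a sensor this would be fed by something like `tcpdump -i <interface> -ddd <expression> | encode_bpf_hex`, which needs tcpdump and capture privileges on that interface.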

@@ -10,7 +10,7 @@
 cat << EOF
 
 so-checkin will run a full salt highstate to apply all salt states. If a highstate is already running, this request will be queued and so it may pause for a few minutes before you see any more output. For more information about so-checkin and salt, please see:
-https://securityonion.net/docs/salt
+https://docs.securityonion.net/en/2.4/salt.html
 
 EOF
 

@@ -10,7 +10,7 @@
 # and since this same logic is required during installation, it's included in this file.
 
 DEFAULT_SALT_DIR=/opt/so/saltstack/default
-DOC_BASE_URL="https://securityonion.net/docs"
+DOC_BASE_URL="https://docs.securityonion.net/en/2.4"
 
 if [ -z $NOROOT ]; then
   # Check for prerequisites

@@ -333,8 +333,8 @@ get_elastic_agent_vars() {
 
   if [ -f "$defaultsfile" ]; then
     ELASTIC_AGENT_TARBALL_VERSION=$(egrep " +version: " $defaultsfile | awk -F: '{print $2}' | tr -d '[:space:]')
-    ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
-    ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/3/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
+    ELASTIC_AGENT_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
+    ELASTIC_AGENT_MD5_URL="https://repo.securityonion.net/file/so-repo/prod/2.4/elasticagent/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
     ELASTIC_AGENT_FILE="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.tar.gz"
     ELASTIC_AGENT_MD5="/nsm/elastic-fleet/artifacts/elastic-agent_SO-$ELASTIC_AGENT_TARBALL_VERSION.md5"
     ELASTIC_AGENT_EXPANSION_DIR=/nsm/elastic-fleet/artifacts/beats/elastic-agent

@@ -349,16 +349,21 @@ get_random_value() {
 }
 
 gpg_rpm_import() {
-  if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then
-    local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"
-  else
-    local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"
+  if [[ $is_oracle ]]; then
+    if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then
+      local RPMKEYSLOC="../salt/repo/client/files/$OS/keys"
+    else
+      local RPMKEYSLOC="$UPDATE_DIR/salt/repo/client/files/$OS/keys"
+    fi
+    RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')
+    for RPMKEY in "${RPMKEYS[@]}"; do
+      rpm --import $RPMKEYSLOC/$RPMKEY
+      echo "Imported $RPMKEY"
+    done
+  elif [[ $is_rpm ]]; then
+    echo "Importing the security onion GPG key"
+    rpm --import ../salt/repo/client/files/oracle/keys/securityonion.pub
   fi
-  RPMKEYS=('RPM-GPG-KEY-oracle' 'RPM-GPG-KEY-EPEL-9' 'SALT-PROJECT-GPG-PUBKEY-2023.pub' 'docker.pub' 'securityonion.pub')
-  for RPMKEY in "${RPMKEYS[@]}"; do
-    rpm --import $RPMKEYSLOC/$RPMKEY
-    echo "Imported $RPMKEY"
-  done
 }
 
 header() {

@@ -399,25 +404,6 @@ is_single_node_grid() {
   grep "role: so-" /etc/salt/grains | grep -E "eval|standalone|import" &> /dev/null
 }
 
-initialize_elasticsearch_indices() {
-  local index_names=$1
-  local default_entry=${2:-'{"@timestamp":"0"}'}
-
-  for idx in $index_names; do
-    if ! so-elasticsearch-query "$idx" --fail --retry 3 --retry-delay 30 >/dev/null 2>&1; then
-      echo "Index does not already exist. Initializing $idx index."
-
-      if retry 3 10 "so-elasticsearch-query "$idx/_doc" -d '$default_entry' -XPOST --fail 2>/dev/null" '"successful":1'; then
-        echo "Successfully initialized $idx index."
-      else
-        echo "Failed to initialize $idx index after 3 attempts."
-      fi
-    else
-      echo "Index $idx already exists. No action needed."
-    fi
-  done
-}
-
 lookup_bond_interfaces() {
   cat /proc/net/bonding/bond0 | grep "Slave Interface:" | sed -e "s/Slave Interface: //g"
 }
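The removed initialize_elasticsearch_indices() relied on so-common's `retry MAX_ATTEMPTS DELAY COMMAND EXPECTED` calling convention, for example `retry 3 10 "<query>" '"successful":1'`. A minimal sketch of a helper with that shape, assuming the command string is evaluated with `eval` and that an attempt succeeds when the command exits 0 and, if given, its output contains the expected substring. `retry_sketch` is hypothetical; the real so-common `retry` is not shown in this diff and may behave differently:

```shell
#!/usr/bin/env bash
# retry_sketch MAX_ATTEMPTS DELAY_SECONDS COMMAND [EXPECTED_SUBSTRING]
# Hypothetical helper, not the so-common `retry` implementation.
# Evaluates COMMAND up to MAX_ATTEMPTS times. An attempt succeeds when the
# command exits 0 and, if EXPECTED_SUBSTRING is given, its combined
# stdout/stderr contains it. Sleeps DELAY_SECONDS between failed attempts.
retry_sketch() {
  local max_attempts=$1 delay=$2 cmd=$3 expected=${4:-}
  local attempt output
  for (( attempt = 1; attempt <= max_attempts; attempt++ )); do
    if output=$(eval "$cmd" 2>&1); then
      if [[ -z "$expected" || "$output" == *"$expected"* ]]; then
        printf '%s\n' "$output"
        return 0
      fi
    fi
    if (( attempt < max_attempts )); then
      sleep "$delay"
    fi
  done
  return 1
}
```

Evaluating COMMAND with `eval` preserves the string-based calling convention used in the removed function, but it also means the command string must come from trusted input.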

@@ -568,39 +554,21 @@ run_check_net_err() {
 }
 
 wait_for_salt_minion() {
   local minion="$1"
-  local max_wait="${2:-30}"
-  local interval="${3:-2}"
-  local logfile="${4:-'/dev/stdout'}"
-  local elapsed=0
+  local timeout="${2:-5}"
+  local logfile="${3:-'/dev/stdout'}"
+  retry 60 5 "journalctl -u salt-minion.service | grep 'Minion is ready to receive requests'" >> "$logfile" 2>&1 || fail
+  local attempt=0
+  # each attempt would take about 15 seconds