Mirror of https://github.com/Security-Onion-Solutions/securityonion.git, synced 2025-12-06 17:22:49 +01:00

Compare commits: 2.4.80-202 ... sysusers (1 commit)

| Author | SHA1 | Date |
|---|---|---|
|  | 50ab63162a |  |

.github/.gitleaks.toml (vendored), 3 changed lines

@@ -536,10 +536,11 @@ secretGroup = 4
[allowlist]
description = "global allow lists"
regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''', '''.*:.*StrelkaHexDump.*''', '''.*:.*PLACEHOLDER.*''', '''ssl_.*password''']
regexes = ['''219-09-9999''', '''078-05-1120''', '''(9[0-9]{2}|666)-\d{2}-\d{4}''', '''RPM-GPG-KEY.*''']
paths = [
    '''gitleaks.toml''',
    '''(.*?)(jpg|gif|doc|pdf|bin|svg|socket)$''',
    '''(go.mod|go.sum)$''',

    '''salt/nginx/files/enterprise-attack.json'''
]
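Each `regexes` entry in the global `[allowlist]` suppresses any finding whose text it matches. For example, `(9[0-9]{2}|666)-\d{2}-\d{4}` covers SSN-shaped strings in ranges that are never issued (666 and 900 to 999). The sketch below approximates that check in Python. It assumes gitleaks tests each allowlist pattern as an unanchored search against the detected secret, similar to Go's `regexp.MatchString`. The `is_allowlisted` helper and the sample strings are hypothetical.

```python
import re

# Patterns copied from the shorter `regexes` line in the hunk above.
# Python's `re` accepts them unchanged.
ALLOWLIST_REGEXES = [
    r"219-09-9999",
    r"078-05-1120",
    r"(9[0-9]{2}|666)-\d{2}-\d{4}",
    r"RPM-GPG-KEY.*",
]


def is_allowlisted(secret: str, patterns=ALLOWLIST_REGEXES) -> bool:
    """Return True if any allowlist pattern matches anywhere in `secret`.

    Assumption: gitleaks performs an unanchored search, so re.search is
    used here instead of re.fullmatch.
    """
    return any(re.search(p, secret) for p in patterns)


# Hypothetical findings, not taken from the repository.
for candidate in ["078-05-1120", "666-12-3456", "912-34-5678", "123-45-6789"]:
    print(candidate, is_allowlisted(candidate))
```

The longer `regexes` line also allowlists `StrelkaHexDump`, `PLACEHOLDER`, and `ssl_.*password` matches. You can extend the sketch the same way by appending those patterns to the list.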

.github/DISCUSSION_TEMPLATE/2-4.yml (vendored), 190 changed lines

@@ -1,190 +0,0 @@
body:
  - type: markdown
    attributes:
      value: |
        ⚠️ This category is solely for conversations related to Security Onion 2.4 ⚠️

        If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support
  - type: dropdown
    attributes:
      label: Version
      description: Which version of Security Onion 2.4.x are you asking about?
      options:
        -
        - 2.4 Pre-release (Beta, Release Candidate)
        - 2.4.10
        - 2.4.20
        - 2.4.30
        - 2.4.40
        - 2.4.50
        - 2.4.60
        - 2.4.70
        - 2.4.80
        - 2.4.90
        - 2.4.100
        - Other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Installation Method
      description: How did you install Security Onion?
      options:
        -
        - Security Onion ISO image
        - Network installation on Red Hat derivative like Oracle, Rocky, Alma, etc.
        - Network installation on Ubuntu
        - Network installation on Debian
        - Other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Description
      description: >
        Is this discussion about installation, configuration, upgrading, or other?
      options:
        -
        - installation
        - configuration
        - upgrading
        - other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Installation Type
      description: >
        When you installed, did you choose Import, Eval, Standalone, Distributed, or something else?
      options:
        -
        - Import
        - Eval
        - Standalone
        - Distributed
        - other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Location
      description: >
        Is this deployment in the cloud, on-prem with Internet access, or airgap?
      options:
        -
        - cloud
        - on-prem with Internet access
        - airgap
        - other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Hardware Specs
      description: >
        Does your hardware meet or exceed the minimum requirements for your installation type as shown at https://docs.securityonion.net/en/2.4/hardware.html?
      options:
        -
        - Meets minimum requirements
        - Exceeds minimum requirements
        - Does not meet minimum requirements
        - other (please provide detail below)
    validations:
      required: true
  - type: input
    attributes:
      label: CPU
      description: How many CPU cores do you have?
    validations:
      required: true
  - type: input
    attributes:
      label: RAM
      description: How much RAM do you have?
    validations:
      required: true
  - type: input
    attributes:
      label: Storage for /
      description: How much storage do you have for the / partition?
    validations:
      required: true
  - type: input
    attributes:
      label: Storage for /nsm
      description: How much storage do you have for the /nsm partition?
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Network Traffic Collection
      description: >
        Are you collecting network traffic from a tap or span port?
      options:
        -
        - tap
        - span port
        - other (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Network Traffic Speeds
      description: >
        How much network traffic are you monitoring?
      options:
        -
        - Less than 1Gbps
        - 1Gbps to 10Gbps
        - more than 10Gbps
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Status
      description: >
        Does SOC Grid show all services on all nodes as running OK?
      options:
        -
        - Yes, all services on all nodes are running OK
        - No, one or more services are failed (please provide detail below)
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Salt Status
      description: >
        Do you get any failures when you run "sudo salt-call state.highstate"?
      options:
        -
        - Yes, there are salt failures (please provide detail below)
        - No, there are no failures
    validations:
      required: true
  - type: dropdown
    attributes:
      label: Logs
      description: >
        Are there any additional clues in /opt/so/log/?
      options:
        -
        - Yes, there are additional clues in /opt/so/log/ (please provide detail below)
        - No, there are no additional clues
    validations:
      required: true
  - type: textarea
    attributes:
      label: Detail
      description: Please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and then provide detailed information to help us help you.
      placeholder: |-
        STOP! Before typing, please read our discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 in their entirety!

        If your organization needs more immediate, enterprise grade professional support, with one-on-one virtual meetings and screensharing, contact us via our website: https://securityonion.com/support
    validations:
      required: true
  - type: checkboxes
    attributes:
      label: Guidelines
      options:
        - label: I have read the discussion guidelines at https://github.com/Security-Onion-Solutions/securityonion/discussions/1720 and assert that I have followed the guidelines.
          required: true

.github/workflows/close-threads.yml (vendored), 33 changed lines

@@ -1,33 +0,0 @@
name: 'Close Threads'

on:
  schedule:
    - cron: '50 1 * * *'
  workflow_dispatch:

permissions:
  issues: write
  pull-requests: write
  discussions: write

concurrency:
  group: lock-threads

jobs:
  close-threads:
    if: github.repository_owner == 'security-onion-solutions'
    runs-on: ubuntu-latest
    permissions:
      issues: write
      pull-requests: write
    steps:
      - uses: actions/stale@v5
        with:
          days-before-issue-stale: -1
          days-before-issue-close: 60
          stale-issue-message: "This issue is stale because it has been inactive for an extended period. Stale issues convey that the issue, while important to someone, is not critical enough for the author, or other community members to work on, sponsor, or otherwise shepherd the issue through to a resolution."
          close-issue-message: "This issue was closed because it has been stale for an extended period. It will be automatically locked in 30 days, after which no further commenting will be available."
          days-before-pr-stale: 45
          days-before-pr-close: 60
          stale-pr-message: "This PR is stale because it has been inactive for an extended period. The longer a PR remains stale the more out of date with the main branch it becomes."
          close-pr-message: "This PR was closed because it has been stale for an extended period. It will be automatically locked in 30 days. If there is still a commitment to finishing this PR re-open it before it is locked."
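In `actions/stale`, setting `days-before-*-stale` to `-1` disables automatic stale marking, and `days-before-*-close` counts the days after an item has been marked stale. Under that reading, this workflow closes an issue only if something else has already labelled it stale. A pull request goes stale after 45 idle days and closes 60 days after that. The sketch below is a simplified Python model of one scheduled run. The `next_action` function is hypothetical. Real runs also reset on new activity, honor exempt labels, and process a limited number of items per run.

```python
from typing import Optional

# Values copied from the removed close-threads.yml.
SETTINGS = {
    "issue": {"stale_after": -1, "close_after": 60},  # -1: never mark issues stale automatically
    "pr": {"stale_after": 45, "close_after": 60},
}


def next_action(kind: str, days_inactive: int, days_since_stale: Optional[int]) -> str:
    """Approximate the outcome of one scheduled actions/stale run for a single thread.

    days_inactive: days since the thread was last updated.
    days_since_stale: days since it was marked stale, or None if it is not stale.
    """
    cfg = SETTINGS[kind]  # KeyError for anything other than "issue" or "pr"
    if days_since_stale is not None:
        if cfg["close_after"] >= 0 and days_since_stale >= cfg["close_after"]:
            return "close"
        return "wait"
    if cfg["stale_after"] >= 0 and days_inactive >= cfg["stale_after"]:
        return "mark-stale"
    return "none"


print(next_action("issue", 400, None))  # none: issues are never auto-marked stale
print(next_action("pr", 50, None))      # mark-stale
print(next_action("pr", 120, 61))       # close
```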

.github/workflows/lock-threads.yml (vendored), 26 changed lines

@@ -1,26 +0,0 @@
name: 'Lock Threads'

on:
  schedule:
    - cron: '50 2 * * *'
  workflow_dispatch:

permissions:
  issues: write
  pull-requests: write
  discussions: write

concurrency:
  group: lock-threads

jobs:
  lock-threads:
    if: github.repository_owner == 'security-onion-solutions'
    runs-on: ubuntu-latest
    steps:
      - uses: jertel/lock-threads@main
        with:
          include-discussion-currently-open: true
          discussion-inactive-days: 90
          issue-inactive-days: 30
          pr-inactive-days: 30
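The lock workflow runs one hour after the close workflow (`50 2 * * *` versus `50 1 * * *`; scheduled GitHub Actions use UTC). It locks threads idle for 90 days (discussions) or 30 days (issues and pull requests), which matches the "locked in 30 days" wording in the close messages.

The sketch below models the eligibility check in Python. It assumes `jertel/lock-threads` behaves like the upstream `dessant/lock-threads` action: only closed threads are locked, except that open discussions qualify when `include-discussion-currently-open` is true. Whether the threshold is inclusive is also an assumption. The `should_lock` helper is hypothetical.

```python
# Thresholds copied from the removed lock-threads.yml.
INACTIVE_DAYS = {"discussion": 90, "issue": 30, "pr": 30}
INCLUDE_OPEN_DISCUSSIONS = True  # include-discussion-currently-open: true


def should_lock(kind: str, is_open: bool, days_inactive: int, locked: bool = False) -> bool:
    """Approximate whether a scheduled run would lock one thread.

    Assumptions: only closed threads are locked unless the thread is an
    open discussion and open discussions are included; the threshold is
    treated as inclusive (>=).
    """
    if locked:
        return False
    if is_open and not (kind == "discussion" and INCLUDE_OPEN_DISCUSSIONS):
        return False
    return days_inactive >= INACTIVE_DAYS[kind]


print(should_lock("issue", is_open=False, days_inactive=31))
print(should_lock("issue", is_open=True, days_inactive=365))
print(should_lock("discussion", is_open=True, days_inactive=90))
```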

@@ -1,17 +1,17 @@
### 2.4.80-20240624 ISO image released on 2024/06/25
### 2.4.30-20231228 ISO image released on 2024/01/02

### Download and Verify

2.4.80-20240624 ISO image:
https://download.securityonion.net/file/securityonion/securityonion-2.4.80-20240624.iso
2.4.30-20231228 ISO image:
https://download.securityonion.net/file/securityonion/securityonion-2.4.30-20231228.iso

MD5: 139F9762E926F9CB3C4A9528A3752C31
SHA1: BC6CA2C5F4ABC1A04E83A5CF8FFA6A53B1583CC9
SHA256: 70E90845C84FFA30AD6CF21504634F57C273E7996CA72F7250428DDBAAC5B1BD
MD5: DBD47645CD6FA8358C51D8753046FB54
SHA1: 2494091065434ACB028F71444A5D16E8F8A11EDF
SHA256: 3345AE1DC58AC7F29D82E60D9A36CDF8DE19B7DFF999D8C4F89C7BD36AEE7F1D
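You can check the published SHA256 values locally before running the GPG step. The sketch below uses Python's standard `hashlib` module. The helper names are hypothetical, and the caller supplies the ISO path and expected hash. A matching checksum only shows that the download is intact. Authenticity still comes from the signature check.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in 1 MiB chunks so a multi-gigabyte ISO is never fully loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: Path, published_hex: str) -> bool:
    """Compare case-insensitively: the list above is uppercase, hexdigest() is lowercase."""
    return sha256_of(path) == published_hex.strip().lower()
```

Usage: `matches_published(Path("securityonion-2.4.80-20240624.iso"), "<SHA256 listed for that ISO>")`.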

Signature for ISO image:
https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.80-20240624.iso.sig
https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.30-20231228.iso.sig

Signing key:
https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS
@@ -25,29 +25,27 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

Download the signature file for the ISO:
```
wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.80-20240624.iso.sig
wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.30-20231228.iso.sig
```

Download the ISO image:
```
wget https://download.securityonion.net/file/securityonion/securityonion-2.4.80-20240624.iso
wget https://download.securityonion.net/file/securityonion/securityonion-2.4.30-20231228.iso
```

Verify the downloaded ISO image using the signature file:
```
gpg --verify securityonion-2.4.80-20240624.iso.sig securityonion-2.4.80-20240624.iso
gpg --verify securityonion-2.4.30-20231228.iso.sig securityonion-2.4.30-20231228.iso
```

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:
```
gpg: Signature made Mon 24 Jun 2024 02:42:03 PM EDT using RSA key ID FE507013
gpg: Signature made Thu 28 Dec 2023 10:08:31 AM EST using RSA key ID FE507013
gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"
gpg: WARNING: This key is not certified with a trusted signature!
gpg: There is no indication that the signature belongs to the owner.
Primary key fingerprint: C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013
```
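The short RSA key ID in the `Signature made` lines (`FE507013`) is the last 8 hex digits of the 40-digit primary key fingerprint. Short IDs are easy to collide, so the full fingerprint is the value worth comparing. The two WARNING lines only mean the key has not been certified in your local trust database.

The sketch below parses captured `gpg --verify` output in Python and checks both conditions. `EXPECTED_FINGERPRINT` is copied from the expected output above. The helper names are hypothetical.

```python
import re

# Fingerprint copied from the expected gpg output above.
EXPECTED_FINGERPRINT = "C804 A93D 36BE 0C73 3EA1 9644 7C10 60B7 FE50 7013"


def normalize(fpr: str) -> str:
    """Drop whitespace and uppercase, so spaced and unspaced forms compare equal."""
    return re.sub(r"\s+", "", fpr).upper()


def short_key_id(fpr: str) -> str:
    """A short key ID is the last 8 hex digits (32 bits) of the fingerprint."""
    return normalize(fpr)[-8:]


def check_gpg_output(output: str, expected: str = EXPECTED_FINGERPRINT) -> bool:
    """Require both 'Good signature' and a matching primary key fingerprint."""
    match = re.search(r"Primary key fingerprint:\s*([0-9A-Fa-f ]+)", output)
    if "Good signature" not in output or match is None:
        return False
    return normalize(match.group(1)) == normalize(expected)
```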

If it fails to verify, try downloading again. If it still fails to verify, try downloading from another computer or another network.

Once you've verified the ISO image, you're ready to proceed to our Installation guide:
https://docs.securityonion.net/en/2.4/installation.html

13

README.md

13

README.md

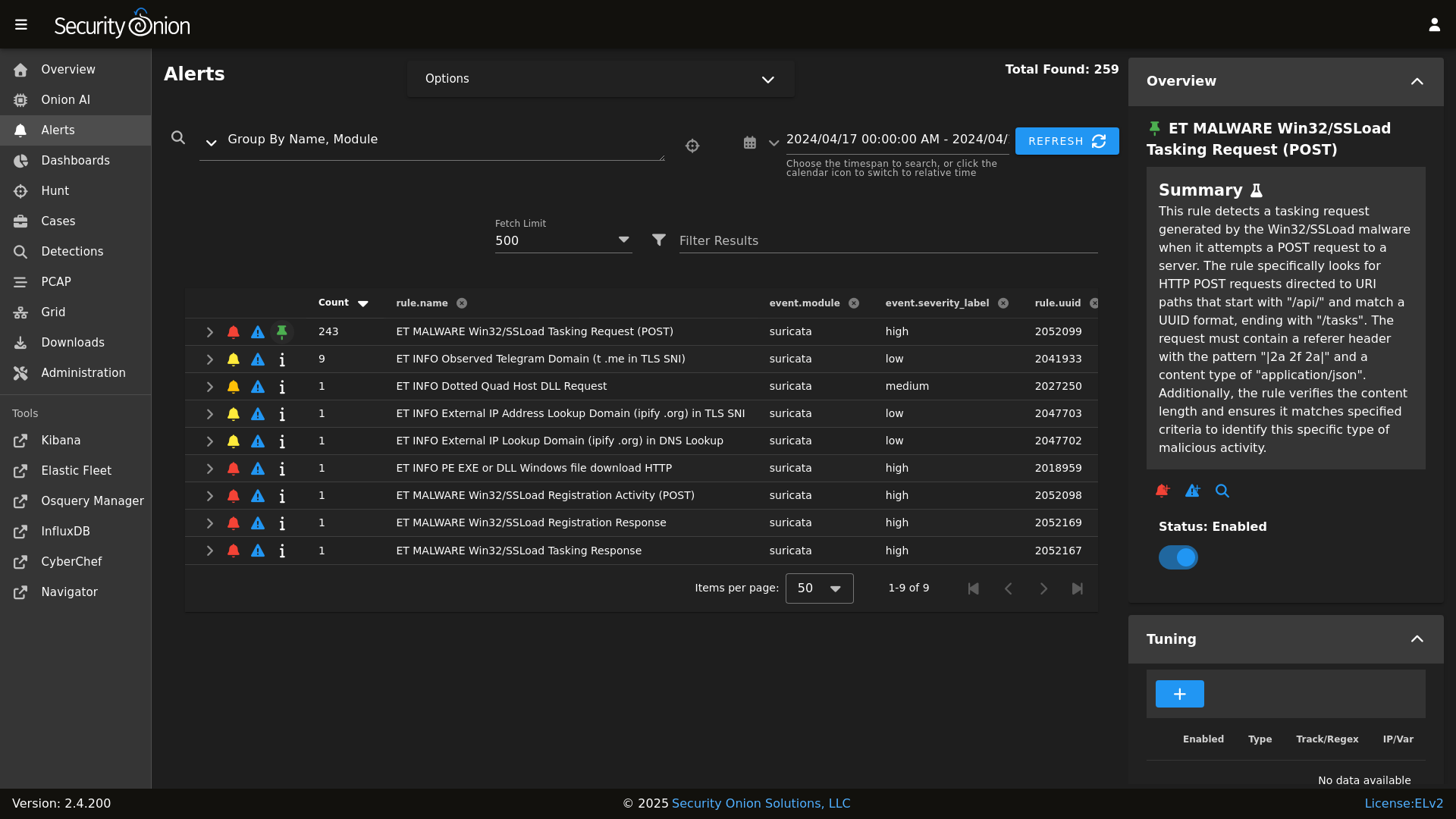

@@ -8,22 +8,19 @@ Alerts

|

||||

|

||||

|

||||

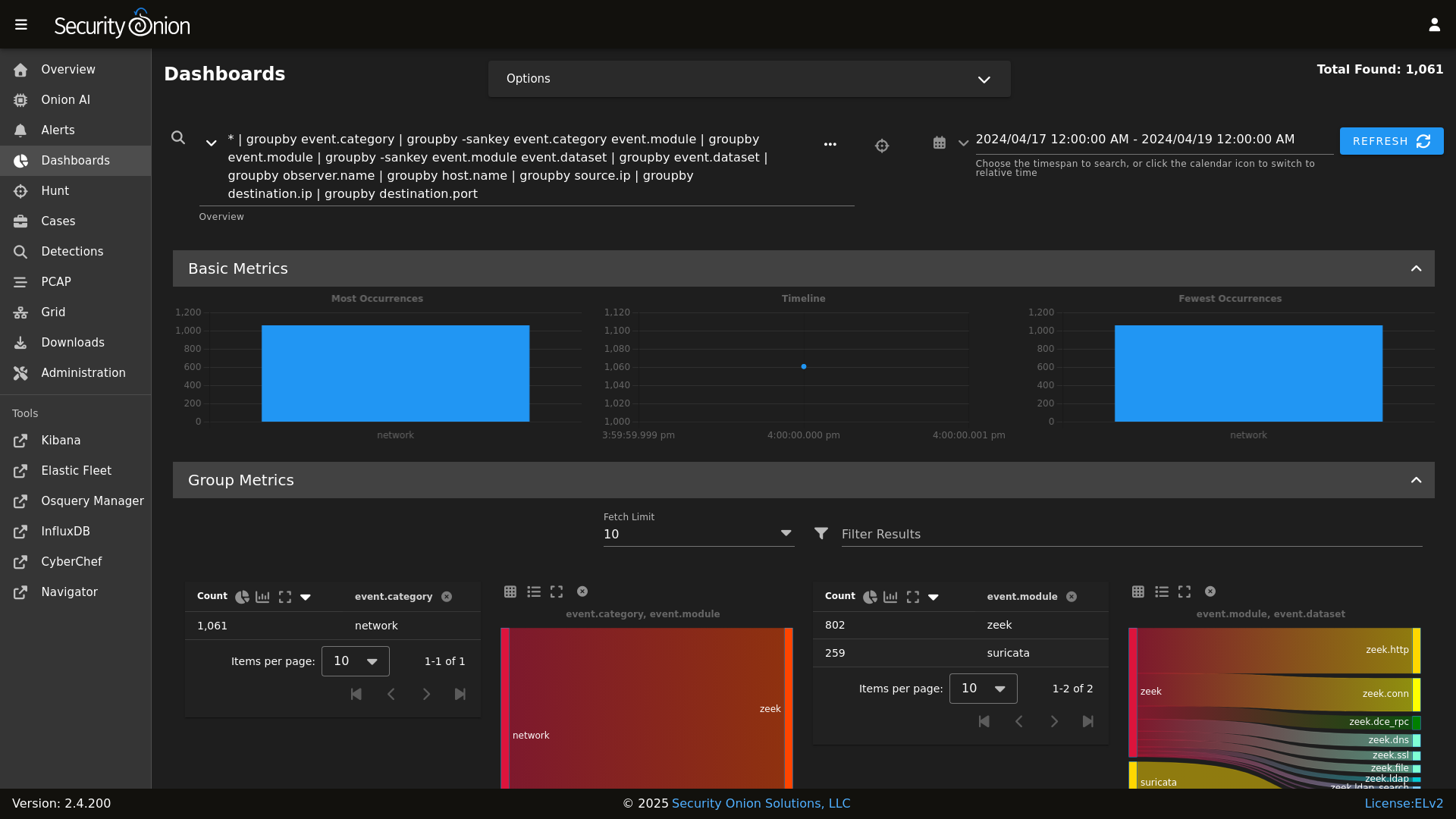

Dashboards

|

||||

|

||||

|

||||

|

||||

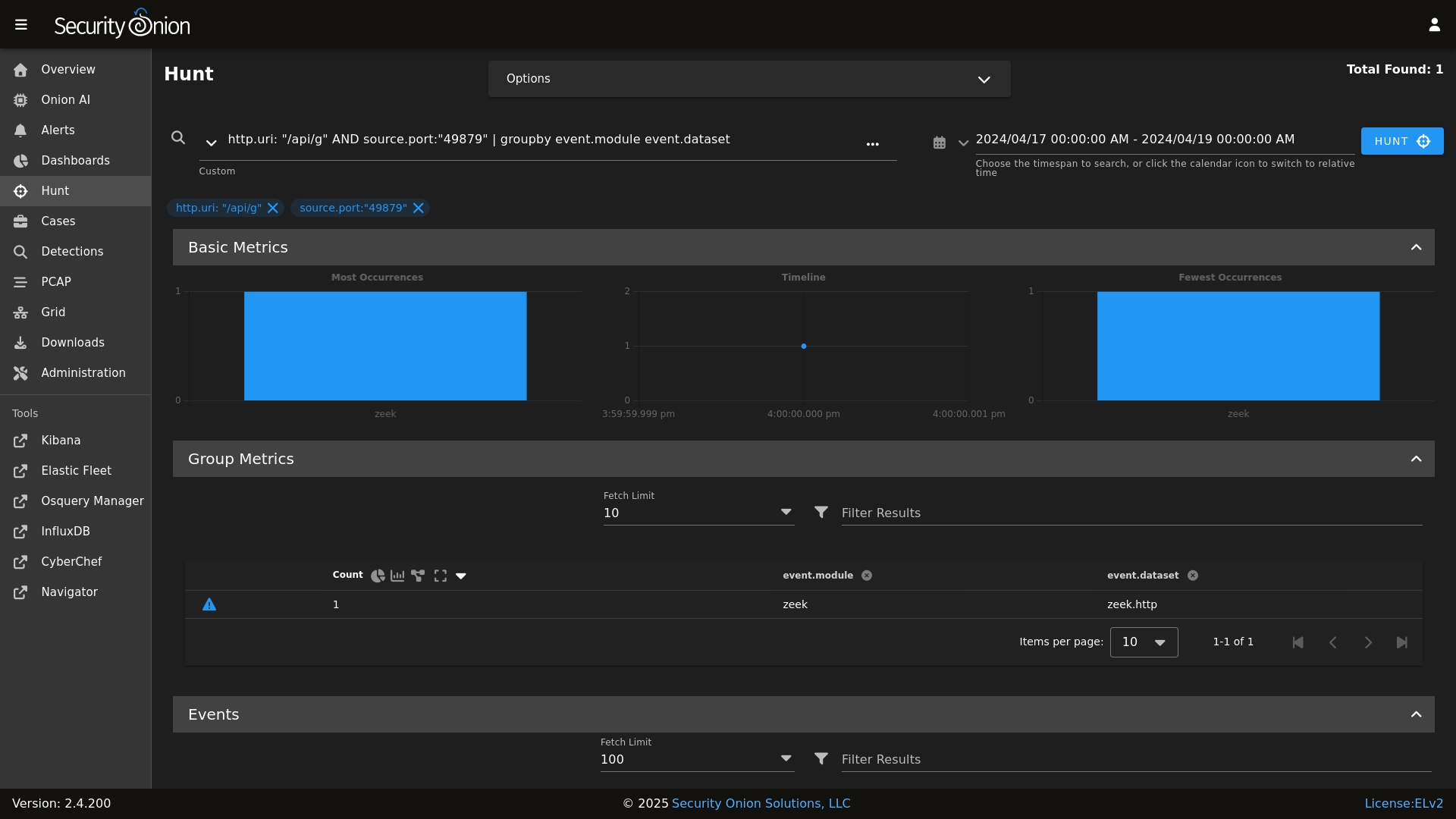

Hunt

|

||||

|

||||

|

||||

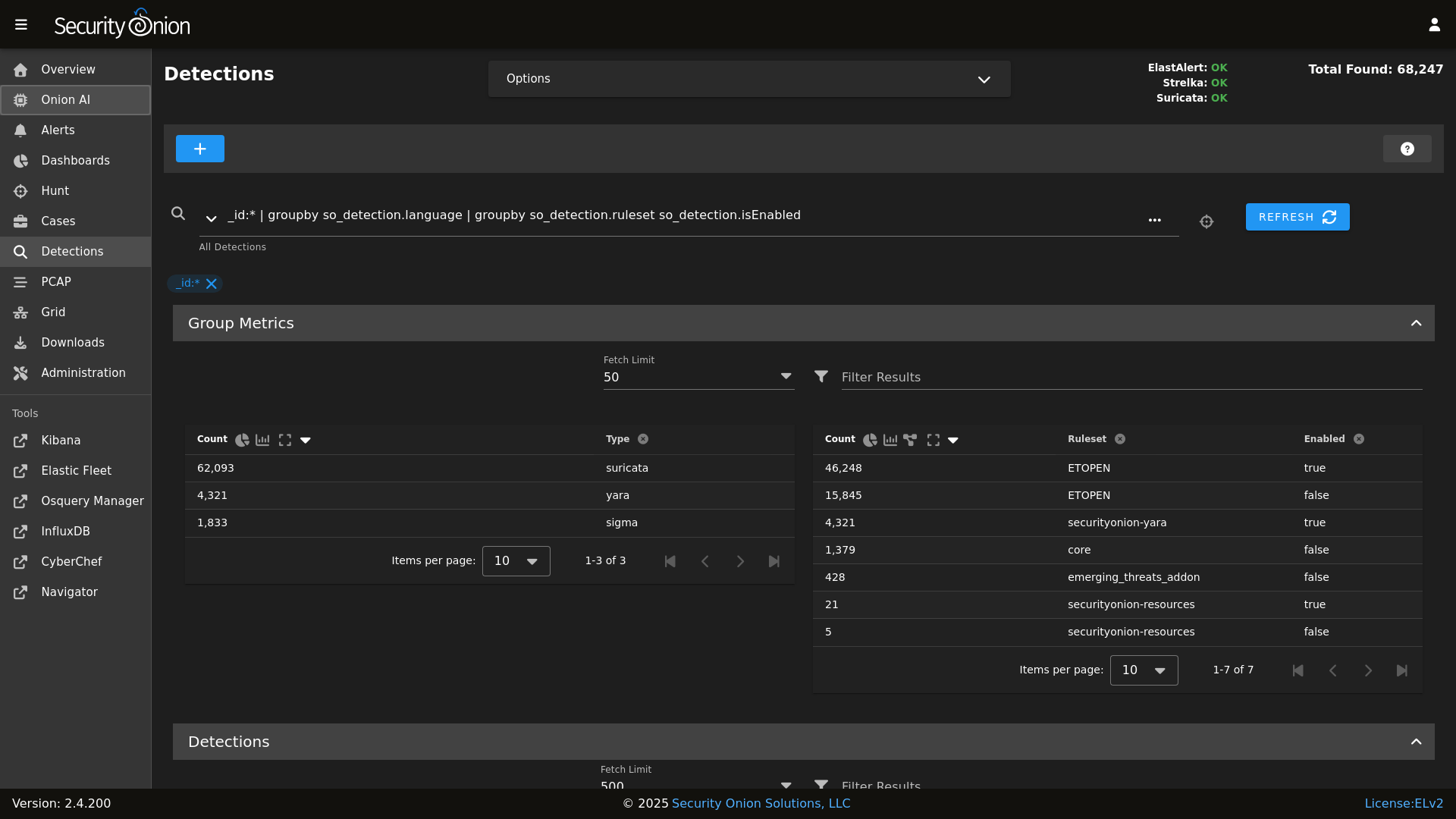

Detections

|

||||

|

||||

|

||||

|

||||

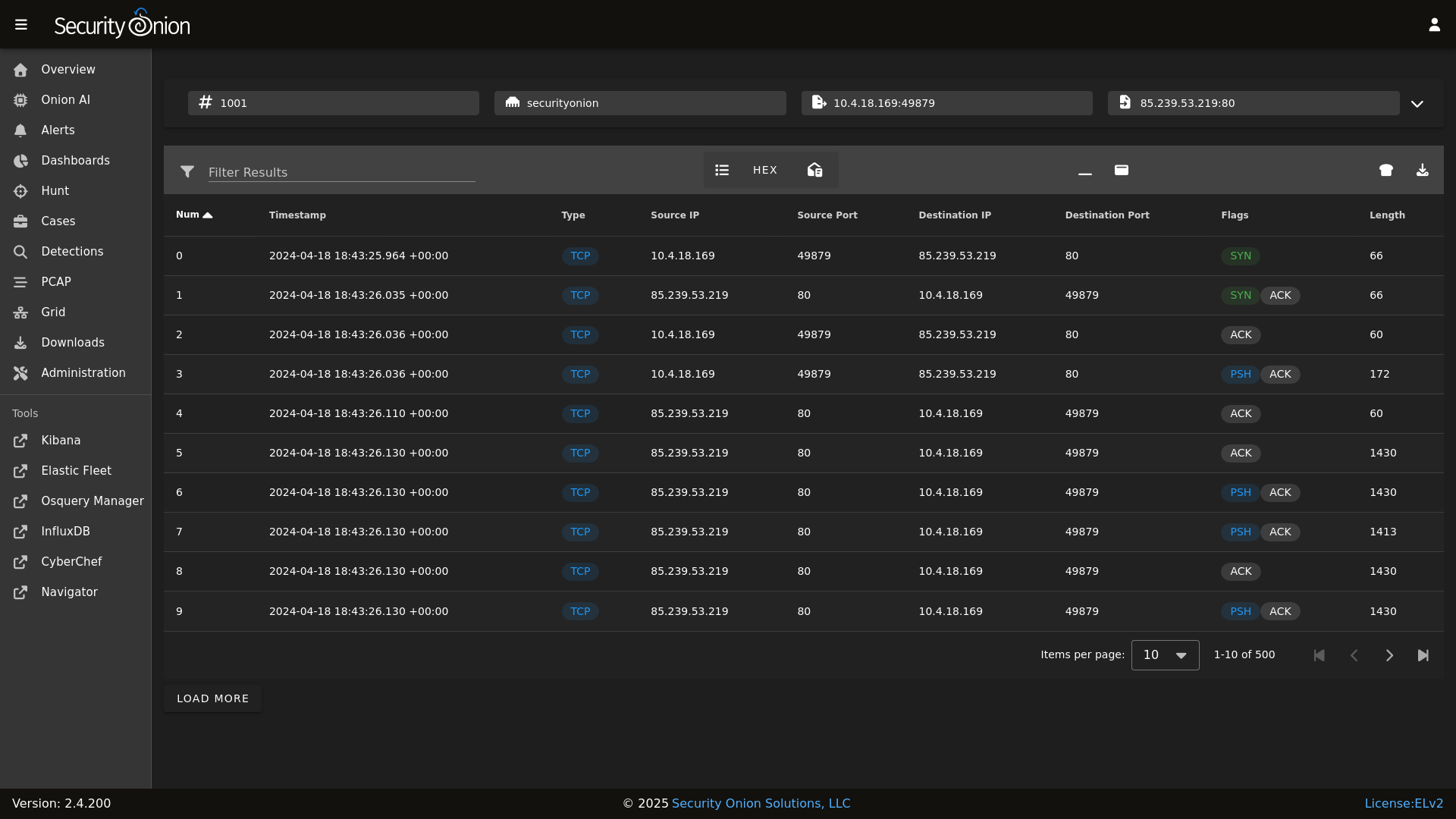

PCAP

|

||||

|

||||

|

||||

|

||||

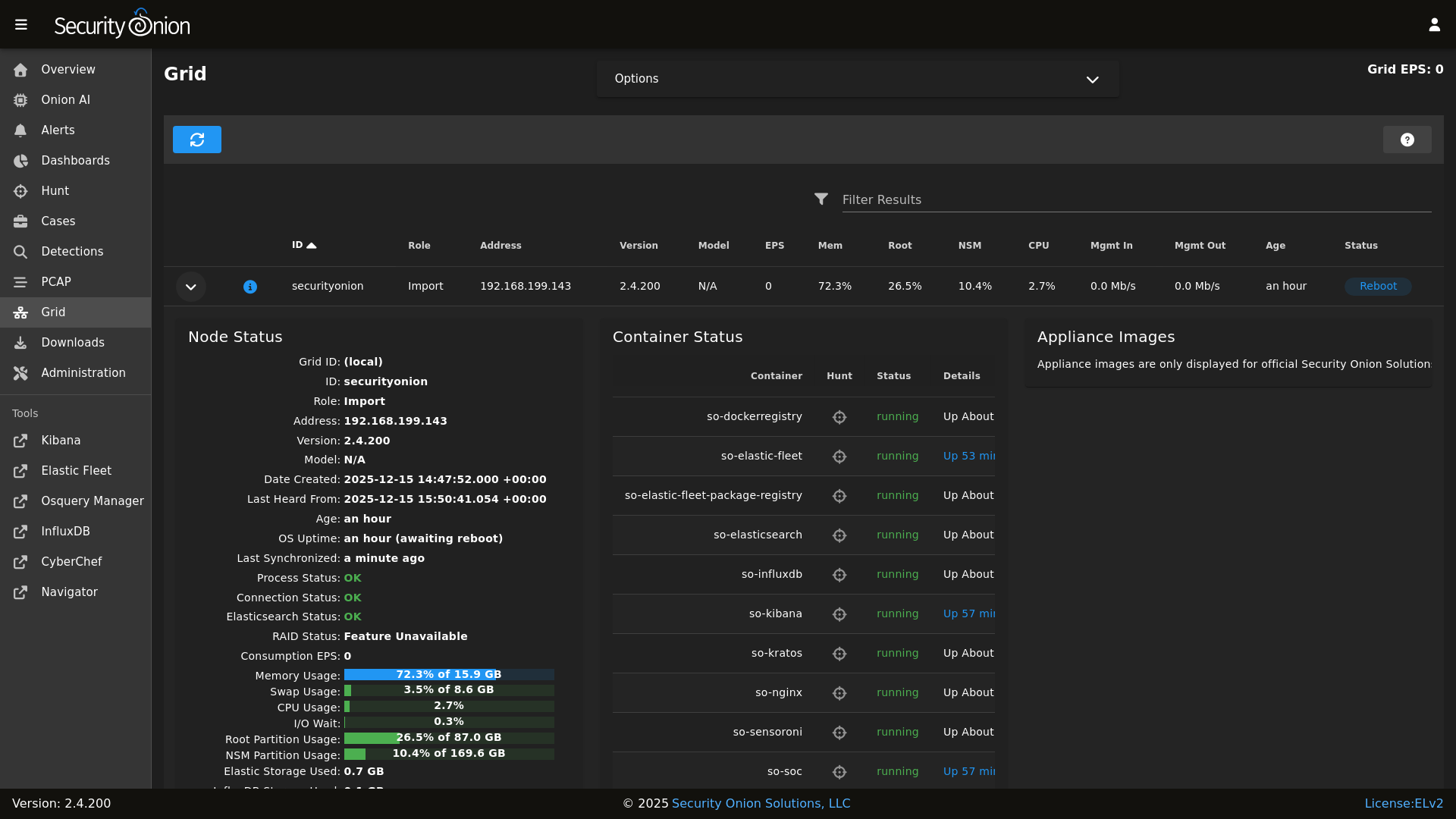

Grid

|

||||

|

||||

|

||||

|

||||

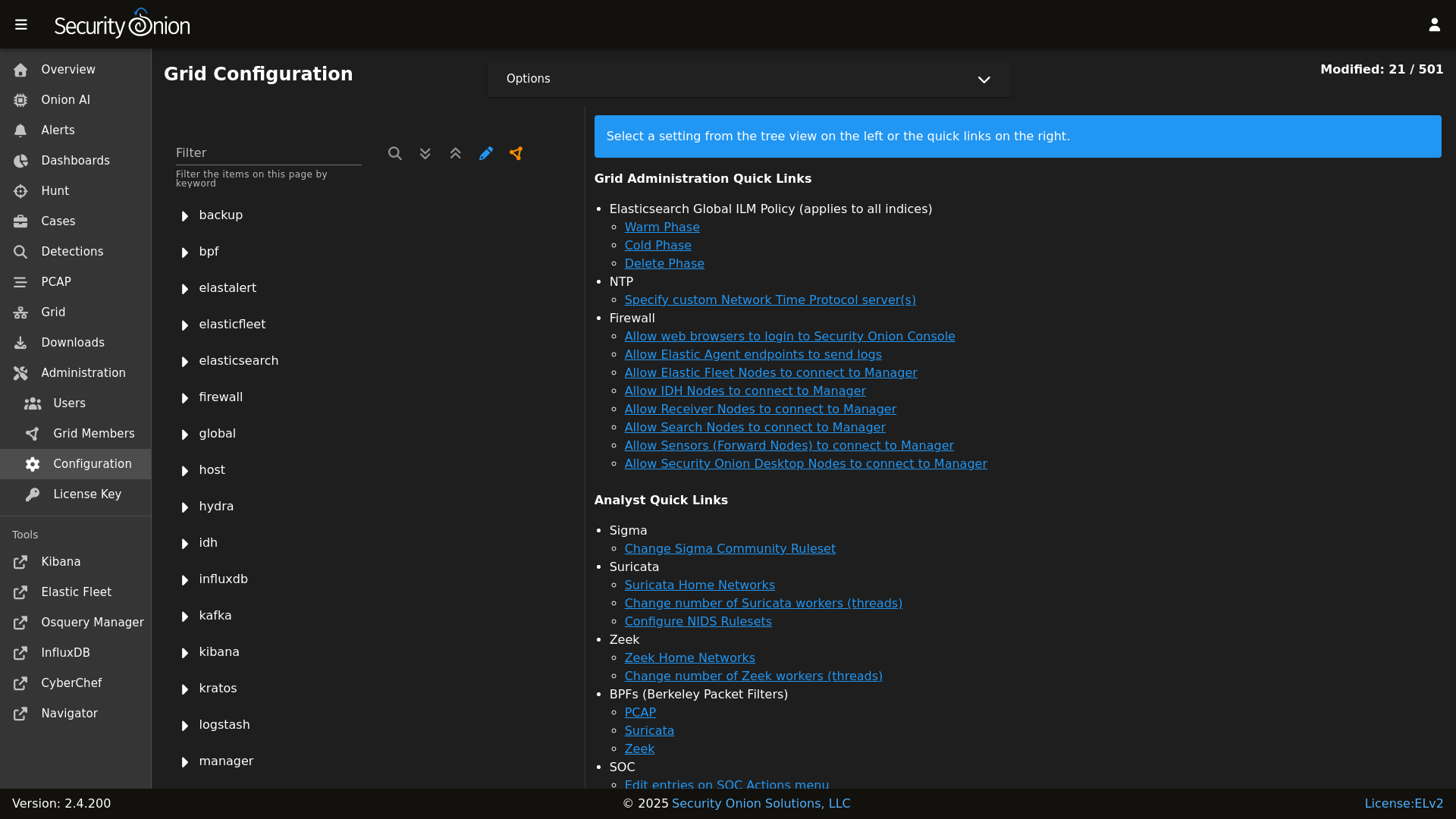

Config

|

||||

|

||||

|

||||

|

||||

### Release Notes

|

||||

|

||||

|

||||

@@ -41,8 +41,7 @@ file_roots:
  base:
    - /opt/so/saltstack/local/salt
    - /opt/so/saltstack/default/salt
    - /nsm/elastic-fleet/artifacts
    - /opt/so/rules/nids

# The master_roots setting configures a master-only copy of the file_roots dictionary,
# used by the state compiler.
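Salt searches the directories listed under an environment in order and serves the first match. Listing `/opt/so/saltstack/local/salt` ahead of `/opt/so/saltstack/default/salt` therefore lets a local copy of a file take precedence over the default one. The sketch below is a small Python model of that lookup. `resolve_salt_url` is hypothetical and ignores Salt's file caching and `master_roots`.

```python
from pathlib import Path
from typing import Optional, Sequence

# Search order copied from the file_roots:base list above.
BASE_ROOTS = [
    "/opt/so/saltstack/local/salt",
    "/opt/so/saltstack/default/salt",
    "/nsm/elastic-fleet/artifacts",
    "/opt/so/rules/nids",
]


def resolve_salt_url(url: str, roots: Sequence[str] = BASE_ROOTS) -> Optional[Path]:
    """Return the file from the first root that contains the path named by a salt:// URL."""
    prefix = "salt://"
    if not url.startswith(prefix):
        raise ValueError(f"not a salt:// URL: {url}")
    relative = url[len(prefix):]
    for root in roots:
        candidate = Path(root) / relative
        if candidate.is_file():
            return candidate  # first match wins; later roots are never consulted
    return None
```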

@@ -1,2 +0,0 @@
kafka:
  nodes:

@@ -16,6 +16,7 @@ base:
    - sensoroni.adv_sensoroni
    - telegraf.soc_telegraf
    - telegraf.adv_telegraf
    - users

  '* and not *_desktop':
    - firewall.soc_firewall
@@ -43,6 +44,8 @@ base:
    - soc.soc_soc
    - soc.adv_soc
    - soc.license
    - soctopus.soc_soctopus
    - soctopus.adv_soctopus
    - kibana.soc_kibana
    - kibana.adv_kibana
    - kratos.soc_kratos
@@ -59,12 +62,10 @@ base:
    - elastalert.adv_elastalert
    - backup.soc_backup
    - backup.adv_backup
    - soctopus.soc_soctopus
    - soctopus.adv_soctopus
    - minions.{{ grains.id }}
    - minions.adv_{{ grains.id }}
    - kafka.nodes
    - kafka.soc_kafka
    - kafka.adv_kafka
    - stig.soc_stig

  '*_sensor':
    - healthcheck.sensor
@@ -80,8 +81,6 @@ base:
    - suricata.adv_suricata
    - minions.{{ grains.id }}
    - minions.adv_{{ grains.id }}
    - stig.soc_stig
    - soc.license

  '*_eval':
    - secrets
@@ -107,6 +106,8 @@ base:
    - soc.soc_soc
    - soc.adv_soc
    - soc.license
    - soctopus.soc_soctopus
    - soctopus.adv_soctopus
    - kibana.soc_kibana
    - kibana.adv_kibana
    - strelka.soc_strelka
@@ -162,6 +163,8 @@ base:
    - soc.soc_soc
    - soc.adv_soc
    - soc.license
    - soctopus.soc_soctopus
    - soctopus.adv_soctopus
    - kibana.soc_kibana
    - kibana.adv_kibana
    - strelka.soc_strelka
@@ -178,10 +181,6 @@ base:
    - suricata.adv_suricata
    - minions.{{ grains.id }}
    - minions.adv_{{ grains.id }}
    - stig.soc_stig
    - kafka.nodes
    - kafka.soc_kafka
    - kafka.adv_kafka

  '*_heavynode':
    - elasticsearch.auth
@@ -224,9 +223,6 @@ base:
    - redis.adv_redis
    - minions.{{ grains.id }}
    - minions.adv_{{ grains.id }}
    - stig.soc_stig
    - soc.license
    - kafka.nodes

  '*_receiver':
    - logstash.nodes
@@ -239,10 +235,6 @@ base:
    - redis.adv_redis
    - minions.{{ grains.id }}
    - minions.adv_{{ grains.id }}
    - kafka.nodes
    - kafka.soc_kafka
    - kafka.adv_kafka
    - soc.license

  '*_import':
    - secrets
@@ -265,6 +257,8 @@ base:
    - soc.soc_soc
    - soc.adv_soc
    - soc.license
    - soctopus.soc_soctopus
    - soctopus.adv_soctopus
    - kibana.soc_kibana
    - kibana.adv_kibana
    - backup.soc_backup
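In the pillar top file, plain targets such as `'*_sensor'` are globs on the minion ID, and `'* and not *_desktop'` uses compound syntax. A minion receives the pillar files of every target it matches. Salt only treats a target as compound when its block declares `- match: compound`.

The sketch below is a simplified Python model that handles just the two target forms shown in the hunks. The helpers and minion IDs are hypothetical.

```python
from fnmatch import fnmatchcase

# Targets copied from the pillar top file hunks above.
TARGETS = ["* and not *_desktop", "*_sensor", "*_eval", "*_heavynode", "*_receiver", "*_import"]


def matches(target: str, minion_id: str) -> bool:
    """Approximate Salt targeting for the two forms used above.

    Plain targets are treated as case-sensitive shell-style globs on the
    minion ID; 'A and not B' is handled explicitly. This is a
    simplification, not a full compound matcher.
    """
    if " and not " in target:
        include, exclude = target.split(" and not ", 1)
        return fnmatchcase(minion_id, include) and not fnmatchcase(minion_id, exclude)
    return fnmatchcase(minion_id, target)


def targets_for(minion_id: str) -> list:
    """Every target whose pillar files this minion would receive, in file order."""
    return [t for t in TARGETS if matches(t, minion_id)]


print(targets_for("so01_sensor"))   # hypothetical minion IDs
print(targets_for("ws01_desktop"))
```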

pillar/users/init.sls (normal file), 2 changed lines

@@ -0,0 +1,2 @@
# users pillar goes in /opt/so/saltstack/local/pillar/users/init.sls
# the users directory may need to be created under /opt/so/saltstack/local/pillar

pillar/users/pillar.example (normal file), 18 changed lines

@@ -0,0 +1,18 @@
users:
  sclapton:
    # required fields
    status: present
    # node_access determines which node types the user can access.
    # this can either be by grains.role or by final part of the minion id after the _
    node_access:
      - standalone
      - searchnode
    # optional fields
    fullname: Stevie Claptoon
    uid: 1001
    gid: 1001
    homephone: does not have a phone
    groups:
      - mygroup1
      - mygroup2
      - wheel # give sudo access

pillar/users/pillar.usage (normal file), 20 changed lines

@@ -0,0 +1,20 @@
users:
  sclapton:
    # required fields
    status: <present | absent>
    # node_access determines which node types the user can access.
    # this can either be by grains.role or by final part of the minion id after the _
    node_access:
      - standalone
      - searchnode
    # optional fields
    fullname: <string>
    uid: <integer>
    gid: <integer>
    roomnumber: <string>
    workphone: <string>
    homephone: <string>
    groups:
      - <string>
      - <string>
      - wheel # give sudo access
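The `node_access` comment says a user entry applies when a node matches either by `grains.role` or by the part of the minion ID after the `_`. The sketch below shows that selection rule in Python. It is hypothetical: the pillar data mirrors `pillar.example`, and the role format (for example `so-standalone`) is taken from the `grains.role` checks elsewhere in this diff. Splitting on the last `_` is an assumption about how "after the _" is meant.

```python
from typing import Dict, List

# Hypothetical pillar data shaped like pillar.example above.
USERS: Dict[str, dict] = {
    "sclapton": {
        "status": "present",
        "node_access": ["standalone", "searchnode"],
        "groups": ["mygroup1", "mygroup2", "wheel"],
    },
}


def node_keys(role: str, minion_id: str) -> set:
    """Names a node can be matched by: its role grain, or the minion ID suffix after the last '_'."""
    return {role, minion_id.rsplit("_", 1)[-1]}


def users_for_node(users: Dict[str, dict], role: str, minion_id: str) -> List[str]:
    """Return the users that should be present on this node, sorted by name."""
    keys = node_keys(role, minion_id)
    return sorted(
        name
        for name, spec in users.items()
        if spec.get("status") == "present" and keys & set(spec.get("node_access", []))
    )


print(users_for_node(USERS, "so-standalone", "so01_standalone"))
print(users_for_node(USERS, "so-sensor", "sens01_sensor"))
```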

pyci.sh, 12 changed lines

@@ -15,16 +15,12 @@ TARGET_DIR=${1:-.}

PATH=$PATH:/usr/local/bin

if [ ! -d .venv ]; then
  python -m venv .venv
fi

source .venv/bin/activate

if ! pip install flake8 pytest pytest-cov pyyaml; then
  echo "Unable to install dependencies."
if ! which pytest &> /dev/null || ! which flake8 &> /dev/null ; then
  echo "Missing dependencies. Consider running the following command:"
  echo " python -m pip install flake8 pytest pytest-cov"
  exit 1
fi

pip install pytest pytest-cov
flake8 "$TARGET_DIR" "--config=${HOME_DIR}/pytest.ini"
python3 -m pytest "--cov-config=${HOME_DIR}/pytest.ini" "--cov=$TARGET_DIR" --doctest-modules --cov-report=term --cov-fail-under=100 "$TARGET_DIR"

@@ -34,6 +34,7 @@
    'suricata',
    'utility',
    'schedule',
    'soctopus',
    'tcpreplay',
    'docker_clean'
  ],
@@ -65,7 +66,6 @@
    'registry',
    'manager',
    'nginx',
    'strelka.manager',
    'soc',
    'kratos',
    'influxdb',
@@ -92,7 +92,6 @@
    'nginx',
    'telegraf',
    'influxdb',
    'strelka.manager',
    'soc',
    'kratos',
    'elasticfleet',
@@ -102,9 +101,8 @@
    'suricata.manager',
    'utility',
    'schedule',
    'docker_clean',
    'stig',
    'kafka'
    'soctopus',
    'docker_clean'
  ],
  'so-managersearch': [
    'salt.master',
@@ -114,7 +112,6 @@
    'nginx',
    'telegraf',
    'influxdb',
    'strelka.manager',
    'soc',
    'kratos',
    'elastic-fleet-package-registry',
@@ -125,9 +122,8 @@
    'suricata.manager',
    'utility',
    'schedule',
    'docker_clean',
    'stig',
    'kafka'
    'soctopus',
    'docker_clean'
  ],
  'so-searchnode': [
    'ssl',
@@ -135,8 +131,7 @@
    'telegraf',
    'firewall',
    'schedule',
    'docker_clean',
    'stig'
    'docker_clean'
  ],
  'so-standalone': [
    'salt.master',
@@ -159,10 +154,9 @@
    'healthcheck',
    'utility',
    'schedule',
    'soctopus',
    'tcpreplay',
    'docker_clean',
    'stig',
    'kafka'
    'docker_clean'
  ],
  'so-sensor': [
    'ssl',
@@ -174,15 +168,13 @@
    'healthcheck',
    'schedule',
    'tcpreplay',
    'docker_clean',
    'stig'
    'docker_clean'
  ],
  'so-fleet': [
    'ssl',
    'telegraf',
    'firewall',
    'logstash',
    'nginx',
    'healthcheck',
    'schedule',
    'elasticfleet',
@@ -193,10 +185,7 @@
    'telegraf',
    'firewall',
    'schedule',
    'docker_clean',
    'kafka',
    'elasticsearch.ca',
    'stig'
    'docker_clean'
  ],
  'so-desktop': [
    'ssl',
@@ -205,6 +194,10 @@
  ],
}, grain='role') %}

{% if grains.role in ['so-eval', 'so-manager', 'so-managersearch', 'so-standalone'] %}
  {% do allowed_states.append('mysql') %}
{% endif %}

{%- if grains.role in ['so-sensor', 'so-eval', 'so-standalone', 'so-heavynode'] %}
  {% do allowed_states.append('zeek') %}
{%- endif %}
@@ -230,6 +223,10 @@
  {% do allowed_states.append('elastalert') %}
{% endif %}

{% if grains.role in ['so-eval', 'so-manager', 'so-standalone', 'so-managersearch'] %}
  {% do allowed_states.append('playbook') %}
{% endif %}

{% if grains.role in ['so-manager', 'so-standalone', 'so-searchnode', 'so-managersearch', 'so-heavynode', 'so-receiver'] %}
  {% do allowed_states.append('logstash') %}
{% endif %}
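The allowed-states list is built in two steps. First, `grains.filter_by` selects the base list for the node's `role` grain. Then each `{% if grains.role in [...] %}` block appends extra states. The sketch below reproduces that flow in Python with a trimmed, hypothetical role map. The conditional role sets are copied from the template. Falling back to an empty list for an unknown role is an assumption of the sketch.

```python
# Trimmed, hypothetical base map; the real map lists many more states per role.
ROLE_STATES = {
    "so-sensor": ["ssl", "telegraf", "firewall", "healthcheck", "schedule", "tcpreplay", "docker_clean"],
    "so-searchnode": ["ssl", "telegraf", "firewall", "schedule", "docker_clean"],
    "so-desktop": ["ssl"],
}

# Conditional additions copied from the {% if grains.role in [...] %} blocks above.
CONDITIONAL = [
    ("mysql", {"so-eval", "so-manager", "so-managersearch", "so-standalone"}),
    ("zeek", {"so-sensor", "so-eval", "so-standalone", "so-heavynode"}),
    ("playbook", {"so-eval", "so-manager", "so-standalone", "so-managersearch"}),
    ("logstash", {"so-manager", "so-standalone", "so-searchnode", "so-managersearch", "so-heavynode", "so-receiver"}),
]


def allowed_states(role: str) -> list:
    """Mimic grains.filter_by(..., grain='role') followed by the conditional appends."""
    states = list(ROLE_STATES.get(role, []))  # copy, so the base map is never mutated
    for state, roles in CONDITIONAL:
        if role in roles:
            states.append(state)
    return states


print(allowed_states("so-sensor"))
print(allowed_states("so-searchnode"))
```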

@@ -1,10 +1,7 @@
{% from 'vars/globals.map.jinja' import GLOBALS %}
{% if GLOBALS.pcap_engine == "TRANSITION" %}
  {% set PCAPBPF = ["ip and host 255.255.255.1 and port 1"] %}
{% else %}
  {% import_yaml 'bpf/defaults.yaml' as BPFDEFAULTS %}
  {% set BPFMERGED = salt['pillar.get']('bpf', BPFDEFAULTS.bpf, merge=True) %}
  {% import 'bpf/macros.jinja' as MACROS %}

  {{ MACROS.remove_comments(BPFMERGED, 'pcap') }}

  {% set PCAPBPF = BPFMERGED.pcap %}
{% endif %}
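The template chooses the PCAP BPF list in one of two ways. While `pcap_engine` is `TRANSITION`, it uses a placeholder filter that appears chosen to match essentially no real traffic. Otherwise it merges pillar values over `bpf/defaults.yaml` and strips comments.

The sketch below expresses that choice in Python. `remove_comments` is a hypothetical stand-in for `MACROS.remove_comments`, whose body is not shown here. The merge assumes a pillar `pcap` key simply replaces the default list, so nested merge semantics are not modelled.

```python
from typing import Dict, List

# Placeholder filter used while the PCAP engine is in its TRANSITION state (copied from above).
TRANSITION_FILTER = ["ip and host 255.255.255.1 and port 1"]


def remove_comments(filters: List[str]) -> List[str]:
    """Hypothetical stand-in for MACROS.remove_comments: drop blank and '#'-prefixed entries."""
    return [f for f in filters if f.strip() and not f.lstrip().startswith("#")]


def pcap_bpf(pcap_engine: str, defaults: Dict[str, List[str]], pillar: Dict[str, List[str]]) -> List[str]:
    """Select the PCAP BPF list the way the template above does.

    Assumption: pillar.get(..., merge=True) lets a pillar key replace the
    matching default key; list merging is not modelled.
    """
    if pcap_engine == "TRANSITION":
        return list(TRANSITION_FILTER)  # copy, so callers cannot mutate the constant
    merged = {**defaults, **pillar}
    return remove_comments(merged.get("pcap", []))
```

The engine name `ANY_OTHER_ENGINE` used in the checks below is a hypothetical placeholder for any non-`TRANSITION` value.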

@@ -1,6 +1,6 @@
bpf:
  pcap:
    description: List of BPF filters to apply to Stenographer.
    description: List of BPF filters to apply to PCAP.
    multiline: True
    forcedType: "[]string"
    helpLink: bpf.html

@@ -1,3 +1,6 @@
mine_functions:
  x509.get_pem_entries: [/etc/pki/ca.crt]

x509_signing_policies:
  filebeat:
    - minions: '*'
@@ -67,17 +70,3 @@ x509_signing_policies:
    - authorityKeyIdentifier: keyid,issuer:always
    - days_valid: 820
    - copypath: /etc/pki/issued_certs/
  kafka:
    - minions: '*'
    - signing_private_key: /etc/pki/ca.key
    - signing_cert: /etc/pki/ca.crt
    - C: US
    - ST: Utah
    - L: Salt Lake City
    - basicConstraints: "critical CA:false"
    - keyUsage: "digitalSignature, keyEncipherment"
    - subjectKeyIdentifier: hash
    - authorityKeyIdentifier: keyid,issuer:always
    - extendedKeyUsage: "serverAuth, clientAuth"
    - days_valid: 820
    - copypath: /etc/pki/issued_certs/
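Both signing policies set `days_valid: 820`, so a certificate signed under them expires 820 days after issuance, roughly two years and three months. The small Python helper below checks that window. The issue date in the example is hypothetical.

```python
from datetime import date, timedelta

DAYS_VALID = 820  # days_valid from the signing policies above


def expiry(issued: date, days_valid: int = DAYS_VALID) -> date:
    """Last day covered by a certificate issued on `issued`."""
    return issued + timedelta(days=days_valid)


def days_remaining(issued: date, today: date, days_valid: int = DAYS_VALID) -> int:
    """Negative once the certificate has expired."""
    return (expiry(issued, days_valid) - today).days


print(expiry(date(2024, 6, 24)))  # 2026-09-22
```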

@@ -4,6 +4,7 @@
{% from 'vars/globals.map.jinja' import GLOBALS %}

include:
  - common.soup_scripts
  - common.packages
{% if GLOBALS.role in GLOBALS.manager_roles %}
  - manager.elasticsearch # needed for elastic_curl_config state
@@ -133,18 +134,6 @@ common_sbin_jinja:
    - file_mode: 755
    - template: jinja

{% if not GLOBALS.is_manager%}
# prior to 2.4.50 these scripts were in common/tools/sbin on the manager because of soup and distributed to non managers
# these two states remove the scripts from non manager nodes
remove_soup:
  file.absent:
    - name: /usr/sbin/soup

remove_so-firewall:
  file.absent:
    - name: /usr/sbin/so-firewall
{% endif %}

so-status_script:
  file.managed:
    - name: /usr/sbin/so-status

@@ -1,117 +1,23 @@
# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.
# Sync some Utilities
soup_scripts:
  file.recurse:
    - name: /usr/sbin
    - user: root
    - group: root
    - file_mode: 755
    - source: salt://common/tools/sbin
    - include_pat:
      - so-common
      - so-image-common

{% if '2.4' in salt['cp.get_file_str']('/etc/soversion') %}

{% import_yaml '/opt/so/saltstack/local/pillar/global/soc_global.sls' as SOC_GLOBAL %}
{% if SOC_GLOBAL.global.airgap %}
{% set UPDATE_DIR='/tmp/soagupdate/SecurityOnion' %}
{% else %}
{% set UPDATE_DIR='/tmp/sogh/securityonion' %}
{% endif %}

remove_common_soup:
  file.absent:
    - name: /opt/so/saltstack/default/salt/common/tools/sbin/soup

remove_common_so-firewall:
  file.absent:
    - name: /opt/so/saltstack/default/salt/common/tools/sbin/so-firewall

# This section is used to put the scripts in place in the Salt file system
# in case a state run tries to overwrite what we do in the next section.
copy_so-common_common_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/common/tools/sbin/so-common
    - source: {{UPDATE_DIR}}/salt/common/tools/sbin/so-common
    - force: True
    - preserve: True

copy_so-image-common_common_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/common/tools/sbin/so-image-common
    - source: {{UPDATE_DIR}}/salt/common/tools/sbin/so-image-common
    - force: True
    - preserve: True

copy_soup_manager_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/manager/tools/sbin/soup
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/soup
    - force: True
    - preserve: True

copy_so-firewall_manager_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/manager/tools/sbin/so-firewall
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-firewall
    - force: True
    - preserve: True

copy_so-yaml_manager_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/manager/tools/sbin/so-yaml.py
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-yaml.py
    - force: True
    - preserve: True

copy_so-repo-sync_manager_tools_sbin:
  file.copy:
    - name: /opt/so/saltstack/default/salt/manager/tools/sbin/so-repo-sync
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-repo-sync
    - preserve: True

# This section is used to put the new script in place so that it can be called during soup.
# It is faster than calling the states that normally manage them to put them in place.
copy_so-common_sbin:
  file.copy:
    - name: /usr/sbin/so-common
    - source: {{UPDATE_DIR}}/salt/common/tools/sbin/so-common
    - force: True
    - preserve: True

copy_so-image-common_sbin:
  file.copy:
    - name: /usr/sbin/so-image-common
    - source: {{UPDATE_DIR}}/salt/common/tools/sbin/so-image-common
    - force: True
    - preserve: True

copy_soup_sbin:
  file.copy:
    - name: /usr/sbin/soup
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/soup
    - force: True
    - preserve: True

copy_so-firewall_sbin:
  file.copy:
    - name: /usr/sbin/so-firewall
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-firewall
    - force: True
    - preserve: True

copy_so-yaml_sbin:
  file.copy:
    - name: /usr/sbin/so-yaml.py
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-yaml.py
    - force: True
    - preserve: True

copy_so-repo-sync_sbin:
  file.copy:
    - name: /usr/sbin/so-repo-sync
    - source: {{UPDATE_DIR}}/salt/manager/tools/sbin/so-repo-sync
    - force: True
    - preserve: True

{% else %}
fix_23_soup_sbin:
  cmd.run:
    - name: curl -s -f -o /usr/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup
fix_23_soup_salt:
  cmd.run:
    - name: curl -s -f -o /opt/so/saltstack/defalt/salt/common/tools/sbin/soup https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.3/main/salt/common/tools/sbin/soup
{% endif %}
soup_manager_scripts:
  file.recurse:
    - name: /usr/sbin
    - user: root
    - group: root
    - file_mode: 755
    - source: salt://manager/tools/sbin
    - include_pat:
      - so-firewall
      - so-repo-sync
      - soup
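The Jinja branch above picks a staging directory from the `airgap` flag, then copies each script with `force: True` and `preserve: True`. The sketch below follows the same flow in Python. `update_dir` and `copy_script` are hypothetical helpers. `shutil.copy2` only approximates Salt's `preserve` option: it overwrites the destination and keeps mode bits and timestamps, but it does not copy ownership.

```python
import shutil
from pathlib import Path

# Staging directories copied from the Jinja branch above.
AIRGAP_UPDATE_DIR = "/tmp/soagupdate/SecurityOnion"
ONLINE_UPDATE_DIR = "/tmp/sogh/securityonion"


def update_dir(airgap: bool) -> str:
    """Mirror the {% if SOC_GLOBAL.global.airgap %} branch."""
    return AIRGAP_UPDATE_DIR if airgap else ONLINE_UPDATE_DIR


def copy_script(update_root: Path, relative_source: str, dest: Path) -> Path:
    """Rough analogue of a file.copy state with force: True and preserve: True.

    Overwrites an existing destination and keeps the source's permission
    bits and timestamps; file ownership is not copied.
    """
    dest.parent.mkdir(parents=True, exist_ok=True)
    return Path(shutil.copy2(update_root / relative_source, dest))
```

For example, `copy_script(Path(update_dir(False)), "salt/common/tools/sbin/so-common", Path("/usr/sbin/so-common"))` mirrors the `copy_so-common_sbin` state.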

@@ -5,13 +5,8 @@
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.

. /usr/sbin/so-common

cat << EOF

so-checkin will run a full salt highstate to apply all salt states. If a highstate is already running, this request will be queued and so it may pause for a few minutes before you see any more output. For more information about so-checkin and salt, please see:
https://docs.securityonion.net/en/2.4/salt.html

EOF

salt-call state.highstate -l info queue=True
salt-call state.highstate -l info

@@ -31,11 +31,6 @@ if ! echo "$PATH" | grep -q "/usr/sbin"; then
  export PATH="$PATH:/usr/sbin"
fi

# See if a proxy is set. If so use it.
if [ -f /etc/profile.d/so-proxy.sh ]; then
  . /etc/profile.d/so-proxy.sh
fi

# Define a banner to separate sections
banner="========================================================================="

@@ -184,21 +179,6 @@ copy_new_files() {
  cd /tmp
}

create_local_directories() {
  echo "Creating local pillar and salt directories if needed"
  PILLARSALTDIR=$1
  local_salt_dir="/opt/so/saltstack/local"
  for i in "pillar" "salt"; do
    for d in $(find $PILLARSALTDIR/$i -type d); do
      suffixdir=${d//$PILLARSALTDIR/}
      if [ ! -d "$local_salt_dir/$suffixdir" ]; then
        mkdir -pv $local_salt_dir$suffixdir
      fi
    done
    chown -R socore:socore $local_salt_dir/$i
  done
}

disable_fastestmirror() {
  sed -i 's/enabled=1/enabled=0/' /etc/yum/pluginconf.d/fastestmirror.conf
}
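create_local_directories walks every directory under `$PILLARSALTDIR/pillar` and `$PILLARSALTDIR/salt` and recreates any that are missing under `/opt/so/saltstack/local`. The following is a minimal Python sketch of the same mirroring logic. It assumes both roots are passed in as arguments. The name `mirror_dirs` is hypothetical, and the `chown -R socore:socore` step is left out.

```python
import os
from pathlib import Path


def mirror_dirs(src_root, dst_root, subdirs=("pillar", "salt")):
    """Recreate under dst_root every directory found under src_root/<subdir>.

    Hypothetical helper; returns the relative paths that were newly created.
    Ownership changes (chown) are intentionally not handled here.
    """
    created = []
    for sub in subdirs:
        base = Path(src_root) / sub
        if not base.is_dir():
            continue
        # os.walk yields base itself first, then every nested directory
        for dirpath, _dirnames, _filenames in os.walk(base):
            rel = Path(dirpath).relative_to(src_root)  # e.g. pillar/soc
            target = Path(dst_root) / rel
            if not target.is_dir():
                target.mkdir(parents=True, exist_ok=True)
                created.append(str(rel))
    return created
```

The shell loop derives the suffix with `${d//$PILLARSALTDIR/}`, and that substitution removes every occurrence of the prefix, not only the leading one. The sketch uses `Path.relative_to` instead, which strips the prefix exactly once.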

@@ -268,14 +248,6 @@ get_random_value() {
  head -c 5000 /dev/urandom | tr -dc 'a-zA-Z0-9' | fold -w $length | head -n 1
}

get_agent_count() {
  if [ -f /opt/so/log/agents/agentstatus.log ]; then
    AGENTCOUNT=$(cat /opt/so/log/agents/agentstatus.log | grep -wF active | awk '{print $2}')
  else
    AGENTCOUNT=0
  fi
}

gpg_rpm_import() {
  if [[ $is_oracle ]]; then
    if [[ "$WHATWOULDYOUSAYYAHDOHERE" == "setup" ]]; then
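get_random_value reads 5000 bytes from /dev/urandom and keeps only the `[a-zA-Z0-9]` characters. Those are 62 of the 256 possible byte values, so roughly 1,200 characters survive on average. It then prints the first `length` of them, which means a very large `length` could come back short. The following is a minimal Python sketch of the same idea using the standard `secrets` module, which always returns exactly `length` characters. The name `random_value` is hypothetical.

```python
import secrets
import string

# Same character set as tr -dc 'a-zA-Z0-9'
ALPHABET = string.ascii_letters + string.digits


def random_value(length):
    """Return `length` characters drawn uniformly from [a-zA-Z0-9] (hypothetical helper)."""
    if length <= 0:
        raise ValueError("length must be positive")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))
```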

@@ -357,7 +329,7 @@ lookup_salt_value() {
    local=""
  fi

  salt-call -lerror --no-color ${kind}.get ${group}${key} --out=${output} ${local}
  salt-call --no-color ${kind}.get ${group}${key} --out=${output} ${local}
}

lookup_pillar() {

@@ -394,13 +366,6 @@ is_feature_enabled() {
  return 1
}

read_feat() {
  if [ -f /opt/so/log/sostatus/lks_enabled ]; then
    lic_id=$(cat /opt/so/saltstack/local/pillar/soc/license.sls | grep license_id: | awk '{print $2}')
    echo "$lic_id/$(cat /opt/so/log/sostatus/lks_enabled)/$(cat /opt/so/log/sostatus/fps_enabled)"
  fi
}

require_manager() {
  if is_manager_node; then
    echo "This is a manager, so we can proceed."

@@ -594,15 +559,6 @@ status () {
printf "\n=========================================================================\n$(date) | $1\n=========================================================================\n"
}

sync_options() {
  set_version
  set_os
  salt_minion_count
  get_agent_count

  echo "$VERSION/$OS/$(uname -r)/$MINIONCOUNT:$AGENTCOUNT/$(read_feat)"
}

systemctl_func() {
  local action=$1
  local echo_action=$1

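sync_options joins the version, OS, kernel release, minion count, agent count and the output of read_feat into one slash-delimited string. get_agent_count supplies the agent count by taking the second field of any agentstatus.log line containing the whole word `active`. read_feat prints either `license_id/lks/fps` or nothing.

The following Python sketch reproduces that assembly. It assumes the log line has the shape `active <count>`; the real log format is not shown in this diff. The helper names are hypothetical.

```python
import re


def agent_count(log_text):
    """Mimic get_agent_count: `grep -wF active | awk '{print $2}'`, or "0" when the log is absent."""
    if log_text is None:  # agentstatus.log does not exist
        return "0"
    counts = []
    for line in log_text.splitlines():
        # grep -w: "active" must be a whole word, so "inactive" is skipped
        if re.search(r"\bactive\b", line):
            fields = line.split()
            counts.append(fields[1] if len(fields) > 1 else "")
    return "\n".join(counts)


def sync_string(version, os_name, kernel, minion_count, agent_count_value, feat=""):
    """Assemble the string that sync_options echoes (hypothetical helper)."""
    return f"{version}/{os_name}/{kernel}/{minion_count}:{agent_count_value}/{feat}"
```

When read_feat prints nothing, the assembled string ends with a trailing slash, exactly as the shell version does.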

@@ -8,7 +8,6 @@
import sys
import subprocess
import os
import json

sys.path.append('/opt/saltstack/salt/lib/python3.10/site-packages/')
import salt.config

@@ -37,67 +36,17 @@ def check_needs_restarted():
    with open(outfile, 'w') as f:
        f.write(val)

def check_for_fps():
    feat = 'fps'
    feat_full = feat.replace('ps', 'ips')
    fps = 0
    try:
        result = subprocess.run([feat_full + '-mode-setup', '--is-enabled'], stdout=subprocess.PIPE)
        if result.returncode == 0:
            fps = 1
    except FileNotFoundError:
        fn = '/proc/sys/crypto/' + feat_full + '_enabled'
        try:
            with open(fn, 'r') as f:
                contents = f.read()
                if '1' in contents:
                    fps = 1
        except:
            # Unknown, so assume 0
            fps = 0

    with open('/opt/so/log/sostatus/fps_enabled', 'w') as f:
        f.write(str(fps))

def check_for_lks():
    feat = 'Lks'
    feat_full = feat.replace('ks', 'uks')
    lks = 0
    result = subprocess.run(['lsblk', '-p', '-J'], check=True, stdout=subprocess.PIPE)
    data = json.loads(result.stdout)
    for device in data['blockdevices']:
        if 'children' in device:
            for gc in device['children']:
                if 'children' in gc:
                    try:
                        arg = 'is' + feat_full
                        result = subprocess.run(['cryptsetup', arg, gc['name']], stdout=subprocess.PIPE)
                        if result.returncode == 0:
                            lks = 1
                    except FileNotFoundError:
                        for ggc in gc['children']:
                            if 'crypt' in ggc['type']:
                                lks = 1
                    if lks:
                        break
    with open('/opt/so/log/sostatus/lks_enabled', 'w') as f:
        f.write(str(lks))

def fail(msg):
    print(msg, file=sys.stderr)
    sys.exit(1)


def main():
    proc = subprocess.run(['id', '-u'], stdout=subprocess.PIPE, encoding="utf-8")
    if proc.stdout.strip() != "0":
        fail("This program must be run as root")
    # Ensure that umask is 0022 so that files created by this script have rw-r--r-- permissions
    org_umask = os.umask(0o022)

    check_needs_restarted()
    check_for_fps()
    check_for_lks()
    # Restore umask to whatever value was set before this script was run. SXIG sets to 0077 rw---
    os.umask(org_umask)

if __name__ == "__main__":
    main()

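check_for_lks walks the `lsblk -p -J` tree from each device to its children (`gc`) and then to their children (`ggc`). For each child that has children of its own, it asks `cryptsetup isLuks`. If cryptsetup is not installed, it falls back to checking whether any of that child's children reports a `type` containing `crypt`.

That fallback branch can be isolated as a pure function over the JSON text, which makes it easy to test without real disks. The following is a sketch. The function name and the sample trees in the tests are invented for illustration.

```python
import json


def has_crypt_grandchild(lsblk_json):
    """Fallback branch of check_for_lks: device -> child -> grandchild with a 'crypt' type."""
    data = json.loads(lsblk_json)
    for device in data.get("blockdevices", []):
        for child in device.get("children", []):
            for grandchild in child.get("children", []):
                if "crypt" in grandchild.get("type", ""):
                    return True
    return False
```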

@@ -50,14 +50,16 @@ container_list() {
      "so-idh"
      "so-idstools"
      "so-influxdb"
      "so-kafka"
      "so-kibana"
      "so-kratos"
      "so-logstash"
      "so-mysql"
      "so-nginx"
      "so-pcaptools"
      "so-playbook"
      "so-redis"
      "so-soc"
      "so-soctopus"
      "so-steno"
      "so-strelka-backend"
      "so-strelka-filestream"


@@ -49,6 +49,10 @@ if [ "$CONTINUE" == "y" ]; then
    sed -i "s|$OLD_IP|$NEW_IP|g" $file
  done

  echo "Granting MySQL root user permissions on $NEW_IP"
  docker exec -i so-mysql mysql --user=root --password=$(lookup_pillar_secret 'mysql') -e "GRANT ALL PRIVILEGES ON *.* TO 'root'@'$NEW_IP' IDENTIFIED BY '$(lookup_pillar_secret 'mysql')' WITH GRANT OPTION;" &> /dev/null
  echo "Removing MySQL root user from $OLD_IP"
  docker exec -i so-mysql mysql --user=root --password=$(lookup_pillar_secret 'mysql') -e "DROP USER 'root'@'$OLD_IP';" &> /dev/null
  echo "Updating Kibana dashboards"
  salt-call state.apply kibana.so_savedobjects_defaults -l info queue=True


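The loop above rewrites each file with `sed -i "s|$OLD_IP|$NEW_IP|g"`, which treats `$OLD_IP` as a regular expression. As a result, every `.` matches any character, and `10.0.0.1` also matches the start of `10.0.0.10`. If stricter matching is wanted, one option is to escape the old address and reject matches that run into further digits. The sketch below shows that alternative; it is not what the script does, and `replace_ip` is a hypothetical helper.

```python
import re


def replace_ip(text, old_ip, new_ip):
    """Replace only whole occurrences of old_ip, with dots matched literally."""
    # Not preceded by a digit or dot; not followed by a digit or by ".<digit>"
    pattern = r"(?<![\d.])" + re.escape(old_ip) + r"(?!\.?\d)"
    # A function replacement avoids any backslash interpretation in new_ip
    return re.sub(pattern, lambda _m: new_ip, text)
```

The trailing lookahead still allows an address at the end of a sentence, such as `host: 10.0.0.1.`, to be replaced.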

@@ -122,7 +122,6 @@ if [[ $EXCLUDE_STARTUP_ERRORS == 'Y' ]]; then
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|error while communicating" # Elasticsearch MS -> HN "sensor" temporarily unavailable
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|tls handshake error" # Docker registry container when a new node comes online
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Unable to get license information" # Logstash trying to contact ES before it's ready
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|process already finished" # Telegraf script finished just as the auto kill timeout kicked in
fi

if [[ $EXCLUDE_FALSE_POSITIVE_ERRORS == 'Y' ]]; then

@@ -155,11 +154,15 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|fail\\(error\\)" # redis/python generic stack line, rely on other lines for actual error
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|urlerror" # idstools connection timeout
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|timeouterror" # idstools connection timeout
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|forbidden" # playbook
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|_ml" # Elastic ML errors
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|context canceled" # elastic agent during shutdown
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|exited with code 128" # soctopus errors during forced restart by highstate
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|geoip databases update" # airgap can't update GeoIP DB
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|filenotfounderror" # bug in 2.4.10 filecheck salt state caused duplicate cronjobs
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|salt-minion-check" # bug in early 2.4 placed a Jinja script in a non-Jinja salt dir, causing cron output errors
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|generating elastalert config" # playbook expected error
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|activerecord" # playbook expected error
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|monitoring.metrics" # known issue with elastic agent casting the field incorrectly if an integer value shows up before a float
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|repodownload.conf" # known issue with reposync on pre-2.4.20
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|missing versions record" # stenographer corrupt index

@@ -198,13 +201,7 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|req.LocalMeta.host.ip" # known issue in GH
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|sendmail" # zeek
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|stats.log"
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Unknown column" # Elastalert errors from running EQL queries
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|parsing_exception" # Elastalert EQL parsing issue. Temp.
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|context deadline exceeded"
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Error running query:" # Specific issues with detection rules
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|detect-parse" # Suricata encountering a malformed rule
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|integrity check failed" # Detections: Exclude false positive due to automated testing
  EXCLUDED_ERRORS="$EXCLUDED_ERRORS|syncErrors" # Detections: Not an actual error
fi

RESULT=0
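Each `EXCLUDED_ERRORS=` line appends one more alternative, so the variable grows into a single extended regular expression. How the script finally applies it falls outside this excerpt. The lowercase entries such as `urlerror` and `filenotfounderror` suggest a case-insensitive match, and the sketch below assumes one. It shows the same accumulate-then-filter pattern in Python, with hypothetical helper names.

```python
import re


def build_exclusions(patterns):
    """Join alternatives into one case-insensitive regex (assumes -i style matching)."""
    if not patterns:
        # An empty pattern would match every line, so use one that never matches
        return re.compile(r"(?!)")
    return re.compile("|".join(patterns), re.IGNORECASE)


def filter_excluded(lines, exclusions):
    """Keep only the lines that match none of the excluded patterns."""
    return [line for line in lines if not exclusions.search(line)]
```

Building the pattern from a list also avoids a stray leading `|`, which the string-append approach would produce if the variable started out empty. An empty alternative matches every line.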

@@ -213,9 +210,7 @@ RESULT=0
CONTAINER_IDS=$(docker ps -q)
exclude_container so-kibana # kibana error logs are too verbose with large varieties of errors most of which are temporary
exclude_container so-idstools # ignore due to known issues and noisy logging
exclude_container so-playbook # Playbook is removed as of 2.4.70, disregard output in stopped containers
exclude_container so-mysql # MySQL is removed as of 2.4.70, disregard output in stopped containers
exclude_container so-soctopus # Soctopus is removed as of 2.4.70, disregard output in stopped containers
exclude_container so-playbook # ignore due to several playbook known issues

for container_id in $CONTAINER_IDS; do
  container_name=$(docker ps --format json | jq ". | select(.ID==\"$container_id\")|.Names")

@@ -233,14 +228,10 @@ exclude_log "kibana.log" # kibana error logs are too verbose with large variet
exclude_log "spool" # disregard zeek analyze logs as this is data specific
exclude_log "import" # disregard imported test data that contains error strings
exclude_log "update.log" # ignore playbook updates due to several known issues
exclude_log "playbook.log" # ignore due to several playbook known issues
exclude_log "cron-cluster-delete.log" # ignore since Curator has been removed
exclude_log "cron-close.log" # ignore since Curator has been removed
exclude_log "curator.log" # ignore since Curator has been removed
exclude_log "playbook.log" # Playbook is removed as of 2.4.70, logs may still be on disk
exclude_log "mysqld.log" # MySQL is removed as of 2.4.70, logs may still be on disk
exclude_log "soctopus.log" # Soctopus is removed as of 2.4.70, logs may still be on disk
exclude_log "agentstatus.log" # ignore this log since it tracks agents in error state
exclude_log "detections_runtime-status_yara.log" # temporarily ignore this log until Detections is more stable

for log_file in $(cat /tmp/log_check_files); do
  status "Checking log file $log_file"


@@ -1,98 +0,0 @@
#!/bin/bash
#
# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one
# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at
# https://securityonion.net/license; you may not use this file except in compliance with the
# Elastic License 2.0.

set -e
# This script is intended for cases where the ISO install did not properly set up TPM decryption of LUKS partitions at boot.
if [ -z $NOROOT ]; then
  # Check for prerequisites
  if [ "$(id -u)" -ne 0 ]; then
    echo "This script must be run using sudo!"
    exit 1
  fi
fi
ENROLL_TPM=N

while [[ $# -gt 0 ]]; do
  case $1 in
    --enroll-tpm)
      ENROLL_TPM=Y
      ;;
    *)
      echo "Usage: $0 [options]"
      echo ""
      echo "where options are:"
      echo " --enroll-tpm for when TPM enrollment was not selected during ISO install."
      echo ""
      exit 1
      ;;
  esac
  shift
done

check_for_tpm() {
  echo -n "Checking for TPM: "
  if [ -d /sys/class/tpm/tpm0 ]; then
    echo -e "tpm0 found."
    TPM="yes"
    # Check if TPM is using sha1 or sha256
    if [ -d /sys/class/tpm/tpm0/pcr-sha1 ]; then
      echo -e "TPM is using sha1.\n"
      TPM_PCR="sha1"
    elif [ -d /sys/class/tpm/tpm0/pcr-sha256 ]; then
      echo -e "TPM is using sha256.\n"
      TPM_PCR="sha256"
    fi
  else
    echo -e "No TPM found.\n"
    exit 1
  fi
}

check_for_luks_partitions() {
  echo "Checking for LUKS partitions"
  for part in $(lsblk -o NAME,FSTYPE -ln | grep crypto_LUKS | awk '{print $1}'); do
    echo "Found LUKS partition: $part"
    LUKS_PARTITIONS+=("$part")
  done
  if [ ${#LUKS_PARTITIONS[@]} -eq 0 ]; then
    echo -e "No LUKS partitions found.\n"
    exit 1
  fi
  echo ""
}

enroll_tpm_in_luks() {
  read -s -p "Enter the LUKS passphrase used during ISO install: " LUKS_PASSPHRASE
  echo ""
  for part in "${LUKS_PARTITIONS[@]}"; do
    echo "Enrolling TPM for LUKS device: /dev/$part"
    if [ "$TPM_PCR" == "sha1" ]; then
      clevis luks bind -d /dev/$part tpm2 '{"pcr_bank":"sha1","pcr_ids":"7"}' <<< $LUKS_PASSPHRASE
    elif [ "$TPM_PCR" == "sha256" ]; then
      clevis luks bind -d /dev/$part tpm2 '{"pcr_bank":"sha256","pcr_ids":"7"}' <<< $LUKS_PASSPHRASE
    fi
  done
}

regenerate_tpm_enrollment_token() {
  for part in "${LUKS_PARTITIONS[@]}"; do
    clevis luks regen -d /dev/$part -s 1 -q
  done
}

check_for_tpm
check_for_luks_partitions

if [[ $ENROLL_TPM == "Y" ]]; then
  enroll_tpm_in_luks
else
  regenerate_tpm_enrollment_token
fi

echo "Running dracut"
dracut -fv
echo -e "\nTPM configuration complete. Reboot the system to verify the TPM is correctly decrypting the LUKS partition(s) at boot.\n"
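check_for_luks_partitions keeps the NAME column of every `lsblk -o NAME,FSTYPE -ln` line that contains `crypto_LUKS`. enroll_tpm_in_luks then passes clevis a tpm2 pin config that binds to PCR 7 on the detected bank. Both steps can be sketched as pure Python functions. The function names are hypothetical, and unlike the grep, the sketch compares the FSTYPE column exactly instead of matching anywhere in the line.

```python
import json


def luks_partitions(lsblk_output):
    """Device names whose FSTYPE column is crypto_LUKS in `lsblk -o NAME,FSTYPE -ln` output."""
    parts = []
    for line in lsblk_output.splitlines():
        fields = line.split()
        if len(fields) >= 2 and fields[1] == "crypto_LUKS":
            parts.append(fields[0])
    return parts


def clevis_tpm2_config(pcr_bank, pcr_ids="7"):
    """Build the compact tpm2 pin JSON the script passes to `clevis luks bind`."""
    if pcr_bank not in ("sha1", "sha256"):
        raise ValueError("unsupported PCR bank: " + pcr_bank)
    return json.dumps({"pcr_bank": pcr_bank, "pcr_ids": pcr_ids}, separators=(",", ":"))
```

The shell script behaves differently when the TPM exposes neither `pcr-sha1` nor `pcr-sha256`. In that case `TPM_PCR` stays unset, and enroll_tpm_in_luks silently binds nothing. The sketch raises an error for an unsupported bank instead.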

@@ -10,7 +10,7 @@
. /usr/sbin/so-common
. /usr/sbin/so-image-common

REPLAYIFACE=${REPLAYIFACE:-"{{salt['pillar.get']('sensor:interface', '')}}"}
REPLAYIFACE=${REPLAYIFACE:-$(lookup_pillar interface sensor)}
REPLAYSPEED=${REPLAYSPEED:-10}

mkdir -p /opt/so/samples

@@ -89,7 +89,6 @@ function suricata() {
    -v ${LOG_PATH}:/var/log/suricata/:rw \
    -v ${NSM_PATH}/:/nsm/:rw \
    -v "$PCAP:/input.pcap:ro" \
    -v /dev/null:/nsm/suripcap:rw \
    -v /opt/so/conf/suricata/bpf:/etc/suricata/bpf:ro \
    {{ MANAGER }}:5000/{{ IMAGEREPO }}/so-suricata:{{ VERSION }} \
    --runmode single -k none -r /input.pcap > $LOG_PATH/console.log 2>&1

@@ -248,7 +247,7 @@ fi
START_OLDEST_SLASH=$(echo $START_OLDEST | sed -e 's/-/%2F/g')
END_NEWEST_SLASH=$(echo $END_NEWEST | sed -e 's/-/%2F/g')
if [[ $VALID_PCAPS_COUNT -gt 0 ]] || [[ $SKIPPED_PCAPS_COUNT -gt 0 ]]; then
URL="https://{{ URLBASE }}/#/dashboards?q=$HASH_FILTERS%20%7C%20groupby%20event.module*%20%7C%20groupby%20-sankey%20event.module*%20event.dataset%20%7C%20groupby%20event.dataset%20%7C%20groupby%20source.ip%20%7C%20groupby%20destination.ip%20%7C%20groupby%20destination.port%20%7C%20groupby%20network.protocol%20%7C%20groupby%20rule.name%20rule.category%20event.severity_label%20%7C%20groupby%20dns.query.name%20%7C%20groupby%20file.mime_type%20%7C%20groupby%20http.virtual_host%20http.uri%20%7C%20groupby%20notice.note%20notice.message%20notice.sub_message%20%7C%20groupby%20ssl.server_name%20%7C%20groupby%20source_geo.organization_name%20source.geo.country_name%20%7C%20groupby%20destination_geo.organization_name%20destination.geo.country_name&t=${START_OLDEST_SLASH}%2000%3A00%3A00%20AM%20-%20${END_NEWEST_SLASH}%2000%3A00%3A00%20AM&z=UTC"
URL="https://{{ URLBASE }}/#/dashboards?q=$HASH_FILTERS%20%7C%20groupby%20-sankey%20event.dataset%20event.category%2a%20%7C%20groupby%20-pie%20event.category%20%7C%20groupby%20-bar%20event.module%20%7C%20groupby%20event.dataset%20%7C%20groupby%20event.module%20%7C%20groupby%20event.category%20%7C%20groupby%20observer.name%20%7C%20groupby%20source.ip%20%7C%20groupby%20destination.ip%20%7C%20groupby%20destination.port&t=${START_OLDEST_SLASH}%2000%3A00%3A00%20AM%20-%20${END_NEWEST_SLASH}%2000%3A00%3A00%20AM&z=UTC"

  status "Import complete!"
  status

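The two `sed -e 's/-/%2F/g'` calls replace every hyphen with `%2F`, the percent-encoding of `/`. As a result, a hyphenated date appears as a slash-separated date inside the dashboard URL's `t=` parameter.

The sketch below reproduces that transformation with `urllib.parse.quote`. It assumes `START_OLDEST` and `END_NEWEST` hold dates such as `2024-01-15`; their exact format is not shown in this diff. The helper names are hypothetical.

```python
from urllib.parse import quote


def dashboard_date(date_str):
    """Turn 'YYYY-MM-DD' into 'YYYY%2FMM%2FDD', matching the sed expression."""
    return quote(date_str.replace("-", "/"), safe="")


def time_range(start, end):
    """Rebuild the t= value: '<start> 00:00:00 AM - <end> 00:00:00 AM', percent-encoded."""
    return (f"{dashboard_date(start)}%2000%3A00%3A00%20AM%20-%20"
            f"{dashboard_date(end)}%2000%3A00%3A00%20AM")
```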

@@ -334,7 +334,6 @@ desktop_packages:
      - pulseaudio-libs
      - pulseaudio-libs-glib2
      - pulseaudio-utils
      - putty
      - sane-airscan
      - sane-backends
      - sane-backends-drivers-cameras


@@ -67,6 +67,13 @@ docker:
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-mysql':
      final_octet: 30
      port_bindings:
        - 0.0.0.0:3306:3306
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-nginx':
      final_octet: 31
      port_bindings:

@@ -77,10 +84,10 @@ docker:
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-nginx-fleet-node':
      final_octet: 31
    'so-playbook':
      final_octet: 32
      port_bindings:
        - 8443:8443
        - 0.0.0.0:3000:3000
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []

@@ -104,6 +111,13 @@ docker:
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-soctopus':
      final_octet: 35
      port_bindings:
        - 0.0.0.0:7000:7000
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-strelka-backend':
      final_octet: 36
      custom_bind_mounts: []

@@ -180,19 +194,8 @@ docker:
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
      ulimits:
        - memlock=524288000
    'so-zeek':
      final_octet: 99
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []
    'so-kafka':
      final_octet: 88
      port_bindings:
        - 0.0.0.0:9092:9092
        - 0.0.0.0:9093:9093
        - 0.0.0.0:8778:8778
      custom_bind_mounts: []
      extra_hosts: []
      extra_env: []

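The `port_bindings` entries follow Docker's publish syntax, `[host_ip:]host_port:container_port`. The two-part form `8443:8443` omits the address, and Docker by default then publishes the port on all host interfaces. This diff does not show how the Salt states turn these strings into container options. The parser below is therefore only a hypothetical sketch; it handles IPv4 addresses only and assumes an optional `/proto` suffix.

```python
from typing import NamedTuple, Optional


class PortBinding(NamedTuple):
    host_ip: Optional[str]
    host_port: int
    container_port: int
    protocol: str


def parse_port_binding(spec):
    """Parse 'ip:host:container[/proto]' or 'host:container[/proto]' (IPv4 only)."""
    spec, _, proto = spec.partition("/")
    parts = spec.split(":")
    if len(parts) == 3:
        ip, host, cont = parts
    elif len(parts) == 2:
        ip, (host, cont) = None, parts
    else:
        raise ValueError("unsupported port binding: " + spec)
    return PortBinding(ip, int(host), int(cont), proto or "tcp")
```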

@@ -20,30 +20,30 @@ dockergroup:
dockerheldpackages:
  pkg.installed:
    - pkgs:
      - containerd.io: 1.6.33-1
      - docker-ce: 5:26.1.4-1~debian.12~bookworm
      - docker-ce-cli: 5:26.1.4-1~debian.12~bookworm
      - docker-ce-rootless-extras: 5:26.1.4-1~debian.12~bookworm
      - containerd.io: 1.6.21-1
      - docker-ce: 5:24.0.3-1~debian.12~bookworm
      - docker-ce-cli: 5:24.0.3-1~debian.12~bookworm
      - docker-ce-rootless-extras: 5:24.0.3-1~debian.12~bookworm
    - hold: True
    - update_holds: True
{% elif grains.oscodename == 'jammy' %}
dockerheldpackages:
  pkg.installed:
    - pkgs:
      - containerd.io: 1.6.33-1
      - docker-ce: 5:26.1.4-1~ubuntu.22.04~jammy
      - docker-ce-cli: 5:26.1.4-1~ubuntu.22.04~jammy
      - docker-ce-rootless-extras: 5:26.1.4-1~ubuntu.22.04~jammy
      - containerd.io: 1.6.21-1
      - docker-ce: 5:24.0.2-1~ubuntu.22.04~jammy
      - docker-ce-cli: 5:24.0.2-1~ubuntu.22.04~jammy
      - docker-ce-rootless-extras: 5:24.0.2-1~ubuntu.22.04~jammy
    - hold: True
    - update_holds: True
{% else %}
dockerheldpackages:
  pkg.installed:
    - pkgs:
      - containerd.io: 1.6.33-1
      - docker-ce: 5:26.1.4-1~ubuntu.20.04~focal
      - docker-ce-cli: 5:26.1.4-1~ubuntu.20.04~focal
      - docker-ce-rootless-extras: 5:26.1.4-1~ubuntu.20.04~focal
      - containerd.io: 1.4.9-1
      - docker-ce: 5:20.10.8~3-0~ubuntu-focal
      - docker-ce-cli: 5:20.10.5~3-0~ubuntu-focal
      - docker-ce-rootless-extras: 5:20.10.5~3-0~ubuntu-focal
    - hold: True
    - update_holds: True
{% endif %}
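The pinned Debian and Ubuntu versions use Debian's `[epoch:]upstream_version[-debian_revision]` format. The `5:` prefix is the epoch, which is compared before anything else, and the packaging revision is whatever follows the last hyphen. The following is a small sketch of that split rule. It is illustrative only, the names are hypothetical, and it implements no version comparison.

```python
from typing import NamedTuple


class DebVersion(NamedTuple):
    epoch: int
    upstream: str
    revision: str


def split_deb_version(version):
    """Split [epoch:]upstream[-revision]: epoch before the first ':', revision after the last '-'."""
    epoch, sep, rest = version.partition(":")
    if not sep:
        epoch, rest = "0", version
    upstream, sep, revision = rest.rpartition("-")
    if not sep:
        upstream, revision = rest, ""
    return DebVersion(int(epoch), upstream, revision)
```

The revision starts after the last hyphen, so the older focal pin `5:20.10.8~3-0~ubuntu-focal` splits into upstream `20.10.8~3-0~ubuntu` and revision `focal`.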

@@ -51,10 +51,10 @@ dockerheldpackages:
dockerheldpackages:
  pkg.installed:
    - pkgs:
      - containerd.io: 1.6.33-3.1.el9
      - docker-ce: 3:26.1.4-1.el9
      - docker-ce-cli: 1:26.1.4-1.el9
      - docker-ce-rootless-extras: 26.1.4-1.el9
      - containerd.io: 1.6.21-3.1.el9
      - docker-ce: 24.0.4-1.el9
      - docker-ce-cli: 24.0.4-1.el9
      - docker-ce-rootless-extras: 24.0.4-1.el9
    - hold: True
    - update_holds: True
{% endif %}


@@ -46,11 +46,13 @@ docker:
    so-kibana: *dockerOptions
    so-kratos: *dockerOptions
    so-logstash: *dockerOptions
    so-mysql: *dockerOptions
    so-nginx: *dockerOptions
    so-nginx-fleet-node: *dockerOptions
    so-playbook: *dockerOptions
    so-redis: *dockerOptions
    so-sensoroni: *dockerOptions
    so-soc: *dockerOptions
    so-soctopus: *dockerOptions