Mirror of https://github.com/Security-Onion-Solutions/securityonion.git (synced 2025-12-06 09:12:45 +01:00)

Merge remote-tracking branch 'origin/2.4/dev' into salt3006.8


@@ -1,17 +1,17 @@

|

|||||||

### 2.4.60-20240320 ISO image released on 2024/03/20

|

### 2.4.70-20240529 ISO image released on 2024/05/29

|

||||||

|

|

||||||

|

|

||||||

### Download and Verify

|

### Download and Verify

|

||||||

|

|

||||||

2.4.60-20240320 ISO image:

|

2.4.70-20240529 ISO image:

|

||||||

https://download.securityonion.net/file/securityonion/securityonion-2.4.60-20240320.iso

|

https://download.securityonion.net/file/securityonion/securityonion-2.4.70-20240529.iso

|

||||||

|

|

||||||

MD5: 178DD42D06B2F32F3870E0C27219821E

|

MD5: 8FCCF31C2470D1ABA380AF196B611DEC

|

||||||

SHA1: 73EDCD50817A7F6003FE405CF1808A30D034F89D

|

SHA1: EE5E8F8C14819E7A1FE423E6920531A97F39600B

|

||||||

SHA256: DD334B8D7088A7B78160C253B680D645E25984BA5CCAB5CC5C327CA72137FC06

|

SHA256: EF5E781D50D50660F452ADC54FD4911296ECBECED7879FA8E04687337CA89BEC

|

||||||
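The published digests above can also be checked programmatically before running the GPG verification. The following is a minimal sketch, assuming the ISO has been downloaded into the current directory; the helper names `sha256_of` and `matches_published_digest` are illustrative and not part of the Security Onion tooling. A matching digest only shows the download is intact, so it does not replace the signature check.

```python
import hashlib
from pathlib import Path

# SHA256 digest published for the 2.4.70-20240529 ISO in the listing above.
EXPECTED_SHA256 = "EF5E781D50D50660F452ADC54FD4911296ECBECED7879FA8E04687337CA89BEC"


def sha256_of(path, chunk_size=1024 * 1024):
    """Return the uppercase hex SHA256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so a multi-gigabyte ISO is never held in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest().upper()


def matches_published_digest(path, expected=EXPECTED_SHA256):
    """Compare a file's digest with the published value, ignoring case."""
    return sha256_of(path) == expected.strip().upper()


if __name__ == "__main__":
    iso = Path("securityonion-2.4.70-20240529.iso")
    if iso.exists():
        print("SHA256 match:", matches_published_digest(iso))
    else:
        print(f"{iso} not found; download it first")
```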

|

|

||||||

Signature for ISO image:

|

Signature for ISO image:

|

||||||

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.60-20240320.iso.sig

|

https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.70-20240529.iso.sig

|

||||||

|

|

||||||

Signing key:

|

Signing key:

|

||||||

https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

|

https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.4/main/KEYS

|

||||||

@@ -25,22 +25,22 @@ wget https://raw.githubusercontent.com/Security-Onion-Solutions/securityonion/2.

|

|||||||

|

|

||||||

Download the signature file for the ISO:

|

Download the signature file for the ISO:

|

||||||

```

|

```

|

||||||

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.60-20240320.iso.sig

|

wget https://github.com/Security-Onion-Solutions/securityonion/raw/2.4/main/sigs/securityonion-2.4.70-20240529.iso.sig

|

||||||

```

|

```

|

||||||

|

|

||||||

Download the ISO image:

|

Download the ISO image:

|

||||||

```

|

```

|

||||||

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.60-20240320.iso

|

wget https://download.securityonion.net/file/securityonion/securityonion-2.4.70-20240529.iso

|

||||||

```

|

```

|

||||||

|

|

||||||

Verify the downloaded ISO image using the signature file:

|

Verify the downloaded ISO image using the signature file:

|

||||||

```

|

```

|

||||||

gpg --verify securityonion-2.4.60-20240320.iso.sig securityonion-2.4.60-20240320.iso

|

gpg --verify securityonion-2.4.70-20240529.iso.sig securityonion-2.4.70-20240529.iso

|

||||||

```

|

```

|

||||||

|

|

||||||

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:

|

The output should show "Good signature" and the Primary key fingerprint should match what's shown below:

|

||||||

```

|

```

|

||||||

gpg: Signature made Tue 19 Mar 2024 03:17:58 PM EDT using RSA key ID FE507013

|

gpg: Signature made Wed 29 May 2024 11:40:59 AM EDT using RSA key ID FE507013

|

||||||

gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"

|

gpg: Good signature from "Security Onion Solutions, LLC <info@securityonionsolutions.com>"

|

||||||

gpg: WARNING: This key is not certified with a trusted signature!

|

gpg: WARNING: This key is not certified with a trusted signature!

|

||||||

gpg: There is no indication that the signature belongs to the owner.

|

gpg: There is no indication that the signature belongs to the owner.

|

||||||

|

|||||||

@@ -14,7 +14,7 @@ Hunt

|

|||||||

|

|

||||||

|

|

||||||

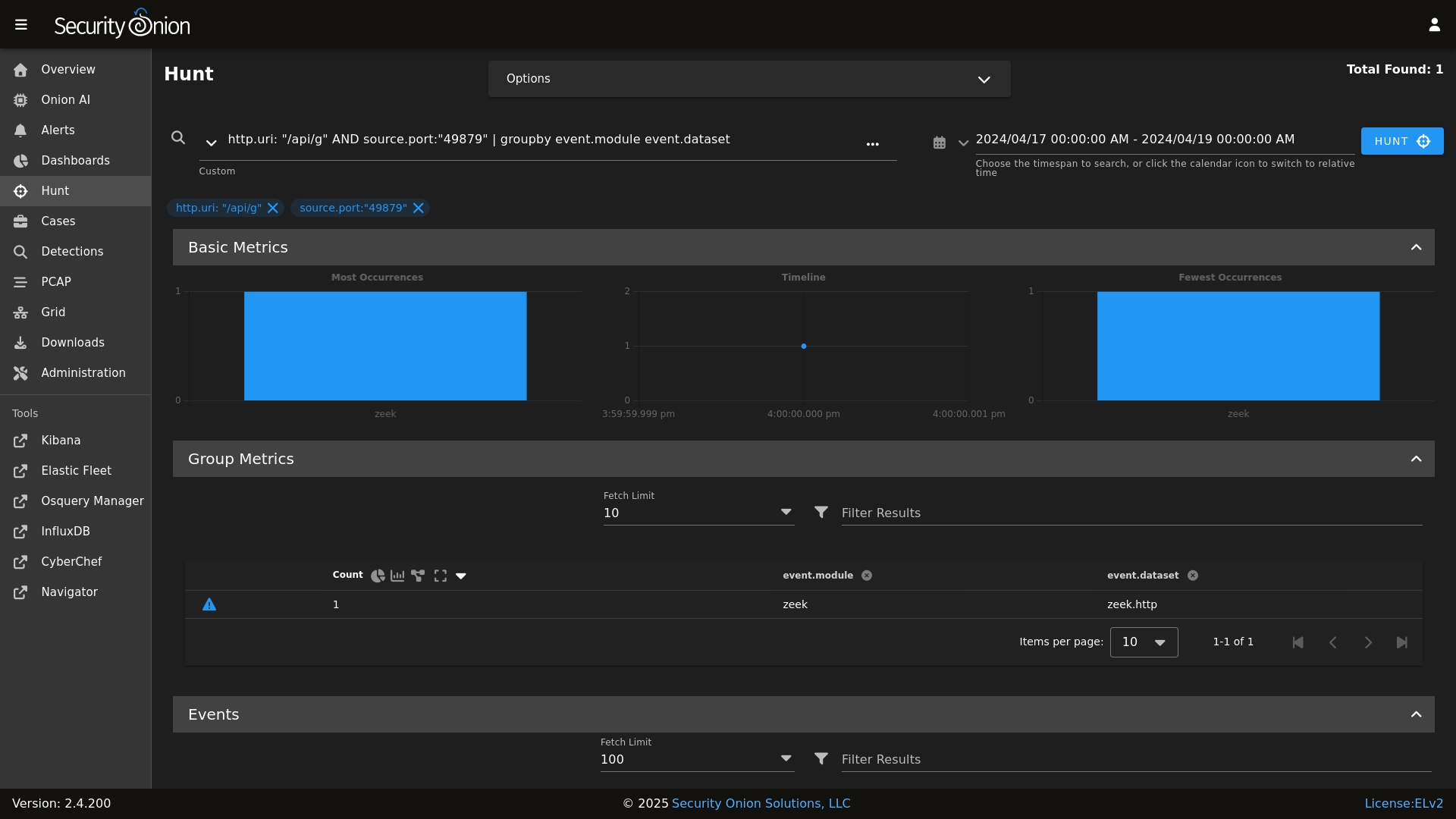

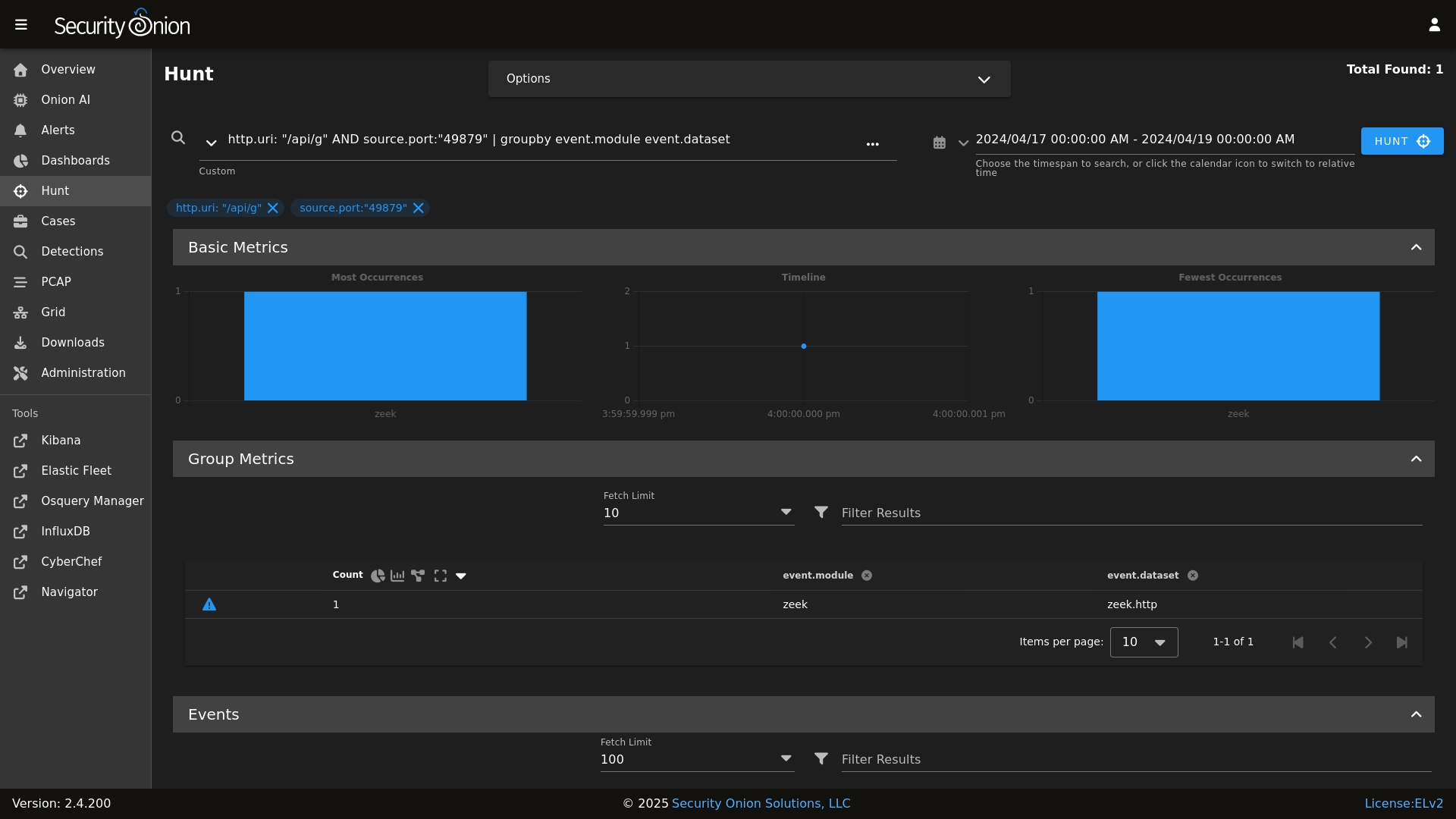

Detections

|

Detections

|

||||||

|

|

||||||

|

|

||||||

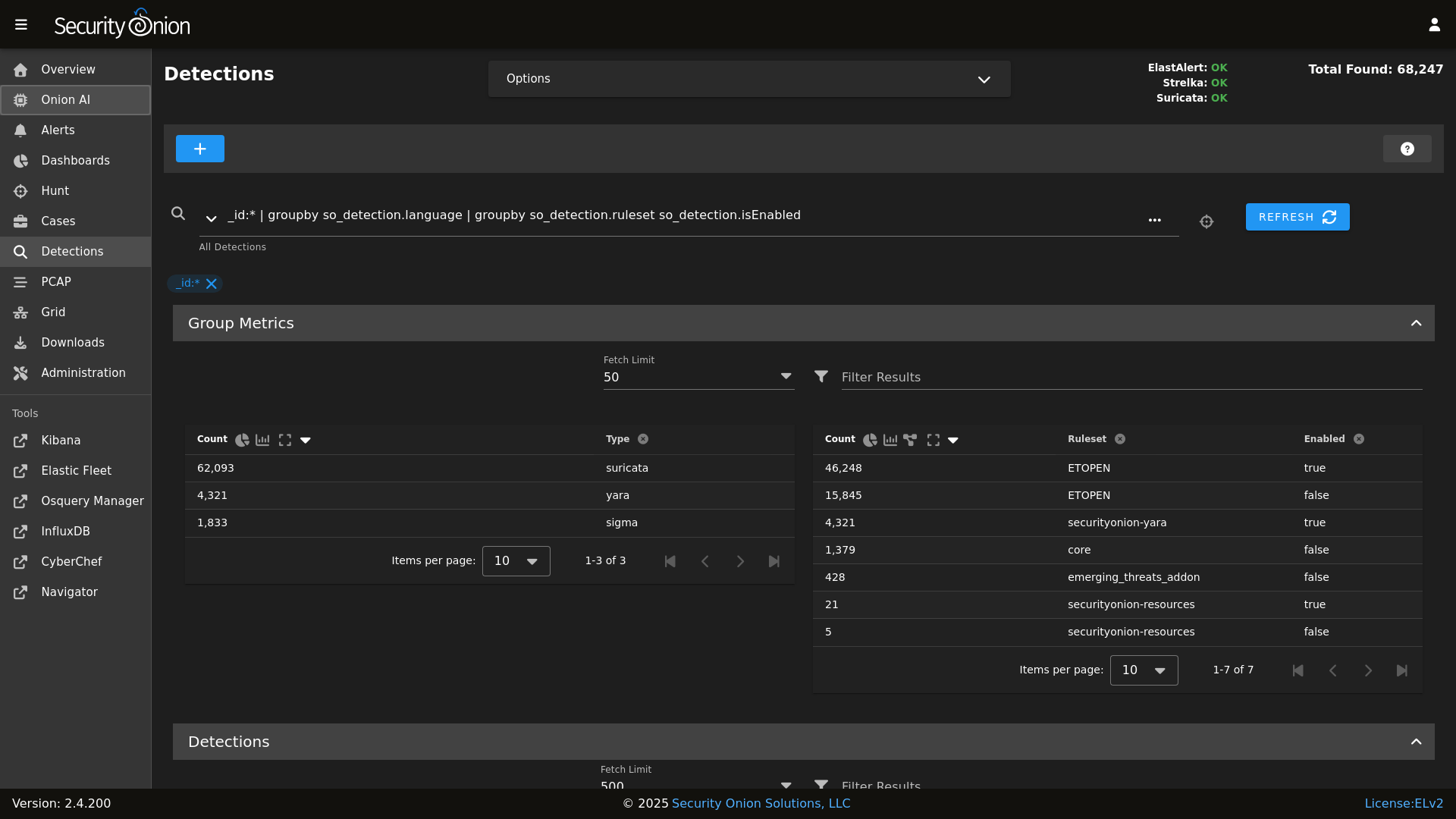

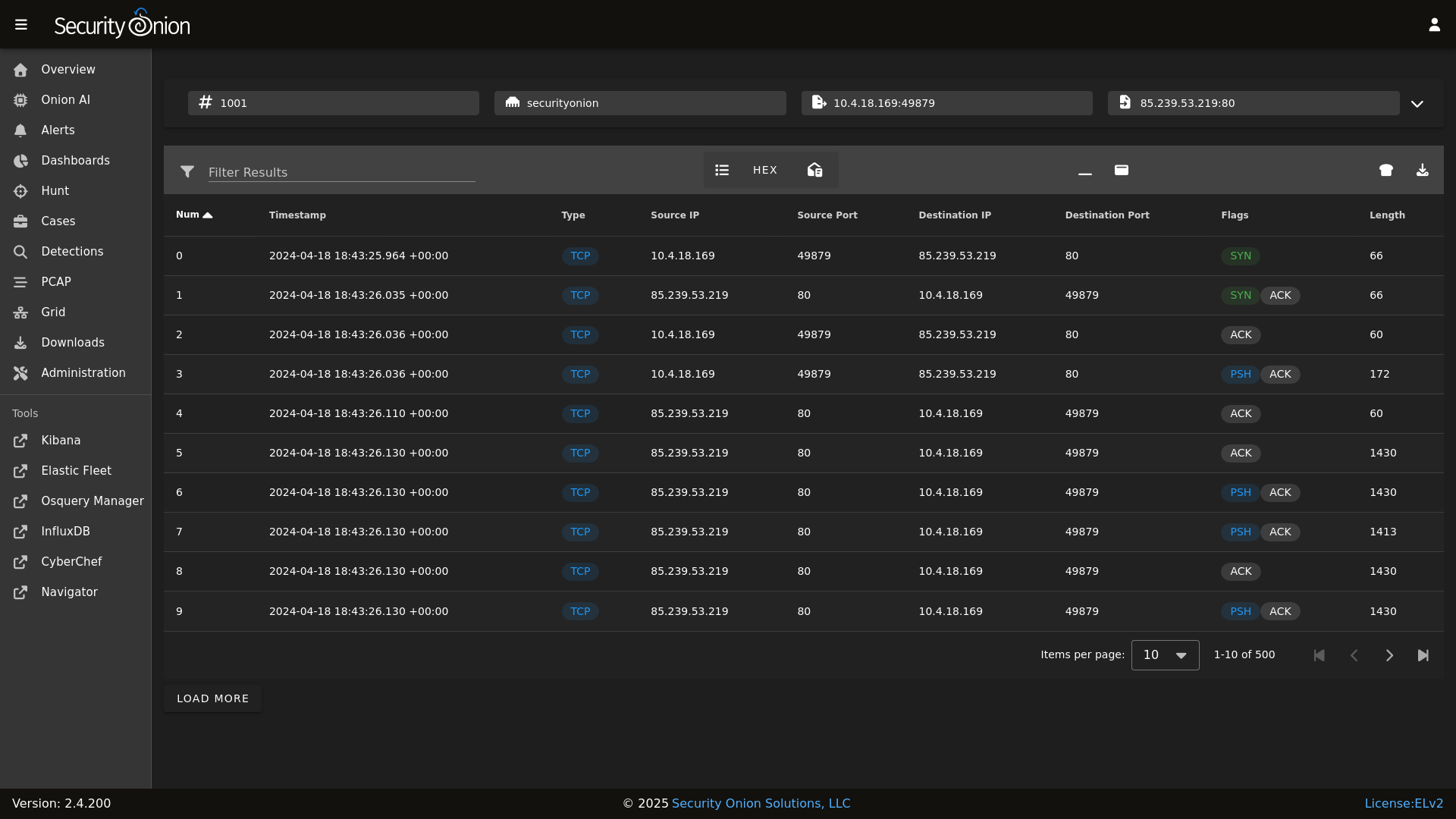

PCAP

|

PCAP

|

||||||

|

|

||||||

|

|||||||

@@ -203,6 +203,8 @@ if [[ $EXCLUDE_KNOWN_ERRORS == 'Y' ]]; then

|

|||||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|context deadline exceeded"

|

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|context deadline exceeded"

|

||||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Error running query:" # Specific issues with detection rules

|

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|Error running query:" # Specific issues with detection rules

|

||||||

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|detect-parse" # Suricata encountering a malformed rule

|

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|detect-parse" # Suricata encountering a malformed rule

|

||||||

|

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|integrity check failed" # Detections: Exclude false positive due to automated testing

|

||||||

|

EXCLUDED_ERRORS="$EXCLUDED_ERRORS|syncErrors" # Detections: Not an actual error

|

||||||

fi

|

fi

|

||||||

|

|

||||||

RESULT=0

|

RESULT=0

|

||||||

|

|||||||
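The hunk above extends `EXCLUDED_ERRORS` by appending `|`-separated fragments, which suggests the variable is later used as a regular-expression alternation to filter log lines. The sketch below illustrates that idea in Python under two assumptions: the fragments are treated as literal text (the shell script may interpret them as regex syntax), and the sample log lines in the usage note are made up for illustration.

```python
import re

# Fragments taken from the hunk above; the real script accumulates many more.
EXCLUDED_ERRORS = [
    "context deadline exceeded",
    "Error running query:",
    "detect-parse",
    "integrity check failed",
    "syncErrors",
]


def build_exclusion_pattern(fragments):
    """Join fragments with '|' into one alternation, escaping each as a literal."""
    return re.compile("|".join(re.escape(fragment) for fragment in fragments))


def unexpected_errors(lines, pattern):
    """Keep only the lines that match none of the excluded fragments."""
    return [line for line in lines if not pattern.search(line)]
```

For example, given the hypothetical lines `'msg="integrity check failed"'`, `'syncErrors: 0'`, and `'error: disk full'`, only the last one would survive the filter.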

98

salt/common/tools/sbin/so-luks-tpm-regen

Normal file


@@ -0,0 +1,98 @@

|

|||||||

|

#!/bin/bash

|

||||||

|

#

|

||||||

|

# Copyright Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||||

|

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||||

|

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||||

|

# Elastic License 2.0.

|

||||||

|

|

||||||

|

set -e

|

||||||

|

# This script is intended to be used in the case the ISO install did not properly setup TPM decrypt for LUKS partitions at boot.

|

||||||

|

if [ -z "$NOROOT" ]; then

|

||||||

|

# Check for prerequisites

|

||||||

|

if [ "$(id -u)" -ne 0 ]; then

|

||||||

|

echo "This script must be run using sudo!"

|

||||||

|

exit 1

|

||||||

|

fi

|

||||||

|

fi

|

||||||

|

ENROLL_TPM=N

|

||||||

|

|

||||||

|

while [[ $# -gt 0 ]]; do

|

||||||

|

case $1 in

|

||||||

|

--enroll-tpm)

|

||||||

|

ENROLL_TPM=Y

|

||||||

|

;;

|

||||||

|

*)

|

||||||

|

echo "Usage: $0 [options]"

|

||||||

|

echo ""

|

||||||

|

echo "where options are:"

|

||||||

|

echo " --enroll-tpm for when TPM enrollment was not selected during ISO install."

|

||||||

|

echo ""

|

||||||

|

exit 1

|

||||||

|

;;

|

||||||

|

esac

|

||||||

|

shift

|

||||||

|

done

|

||||||

|

|

||||||

|

check_for_tpm() {

|

||||||

|

echo -n "Checking for TPM: "

|

||||||

|

if [ -d /sys/class/tpm/tpm0 ]; then

|

||||||

|

echo -e "tpm0 found."

|

||||||

|

TPM="yes"

|

||||||

|

# Check if TPM is using sha1 or sha256

|

||||||

|

if [ -d /sys/class/tpm/tpm0/pcr-sha1 ]; then

|

||||||

|

echo -e "TPM is using sha1.\n"

|

||||||

|

TPM_PCR="sha1"

|

||||||

|

elif [ -d /sys/class/tpm/tpm0/pcr-sha256 ]; then

|

||||||

|

echo -e "TPM is using sha256.\n"

|

||||||

|

TPM_PCR="sha256"

|

||||||

|

fi

|

||||||

|

else

|

||||||

|

echo -e "No TPM found.\n"

|

||||||

|

exit 1

|

||||||

|

fi

|

||||||

|

}

|

||||||

|

|

||||||

|

check_for_luks_partitions() {

|

||||||

|

echo "Checking for LUKS partitions"

|

||||||

|

for part in $(lsblk -o NAME,FSTYPE -ln | grep crypto_LUKS | awk '{print $1}'); do

|

||||||

|

echo "Found LUKS partition: $part"

|

||||||

|

LUKS_PARTITIONS+=("$part")

|

||||||

|

done

|

||||||

|

if [ ${#LUKS_PARTITIONS[@]} -eq 0 ]; then

|

||||||

|

echo -e "No LUKS partitions found.\n"

|

||||||

|

exit 1

|

||||||

|

fi

|

||||||

|

echo ""

|

||||||

|

}

|

||||||

|

|

||||||

|

enroll_tpm_in_luks() {

|

||||||

|

read -r -s -p "Enter the LUKS passphrase used during ISO install: " LUKS_PASSPHRASE

|

||||||

|

echo ""

|

||||||

|

for part in "${LUKS_PARTITIONS[@]}"; do

|

||||||

|

echo "Enrolling TPM for LUKS device: /dev/$part"

|

||||||

|

if [ "$TPM_PCR" == "sha1" ]; then

|

||||||

|

clevis luks bind -d "/dev/$part" tpm2 '{"pcr_bank":"sha1","pcr_ids":"7"}' <<< "$LUKS_PASSPHRASE"

|

||||||

|

elif [ "$TPM_PCR" == "sha256" ]; then

|

||||||

|

clevis luks bind -d "/dev/$part" tpm2 '{"pcr_bank":"sha256","pcr_ids":"7"}' <<< "$LUKS_PASSPHRASE"

|

||||||

|

fi

|

||||||

|

done

|

||||||

|

}

|

||||||

|

|

||||||

|

regenerate_tpm_enrollment_token() {

|

||||||

|

for part in "${LUKS_PARTITIONS[@]}"; do

|

||||||

|

clevis luks regen -d /dev/$part -s 1 -q

|

||||||

|

done

|

||||||

|

}

|

||||||

|

|

||||||

|

check_for_tpm

|

||||||

|

check_for_luks_partitions

|

||||||

|

|

||||||

|

if [[ $ENROLL_TPM == "Y" ]]; then

|

||||||

|

enroll_tpm_in_luks

|

||||||

|

else

|

||||||

|

regenerate_tpm_enrollment_token

|

||||||

|

fi

|

||||||

|

|

||||||

|

echo "Running dracut"

|

||||||

|

dracut -fv

|

||||||

|

echo -e "\nTPM configuration complete. Reboot the system to verify the TPM is correctly decrypting the LUKS partition(s) at boot.\n"

|

||||||
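The script above picks a PCR bank by probing for `pcr-sha1` and then `pcr-sha256` under `/sys/class/tpm/tpm0`, and passes a small JSON document to `clevis luks bind`. The following sketch reproduces only that decision logic so it can be exercised without a TPM. The function names are hypothetical, and the sysfs directory is a parameter so a temporary directory can stand in for it.

```python
import json
from pathlib import Path


def detect_pcr_bank(tpm_dir):
    """Return 'sha1' or 'sha256' depending on which pcr-* directory exists.

    The check order mirrors check_for_tpm in the script: sha1 is tested first.
    Returns None when neither directory is present.
    """
    tpm_dir = Path(tpm_dir)
    for bank in ("sha1", "sha256"):
        if (tpm_dir / f"pcr-{bank}").is_dir():
            return bank
    return None


def clevis_tpm2_config(bank, pcr_ids="7"):
    """Build the compact JSON string used as the tpm2 pin configuration."""
    return json.dumps({"pcr_bank": bank, "pcr_ids": pcr_ids}, separators=(",", ":"))
```

Note that when neither directory exists the shell script leaves `TPM_PCR` unset, so neither `clevis luks bind` branch runs; the sketch surfaces the same situation as `None`.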

@@ -82,6 +82,36 @@ elastasomodulesync:

|

|||||||

- group: 933

|

- group: 933

|

||||||

- makedirs: True

|

- makedirs: True

|

||||||

|

|

||||||

|

elastacustomdir:

|

||||||

|

file.directory:

|

||||||

|

- name: /opt/so/conf/elastalert/custom

|

||||||

|

- user: 933

|

||||||

|

- group: 933

|

||||||

|

- makedirs: True

|

||||||

|

|

||||||

|

elastacustomsync:

|

||||||

|

file.recurse:

|

||||||

|

- name: /opt/so/conf/elastalert/custom

|

||||||

|

- source: salt://elastalert/files/custom

|

||||||

|

- user: 933

|

||||||

|

- group: 933

|

||||||

|

- makedirs: True

|

||||||

|

- file_mode: 660

|

||||||

|

- show_changes: False

|

||||||

|

|

||||||

|

elastapredefinedsync:

|

||||||

|

file.recurse:

|

||||||

|

- name: /opt/so/conf/elastalert/predefined

|

||||||

|

- source: salt://elastalert/files/predefined

|

||||||

|

- user: 933

|

||||||

|

- group: 933

|

||||||

|

- makedirs: True

|

||||||

|

- template: jinja

|

||||||

|

- file_mode: 660

|

||||||

|

- context:

|

||||||

|

elastalert: {{ ELASTALERTMERGED }}

|

||||||

|

- show_changes: False

|

||||||

|

|

||||||

elastaconf:

|

elastaconf:

|

||||||

file.managed:

|

file.managed:

|

||||||

- name: /opt/so/conf/elastalert/elastalert_config.yaml

|

- name: /opt/so/conf/elastalert/elastalert_config.yaml

|

||||||

|

|||||||

@@ -30,6 +30,8 @@ so-elastalert:

|

|||||||

- /opt/so/rules/elastalert:/opt/elastalert/rules/:ro

|

- /opt/so/rules/elastalert:/opt/elastalert/rules/:ro

|

||||||

- /opt/so/log/elastalert:/var/log/elastalert:rw

|

- /opt/so/log/elastalert:/var/log/elastalert:rw

|

||||||

- /opt/so/conf/elastalert/modules/:/opt/elastalert/modules/:ro

|

- /opt/so/conf/elastalert/modules/:/opt/elastalert/modules/:ro

|

||||||

|

- /opt/so/conf/elastalert/predefined/:/opt/elastalert/predefined/:ro

|

||||||

|

- /opt/so/conf/elastalert/custom/:/opt/elastalert/custom/:ro

|

||||||

- /opt/so/conf/elastalert/elastalert_config.yaml:/opt/elastalert/config.yaml:ro

|

- /opt/so/conf/elastalert/elastalert_config.yaml:/opt/elastalert/config.yaml:ro

|

||||||

{% if DOCKER.containers['so-elastalert'].custom_bind_mounts %}

|

{% if DOCKER.containers['so-elastalert'].custom_bind_mounts %}

|

||||||

{% for BIND in DOCKER.containers['so-elastalert'].custom_bind_mounts %}

|

{% for BIND in DOCKER.containers['so-elastalert'].custom_bind_mounts %}

|

||||||

|

|||||||

1

salt/elastalert/files/custom/placeholder

Normal file


@@ -0,0 +1 @@

|

|||||||

|

THIS IS A PLACEHOLDER FILE

|

||||||

6

salt/elastalert/files/predefined/jira_auth.yaml

Normal file


@@ -0,0 +1,6 @@

|

|||||||

|

{% if elastalert.get('jira_user', '') | length > 0 and elastalert.get('jira_pass', '') | length > 0 %}

|

||||||

|

user: {{ elastalert.jira_user }}

|

||||||

|

password: {{ elastalert.jira_pass }}

|

||||||

|

{% else %}

|

||||||

|

apikey: {{ elastalert.get('jira_api_key', '') }}

|

||||||

|

{% endif %}

|

||||||

2

salt/elastalert/files/predefined/smtp_auth.yaml

Normal file


@@ -0,0 +1,2 @@

|

|||||||

|

user: {{ elastalert.get('smtp_user', '') }}

|

||||||

|

password: {{ elastalert.get('smtp_pass', '') }}

|

||||||

@@ -14,7 +14,18 @@

|

|||||||

|

|

||||||

{% set ELASTALERTMERGED = salt['pillar.get']('elastalert', ELASTALERTDEFAULTS.elastalert, merge=True) %}

|

{% set ELASTALERTMERGED = salt['pillar.get']('elastalert', ELASTALERTDEFAULTS.elastalert, merge=True) %}

|

||||||

|

|

||||||

{% set params = ELASTALERTMERGED.alerter_parameters | load_yaml %}

|

{% if 'ntf' in salt['pillar.get']('features', []) %}

|

||||||

{% if params != None %}

|

{% set params = ELASTALERTMERGED.get('alerter_parameters', '') | load_yaml %}

|

||||||

|

{% if params != None and params | length > 0 %}

|

||||||

{% do ELASTALERTMERGED.config.update(params) %}

|

{% do ELASTALERTMERGED.config.update(params) %}

|

||||||

{% endif %}

|

{% endif %}

|

||||||

|

|

||||||

|

{% if ELASTALERTMERGED.get('smtp_user', '') | length > 0 %}

|

||||||

|

{% do ELASTALERTMERGED.config.update({'smtp_auth_file': '/opt/elastalert/predefined/smtp_auth.yaml'}) %}

|

||||||

|

{% endif %}

|

||||||

|

|

||||||

|

{% if ELASTALERTMERGED.get('jira_user', '') | length > 0 or ELASTALERTMERGED.get('jira_key', '') | length > 0 %}

|

||||||

|

{% do ELASTALERTMERGED.config.update({'jira_account_file': '/opt/elastalert/predefined/jira_auth.yaml'}) %}

|

||||||

|

{% endif %}

|

||||||

|

|

||||||

|

{% endif %}

|

||||||

|

|||||||
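The Jinja hunk above gates the alerter settings behind the `ntf` feature and then points ElastAlert at the predefined auth files when credentials are present. The sketch below restates that flow in Python as a reading aid. It assumes `alerter_parameters` has already been parsed into a dictionary (the template uses `load_yaml` for that step), it mirrors the key names exactly as they appear in the hunk, and `apply_alerter_settings` is a hypothetical name.

```python
SMTP_AUTH_FILE = "/opt/elastalert/predefined/smtp_auth.yaml"
JIRA_AUTH_FILE = "/opt/elastalert/predefined/jira_auth.yaml"


def apply_alerter_settings(merged, features):
    """Return a copy of merged['config'] with the licensed extras applied."""
    config = dict(merged.get("config", {}))
    # Without the 'ntf' feature the configuration is left untouched.
    if "ntf" not in features:
        return config
    # Assumption: already a dict here; the template parses a YAML string.
    params = merged.get("alerter_parameters") or {}
    if params:
        config.update(params)
    if merged.get("smtp_user", ""):
        config["smtp_auth_file"] = SMTP_AUTH_FILE
    # Key names follow the hunk literally ('jira_user' or 'jira_key').
    if merged.get("jira_user", "") or merged.get("jira_key", ""):
        config["jira_account_file"] = JIRA_AUTH_FILE
    return config
```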

@@ -4,12 +4,97 @@ elastalert:

|

|||||||

helpLink: elastalert.html

|

helpLink: elastalert.html

|

||||||

alerter_parameters:

|

alerter_parameters:

|

||||||

title: Alerter Parameters

|

title: Alerter Parameters

|

||||||

description: Custom configuration parameters for additional, optional alerters that can be enabled for all Sigma rules. Filter for 'Additional Alerters' in this Configuration screen to find the setting that allows these alerters to be enabled within the SOC ElastAlert module. Use YAML format for these parameters, and reference the ElastAlert 2 documentation, located at https://elastalert2.readthedocs.io, for available alerters and their required configuration parameters.

|

description: Optional configuration parameters for additional alerters that can be enabled for all Sigma rules. Filter for 'Alerter' in this Configuration screen to find the setting that allows these alerters to be enabled within the SOC ElastAlert module. Use YAML format for these parameters, and reference the ElastAlert 2 documentation, located at https://elastalert2.readthedocs.io, for available alerters and their required configuration parameters. A full update of the ElastAlert rule engine, via the Detections screen, is required in order to apply these changes. Requires a valid Security Onion license key.

|

||||||

global: True

|

global: True

|

||||||

multiline: True

|

multiline: True

|

||||||

syntax: yaml

|

syntax: yaml

|

||||||

helpLink: elastalert.html

|

helpLink: elastalert.html

|

||||||

forcedType: string

|

forcedType: string

|

||||||

|

jira_api_key:

|

||||||

|

title: Jira API Key

|

||||||

|

description: Optional configuration parameter for Jira API Key, used instead of the Jira username and password. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

sensitive: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

forcedType: string

|

||||||

|

jira_pass:

|

||||||

|

title: Jira Password

|

||||||

|

description: Optional configuration parameter for Jira password. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

sensitive: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

forcedType: string

|

||||||

|

jira_user:

|

||||||

|

title: Jira Username

|

||||||

|

description: Optional configuration parameter for Jira username. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

forcedType: string

|

||||||

|

smtp_pass:

|

||||||

|

title: SMTP Password

|

||||||

|

description: Optional configuration parameter for SMTP password, required for authenticating email servers. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

sensitive: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

forcedType: string

|

||||||

|

smtp_user:

|

||||||

|

title: SMTP Username

|

||||||

|

description: Optional configuration parameter for SMTP username, required for authenticating email servers. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

forcedType: string

|

||||||

|

files:

|

||||||

|

custom:

|

||||||

|

alertmanager_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to an AlertManager server. To utilize this custom file, the alertmanager_ca_certs key must be set to /opt/elastalert/custom/alertmanager_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

gelf_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a Graylog server. To utilize this custom file, the gelf_ca_cert key must be set to /opt/elastalert/custom/gelf_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

http_post_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a generic HTTP server, via the legacy HTTP POST alerter. To utilize this custom file, the http_post_ca_certs key must be set to /opt/elastalert/custom/http_post_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

http_post2_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a generic HTTP server, via the newer HTTP POST 2 alerter. To utilize this custom file, the http_post2_ca_certs key must be set to /opt/elastalert/custom/http_post2_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

ms_teams_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a Microsoft Teams server. To utilize this custom file, the ms_teams_ca_certs key must be set to /opt/elastalert/custom/ms_teams_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

pagerduty_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a PagerDuty server. To utilize this custom file, the pagerduty_ca_certs key must be set to /opt/elastalert/custom/pagerduty_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

rocket_chat_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to a Rocket.Chat server. To utilize this custom file, the rocket_chat_ca_certs key must be set to /opt/elastalert/custom/rocket_chat_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

smtp__crt:

|

||||||

|

description: Optional custom certificate for connecting to an SMTP server. To utilize this custom file, the smtp_cert_file key must be set to /opt/elastalert/custom/smtp.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

smtp__key:

|

||||||

|

description: Optional custom certificate key for connecting to an SMTP server. To utilize this custom file, the smtp_key_file key must be set to /opt/elastalert/custom/smtp.key in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

|

slack_ca__crt:

|

||||||

|

description: Optional custom Certificate Authority for connecting to Slack. To utilize this custom file, the slack_ca_certs key must be set to /opt/elastalert/custom/slack_ca.crt in the Alerter Parameters setting. Requires a valid Security Onion license key.

|

||||||

|

global: True

|

||||||

|

file: True

|

||||||

|

helpLink: elastalert.html

|

||||||

config:

|

config:

|

||||||

disable_rules_on_error:

|

disable_rules_on_error:

|

||||||

description: Disable rules on failure.

|

description: Disable rules on failure.

|

||||||

|

|||||||

@@ -56,6 +56,7 @@

|

|||||||

{ "set": { "if": "ctx.exiftool?.Subsystem != null", "field": "host.subsystem", "value": "{{exiftool.Subsystem}}", "ignore_failure": true }},

|

{ "set": { "if": "ctx.exiftool?.Subsystem != null", "field": "host.subsystem", "value": "{{exiftool.Subsystem}}", "ignore_failure": true }},

|

||||||

{ "set": { "if": "ctx.scan?.yara?.matches instanceof List", "field": "rule.name", "value": "{{scan.yara.matches.0}}" }},

|

{ "set": { "if": "ctx.scan?.yara?.matches instanceof List", "field": "rule.name", "value": "{{scan.yara.matches.0}}" }},

|

||||||

{ "set": { "if": "ctx.rule?.name != null", "field": "event.dataset", "value": "alert", "override": true }},

|

{ "set": { "if": "ctx.rule?.name != null", "field": "event.dataset", "value": "alert", "override": true }},

|

||||||

|

{ "set": { "if": "ctx.rule?.name != null", "field": "rule.uuid", "value": "{{rule.name}}", "override": true }},

|

||||||

{ "rename": { "field": "file.flavors.mime", "target_field": "file.mime_type", "ignore_missing": true }},

|

{ "rename": { "field": "file.flavors.mime", "target_field": "file.mime_type", "ignore_missing": true }},

|

||||||

{ "set": { "if": "ctx.rule?.name != null && ctx.rule?.score == null", "field": "event.severity", "value": 3, "override": true } },

|

{ "set": { "if": "ctx.rule?.name != null && ctx.rule?.score == null", "field": "event.severity", "value": 3, "override": true } },

|

||||||

{ "convert" : { "if": "ctx.rule?.score != null", "field" : "rule.score","type": "integer"}},

|

{ "convert" : { "if": "ctx.rule?.score != null", "field" : "rule.score","type": "integer"}},

|

||||||

|

|||||||

@@ -133,7 +133,7 @@ if [ ! -f $STATE_FILE_SUCCESS ]; then

|

|||||||

for i in $pattern; do

|

for i in $pattern; do

|

||||||

TEMPLATE=${i::-14}

|

TEMPLATE=${i::-14}

|

||||||

COMPONENT_PATTERN=${TEMPLATE:3}

|

COMPONENT_PATTERN=${TEMPLATE:3}

|

||||||

MATCH=$(echo "$TEMPLATE" | grep -E "^so-logs-|^so-metrics" | grep -v osquery)

|

MATCH=$(echo "$TEMPLATE" | grep -E "^so-logs-|^so-metrics" | grep -vE "detections|osquery")

|

||||||

if [[ -n "$MATCH" && ! "$COMPONENT_LIST" =~ "$COMPONENT_PATTERN" ]]; then

|

if [[ -n "$MATCH" && ! "$COMPONENT_LIST" =~ "$COMPONENT_PATTERN" ]]; then

|

||||||

load_failures=$((load_failures+1))

|

load_failures=$((load_failures+1))

|

||||||

echo "Component template does not exist for $COMPONENT_PATTERN. The index template will not be loaded. Load failures: $load_failures"

|

echo "Component template does not exist for $COMPONENT_PATTERN. The index template will not be loaded. Load failures: $load_failures"

|

||||||

|

|||||||
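The changed `MATCH` line above keeps template names that start with `so-logs-` or `so-metrics` and now drops any that mention `detections` as well as `osquery`. A minimal sketch of the same two-stage filter follows; the function name and the template names in the usage note are illustrative only.

```python
import re

# Stage 1: same alternation as `grep -E "^so-logs-|^so-metrics"`.
INCLUDE = re.compile(r"^so-logs-|^so-metrics")
# Stage 2: same alternation as `grep -vE "detections|osquery"`.
EXCLUDE = re.compile(r"detections|osquery")


def requires_component_check(template_name):
    """True when the template name passes the include filter and not the exclude filter."""
    return bool(INCLUDE.search(template_name)) and not EXCLUDE.search(template_name)
```

Under these patterns a hypothetical `so-logs-system.auth` would still be checked for a component template, while `so-logs-detections.alerts` and `so-logs-osquery-manager` would be skipped.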

@@ -672,6 +672,13 @@ suricata_idstools_migration() {

|

|||||||

fail "Error: rsync failed to copy the files. Thresholds have not been backed up."

|

fail "Error: rsync failed to copy the files. Thresholds have not been backed up."

|

||||||

fi

|

fi

|

||||||

|

|

||||||

|

#Backup local rules

|

||||||

|

mkdir -p /nsm/backup/detections-migration/suricata/local-rules

|

||||||

|

rsync -av /opt/so/rules/nids/suri/local.rules /nsm/backup/detections-migration/suricata/local-rules

|

||||||

|

if [[ -f /opt/so/saltstack/local/salt/idstools/rules/local.rules ]]; then

|

||||||

|

rsync -av /opt/so/saltstack/local/salt/idstools/rules/local.rules /nsm/backup/detections-migration/suricata/local-rules/local.rules.bak

|

||||||

|

fi

|

||||||

|

|

||||||

#Tell SOC to migrate

|

#Tell SOC to migrate

|

||||||

mkdir -p /opt/so/conf/soc/migrations

|

mkdir -p /opt/so/conf/soc/migrations

|

||||||

echo "0" > /opt/so/conf/soc/migrations/suricata-migration-2.4.70

|

echo "0" > /opt/so/conf/soc/migrations/suricata-migration-2.4.70

|

||||||

@@ -689,22 +696,21 @@ playbook_migration() {

|

|||||||

if grep -A 1 'playbook:' /opt/so/saltstack/local/pillar/minions/* | grep -q 'enabled: True'; then

|

if grep -A 1 'playbook:' /opt/so/saltstack/local/pillar/minions/* | grep -q 'enabled: True'; then

|

||||||

|

|

||||||

# Check for active Elastalert rules

|

# Check for active Elastalert rules

|

||||||

active_rules_count=$(find /opt/so/rules/elastalert/playbook/ -type f -name "*.yaml" | wc -l)

|

active_rules_count=$(find /opt/so/rules/elastalert/playbook/ -type f \( -name "*.yaml" -o -name "*.yml" \) | wc -l)

|

||||||

|

|

||||||

if [[ "$active_rules_count" -gt 0 ]]; then

|

if [[ "$active_rules_count" -gt 0 ]]; then

|

||||||

# Prompt the user to AGREE if active Elastalert rules found

|

# Prompt the user to press ENTER if active Elastalert rules found

|

||||||

echo

|

echo

|

||||||

echo "$active_rules_count Active Elastalert/Playbook rules found."

|

echo "$active_rules_count Active Elastalert/Playbook rules found."

|

||||||

echo "In preparation for the new Detections module, they will be backed up and then disabled."

|

echo "In preparation for the new Detections module, they will be backed up and then disabled."

|

||||||

echo

|

echo

|

||||||

echo "If you would like to proceed, then type AGREE and press ENTER."

|

echo "Press ENTER to proceed."

|

||||||

echo

|

echo

|

||||||

# Read user input

|

# Read user input

|

||||||

read INPUT

|

read -r

|

||||||

if [ "${INPUT^^}" != 'AGREE' ]; then fail "SOUP canceled."; fi

|

|

||||||

|

|

||||||

echo "Backing up the Elastalert rules..."

|

echo "Backing up the Elastalert rules..."

|

||||||

rsync -av --stats /opt/so/rules/elastalert/playbook/*.yaml /nsm/backup/detections-migration/elastalert/

|

rsync -av --ignore-missing-args --stats /opt/so/rules/elastalert/playbook/*.{yaml,yml} /nsm/backup/detections-migration/elastalert/

|

||||||

|

|

||||||

# Verify that rsync completed successfully

|

# Verify that rsync completed successfully

|

||||||

if [[ $? -eq 0 ]]; then

|

if [[ $? -eq 0 ]]; then

|

||||||

|

|||||||
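The hunk above widens the rule count from `*.yaml` only to both `*.yaml` and `*.yml`, so rules saved with the shorter extension are no longer missed. The sketch below shows an equivalent recursive count in Python; `count_rule_files` is a hypothetical helper, and a missing directory is treated as zero rules.

```python
from pathlib import Path

RULE_SUFFIXES = {".yaml", ".yml"}


def count_rule_files(directory):
    """Count regular files under directory (recursively) ending in .yaml or .yml."""
    root = Path(directory)
    if not root.is_dir():
        return 0
    return sum(
        1 for path in root.rglob("*")
        if path.is_file() and path.suffix in RULE_SUFFIXES
    )
```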

@@ -80,6 +80,14 @@ socmotd:

|

|||||||

- mode: 600

|

- mode: 600

|

||||||

- template: jinja

|

- template: jinja

|

||||||

|

|

||||||

|

filedetectionsbackup:

|

||||||

|

file.managed:

|

||||||

|

- name: /opt/so/conf/soc/so-detections-backup.py

|

||||||

|

- source: salt://soc/files/soc/so-detections-backup.py

|

||||||

|

- user: 939

|

||||||

|

- group: 939

|

||||||

|

- mode: 600

|

||||||

|

|

||||||

crondetectionsruntime:

|

crondetectionsruntime:

|

||||||

cron.present:

|

cron.present:

|

||||||

- name: /usr/sbin/so-detections-runtime-status cron

|

- name: /usr/sbin/so-detections-runtime-status cron

|

||||||

@@ -91,6 +99,17 @@ crondetectionsruntime:

|

|||||||

- month: '*'

|

- month: '*'

|

||||||

- dayweek: '*'

|

- dayweek: '*'

|

||||||

|

|

||||||

|

crondetectionsbackup:

|

||||||

|

cron.present:

|

||||||

|

- name: python3 /opt/so/conf/soc/so-detections-backup.py &>> /opt/so/log/soc/detections-backup.log

|

||||||

|

- identifier: detections-backup

|

||||||

|

- user: root

|

||||||

|

- minute: '0'

|

||||||

|

- hour: '0'

|

||||||

|

- daymonth: '*'

|

||||||

|

- month: '*'

|

||||||

|

- dayweek: '*'

|

||||||

|

|

||||||

socsigmafinalpipeline:

|

socsigmafinalpipeline:

|

||||||

file.managed:

|

file.managed:

|

||||||

- name: /opt/so/conf/soc/sigma_final_pipeline.yaml

|

- name: /opt/so/conf/soc/sigma_final_pipeline.yaml

|

||||||

|

|||||||

@@ -1271,6 +1271,15 @@ soc:

|

|||||||

- netflow.type

|

- netflow.type

|

||||||

- netflow.exporter.version

|

- netflow.exporter.version

|

||||||

- observer.ip

|

- observer.ip

|

||||||

|

':soc:':

|

||||||

|

- soc_timestamp

|

||||||

|

- event.dataset

|

||||||

|

- source.ip

|

||||||

|

- soc.fields.requestMethod

|

||||||

|

- soc.fields.requestPath

|

||||||

|

- soc.fields.statusCode

|

||||||

|

- event.action

|

||||||

|

- soc.fields.error

|

||||||

server:

|

server:

|

||||||

bindAddress: 0.0.0.0:9822

|

bindAddress: 0.0.0.0:9822

|

||||||

baseUrl: /

|

baseUrl: /

|

||||||

@@ -1305,6 +1314,7 @@ soc:

|

|||||||

reposFolder: /opt/sensoroni/sigma/repos

|

reposFolder: /opt/sensoroni/sigma/repos

|

||||||

rulesFingerprintFile: /opt/sensoroni/fingerprints/sigma.fingerprint

|

rulesFingerprintFile: /opt/sensoroni/fingerprints/sigma.fingerprint

|

||||||

stateFilePath: /opt/sensoroni/fingerprints/elastalertengine.state

|

stateFilePath: /opt/sensoroni/fingerprints/elastalertengine.state

|

||||||

|

integrityCheckFrequencySeconds: 600

|

||||||

rulesRepos:

|

rulesRepos:

|

||||||

default:

|

default:

|

||||||

- repo: https://github.com/Security-Onion-Solutions/securityonion-resources

|

- repo: https://github.com/Security-Onion-Solutions/securityonion-resources

|

||||||

@@ -1383,6 +1393,7 @@ soc:

|

|||||||

community: true

|

community: true

|

||||||

yaraRulesFolder: /opt/sensoroni/yara/rules

|

yaraRulesFolder: /opt/sensoroni/yara/rules

|

||||||

stateFilePath: /opt/sensoroni/fingerprints/strelkaengine.state

|

stateFilePath: /opt/sensoroni/fingerprints/strelkaengine.state

|

||||||

|

integrityCheckFrequencySeconds: 600

|

||||||

suricataengine:

|

suricataengine:

|

||||||

allowRegex: ''

|

allowRegex: ''

|

||||||

autoUpdateEnabled: true

|

autoUpdateEnabled: true

|

||||||

@@ -1393,6 +1404,7 @@ soc:

|

|||||||

denyRegex: ''

|

denyRegex: ''

|

||||||

rulesFingerprintFile: /opt/sensoroni/fingerprints/emerging-all.fingerprint

|

rulesFingerprintFile: /opt/sensoroni/fingerprints/emerging-all.fingerprint

|

||||||

stateFilePath: /opt/sensoroni/fingerprints/suricataengine.state

|

stateFilePath: /opt/sensoroni/fingerprints/suricataengine.state

|

||||||

|

integrityCheckFrequencySeconds: 600

|

||||||

client:

|

client:

|

||||||

enableReverseLookup: false

|

enableReverseLookup: false

|

||||||

docsUrl: /docs/

|

docsUrl: /docs/

|

||||||

@@ -1479,7 +1491,7 @@ soc:

|

|||||||

showSubtitle: true

|

showSubtitle: true

|

||||||

- name: Elastalerts

|

- name: Elastalerts

|

||||||

description: ''

|

description: ''

|

||||||

query: '_type:elastalert | groupby rule.name'

|

query: 'event.dataset:sigma.alert | groupby rule.name'

|

||||||

showSubtitle: true

|

showSubtitle: true

|

||||||

- name: Alerts

|

- name: Alerts

|

||||||

description: Show all alerts grouped by alert source

|

description: Show all alerts grouped by alert source

|

||||||

@@ -1814,7 +1826,7 @@ soc:

|

|||||||

query: 'tags:dhcp | groupby host.hostname | groupby -sankey host.hostname client.address | groupby client.address | groupby -sankey client.address server.address | groupby server.address | groupby dhcp.message_types | groupby host.domain'

|

query: 'tags:dhcp | groupby host.hostname | groupby -sankey host.hostname client.address | groupby client.address | groupby -sankey client.address server.address | groupby server.address | groupby dhcp.message_types | groupby host.domain'

|

||||||

- name: DNS

|

- name: DNS

|

||||||

description: DNS (Domain Name System) queries

|

description: DNS (Domain Name System) queries

|

||||||

query: 'tags:dns | groupby dns.query.name | groupby source.ip | groupby -sankey source.ip destination.ip | groupby destination.ip | groupby destination.port | groupby dns.highest_registered_domain | groupby dns.parent_domain | groupby dns.response.code_name | groupby dns.answers.name | groupby dns.query.type_name | groupby dns.response.code_name | groupby destination_geo.organization_name'

|

query: 'tags:dns | groupby dns.query.name | groupby source.ip | groupby -sankey source.ip destination.ip | groupby destination.ip | groupby destination.port | groupby dns.highest_registered_domain | groupby dns.parent_domain | groupby dns.query.type_name | groupby dns.response.code_name | groupby dns.answers.name | groupby destination_geo.organization_name'

|

||||||
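The DNS query change above drops a repeated `groupby dns.response.code_name` segment that appeared twice on the old side. A minimal sketch of how such repeats could be flagged, assuming pipe-separated query segments as shown in these dashboards; `duplicate_segments` is a hypothetical helper and not part of Security Onion, and the naive split would misbehave if a filter value itself contained `|`:

```python
from collections import Counter

def duplicate_segments(query):
    """Return pipe-separated segments that occur more than once in a query.

    Hypothetical helper for illustration; splits naively on '|'.
    """
    segments = [s.strip() for s in query.split('|')]
    # Skip the first segment, which is the filter expression (e.g. 'tags:dns')
    counts = Counter(segments[1:])
    return [seg for seg, n in counts.items() if n > 1]

if __name__ == "__main__":
    old_query = ('tags:dns | groupby dns.parent_domain | groupby dns.response.code_name '
                 '| groupby dns.answers.name | groupby dns.response.code_name')
    print(duplicate_segments(old_query))
```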

- name: DPD

|

- name: DPD

|

||||||

description: DPD (Dynamic Protocol Detection) errors

|

description: DPD (Dynamic Protocol Detection) errors

|

||||||

query: 'tags:dpd | groupby error.reason | groupby -sankey error.reason source.ip | groupby source.ip | groupby -sankey source.ip destination.ip | groupby destination.ip | groupby destination.port | groupby network.protocol | groupby destination_geo.organization_name'

|

query: 'tags:dpd | groupby error.reason | groupby -sankey error.reason source.ip | groupby source.ip | groupby -sankey source.ip destination.ip | groupby destination.ip | groupby destination.port | groupby network.protocol | groupby destination_geo.organization_name'

|

||||||

@@ -2053,17 +2065,17 @@ soc:

|

|||||||

- acknowledged

|

- acknowledged

|

||||||

queries:

|

queries:

|

||||||

- name: 'Group By Name, Module'

|

- name: 'Group By Name, Module'

|

||||||

query: '* | groupby rule.name event.module* event.severity_label'

|

query: '* | groupby rule.name event.module* event.severity_label rule.uuid'

|

||||||

- name: 'Group By Sensor, Source IP/Port, Destination IP/Port, Name'

|

- name: 'Group By Sensor, Source IP/Port, Destination IP/Port, Name'

|

||||||

query: '* | groupby observer.name source.ip source.port destination.ip destination.port rule.name network.community_id event.severity_label'

|

query: '* | groupby observer.name source.ip source.port destination.ip destination.port rule.name network.community_id event.severity_label rule.uuid'

|

||||||

- name: 'Group By Source IP, Name'

|

- name: 'Group By Source IP, Name'

|

||||||

query: '* | groupby source.ip rule.name event.severity_label'

|

query: '* | groupby source.ip rule.name event.severity_label rule.uuid'

|

||||||

- name: 'Group By Source Port, Name'

|

- name: 'Group By Source Port, Name'

|

||||||

query: '* | groupby source.port rule.name event.severity_label'

|

query: '* | groupby source.port rule.name event.severity_label rule.uuid'

|

||||||

- name: 'Group By Destination IP, Name'

|

- name: 'Group By Destination IP, Name'

|

||||||

query: '* | groupby destination.ip rule.name event.severity_label'

|

query: '* | groupby destination.ip rule.name event.severity_label rule.uuid'

|

||||||

- name: 'Group By Destination Port, Name'

|

- name: 'Group By Destination Port, Name'

|

||||||

query: '* | groupby destination.port rule.name event.severity_label'

|

query: '* | groupby destination.port rule.name event.severity_label rule.uuid'

|

||||||

- name: Ungroup

|

- name: Ungroup

|

||||||

query: '*'

|

query: '*'

|

||||||

grid:

|

grid:

|

||||||

|

|||||||

@@ -17,6 +17,16 @@ transformations:

|

|||||||

dst_ip: destination.ip.keyword

|

dst_ip: destination.ip.keyword

|

||||||

dst_port: destination.port

|

dst_port: destination.port

|

||||||

winlog.event_data.User: user.name

|

winlog.event_data.User: user.name

|

||||||

|

logtype: event.code # OpenCanary

|

||||||

|

# Maps "opencanary" product to SO IDH logs

|

||||||

|

- id: opencanary_idh_add-fields

|

||||||

|

type: add_condition

|

||||||

|

conditions:

|

||||||

|

event.module: 'opencanary'

|

||||||

|

event.dataset: 'opencanary.idh'

|

||||||

|

rule_conditions:

|

||||||

|

- type: logsource

|

||||||

|

product: opencanary

|

||||||

# Maps "antivirus" category to Windows Defender logs shipped by Elastic Agent Winlog Integration

|

# Maps "antivirus" category to Windows Defender logs shipped by Elastic Agent Winlog Integration

|

||||||

# winlog.event_data.threat_name has to be renamed prior to ingestion, it is originally winlog.event_data.Threat Name

|

# winlog.event_data.threat_name has to be renamed prior to ingestion, it is originally winlog.event_data.Threat Name

|

||||||

- id: antivirus_field-mappings_windows-defender

|

- id: antivirus_field-mappings_windows-defender

|

||||||

@@ -88,3 +98,11 @@ transformations:

|

|||||||

- type: logsource

|

- type: logsource

|

||||||

product: linux

|

product: linux

|

||||||

service: auth

|

service: auth

|

||||||

|

# event.code should always be a string

|

||||||

|

- id: convert_event_code_to_string

|

||||||

|

type: convert_type

|

||||||

|

target_type: 'str'

|

||||||

|

field_name_conditions:

|

||||||

|

- type: include_fields

|

||||||

|

fields:

|

||||||

|

- event.code

|

||||||

|

|||||||

113

salt/soc/files/soc/so-detections-backup.py

Normal file

@@ -0,0 +1,113 @@

|

|||||||

|

# Copyright 2020-2023 Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||||

|

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||||

|

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||||

|

# Elastic License 2.0.

|

||||||

|

|

||||||

|

# This script queries Elasticsearch for Custom Detections and all Overrides,

|

||||||

|

# and git commits them to disk at $OUTPUT_DIR

|

||||||

|

|

||||||

|

import os

|

||||||

|

import subprocess

|

||||||

|

import json

|

||||||

|

import requests

|

||||||

|

from requests.auth import HTTPBasicAuth

|

||||||

|

import urllib3

|

||||||

|

from datetime import datetime

|

||||||

|

|

||||||

|

# Suppress SSL warnings

|

||||||

|

urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

|

||||||

|

|

||||||

|

# Constants

|

||||||

|

ES_URL = "https://localhost:9200/so-detection/_search"

|

||||||

|

QUERY_DETECTIONS = '{"query": {"bool": {"must": [{"match_all": {}}, {"term": {"so_detection.ruleset": "__custom__"}}]}},"size": 10000}'

|

||||||

|

QUERY_OVERRIDES = '{"query": {"bool": {"must": [{"exists": {"field": "so_detection.overrides"}}]}},"size": 10000}'

|

||||||

|

OUTPUT_DIR = "/nsm/backup/detections/repo"

|

||||||

|

AUTH_FILE = "/opt/so/conf/elasticsearch/curl.config"

|

||||||

|

|

||||||

|

def get_auth_credentials(auth_file):

|

||||||

|

with open(auth_file, 'r') as file:

|

||||||

|

for line in file:

|

||||||

|

if line.startswith('user ='):

|

||||||

|

return line.split('=', 1)[1].strip().replace('"', '')

|

||||||

|

|

||||||

|

def query_elasticsearch(query, auth):

|

||||||

|

headers = {"Content-Type": "application/json"}

|

||||||

|

response = requests.get(ES_URL, headers=headers, data=query, auth=auth, verify=False)

|

||||||

|

response.raise_for_status()

|

||||||

|

return response.json()

|

||||||

|

|

||||||

|

def save_content(hit, base_folder, subfolder="", extension="txt"):

|

||||||

|

so_detection = hit["_source"]["so_detection"]

|

||||||

|

public_id = so_detection["publicId"]

|

||||||

|

content = so_detection["content"]

|

||||||

|

file_dir = os.path.join(base_folder, subfolder)

|

||||||

|

os.makedirs(file_dir, exist_ok=True)

|

||||||

|

file_path = os.path.join(file_dir, f"{public_id}.{extension}")

|

||||||

|

with open(file_path, "w") as f:

|

||||||

|

f.write(content)

|

||||||

|

return file_path

|

||||||

|

|

||||||

|

def save_overrides(hit):

|

||||||

|

so_detection = hit["_source"]["so_detection"]

|

||||||

|

public_id = so_detection["publicId"]

|

||||||

|

overrides = so_detection["overrides"]

|

||||||

|

language = so_detection["language"]

|

||||||

|

folder = os.path.join(OUTPUT_DIR, language, "overrides")

|

||||||

|

os.makedirs(folder, exist_ok=True)

|

||||||

|

extension = "yaml" if language == "sigma" else "txt"

|

||||||

|

file_path = os.path.join(folder, f"{public_id}.{extension}")

|

||||||

|

with open(file_path, "w") as f:

|

||||||

|

f.write('\n'.join(json.dumps(override) for override in overrides) if isinstance(overrides, list) else overrides)

|

||||||

|

return file_path

|

||||||

|

|

||||||
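When `overrides` is a list, `save_overrides` above writes one `json.dumps` result per line, so the file holds JSON Lines content whatever its `.yaml` or `.txt` extension. A minimal sketch of reading such a file back, assuming the list case only; `load_overrides` is a hypothetical helper name that does not exist in the script:

```python
import json

def load_overrides(text):
    """Parse text holding one JSON object per line back into a list.

    Hypothetical counterpart to save_overrides(); assumes the list branch
    was taken when the file was written.
    """
    return [json.loads(line) for line in text.splitlines() if line.strip()]

if __name__ == "__main__":
    overrides = [{"key": "value"}, {"type": "threshold", "count": 5}]
    # Same serialization that save_overrides() applies to a list
    written = '\n'.join(json.dumps(o) for o in overrides)
    print(load_overrides(written) == overrides)
```

If `overrides` was a plain string it is written verbatim, and this parser may not apply.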

|

def ensure_git_repo():

|

||||||

|

if not os.path.isdir(os.path.join(OUTPUT_DIR, '.git')):

|

||||||

|

subprocess.run(["git", "config", "--global", "init.defaultBranch", "main"], check=True)

|

||||||

|

subprocess.run(["git", "-C", OUTPUT_DIR, "init"], check=True)

|

||||||

|

subprocess.run(["git", "-C", OUTPUT_DIR, "remote", "add", "origin", "default"], check=True)

|

||||||

|

|

||||||

|

def commit_changes():

|

||||||

|

ensure_git_repo()

|

||||||

|

subprocess.run(["git", "-C", OUTPUT_DIR, "config", "user.email", "securityonion@local.invalid"], check=True)

|

||||||

|

subprocess.run(["git", "-C", OUTPUT_DIR, "config", "user.name", "securityonion"], check=True)

|

||||||

|

subprocess.run(["git", "-C", OUTPUT_DIR, "add", "."], check=True)

|

||||||

|

status_result = subprocess.run(["git", "-C", OUTPUT_DIR, "status"], capture_output=True, text=True)

|

||||||

|

print(status_result.stdout)

|

||||||

|

commit_result = subprocess.run(["git", "-C", OUTPUT_DIR, "commit", "-m", "Update detections and overrides"], check=False, capture_output=True)

|

||||||

|

if commit_result.returncode == 1:

|

||||||

|

print("No changes to commit.")

|

||||||

|

elif commit_result.returncode == 0:

|

||||||

|

print("Changes committed successfully.")

|

||||||

|

else:

|

||||||

|

commit_result.check_returncode()

|

||||||

|

|

||||||

|

def main():

|

||||||

|

try:

|

||||||

|

timestamp = datetime.now().strftime("%Y-%m-%d %H:%M:%S")

|

||||||

|

print(f"Backing up Custom Detections and all Overrides to {OUTPUT_DIR} - {timestamp}\n")

|

||||||

|

|

||||||

|

os.makedirs(OUTPUT_DIR, exist_ok=True)

|

||||||

|

|

||||||

|

auth_credentials = get_auth_credentials(AUTH_FILE)

|

||||||

|

username, password = auth_credentials.split(':', 1)

|

||||||

|

auth = HTTPBasicAuth(username, password)

|

||||||

|

|

||||||

|

# Query and save custom detections

|

||||||

|

detections = query_elasticsearch(QUERY_DETECTIONS, auth)["hits"]["hits"]

|

||||||

|

for hit in detections:

|

||||||

|

save_content(hit, OUTPUT_DIR, hit["_source"]["so_detection"]["language"], "yaml" if hit["_source"]["so_detection"]["language"] == "sigma" else "txt")

|

||||||

|

|

||||||

|

# Query and save overrides

|

||||||

|

overrides = query_elasticsearch(QUERY_OVERRIDES, auth)["hits"]["hits"]

|

||||||

|

for hit in overrides:

|

||||||

|

save_overrides(hit)

|

||||||

|

|

||||||

|

commit_changes()

|

||||||

|

|

||||||

|

timestamp = datetime.now().strftime("%Y-%m-%d %H:%M:%S")

|

||||||

|

print(f"Backup Completed - {timestamp}")

|

||||||

|

except Exception as e:

|

||||||

|

print(f"An error occurred: {e}")

|

||||||

|

|

||||||

|

if __name__ == "__main__":

|

||||||

|

main()

|

||||||
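One behavior of the script above is worth noting: `get_auth_credentials` returns `None` implicitly when no line starts with `user =`, so `main()` would then fail on `auth_credentials.split(':', 1)` and the broad `except` would print only a generic message. A minimal sketch of a stricter parser, assuming the same `user = "name:password"` line format used in the accompanying test; `parse_curl_user` is a hypothetical name, not a function in the script:

```python
def parse_curl_user(lines):
    """Return (username, password) from curl-config style lines.

    Hypothetical helper: assumes a line of the form  user = "name:password".
    """
    for line in lines:
        if line.startswith('user ='):
            # Same extraction as the script: text after the first '=', quotes removed
            value = line.split('=', 1)[1].strip().replace('"', '')
            if ':' not in value:
                raise ValueError("user entry has no ':' separator")
            # Split on the first ':' only, so the password may contain ':'
            username, password = value.split(':', 1)
            return username, password
    raise ValueError("no 'user =' line found")

if __name__ == "__main__":
    print(parse_curl_user(['user = "so_elastic:example:pass=word"\n']))
```

Raising early gives a specific error message instead of an `AttributeError` on `None`.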

159

salt/soc/files/soc/so-detections-backup_test.py

Normal file

@@ -0,0 +1,159 @@

|

|||||||

|

# Copyright 2020-2023 Security Onion Solutions LLC and/or licensed to Security Onion Solutions LLC under one

|

||||||

|

# or more contributor license agreements. Licensed under the Elastic License 2.0 as shown at

|

||||||

|

# https://securityonion.net/license; you may not use this file except in compliance with the

|

||||||

|

# Elastic License 2.0.

|

||||||

|

|

||||||

|

import unittest

|

||||||

|

from unittest.mock import patch, MagicMock, mock_open, call

|

||||||

|

import requests

|

||||||

|

import os

|

||||||

|

import subprocess

|

||||||

|

import json

|

||||||

|

from datetime import datetime

|

||||||

|

import importlib

|

||||||

|

|

||||||

|

ds = importlib.import_module('so-detections-backup')

|

||||||

|

|

||||||

|

class TestBackupScript(unittest.TestCase):

|

||||||

|

|

||||||

|

def setUp(self):

|

||||||

|

self.output_dir = '/nsm/backup/detections/repo'

|

||||||

|

self.auth_file_path = '/nsm/backup/detections/repo'

|

||||||

|

self.mock_auth_data = 'user = "so_elastic:@Tu_dv_[7SvK7[-JZN39BBlSa;WAyf8rCY+3w~Sntp=7oR9*~34?Csi)a@v?)K*vK4vQAywS"'

|

||||||

|

self.auth_credentials = 'so_elastic:@Tu_dv_[7SvK7[-JZN39BBlSa;WAyf8rCY+3w~Sntp=7oR9*~34?Csi)a@v?)K*vK4vQAywS'

|

||||||

|

self.auth = requests.auth.HTTPBasicAuth('so_elastic', '@Tu_dv_[7SvK7[-JZN39BBlSa;WAyf8rCY+3w~Sntp=7oR9*~34?Csi)a@v?)K*vK4vQAywS')

|

||||||

|

self.mock_detection_hit = {

|

||||||

|

"_source": {

|

||||||

|

"so_detection": {

|

||||||

|

"publicId": "test_id",

|

||||||

|

"content": "test_content",

|

||||||

|

"language": "suricata"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

self.mock_override_hit = {

|

||||||

|

"_source": {

|

||||||

|

"so_detection": {

|

||||||

|

"publicId": "test_id",

|

||||||

|

"overrides": [{"key": "value"}],

|

||||||

|

"language": "sigma"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

def assert_file_written(self, mock_file, expected_path, expected_content):

|

||||||

|

mock_file.assert_called_once_with(expected_path, 'w')

|

||||||

|

mock_file().write.assert_called_once_with(expected_content)

|

||||||

|

|

||||||

|

@patch('builtins.open', new_callable=mock_open, read_data='user = "so_elastic:@Tu_dv_[7SvK7[-JZN39BBlSa;WAyf8rCY+3w~Sntp=7oR9*~34?Csi)a@v?)K*vK4vQAywS"')

|

||||||

|

def test_get_auth_credentials(self, mock_file):

|

||||||

|

credentials = ds.get_auth_credentials(self.auth_file_path)

|

||||||

|

self.assertEqual(credentials, self.auth_credentials)

|

||||||

|

mock_file.assert_called_once_with(self.auth_file_path, 'r')

|

||||||

|

|

||||||

|

@patch('requests.get')

|

||||||

|

def test_query_elasticsearch(self, mock_get):

|

||||||

|

mock_response = MagicMock()

|

||||||

|

mock_response.json.return_value = {'hits': {'hits': []}}

|

||||||

|

mock_response.raise_for_status = MagicMock()

|

||||||

|

mock_get.return_value = mock_response

|

||||||

|

|

||||||

|

response = ds.query_elasticsearch(ds.QUERY_DETECTIONS, self.auth)

|

||||||

|

|

||||||

|

self.assertEqual(response, {'hits': {'hits': []}})

|

||||||

|

mock_get.assert_called_once_with(

|

||||||

|

ds.ES_URL,

|

||||||

|

headers={"Content-Type": "application/json"},

|

||||||

|

data=ds.QUERY_DETECTIONS,

|

||||||

|

auth=self.auth,

|

||||||

|

verify=False

|

||||||

|

)

|

||||||

|

|

||||||

|

@patch('os.makedirs')

|

||||||

|

@patch('builtins.open', new_callable=mock_open)

|

||||||

|

def test_save_content(self, mock_file, mock_makedirs):

|

||||||

|

file_path = ds.save_content(self.mock_detection_hit, self.output_dir, 'subfolder', 'txt')

|

||||||

|

expected_path = f'{self.output_dir}/subfolder/test_id.txt'

|

||||||

|

self.assertEqual(file_path, expected_path)

|

||||||

|

mock_makedirs.assert_called_once_with(f'{self.output_dir}/subfolder', exist_ok=True)

|

||||||

|

self.assert_file_written(mock_file, expected_path, 'test_content')

|

||||||

|

|

||||||

|

@patch('os.makedirs')

|

||||||

|

@patch('builtins.open', new_callable=mock_open)

|

||||||

|

def test_save_overrides(self, mock_file, mock_makedirs):

|

||||||

|

file_path = ds.save_overrides(self.mock_override_hit)

|

||||||

|

expected_path = f'{self.output_dir}/sigma/overrides/test_id.yaml'

|

||||||

|

self.assertEqual(file_path, expected_path)

|

||||||

|

mock_makedirs.assert_called_once_with(f'{self.output_dir}/sigma/overrides', exist_ok=True)

|

||||||

|

self.assert_file_written(mock_file, expected_path, json.dumps({"key": "value"}))

|

||||||

|

|

||||||

|

@patch('subprocess.run')

|

||||||

|

def test_ensure_git_repo(self, mock_run):

|

||||||

|

mock_run.return_value = MagicMock(returncode=0)

|

||||||

|

|

||||||

|

ds.ensure_git_repo()

|

||||||

|

|

||||||

|

mock_run.assert_has_calls([

|

||||||

|

call(["git", "config", "--global", "init.defaultBranch", "main"], check=True),

|

||||||

|

call(["git", "-C", self.output_dir, "init"], check=True),

|

||||||

|

call(["git", "-C", self.output_dir, "remote", "add", "origin", "default"], check=True)

|

||||||

|

])

|

||||||

|

|

||||||

|

@patch('subprocess.run')

|

||||||

|

def test_commit_changes(self, mock_run):

|

||||||

|

mock_status_result = MagicMock()

|

||||||

|

mock_status_result.stdout = "On branch main\nnothing to commit, working tree clean"

|

||||||

|

mock_commit_result = MagicMock(returncode=1)

|

||||||

|

# Ensure sufficient number of MagicMock instances for each subprocess.run call

|

||||||

|

mock_run.side_effect = [mock_status_result, mock_commit_result, MagicMock(returncode=0), MagicMock(returncode=0), MagicMock(returncode=0), MagicMock(returncode=0), MagicMock(returncode=0), MagicMock(returncode=0)]

|

||||||

|

|

||||||

|

print("Running test_commit_changes...")

|

||||||

|

ds.commit_changes()

|

||||||

|

print("Finished test_commit_changes.")

|

||||||

|

|

||||||

|

mock_run.assert_has_calls([

|

||||||

|

call(["git", "-C", self.output_dir, "config", "user.email", "securityonion@local.invalid"], check=True),

|

||||||

|

call(["git", "-C", self.output_dir, "config", "user.name", "securityonion"], check=True),

|

||||||

|

call(["git", "-C", self.output_dir, "add", "."], check=True),

|

||||||

|

call(["git", "-C", self.output_dir, "status"], capture_output=True, text=True),

|

||||||

|

call(["git", "-C", self.output_dir, "commit", "-m", "Update detections and overrides"], check=False, capture_output=True)

|

||||||

|

])

|

||||||

|

|

||||||

|

@patch('builtins.print')

|

||||||

|

@patch('so-detections-backup.commit_changes')

|

||||||

|

@patch('so-detections-backup.save_overrides')

|

||||||

|

@patch('so-detections-backup.save_content')

|

||||||

|

@patch('so-detections-backup.query_elasticsearch')

|

||||||

|

@patch('so-detections-backup.get_auth_credentials')

|

||||||

|

@patch('os.makedirs')

|

||||||

|

def test_main(self, mock_makedirs, mock_get_auth, mock_query, mock_save_content, mock_save_overrides, mock_commit, mock_print):

|

||||||

|

mock_get_auth.return_value = self.auth_credentials

|

||||||

|

mock_query.side_effect = [

|

||||||

|

{'hits': {'hits': [{"_source": {"so_detection": {"publicId": "1", "content": "content1", "language": "sigma"}}}]}},

|

||||||

|

{'hits': {'hits': [{"_source": {"so_detection": {"publicId": "2", "overrides": [{"key": "value"}], "language": "suricata"}}}]}}

|

||||||

|

]

|

||||||

|

|

||||||

|

with patch('datetime.datetime') as mock_datetime:

|

||||||

|

mock_datetime.now.return_value.strftime.return_value = "2024-05-23 20:49:44"

|

||||||

|

ds.main()

|

||||||

|

|

||||||

|

mock_makedirs.assert_called_once_with(self.output_dir, exist_ok=True)

|

||||||

|

mock_get_auth.assert_called_once_with(ds.AUTH_FILE)

|

||||||

|

mock_query.assert_has_calls([

|

||||||

|

call(ds.QUERY_DETECTIONS, self.auth),

|

||||||

|

call(ds.QUERY_OVERRIDES, self.auth)

|

||||||

|

])

|

||||||

|

mock_save_content.assert_called_once_with(

|

||||||

|

{"_source": {"so_detection": {"publicId": "1", "content": "content1", "language": "sigma"}}},

|

||||||

|

self.output_dir,

|

||||||

|

"sigma",

|

||||||

|

"yaml"

|

||||||

|

)

|

||||||

|

mock_save_overrides.assert_called_once_with(

|

||||||

|

{"_source": {"so_detection": {"publicId": "2", "overrides": [{"key": "value"}], "language": "suricata"}}}

|

||||||

|

)

|

||||||

|

mock_commit.assert_called_once()

|

||||||

|

mock_print.assert_called()

|

||||||

|

|

||||||

|

if __name__ == '__main__':

|

||||||

|

unittest.main(verbosity=2)

|

||||||
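A note on `test_commit_changes` above: its `side_effect` list places `mock_status_result` and `mock_commit_result` first, but `commit_changes()` issues `git config`, `git config`, and `git add` (plus the `ensure_git_repo()` commands when no `.git` directory exists) before `git status` and `git commit`. A list assigned to `side_effect` is consumed in call order, so the commit call appears to receive a plain `MagicMock(returncode=0)` rather than the `returncode=1` mock. A minimal sketch of that ordering rule, using a hypothetical two-call stand-in rather than the real function:

```python
import subprocess
from unittest.mock import MagicMock, patch

def run_status_then_commit():
    # Hypothetical stand-in for the last two subprocess.run calls of commit_changes()
    status = subprocess.run(["git", "status"], capture_output=True, text=True)
    commit = subprocess.run(["git", "commit", "-m", "msg"], check=False, capture_output=True)
    return status.stdout, commit.returncode

def demo():
    status_result = MagicMock(stdout="nothing to commit")
    commit_result = MagicMock(returncode=1)
    with patch("subprocess.run") as mock_run:
        # Items are returned one per call, in the order the calls happen
        mock_run.side_effect = [status_result, commit_result]
        return run_status_then_commit()

if __name__ == "__main__":
    print(demo())
```

Placing the status and commit mocks at the positions where those calls actually occur would let a test exercise the "No changes to commit." branch.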

@@ -85,7 +85,7 @@ soc:

|

|||||||

elastalertengine:

|

elastalertengine:

|

||||||

additionalAlerters:

|

additionalAlerters:

|

||||||

title: Additional Alerters

|

title: Additional Alerters

|

||||||

description: Specify additional alerters to enable for all Sigma rules, one alerter name per line. Alerters refers to ElastAlert 2 alerters, as documented at https://elastalert2.readthedocs.io. Note that the configuration parameters for these alerters must be provided in the ElastAlert configuration section. Filter for 'Alerter Parameters' to find this related setting.

|

description: Specify additional alerters to enable for all Sigma rules, one alerter name per line. Alerters refers to ElastAlert 2 alerters, as documented at https://elastalert2.readthedocs.io. Note that the configuration parameters for these alerters must be provided in the ElastAlert configuration section. Filter for 'Alerter' to find this related setting. A full update of the ElastAlert rule engine, via the Detections screen, is required in order to apply these changes. Requires a valid Security Onion license key.

|

||||||

global: True

|

global: True

|

||||||

helpLink: sigma.html

|

helpLink: sigma.html

|

||||||

forcedType: "[]string"

|

forcedType: "[]string"

|

||||||

@@ -113,16 +113,20 @@ soc:

|

|||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

helpLink: sigma.html

|

helpLink: sigma.html

|

||||||

|

integrityCheckFrequencySeconds:

|

||||||

|

description: 'How often the ElastAlert integrity checker runs (in seconds). This verifies the integrity of deployed rules.'

|

||||||

|

global: True

|

||||||

|

advanced: True

|

||||||

rulesRepos:

|

rulesRepos:

|

||||||

default: &eerulesRepos

|

default: &eerulesRepos

|

||||||

description: "Custom Git repos to pull Sigma rules from. 'license' field is required, 'folder' is optional. 'community' disables some management options for the imported rules - they can't be deleted or edited, just tuned, duplicated and Enabled | Disabled."

|

description: "Custom Git repositories to pull Sigma rules from. 'license' field is required, 'folder' is optional. 'community' disables some management options for the imported rules - they can't be deleted or edited, just tuned, duplicated and Enabled | Disabled. The new settings will be applied within 15 minutes. At that point, you will need to wait for the scheduled rule update to take place (by default, every 24 hours), or you can force the update by navigating to Detections --> Options dropdown menu --> Elastalert --> Full Update."

|

||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

forcedType: "[]{}"

|

forcedType: "[]{}"

|

||||||

helpLink: sigma.html

|

helpLink: sigma.html

|

||||||

airgap: *eerulesRepos

|

airgap: *eerulesRepos

|

||||||

sigmaRulePackages:

|

sigmaRulePackages:

|

||||||

description: 'Defines the Sigma Community Ruleset you want to run. One of these (core | core+ | core++ | all ) as well as an optional Add-on (emerging_threats_addon). Once you have changed the ruleset here, you will need to wait for the scheduled rule update to take place (by default, every 24 hours), or you can force the update by navigating to Detections --> Options dropdown menu --> Elastalert --> Full Update. WARNING! Changing the ruleset will remove all existing non-overlapping Sigma rules of the previous ruleset and their associated overrides. This removal cannot be undone.'

|

description: 'Defines the Sigma Community Ruleset you want to run. One of these (core | core+ | core++ | all ) as well as an optional Add-on (emerging_threats_addon). Once you have changed the ruleset here, the new settings will be applied within 15 minutes. At that point, you will need to wait for the scheduled rule update to take place (by default, every 24 hours), or you can force the update by navigating to Detections --> Options dropdown menu --> Elastalert --> Full Update. WARNING! Changing the ruleset will remove all existing non-overlapping Sigma rules of the previous ruleset and their associated overrides. This removal cannot be undone.'

|

||||||

global: True

|

global: True

|

||||||

advanced: False

|

advanced: False

|

||||||

helpLink: sigma.html

|

helpLink: sigma.html

|

||||||

@@ -196,7 +200,7 @@ soc:

|

|||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

helpLink: yara.html

|

helpLink: yara.html

|

||||||

autoEnabledYARARules:

|

autoEnabledYaraRules:

|

||||||

description: 'YARA rules to automatically enable on initial import. Format is $Ruleset - for example, for the default shipped ruleset: securityonion-yara'

|

description: 'YARA rules to automatically enable on initial import. Format is $Ruleset - for example, for the default shipped ruleset: securityonion-yara'

|

||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

@@ -211,9 +215,13 @@ soc:

|

|||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

helpLink: yara.html

|

helpLink: yara.html

|

||||||

|

integrityCheckFrequencySeconds:

|

||||||

|

description: 'How often the Strelka integrity checker runs (in seconds). This verifies the integrity of deployed rules.'

|

||||||

|

global: True

|

||||||

|

advanced: True

|

||||||

rulesRepos:

|

rulesRepos:

|

||||||

default: &serulesRepos

|

default: &serulesRepos

|

||||||

description: "Custom Git repos to pull YARA rules from. 'license' field is required, 'folder' is optional. 'community' disables some management options for the imported rules - they can't be deleted or edited, just tuned, duplicated and Enabled | Disabled."

|

description: "Custom Git repositories to pull YARA rules from. 'license' field is required, 'folder' is optional. 'community' disables some management options for the imported rules - they can't be deleted or edited, just tuned, duplicated and Enabled | Disabled. The new settings will be applied within 15 minutes. At that point, you will need to wait for the scheduled rule update to take place (by default, every 24 hours), or you can force the update by navigating to Detections --> Options dropdown menu --> Strelka --> Full Update."

|

||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

forcedType: "[]{}"

|

forcedType: "[]{}"

|

||||||

@@ -235,6 +243,10 @@ soc:

|

|||||||

global: True

|

global: True

|

||||||

advanced: True

|

advanced: True

|

||||||

helpLink: suricata.html

|

helpLink: suricata.html

|